AI Education — March 31, 2026 — Edu AI Team

Backpropagation is the method a neural network uses to learn from its mistakes. In plain English, it works like this: the model makes a prediction, checks how wrong that prediction was, then sends that error backward through the network so it can adjust itself and do a little better next time. If you have ever marked a practice quiz, seen what you got wrong, and changed how you studied, you already understand the basic idea behind backpropagation.

This idea sits at the heart of modern AI systems, especially neural networks, which are computer systems loosely inspired by the brain. Backpropagation may sound intimidating, but the core logic is simple: guess, measure the mistake, adjust, repeat. Once you see it step by step, it becomes much easier to understand why it matters in machine learning and deep learning.

Before we explain backpropagation, we need one small building block: the neural network itself.

A neural network is a computer model that tries to find patterns in data. For example, it might learn to tell whether an email is spam, whether a photo contains a cat, or whether a customer might cancel a subscription.

You can think of a neural network as a chain of tiny decision-makers. Each one takes in some information, does a small calculation, and passes the result forward. These decision-makers are often called neurons, but they are really just math units.

Each connection between these units has a number attached to it called a weight. A weight tells the model how important one piece of information is. If the weight is large, that input matters more. If it is small, that input matters less.

At the start, the network does not know the right weights. It begins with random guesses. Learning means finding better weights over time.
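To make the idea of units and weights concrete, here is a minimal sketch of a single unit that starts with random weights. The function name `neuron`, the input values, and the range of the random weights are assumptions chosen for illustration, not something the article specifies.

```python
import random

def neuron(inputs, weights):
    """One 'decision-maker': multiply each input by its weight and add them up."""
    return sum(x * w for x, w in zip(inputs, weights))

random.seed(0)  # fixed seed so the random starting weights are repeatable
inputs = [1.0, 2.0, 3.0]  # made-up input values
weights = [random.uniform(-1.0, 1.0) for _ in inputs]  # random initial guesses

print("starting weights:", weights)
print("output:", neuron(inputs, weights))
```

Because the weights are random, the output at this point is meaningless. Learning is the process of replacing those guesses with better numbers.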

Imagine you are teaching a child to throw a ball into a basket. The child throws, sees how far the ball missed, and adjusts the next throw.

A neural network learns in a similar way. It makes a prediction, compares it with the correct answer, and then changes its internal settings. The challenge is this: a neural network can have hundreds, thousands, or even millions of weights. So how does it know which ones to change?

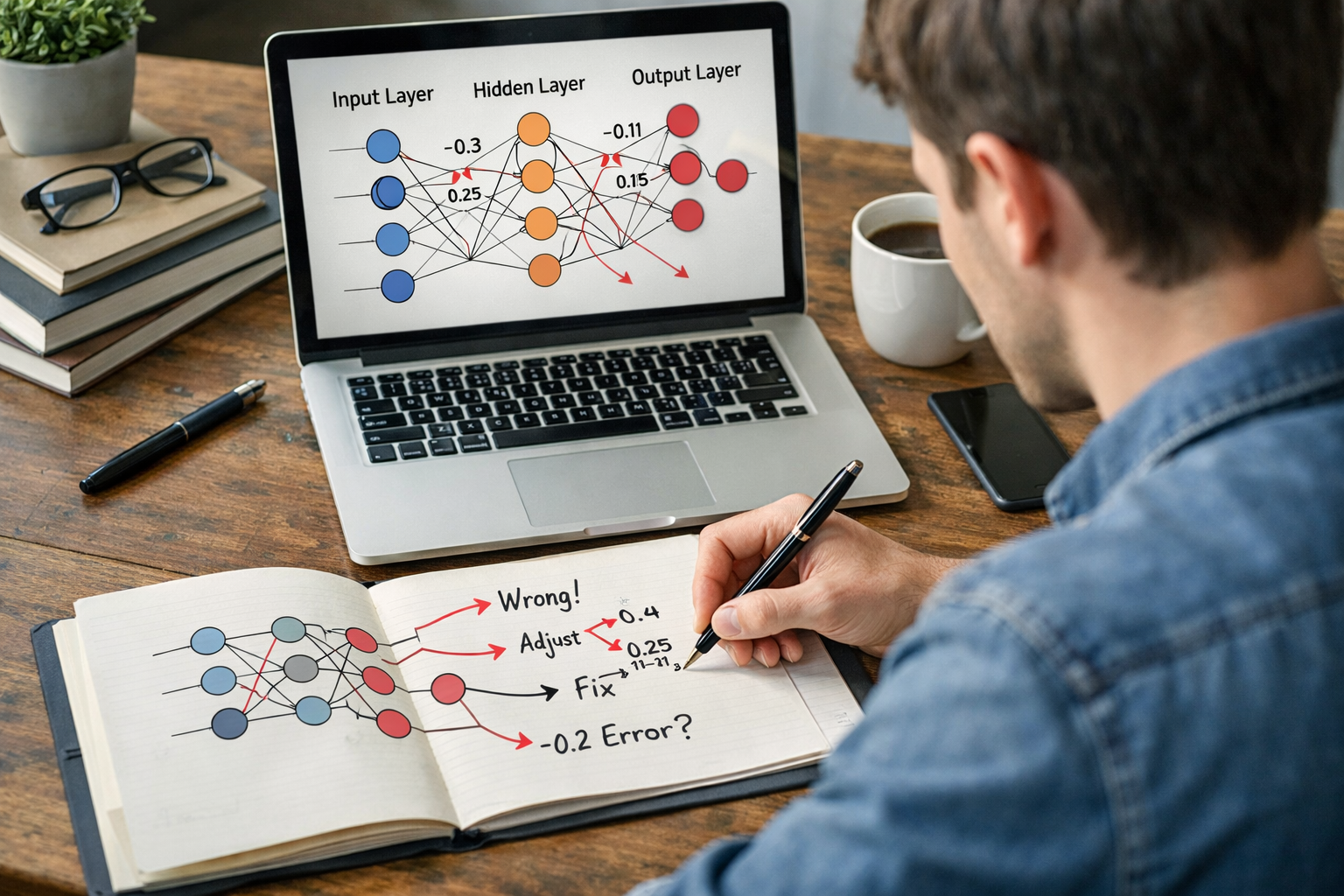

That is what backpropagation helps solve. It works backward through the network and estimates how much each weight contributed to the final error. Then the model can adjust each weight in proportion to its share of the blame.

Let us imagine a very small neural network that tries to predict house prices.

Suppose you give it information about one house: its size, its number of bedrooms, and its age.

The correct price is $300,000.

The network makes a prediction of $250,000.

That means it is off by $50,000.

Now the network needs to improve. It has many weights inside it controlling how much size, bedrooms, and age affect the result. Backpropagation helps answer which of those weights pushed the prediction too low, and by how much.

Backpropagation sends the error backward through the model and calculates how much each connection should change. Then the weights are updated slightly.

After this adjustment, maybe the next prediction is $262,000. Still wrong, but less wrong. After many rounds of this process on many examples, the network may get much closer to the real prices.
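The house example can be sketched with a deliberately tiny model that has just one weight. Everything except the $300,000 target and the $250,000 first guess is an assumption: the house size, the starting weight, and the step size were picked so that the numbers land near the ones in the text. A real network would have many weights and would not be this tidy.

```python
# Hypothetical one-weight model: price = weight * size.
# Prices are in thousands of dollars; size is in thousands of square feet.
size = 2.0            # assumed house size (not given in the article)
target = 300.0        # correct price: $300,000
weight = 125.0        # assumed starting weight, chosen so the first guess is $250,000
learning_rate = 0.03  # assumed step size

prediction = weight * size                  # first guess
error = prediction - target                 # negative: the guess is too low
gradient = 2 * error * size                 # slope of the squared error for this weight
weight = weight - learning_rate * gradient  # small step that lowers the error

new_prediction = weight * size
print("before:", prediction, "after:", new_prediction)
```

With these assumed numbers the second guess comes out at roughly $262,000: still wrong, but closer to the target than the first guess.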

The first step is a prediction, and it is called the forward pass. Data goes into the network, moves through the layers, and produces an answer.
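As a rough illustration of a forward pass, the sketch below pushes two inputs through one hidden layer and one output unit. The layer sizes, the weight values, and the `relu` activation are all assumptions for the example; the article does not say which the network uses.

```python
def relu(value):
    """A common activation: keep positive values, clip negatives to zero."""
    return max(0.0, value)

def forward(inputs, hidden_weights, output_weights):
    """Run data through one hidden layer and then one output unit."""
    hidden = [relu(sum(x * w for x, w in zip(inputs, row))) for row in hidden_weights]
    output = sum(h * w for h, w in zip(hidden, output_weights))
    return hidden, output

inputs = [1.0, 2.0]                          # made-up input values
hidden_weights = [[0.5, -0.5], [0.25, 0.5]]  # one row of weights per hidden unit
output_weights = [1.0, 2.0]

hidden, output = forward(inputs, hidden_weights, output_weights)
print("hidden layer:", hidden)
print("answer:", output)
```

Nothing is learned during this step. The network only computes an answer from whatever weights it currently has.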

For example, if the task is image recognition, the network might look at a photo and say, “I think this is a dog with 60% confidence.”

The model compares its prediction to the correct answer. The difference is called the error or loss.

If the image was actually a cat, then the model made a mistake. A loss function turns that mistake into a number. Bigger number = worse prediction. Smaller number = better prediction.
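Here is a minimal sketch of a loss function. It uses squared error, which is one common choice and an assumption here, since the article does not name a specific loss. The numbers reuse the house-price example, in thousands of dollars.

```python
def squared_error(prediction, target):
    """Turn a mistake into a single number: the squared difference."""
    return (prediction - target) ** 2

far_off = squared_error(250.0, 300.0)  # off by 50
closer = squared_error(262.0, 300.0)   # off by 38
print(far_off, closer)  # the first number is larger because the guess was worse
```

Squaring makes the result positive whether the guess was too high or too low, and it penalizes large misses more heavily than small ones.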

Next comes the actual backpropagation step. The network traces the mistake from the output layer back through the earlier layers.

In simple terms, it asks: “Which internal settings caused this wrong answer, and by how much?”

It does not just say, “Something was wrong.” It gives a more detailed message: “This weight should increase a little. That weight should decrease a little.”
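The "increase this, decrease that" message can be sketched on the smallest possible network: two weights in a chain, with no activation function. The network shape, the squared-error loss, and all the values are assumptions made for illustration. The backward function applies the chain rule one step at a time, starting from the output.

```python
def forward(x, w1, w2):
    """Two units in a chain: input -> hidden -> output."""
    hidden = w1 * x
    output = w2 * hidden
    return hidden, output

def backward(x, w1, w2, target):
    """Trace the squared error from the output back to each weight."""
    hidden, output = forward(x, w1, w2)
    d_output = 2 * (output - target)  # how the loss changes as the output changes
    d_w2 = d_output * hidden          # share of the blame for the last weight
    d_hidden = d_output * w2          # error passed back to the hidden unit
    d_w1 = d_hidden * x               # share of the blame for the first weight
    return d_w1, d_w2

x, w1, w2, target = 2.0, 0.5, -3.0, 1.0  # made-up values
d_w1, d_w2 = backward(x, w1, w2, target)

for name, gradient in (("w1", d_w1), ("w2", d_w2)):
    direction = "decrease" if gradient > 0 else "increase"
    print(f"{name}: gradient {gradient}, so {direction} it a little")
```

A positive gradient means that raising the weight would raise the error, so the weight should go down. A negative gradient means the opposite.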

After backpropagation estimates the responsibility of each weight, the network updates them. This update is usually done with a method called gradient descent, which simply means taking small steps toward lower error.
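To show what "small steps toward lower error" looks like, the sketch below runs gradient descent on a made-up error curve with a single weight. The curve, the starting point, and the learning rate are all hypothetical. In a real network the slope would come from backpropagation rather than from a hand-written formula.

```python
def error(w):
    """Hypothetical error curve, lowest at w = 3."""
    return (w - 3.0) ** 2

def slope(w):
    """Slope of the error curve at w."""
    return 2 * (w - 3.0)

w = 0.0              # starting guess
learning_rate = 0.1  # assumed step size
history = [error(w)]

for _ in range(20):
    w = w - learning_rate * slope(w)  # step downhill
    history.append(error(w))

print("final weight:", w)
print("first and last error:", history[0], history[-1])
```

The learning rate controls the size of each step. Too small and progress is slow; too large and the weight can overshoot the low point.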

Then the cycle repeats: predict, measure the error, send it backward, update the weights.

This happens again and again, often thousands of times.
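Put together, the repeated cycle might look like the loop below. It is a sketch only: the model has a single weight, the three training examples are made up to follow the pattern "answer = 2 × input", and the learning rate and number of rounds are assumptions.

```python
examples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]  # made-up (input, correct answer) pairs
weight = 0.0          # starting guess
learning_rate = 0.05  # assumed step size

def average_loss(current_weight):
    """Average squared error over all examples."""
    return sum((current_weight * x - y) ** 2 for x, y in examples) / len(examples)

starting_loss = average_loss(weight)

for round_number in range(100):
    for x, y in examples:
        prediction = weight * x             # 1. predict
        error = prediction - y              # 2. measure the mistake
        gradient = 2 * error * x            # 3. trace it back to the weight
        weight -= learning_rate * gradient  # 4. adjust

final_loss = average_loss(weight)
print("learned weight:", weight)
print("loss before and after:", starting_loss, final_loss)
```

The learned weight settles close to 2, which is the pattern hidden in the examples. Real training follows the same rhythm with far more weights and far more data.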

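Imagine you bake cookies for the first time. You measure out your ingredients, including flour and butter, but the cookies come out too dry.

You now work backward: perhaps there was too much flour, or too little butter.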

Next time, you slightly reduce the flour and increase the butter. If the result improves, you are moving in the right direction.

That is very similar to backpropagation. The final result is bad, so you look backward through the process and adjust the ingredients that likely caused the problem.

Backpropagation matters because it gives neural networks an efficient way to improve. Without it, the model would be guessing blindly about how to change millions of weights.
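To get a feel for the cost of working without backpropagation, consider one naive alternative: nudge each weight in turn and re-measure the error. The sketch below does exactly that. Note that this is a finite-difference estimate, not backpropagation, and the model, the weight count, and the values are hypothetical.

```python
def loss(weights, inputs, target):
    """Squared error of a simple weighted-sum model."""
    prediction = sum(w * x for w, x in zip(weights, inputs))
    return (prediction - target) ** 2

def nudge_gradients(weights, inputs, target, step=1e-6):
    """Estimate each weight's effect by nudging it and re-measuring the loss."""
    base = loss(weights, inputs, target)
    evaluations = 1  # count how many times the loss had to be computed
    gradients = []
    for i in range(len(weights)):
        nudged = list(weights)
        nudged[i] += step
        gradients.append((loss(nudged, inputs, target) - base) / step)
        evaluations += 1
    return gradients, evaluations

weights = [0.1] * 1000  # made-up model with 1,000 weights
inputs = [1.0] * 1000
gradients, evaluations = nudge_gradients(weights, inputs, target=50.0)
print("loss evaluations needed:", evaluations)
```

The nudging approach needs one extra pass through the model for every weight, so the cost grows with the size of the network. Backpropagation gets the adjustment for every weight from roughly one forward pass and one backward pass.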

Its main strengths are:

This is one reason neural networks became so important in speech recognition, image analysis, translation tools, and generative AI systems.

Backpropagation is not the same thing as machine learning. Machine learning is the bigger field. Backpropagation is one learning method used inside neural networks, which are one type of machine learning model.

It is mainly associated with neural networks, especially deep learning. Deep learning simply means neural networks with many layers.

Backpropagation does not mean the model thinks or understands. It is adjusting numbers to reduce error. The system may become very good at a task, but that does not mean it understands in the human sense.

Training needs a lot of data because learning from mistakes works best when the model sees many examples. One or two examples are not enough to reliably discover useful patterns.

Even if you are a complete beginner, you have probably already used products that depend on neural networks trained with backpropagation. Examples include:

You do not need to memorize the equations to understand the big picture. What matters first is knowing that these systems improve by comparing predictions with correct answers and adjusting themselves over time.

You do not need to learn the math first. For most beginners, understanding the intuition comes first. The math can come later.

If you jump straight into symbols and formulas, backpropagation can feel overwhelming. But once you understand the plain-English version, the math becomes much more meaningful. You know what the formulas are trying to do: assign blame for an error and improve the model step by step.

If you are just starting your AI journey, it often helps to begin with beginner-friendly lessons that explain neural networks, weights, errors, and training in simple language before introducing code. If that sounds useful, you can browse our AI courses to find beginner-focused learning paths in machine learning and deep learning.

Backpropagation is how a neural network learns: it checks how wrong its answer was, sends that mistake backward through the network, and slightly adjusts its internal weights so it can make better predictions next time.

If you remember that sentence, you already understand the core idea better than many beginners think they do.

Backpropagation is one of those topics that appears again and again in AI education. If you want to understand how modern AI systems are trained, this concept is a key stepping stone.

You do not need to become a mathematician overnight. Start with the intuition. Then build gradually. A strong beginner foundation can make later topics like deep learning, computer vision, and natural language processing much easier to follow.

If this explanation made backpropagation feel less intimidating, the next step is to keep building from the basics. A guided beginner course can help you connect ideas like neural networks, gradient descent, training data, and real AI applications without drowning in jargon.

You can register free on Edu AI to start learning at your own pace, or view course pricing if you want to compare learning options before committing. The goal is simple: take complex AI ideas and turn them into clear, practical skills for beginners.