AI Education — April 1, 2026 — Edu AI Team

Q-learning is a simple way to teach a computer or robot how to make better decisions by trial and error. Instead of being told the correct answer in advance, it tries actions, sees what reward or penalty happens, and slowly learns which choice is best in each situation. In plain English, Q-learning is like learning a game by testing moves, remembering what worked, and repeating the actions that lead to better results.

If that sounds abstract, do not worry. You do not need coding experience or a math background to understand the big idea. This guide breaks Q-learning down from first principles, using everyday examples so you can see how it fits into the wider world of artificial intelligence.

Many AI systems learn from examples. For example, if you want a computer to spot cats in photos, you show it many pictures labeled “cat” or “not cat.” But some problems do not come with clear labeled answers. Sometimes an AI must learn by interacting with an environment and seeing what happens.

Imagine teaching a robot to find the exit in a maze. You do not hand it the perfect path. Instead, it moves left, right, up, or down. If it hits a wall, that is bad. If it reaches the exit, that is good. Over time, it learns which decisions lead to success. That kind of learning is called reinforcement learning.

Reinforcement learning means learning through rewards and penalties. Q-learning is one of the best-known beginner-friendly methods in this area.

Think about a child learning which route through a playground gets to the slide fastest.

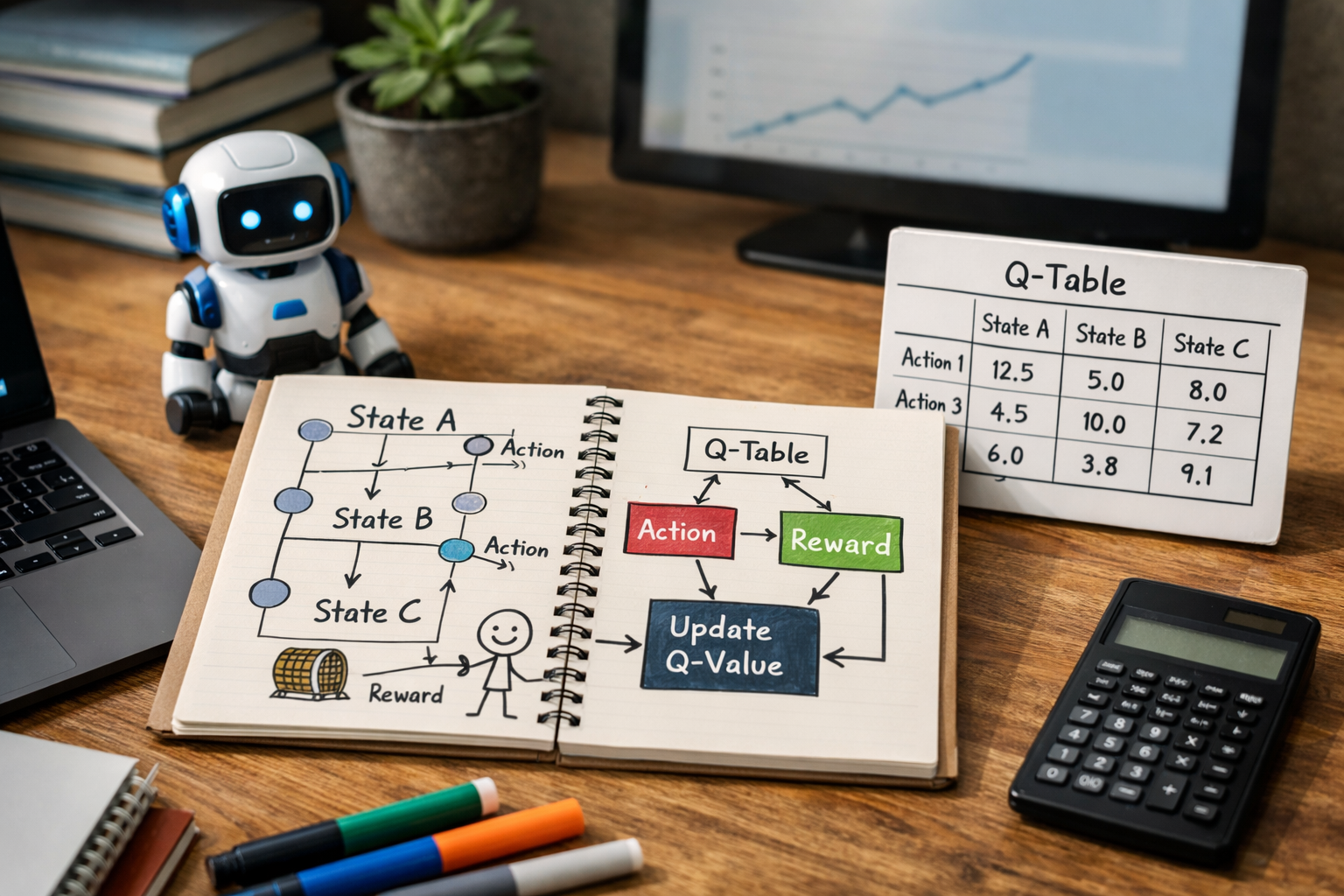

Q-learning works in a similar way. It keeps a running record of how useful each action seems to be in each situation.

That is what the letter Q stands for: quality. A Q-value is a number that estimates how good an action is in a given situation.

For example:

If going right usually gets you closer to the goal, then “go right” will eventually get a higher Q-value than “go left.”

You can understand most of Q-learning with just four ideas.

A state is the current situation. In a maze, a state could be the robot's location. In a game, it could be the current board position.

An action is a choice the learner can make. In a maze, actions might be move up, down, left, or right.

A reward is feedback. Good outcomes give positive reward, and bad outcomes may give zero or negative reward.

Example rewards in a maze:

This reward design encourages the learner to find the exit quickly instead of wandering around forever.

The Q-value is the score for taking a certain action in a certain state. The bigger the number, the better that action is expected to be.

Let us keep using the maze example. Here is the process in simple terms:

At first, the learner knows nothing. Its choices are mostly guesses. But after enough attempts, the Q-values become more useful. The learner then starts choosing better actions more often.

This is why Q-learning is often described as learning from experience.

Suppose an AI is in one room and can choose only two actions:

At the beginning, it may assign both actions a Q-value of 0 because it has no information.

After trying go left, it bumps into a wall and gets a reward of -5. That action now looks worse.

After trying go right, it gets closer to the exit and receives +2. That action now looks better.

After many repeats, the scores may start to look like this:

The AI does not “understand” the maze like a human. It simply has evidence that one choice tends to lead to better outcomes.

Q-learning matters because it shows a core idea in modern AI: a system can improve its behavior without being given every answer in advance. It can discover useful strategies from feedback.

This idea is important in areas like:

For beginners, Q-learning is also a great entry point into reinforcement learning because the logic is easier to see than in more advanced methods.

One of the most important ideas in Q-learning is the balance between exploration and exploitation.

Exploration means trying new actions to gather information. Exploitation means using the action that already seems best.

Imagine ordering food from a delivery app:

If you always exploit, you may miss an even better option. If you always explore, you may keep making poor choices. Q-learning works best when it does both: explore enough to learn, then exploit more as confidence grows.

Beginners often mix Q-learning up with other AI ideas, so let us clear that up.

In other words, Q-learning is useful when an agent must learn what to do by interacting with a setting step by step.

Q-learning is powerful for learning the basics, but it has limits.

If there are too many possible states and actions, storing every Q-value becomes difficult. A tiny maze is manageable. A self-driving car in the real world is much more complex.

If you reward the wrong behavior, the learner may find strange shortcuts. For example, if a robot gets points just for moving, it might spin in circles instead of reaching the goal.

Because it depends on repeated trial and error, Q-learning may need many runs before it performs well.

Still, these limits do not make it unimportant. In fact, learning Q-learning first makes advanced reinforcement learning much easier later.

If you are brand new to AI, these are the most common confusion points:

If you want a clearer path into these ideas, it helps to start with beginner-first lessons that explain AI and Python step by step. You can browse our AI courses to see beginner-friendly options in reinforcement learning, machine learning, and Python basics.

Q-learning teaches more than one algorithm. It helps you understand how AI can:

Those ideas show up again and again in AI, robotics, optimization, and even business decision systems. So even if you never build a maze-solving robot, the thinking behind Q-learning gives you a strong foundation.

It can also be a useful first step if you are thinking about moving into AI as a new learner or career switcher. Starting with simple, visual topics often feels less overwhelming than jumping straight into complex deep learning theory.

The easiest path is to learn in this order:

If you are comparing learning options, you can also view course pricing before choosing a path that fits your budget and goals.

Q-learning explained simply for beginners comes down to this: an AI tries actions, receives feedback, and gradually learns which choices lead to better outcomes. That simple loop is one of the clearest ways to understand reinforcement learning.

If you want to turn that understanding into practical skills, a structured beginner course can save you hours of confusion. You can register free on Edu AI to start exploring beginner-friendly lessons in AI, Python, and reinforcement learning at your own pace.