AI Education — April 2, 2026 — Edu AI Team

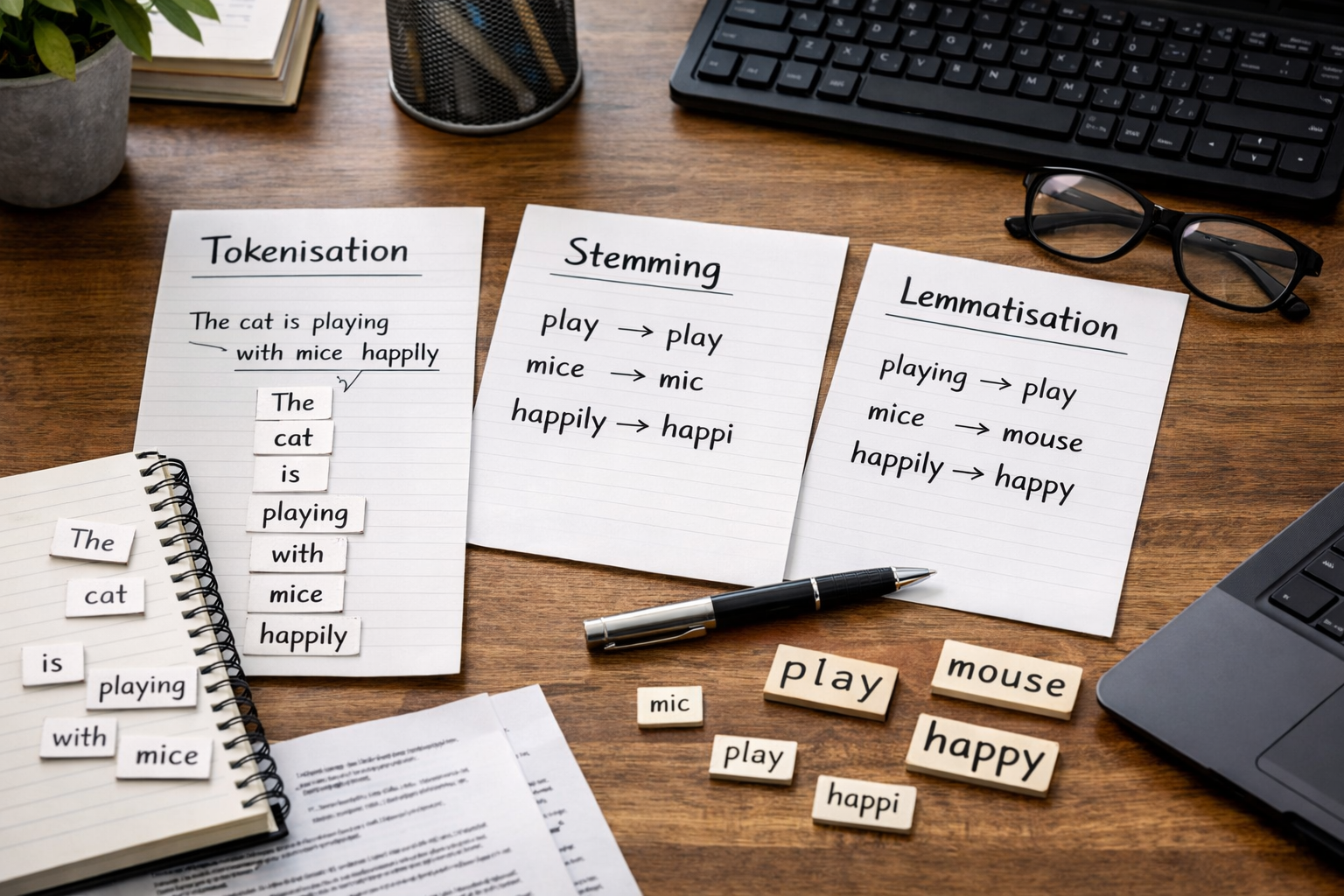

Tokenisation, stemming and lemmatisation are three basic steps used to help computers understand written language. Tokenisation breaks text into smaller pieces such as words or sentences. Stemming cuts words down to a simple root form, even if that root is not a real word. Lemmatisation turns words into their correct dictionary form, such as changing “running” to “run” or “better” to “good.” If you are new to artificial intelligence, these three ideas matter because they are often the first things a computer does before it can analyse text.

This article explains each term in plain English, shows simple examples, and helps you understand when each method is useful. You do not need any coding or AI background to follow along.

Humans read language naturally. If you see the words “play,” “playing,” and “played,” you instantly know they are related. A computer does not automatically understand that connection. To a machine, those may look like three separate items unless we prepare the text first.

This preparation step is called text preprocessing. It means cleaning and simplifying language so a computer can work with it more easily. Text preprocessing is used in many AI tasks, including:

Tokenisation, stemming, and lemmatisation are all part of this early preparation stage in natural language processing, often shortened to NLP. NLP is the area of AI that helps computers work with human language.

Tokenisation means splitting text into smaller units called tokens. A token is usually a word, but it can also be a sentence, part of a word, or even a punctuation mark depending on the task.

Take this sentence:

“I am learning AI today.”

Word tokenisation might split it into:

Sentence tokenisation works at a larger level. If you have a paragraph with 3 sentences, sentence tokenisation separates it into those 3 sentences.

A computer cannot analyse a full paragraph very easily if it sees it as one long string of characters. Tokenisation creates structure. It gives the machine clear pieces to count, compare, and learn from.

For example, if you want to know how many times the word “AI” appears in 1,000 product reviews, tokenisation is one of the first steps. Without it, the text is much harder to process.

As a beginner, the easiest way to think about tokenisation is this: it is the act of chopping text into manageable pieces.

Stemming means reducing words to a shorter base form called a stem. The goal is to treat related words as if they are the same.

Look at these words:

A stemming tool may reduce all of them to something like:

That sounds useful, but stemming is not always tidy. Sometimes it produces forms that are not proper words. For example:

Notice that “studi” and “happi” are not real dictionary words. That is normal in stemming.

Stemming is fast and simple. If your goal is just to group similar words together, it can work well enough. For example, in a search system, a user searching for “learn” may also want results containing “learning” or “learned.” Stemming can help match those words.

Because stemming cuts words mechanically, it can be rough. It does not always understand meaning or grammar. Two different words may be cut in strange ways, and sometimes important detail is lost.

So, the beginner-friendly summary is: stemming is a quick shortcut that trims words down, but it is not always accurate.

Lemmatisation also reduces words to a base form, but it does so more intelligently than stemming. The result is called a lemma, which is usually the correct dictionary version of a word.

Consider these words:

Lemmatisation can convert all of them to:

Another example:

This is something stemming usually cannot do well, because lemmatisation pays attention to grammar and meaning.

Lemmatisation gives cleaner and more meaningful results. If you are analysing customer reviews, news articles, or chatbot conversations, using proper base words often improves quality.

The trade-off is that lemmatisation is usually slower and slightly more complex than stemming because the system has to understand more about the language.

In simple terms: lemmatisation is slower but smarter.

These three steps are related, but they do different jobs.

If the input word is “studying”:

If the input is the sentence “The children are playing outside”:

This example shows the key difference. Stemming focuses on speed and cutting. Lemmatisation focuses on correct language form.

If you are just starting in AI or machine learning, here is an easy rule of thumb:

In real projects, tokenisation is almost always used first. After that, a developer may choose stemming or lemmatisation depending on the goal.

Imagine an online shop has 10,000 customer reviews. The company wants to know what people say most often.

Here is how these methods help:

If 500 reviews contain “delivered,” 300 contain “delivery,” and 200 contain “delivering,” a preprocessing method can help the system see that these words are related. That makes patterns easier to spot.

This is one reason NLP is so valuable in business, customer support, and search tools. If you want to understand these ideas in a practical, step-by-step way, you can browse our AI courses for beginner-friendly learning paths in NLP, Python, and machine learning.

Many new learners mix these terms up. Here are three common mistakes:

For example, if a task depends on tense or exact wording, aggressively reducing words may remove useful meaning. Good NLP is about choosing the right level of simplification.

If you want to move into AI, data science, or machine learning, understanding text preprocessing gives you a strong foundation. These are not advanced ideas reserved for experts. They are beginner topics because they explain how machines start turning messy human language into something learnable.

Even if you have never written code before, learning concepts like tokenisation, stemming, and lemmatisation helps you understand what happens behind chatbots, search bars, recommendation systems, and text analysis tools. That knowledge can make later topics feel much less intimidating.

At Edu AI, we focus on explaining technical topics in simple language for complete beginners. If you are exploring your first step into AI, it may help to view course pricing and see which learning path fits your goals and budget.

Tokenisation, stemming, and lemmatisation are three of the first building blocks in natural language processing. Remember the simplest version: tokenisation splits text, stemming trims words, and lemmatisation finds the correct base word. Once you understand that, you already know an important part of how computers work with language.

If you want to keep learning in a structured, beginner-friendly way, the next step is to register free on Edu AI. You can then explore introductory courses in NLP, Python, machine learning, and other AI topics at your own pace.