AI Certification Exam Prep — Beginner

Timed AI-900 simulations plus targeted drills to fix what costs points.

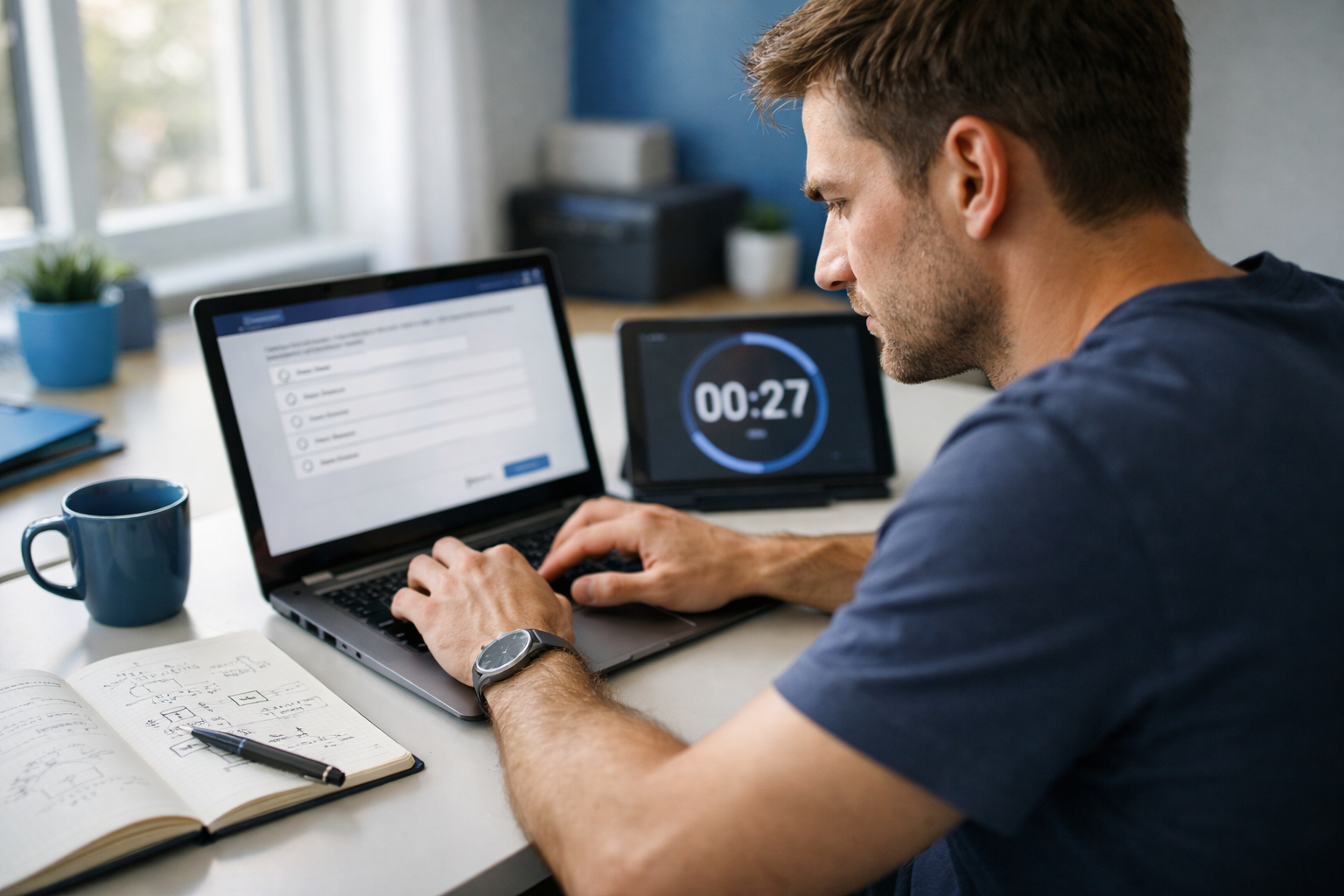

AI-900 (Microsoft Azure AI Fundamentals) rewards broad understanding, fast scenario recognition, and the ability to pick the right Azure AI approach under time pressure. This course is designed as a “mock exam marathon”: you’ll practice in timed conditions, diagnose what’s costing points, and then repair weak areas with focused drills aligned to the official exam domains.

You’ll start with an exam-ready foundation: how to register, what to expect in Microsoft’s exam interface, how scoring works, and how to build a realistic study plan even if you’ve never taken a certification exam before. From there, Chapters 2–5 map directly to the AI-900 domains and train you to answer the exam’s most common prompt patterns.

This blueprint follows the official exam domains: AI workloads, machine learning fundamentals, computer vision, NLP, and generative AI.

Each domain chapter combines concept clarity (what Microsoft expects you to know) with exam-style practice focused on scenario selection, terminology, and common distractors.

Chapter 1 sets your strategy: exam registration and logistics, study scheduling, time management, and a baseline diagnostic so you know where to focus. Chapters 2–5 go domain by domain, using “service selection” thinking (what workload is being described, what output is required, and what Azure capability best fits). You’ll also practice responsible AI considerations where they naturally appear in questions—especially around safety, privacy, transparency, and appropriate use of generative AI.

Chapter 6 is the capstone: two timed mock exam parts, followed by a structured review workflow to turn mistakes into repeatable rules. You’ll finish with a short exam-day checklist to manage pacing, reduce second-guessing, and avoid avoidable errors.

This course assumes basic IT literacy but no prior certification experience and no coding requirement. If you’re new to AI, you’ll learn the core vocabulary (training vs inference, features vs labels, common metrics) and how to translate a business scenario into an Azure AI approach.

By the end, you won’t just “know” AI-900 topics—you’ll be practiced at answering Microsoft-style questions quickly, reviewing errors efficiently, and walking into exam day with a clear plan.

Microsoft Certified Trainer (MCT)

Nadia Herrera is a Microsoft Certified Trainer who builds beginner-friendly certification paths focused on real exam objectives and time-efficient practice. She has coached hundreds of learners through Microsoft Fundamentals exams using mock exams, targeted remediation, and exam-day strategies.

AI-900 (Azure AI Fundamentals) rewards clarity over complexity. Your job in this course is not to become a data scientist—it’s to become predictable under exam conditions: understand what Microsoft is testing, recognize common distractors, and manage time across mixed question formats. This “Mock Exam Marathon” approach starts with logistics (registration and scheduling), then locks in a scoring-aware strategy, and finally builds a repeatable practice loop that exposes weak spots quickly.

Across the exam, you’ll be asked to describe AI workloads, distinguish training vs. inference, select Azure services for computer vision and NLP, and explain generative AI and Azure OpenAI safety basics. You don’t need deep math; you do need crisp definitions, appropriate service selection, and responsible AI thinking that threads through every domain. This chapter sets your plan: book the exam date, choose a two- or four-week path mapped to official domains, and run a baseline diagnostic so you know where to spend your minutes.

Exam Tip: Treat AI-900 like a “terminology + scenario matching” test. When you miss items, it’s often because you chose a plausible-sounding service or metric, not because you lacked advanced knowledge.

Practice note for Understand AI-900 format, question types, and time management: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Register for the Microsoft AI-900 exam and set a test date: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Build a 2-week and 4-week study plan mapped to official domains: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Baseline diagnostic: identify strengths and weak spots: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

AI-900 is designed for broad audiences: business stakeholders, students, and technical practitioners who need a foundation in AI concepts and Azure AI services. The exam is not a coding test. Instead, it measures whether you can describe common AI solution types (classification, regression, clustering, anomaly detection), understand the basic machine learning lifecycle (training vs. inference), and select the right Azure service for common workloads in computer vision, natural language processing, and generative AI.

Expect scenario-style prompts that ask “what should you use?” rather than “how do you implement it?” For example, you may be given a need to extract text from scanned receipts (OCR), detect objects in images, analyze sentiment in customer reviews, or build a chatbot. The exam tests that you know which service family fits: Azure AI Vision for image analysis/OCR, Azure AI Language for text analytics, and Azure OpenAI for large language model use cases.

Common trap: confusing similarly named offerings or overcomplicating the solution. If the scenario is straightforward (e.g., “extract printed text”), do not jump to custom model training. Fundamentals questions usually favor managed, prebuilt capabilities unless the scenario explicitly requires custom labeling, bespoke categories, or model training.

Exam Tip: When a question mentions “training data,” “labels,” or “model versioning,” it’s pointing to custom ML concepts. When it mentions “quickly analyze,” “prebuilt,” or “no ML expertise,” it’s pointing to prebuilt Azure AI services.

Your study plan becomes real when you register and pick a date. Schedule first, then study toward the clock. Register through Microsoft Learn and the Microsoft Certification dashboard (Cert portal), where you can locate AI-900 and launch scheduling with the exam provider. You’ll choose delivery (online proctored or test center), language, time zone, and an appointment time.

Plan for the policies that can derail test day: ID requirements, check-in timing, room/desk rules for online proctoring, and reschedule/cancellation windows. Online exams typically require a clean desk, stable internet, and a private room—no second monitor, notes, or phone access. If you cannot guarantee a compliant environment, a test center may reduce stress and eliminate last-minute technical risk.

Exam Tip: Schedule the exam at your best cognitive time (often late morning). You’re training for timed performance, not just knowledge—timing matters.

Common trap: waiting to schedule “until you feel ready.” In exam prep, readiness follows commitment. A fixed date improves focus and prevents the endless “one more resource” loop.

Microsoft certification exams use scaled scoring. The headline number you care about is the passing score (commonly 700 on a 1–1000 scale). Do not assume each question is worth the same. Some items may be weighted differently, and some exams include unscored items used for validation. The practical takeaway: focus on consistent correctness across domains rather than trying to “game” point values.

Microsoft exams typically mix question types: multiple choice, multiple response (“choose all that apply”), drag-and-drop matching, and case-based scenario sets. You must manage attention switching. Multi-select items are a frequent score leak because candidates carry over single-answer habits. Partial credit may not be awarded, so treat these items as all-or-nothing unless the exam interface states otherwise.

Time management is part of the format. You need a pace that leaves room for review without rushing the last block. Build a simple rule: on first pass, answer what you can quickly, flag the rest, and return with remaining time. However, don’t over-flag—flagging is for uncertainty, not perfectionism.

Exam Tip: Read the last line first (“What should you use?”) before reading the scenario details. This prevents you from getting lost in irrelevant context and helps you identify what the question is truly testing: service selection, ML concept, metric interpretation, or responsible AI principle.

Common trap: overreading “Azure” branding and choosing a tool because it sounds advanced. Fundamentals questions reward the simplest correct service aligned to the requirement.

AI-900 preparation should be mapped to the official skill domains (AI workloads, machine learning fundamentals, computer vision, NLP, and generative AI). Your study time should mirror likely exam emphasis: you want coverage across all domains, with extra focus where you’re weakest. This course’s “Weak Spot Repair” approach starts with a baseline diagnostic (Section 1.5), then reallocates effort using your error log.

Choose a plan length based on your starting point: the two-week path or the four-week path.

Use active recall, not passive rereading. Build a one-page “service map” you can recite: which Azure service handles OCR, object detection, sentiment, key phrase extraction, translation, conversational agents, and generative text. Also include core ML terms: features vs. labels, training vs. inference, supervised vs. unsupervised, and common metrics like accuracy, precision, recall, and F1.

Exam Tip: When you study metrics, always pair them with the risk they manage. Optimizing for precision reduces false positives; optimizing for recall reduces false negatives. The exam often frames this as a business consequence (e.g., flagging legitimate transactions vs. missing fraud).
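To make the precision and recall pairing concrete, here is a minimal Python sketch that computes both metrics and F1 from confusion-matrix counts. The fraud numbers are hypothetical and chosen only for illustration; the exam does not require you to write code.

```python
def precision_recall_f1(tp: int, fp: int, fn: int) -> tuple[float, float, float]:
    """Return (precision, recall, F1) from confusion-matrix counts."""
    # Precision: of everything flagged positive, how much was truly positive?
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    # Recall: of everything truly positive, how much did we catch?
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1: harmonic mean of precision and recall.
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


# Hypothetical fraud model: 80 frauds caught (TP),
# 20 legitimate transactions flagged (FP), 40 frauds missed (FN).
p, r, f1 = precision_recall_f1(tp=80, fp=20, fn=40)
print(f"precision={p:.2f} recall={r:.2f} f1={f1:.2f}")
```

In this made-up case, the false positives (annoyed customers) lower precision, while the missed frauds lower recall. Which one matters more depends on the business consequence described in the question.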

Common trap: spending too long on model algorithms. AI-900 rarely demands algorithm detail; it demands scenario-fit reasoning and correct terminology.

This course is a “Mock Exam Marathon,” which means your primary learning engine is timed simulation followed by disciplined review. Start with a baseline diagnostic: one timed mini-exam under realistic conditions. The goal is not a high score—it’s a clean signal about strengths and weak spots across the domains.

After each timed set, run a two-loop review:

Time management practice must be intentional. Use a pacing target (questions per minute) and practice making “good enough” decisions. If two options look close, anchor on the requirement: is it prebuilt analysis, custom training, conversational interaction, or generative creation? The exam rewards alignment to requirement more than breadth of features.
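A pacing target can be worked out with simple arithmetic. The sketch below is one possible way to do it; the function name, the 45-minute and 40-question figures, and the review fraction are all assumptions for illustration, not official exam parameters. Check your own exam confirmation for the real numbers.

```python
def pacing_plan(total_minutes: float, question_count: int,
                review_fraction: float = 0.15) -> dict:
    """Split the time budget into a first pass and a review block."""
    if total_minutes <= 0 or question_count <= 0:
        raise ValueError("total_minutes and question_count must be positive")
    if not 0 <= review_fraction < 1:
        raise ValueError("review_fraction must be in [0, 1)")
    review_minutes = total_minutes * review_fraction
    first_pass_minutes = total_minutes - review_minutes
    return {
        "review_minutes": review_minutes,
        "seconds_per_question": first_pass_minutes * 60 / question_count,
    }


# Hypothetical practice set: 45 minutes, 40 questions, 20% held back for review.
plan = pacing_plan(total_minutes=45, question_count=40, review_fraction=0.2)
print(plan)
```

Holding back a fixed review block makes the first-pass target stricter, which is the point: it forces "good enough" decisions on the first pass instead of at the end.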

Exam Tip: Your error log is your score multiplier. Most candidates repeat the same confusion—Vision vs. Language, LUIS-era terms vs. newer Language capabilities, or training vs. inference. Fix the pattern once and you gain points repeatedly.
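If you keep your error log in a structured form, spotting repeated confusions becomes a counting exercise. The following sketch assumes a hypothetical log format (a list of dictionaries with `domain`, `pattern`, and `guessed` keys); any spreadsheet or notebook layout would work just as well.

```python
from collections import Counter

# Hypothetical error log entries from one timed set.
# "guessed" marks items answered correctly by luck, which still deserve review.
error_log = [
    {"domain": "Machine learning", "pattern": "training vs inference", "guessed": False},
    {"domain": "Computer vision", "pattern": "classification vs detection", "guessed": False},
    {"domain": "Machine learning", "pattern": "training vs inference", "guessed": True},
    {"domain": "NLP", "pattern": "Vision vs Language", "guessed": False},
]


def top_patterns(log: list[dict], n: int = 3) -> list[tuple[str, int]]:
    """Return the n most frequent confusion patterns with their counts."""
    return Counter(entry["pattern"] for entry in log).most_common(n)


for pattern, count in top_patterns(error_log):
    print(f"{count}x {pattern}")
```

The most frequent pattern is the one to turn into a one-line rule first, since fixing it pays off on every question that reuses the same distractor.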

Common trap: reviewing only wrong answers. Review lucky guesses too; they are hidden weaknesses that often flip under time pressure.

Responsible AI is not a standalone topic you can “cram at the end.” In AI-900 it appears across domains as principles and practical safeguards. You should be able to recognize fairness, reliability and safety, privacy and security, inclusiveness, transparency, and accountability—and identify what actions support them in real solutions.

In machine learning scenarios, responsible AI often shows up as questions about bias in training data, explainability, and monitoring. If the dataset underrepresents a group, fairness risk increases; if stakeholders need to understand why a prediction happened (loan approval, healthcare), transparency matters. In computer vision, consider privacy and consent when analyzing faces or personal imagery. In NLP, watch for toxicity, sensitive data leakage, and overreliance on sentiment as “truth.”

Generative AI and Azure OpenAI add safety basics: content filters, grounded responses, prompt design to reduce hallucinations, and careful handling of personally identifiable information. You should be able to articulate why you would apply moderation, limit data exposure, and log/monitor outputs.

Exam Tip: If a question asks “what should you do first” in a responsible AI context, the best answer is often about defining requirements and risks, assessing data quality, or adding human oversight—not choosing a technical feature.

Common trap: treating responsible AI as optional governance. Microsoft exams frame it as a core design requirement. When two options both “work,” choose the one that reduces harm, improves transparency, or strengthens privacy/security while meeting the scenario goal.

1. You are planning your AI-900 preparation. You want a strategy that best matches how the exam is typically assessed. Which approach should you prioritize?

2. A company sets a strict time budget for the AI-900 exam because it includes mixed question formats. Which time-management action best supports certification-style success?

3. During a practice session, you notice you often confuse training and inference in scenario questions. Which scenario best represents inference rather than training?

4. You are creating a 2-week or 4-week study plan mapped to the official AI-900 domains. Which set of topics best reflects what you should expect across the exam according to the chapter summary?

5. After a baseline diagnostic, you find you missed several questions because multiple answers looked plausible (for example, selecting the wrong Azure AI service). What is the most appropriate next step to reduce this specific error pattern?

In AI-900, “Describe AI workloads” is less about building models and more about selecting the right solution type for a business scenario. The exam repeatedly tests whether you can translate a short prompt—often written in non-technical language—into the correct AI workload category, the typical approach (rules vs ML vs deep learning), and the appropriate Azure service family (Azure Machine Learning, Azure AI Vision, Azure AI Language, Azure AI Search, Azure OpenAI, and bot services). This chapter builds your scenario-selection reflexes: recognize the workload, apply responsible AI principles where the prompt hints at risk, and avoid distractors that sound sophisticated but don’t match the task.

You’ll see the same pattern across items: identify inputs (text, images, audio, tabular data), outputs (label, score, bounding box, summary, answer), and constraints (latency, explainability, privacy, human-in-the-loop). Then choose the simplest workload that satisfies the requirement.

Exam Tip: When two answers both “could work,” AI-900 usually rewards the most direct managed service aligned to the scenario (for example, OCR → Vision rather than a custom ML pipeline), unless the prompt explicitly demands custom training or domain-specific data.

This chapter also sets up your timed mini-sim mindset: you’ll practice quick workload recognition and governance cues (responsible AI) under time pressure, then use the “weak spot repair” loop—review why a distractor was tempting and what keyword should have redirected you.

Practice note for Recognize AI workload types and when to use each: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Apply responsible AI principles to exam scenarios: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Practice set: workload selection and governance questions: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Timed mini-sim: Describe AI workloads domain sprint: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

AI-900 uses “AI” as the umbrella: any system that exhibits human-like capabilities such as perception, language, and decision support. “Machine learning” is a subset of AI where models learn patterns from data rather than being explicitly programmed. “Deep learning” is a further subset of ML that uses multi-layer neural networks, especially common in vision, speech, and modern language models.

On the exam, you’re rarely asked to derive algorithms, but you are expected to classify a scenario as rules-based automation versus ML-based prediction. A common trap is assuming every intelligent behavior requires ML. If the prompt describes deterministic logic (“if customer is in region A and order total exceeds X, route to queue Y”), that’s rules or workflow automation, not ML.
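The deterministic routing example above needs no model at all. A minimal sketch, with hypothetical region, threshold, and queue names, shows why: every outcome is written out explicitly, and nothing is learned from data.

```python
def route_order(region: str, order_total: float, threshold: float = 500.0) -> str:
    """Rules-based routing: the logic is fixed by the programmer, not learned."""
    # Hypothetical rule: large orders from region A go to a dedicated queue.
    if region == "A" and order_total > threshold:
        return "queue_Y"
    return "default_queue"


print(route_order("A", 750.0))   # large order from region A
print(route_order("B", 750.0))   # same total, different region
```

If a scenario can be fully expressed this way, the exam answer is rules or workflow automation. ML enters only when the mapping from inputs to outputs has to be learned from examples.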

Another trap is equating “deep learning” with “generative AI.” Deep learning also powers classical tasks like image classification and object detection. Generative AI (like large language models) is typically used to create new text, code, or images, or to answer questions in natural language. If the scenario’s output is a label or probability (fraud risk score, churn likelihood), that’s predictive ML; if it’s a paragraph or conversation, you’re in NLP or generative AI territory.

Exam Tip: Look for the output format: numbers and categories suggest ML prediction/classification; bounding boxes suggest detection; extracted fields suggest information extraction; fluent natural language responses suggest conversational AI or generative AI.

This section maps directly to frequent AI-900 scenario stems. “Prediction” typically means forecasting a numeric value: sales next month, delivery time, energy consumption. That aligns to regression. “Classification” means choosing a category: spam vs not spam, high/medium/low risk, product type, sentiment label. “Detection” most often refers to finding something in an image (object detection) or identifying anomalies (anomaly detection) in time series or telemetry. “Recommendation” means ranking items for a user based on behavior, history, or similarity (products, articles, movies).

Azure framing matters. For classic tabular prediction/classification, Azure Machine Learning is the common answer when custom training is implied. For image detection/classification/OCR, Azure AI Vision is the typical managed service when you are not asked to build a bespoke model. For recommendations, the exam expects you to recognize the workload even if the service naming evolves; focus on “recommendations require ranking based on user-item interactions,” not just “classification of products.”

Distractors often swap terms: classification vs detection. If the prompt says “identify whether an image contains a dog,” that’s classification. If it says “locate all dogs in the image and draw rectangles,” that’s object detection. Similarly, recommendation is not the same as prediction: “predict whether a customer will buy item X” is a classification/prediction problem; “show the top 5 items the customer is likely to buy” is recommendation (ranking).

Exam Tip: Under time pressure, reduce each scenario to: input type + output type + action. “Image + bounding boxes + count” → detection. “Tabular + numeric value” → prediction/regression. “User history + ranked list” → recommendation.
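The "input type + output type" reduction can be rehearsed as a simple lookup table. This sketch only encodes the pairings described in this section; the dictionary and function names are made up for illustration, and real questions will need judgment beyond a table.

```python
# Pairings taken from the scenarios in this section: (input, output) -> workload.
WORKLOAD_RULES = {
    ("image", "bounding boxes"): "object detection",
    ("image", "label"): "image classification",
    ("tabular", "numeric value"): "regression",
    ("tabular", "label"): "classification",
    ("user history", "ranked list"): "recommendation",
}


def identify_workload(input_type: str, output_type: str) -> str:
    """Map an (input, output) pair to a workload, or signal that more reading is needed."""
    key = (input_type.strip().lower(), output_type.strip().lower())
    return WORKLOAD_RULES.get(key, "unclear: reread the requirement")


print(identify_workload("Image", "bounding boxes"))
print(identify_workload("User history", "ranked list"))
```

Extending the table with your own missed pairings is a quick way to turn this into a personal drill sheet.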

Knowledge mining is about turning large volumes of unstructured or semi-structured content into searchable, structured insights. AI-900 commonly frames this as: “We have thousands of PDFs, scans, emails, and need to search, extract key phrases, and find answers.” The correct mental model is an ingestion pipeline: extract text (OCR if needed), enrich with NLP (entities, key phrases, sentiment, language detection), and index for search. On Azure, this is typically anchored by Azure AI Search with AI enrichment.
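The extract, enrich, and index stages can be pictured with a toy pipeline. The sketch below is plain Python and does not use or imitate the Azure AI Search API; the function names, sample documents, and naive keyword "enrichment" are all stand-ins meant only to show how the stages connect.

```python
from collections import defaultdict

STOPWORDS = {"the", "a", "is", "for", "of", "and", "to", "in"}


def extract_text(document: dict) -> str:
    # Stand-in for OCR or text extraction; here the text is already available.
    return document["text"]


def enrich(text: str) -> set[str]:
    # Stand-in for NLP enrichment: naive keyword extraction.
    words = (w.strip(".,:;!?").lower() for w in text.split())
    return {w for w in words if w and w not in STOPWORDS}


def build_index(documents: list[dict]) -> dict[str, set[str]]:
    # Inverted index: term -> set of document ids containing it.
    index: dict[str, set[str]] = defaultdict(set)
    for doc in documents:
        for term in enrich(extract_text(doc)):
            index[term].add(doc["id"])
    return index


def search(index: dict[str, set[str]], query: str) -> set[str]:
    # Return ids of documents that contain every query term.
    terms = enrich(query)
    if not terms:
        return set()
    return set.intersection(*(index.get(t, set()) for t in terms))


docs = [
    {"id": "invoice-001", "text": "Invoice total for consulting services: 1200 USD."},
    {"id": "policy-hr", "text": "Vacation policy for employees in the London office."},
    {"id": "invoice-002", "text": "Invoice for office supplies and printer paper."},
]
index = build_index(docs)
print(sorted(search(index, "invoice")))
print(sorted(search(index, "office invoice")))
```

In a managed service, each stand-in function would be replaced by a real capability (OCR, entity and key phrase extraction, a search index), but the order of the stages stays the same.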

Information extraction differs from conversational generation. Extraction means pulling specific fields or facts (invoice number, total amount, date, named entities, PII). If the prompt emphasizes compliance, review, or data entry automation, you’re likely in OCR + entity extraction rather than a chatbot. A common trap is choosing a generative AI answer because it “can read documents,” but the exam usually prefers purpose-built extraction when the output is structured fields.

Watch for keywords: “index,” “search across documents,” “enrich content,” “metadata,” “entity recognition,” “OCR,” “forms,” “scanned documents.” Those cue knowledge mining. Another distractor is over-selecting Azure Machine Learning. Unless the prompt says “train a custom model,” managed Azure AI services (formerly Cognitive Services) plus search are the standard selection.

Exam Tip: If the scenario’s success metric is “find relevant documents quickly” or “enable search,” your anchor is search indexing; extraction/enrichment is supporting. If the success metric is “capture fields accurately,” your anchor is OCR/document processing, not chatbot tech.

Conversational AI scenarios revolve around interacting with users in natural language through chat or voice, often to answer FAQs, gather information, or trigger actions. AI-900 expects you to distinguish between (1) intent-based bots that route conversations to actions (traditional language understanding) and (2) generative copilots that produce open-ended answers, summaries, or grounded responses using provided content.

Scenario cues: “customer support chat,” “IT helpdesk,” “book an appointment,” “reset password,” “track an order,” “handoff to human agent.” In these, the bot needs orchestration, guardrails, and integration with business systems. If the prompt emphasizes deterministic flows and known intents, think language understanding + bot framework patterns. If it emphasizes drafting responses, summarizing policy, or answering questions from knowledge bases, think Azure OpenAI-style generative responses—ideally with grounding (retrieval) to reduce hallucinations.

Automation is a frequent distractor: some scenarios are simple workflow automation (no AI needed). If the user asks for “create a ticket when email contains ‘urgent’,” that’s pattern matching or rules. If it asks to “understand the user’s request even with varied phrasing,” that pushes you into NLP.

Exam Tip: Look for “varied wording,” “natural language,” “intent,” “entities,” and “conversation state” as the tell that AI is required.

Finally, differentiate “bot UI” from “AI brain.” The exam may offer an interface option as a distractor. The correct answer usually targets the capability (language understanding/generative model) rather than the channel (Teams, web chat) unless the prompt explicitly asks for the deployment surface.

Responsible AI is not a separate topic on AI-900—it’s embedded in workload selection and governance. You’re expected to recognize risk signals in scenario text and map them to principles: fairness (avoid biased outcomes), reliability and safety (consistent behavior, robust performance), privacy and security (protect sensitive data), inclusiveness (works for diverse users and abilities), transparency (users understand when AI is used and why), and accountability (humans remain responsible, with auditability and oversight).

Fairness cues: hiring, lending, insurance pricing, criminal justice, healthcare triage—any decision affecting people’s opportunities. The trap is choosing “maximize accuracy” as the primary goal when the prompt implies equity or regulatory scrutiny. Reliability cues: safety-critical environments, real-time operations, medical devices, autonomous systems. Privacy cues: PII, PHI, financial records, children’s data, or “must comply with regulations.” Inclusiveness cues: accessibility, multilingual audiences, speech impairments, low-bandwidth environments. Transparency cues: “explain why denied,” “users must be informed,” “auditable decisions.” Accountability cues: “human review,” “escalation,” “appeals process,” “monitoring and retraining.”

Exam Tip: When the prompt includes “must be explainable” or “users can appeal,” choose options that include interpretability, documentation, and human-in-the-loop—not only model performance. Another common trap is assuming privacy is solved by “anonymizing” without considering access control, encryption, and data minimization; the exam often expects the broader governance posture.

Your mini-sim performance improves when you apply a repeatable elimination routine. Step 1: classify the input (text, image, speech, tabular, documents). Step 2: classify the output (label/score, extracted fields, bounding boxes, ranked list, natural language response). Step 3: note constraints (real-time vs batch, custom vs prebuilt, regulated vs low-risk). Step 4: pick the workload family and only then match to the service family named in the options.

Eliminating distractors is often faster than proving the correct answer. Remove options that require custom training when the prompt never mentions labeled data or model building. Remove options that are “platform” when the prompt asks for a specific capability (for example, a bot channel vs language analysis). Remove options that mismatch output type (summarization tool for a numeric forecast; OCR tool for sentiment classification). If two options remain, prefer the one that is purpose-built and minimal for the task described.

Time traps in timed sprints: over-reading and second-guessing. Instead, underline (mentally) one noun for input and one verb for output. “Detect defects” on a manufacturing line implies vision detection; “predict maintenance needs” implies ML prediction/anomaly detection; “extract invoice totals” implies OCR + extraction; “answer employee questions from policy documents” implies retrieval-grounded conversational AI.

Exam Tip: After each practice set, do “weak spot repair” by writing a one-line rule for the distractor you fell for (e.g., “classification ≠ detection; detection draws boxes”). This converts mistakes into fast heuristics for the next timed mini-sim.

1. A retail company wants to automatically extract the total amount and invoice number from scanned PDF invoices. They prefer a managed service and do not want to build a custom ML pipeline. Which AI workload and Azure service family should you choose?

2. A support team wants to classify incoming customer emails into categories such as Billing, Technical Issue, and Cancellation. The input is unstructured text, and the output is a label. Which workload best fits?

3. A healthcare provider is considering an AI system to help prioritize patients for follow-up care. The decision could significantly impact individuals. Which responsible AI action is most appropriate to address governance concerns in this scenario?

4. A manufacturer has sensor readings (temperature, vibration, pressure) and wants to predict whether a machine will fail in the next 7 days. Which workload and Azure service family is the best match?

5. A company wants employees to type natural-language questions like "What is our vacation policy?" and get answers sourced from internal HR documents. They want a managed approach optimized for searching and retrieving relevant passages from content. Which service family is most appropriate?

AI-900 expects you to understand machine learning (ML) as a lifecycle, not as a single “train a model” event. On the exam, many questions are designed to see whether you can separate training from inference, pick an appropriate learning approach (supervised/unsupervised/reinforcement), and interpret the most common evaluation metrics without overcomplicating them. In Azure terms, you must also recognize the high-level parts of an ML workflow—what a workspace is, why you need compute, and how experiments and pipelines relate to repeatability and deployment.

This chapter is your weak-spot repair kit for ML fundamentals: you’ll practice translating business statements into ML problem types, predicting which metric matters, and spotting the common traps (like treating accuracy as universally “best,” or mixing up validation and test data). You are not expected to build deep models from scratch in AI-900; you are expected to choose sensible options and explain what the numbers mean.

Exam Tip: When an answer choice sounds like it requires advanced math or detailed algorithm tuning, it’s usually not the AI-900 target. Prefer answers focused on correct problem framing, dataset splits, and plain-language metric interpretation.

Practice note for Choose the right ML approach: supervised, unsupervised, and reinforcement learning: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Interpret core ML concepts: features, labels, overfitting, and metrics: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Select Azure tooling for ML workflows (conceptual, exam-level): document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Practice set: ML fundamentals and Azure ML scenario questions: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

AI-900 tests the ML lifecycle as a sequence of distinct phases with distinct goals: data preparation, training, validation, testing, and inference (deployment/use). The exam frequently uses scenarios where a team “trained a model and it did great” but then fails in production—your job is to identify which phase was skipped or misused.

Data prep includes collecting data, cleaning (missing values, duplicates), encoding categorical values, and feature engineering (creating inputs that help the model learn). A common exam angle is that “better data” often beats “more complex algorithm.” If the prompt mentions inconsistent formats or lots of nulls, the correct action is typically data preparation, not changing the model type.

Training is when the algorithm learns patterns from labeled or unlabeled data. In supervised learning, training uses features (inputs) and labels (the target). Inference is separate: it’s the act of using the trained model to make predictions on new data.

Validation is used during model development to tune choices (hyperparameters, feature selection, thresholds) without “peeking” at the test set. Testing is the final, unbiased estimate of performance on unseen data—ideally representative of production.

Common trap: Using the test set to tune the model. If the scenario implies repeated evaluations on the test data while adjusting the model, that’s leakage; the test metric becomes optimistically biased and no longer estimates performance on unseen data.
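To make the three-way split concrete, here is a minimal sketch in plain Python. The function name `split_dataset` and the 70/15/15 proportions are assumptions chosen for illustration; AI-900 does not prescribe specific percentages.

```python
import random


def split_dataset(rows, train_pct=70, val_pct=15, seed=42):
    """Shuffle once, then cut into train / validation / test partitions.

    The 70/15/15 default is an illustrative assumption, not an exam rule.
    """
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable
    n_train = len(shuffled) * train_pct // 100
    n_val = len(shuffled) * val_pct // 100
    train = shuffled[:n_train]                       # used to fit the model
    validation = shuffled[n_train:n_train + n_val]   # used to tune choices
    test = shuffled[n_train + n_val:]                # touched once, at the end
    return train, validation, test


train, validation, test = split_dataset(range(100))
print(len(train), len(validation), len(test))  # 70 15 15
```

Because the three partitions never overlap, tuning against `validation` leaves `test` untouched until the final evaluation.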

Exam Tip: If you see “model performs well in the lab but poorly after deployment,” think about data drift (production data differs), leakage, or an unrepresentative test set. The correct fix is often to retrain with newer data, monitor, and validate on data resembling production.

Choosing the right ML approach is a core exam objective. AI-900 keeps this conceptual: identify which learning type fits the data you have and the outcome you need.

Supervised learning applies when you have labeled examples (inputs with known outputs). Typical tasks: classification (predict a category) and regression (predict a numeric value). If the scenario mentions “historical records with outcomes,” that’s your cue for supervised learning.

Unsupervised learning applies when you do not have labels and want to discover structure, often via clustering. If the prompt says “group customers by similar behavior” or “find segments,” that points to unsupervised learning.

Reinforcement learning is about an agent learning actions through rewards and penalties, often in sequential decision-making (robot navigation, game playing, dynamic resource allocation). AI-900 usually frames it as “learn the best action over time” rather than “predict a label.”

Common trap: Mistaking anomaly detection or customer segmentation for supervised learning. Unless the scenario clearly states labeled “anomaly” examples, grouping-like tasks are typically unsupervised (clustering). Another trap is assuming reinforcement learning whenever “optimize” appears; many optimization problems are still supervised (predict demand) followed by business rules.

Exam Tip: Anchor on the presence (or absence) of labels. If the question gives you a column like “Churn: Yes/No,” it’s almost certainly supervised classification.

After choosing supervised vs unsupervised, the next exam skill is correct problem framing. AI-900 loves subtle wording: “predict whether” vs “estimate how much” vs “group similar.” Map each to the right technique.

Classification predicts discrete categories (fraud vs not fraud, spam vs not spam, approved/denied). Binary classification is common, but multi-class also appears (categorize support tickets into departments). If outputs are categories, it’s classification.

Regression predicts a continuous numeric value (house price, demand forecast, time-to-failure). If the question asks for an “amount,” “score,” “temperature,” or “number of units,” regression is typically correct.

Clustering groups items into clusters based on similarity without pre-defined labels (customer segmentation, grouping IoT devices by behavior). Outputs are cluster assignments discovered from data rather than “correct” labels.

Common trap: Confusing “rank” with regression. Ranking (e.g., ordering recommendations) can involve regression-like scores, but AI-900 usually expects you to pick classification/regression/clustering based on the described output. If the output is a probability of purchase, that’s often classification (will buy vs won’t) even though the model outputs a probability.

Exam Tip: Ignore the input data type (text, images, numeric) when choosing classification vs regression. The question is about the output you need, not the modality.
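The label-first reasoning from the last two sections can be sketched as a short heuristic. The function name `frame_problem` is hypothetical, and the rule is deliberately simplified: it would misread numeric class codes such as 0/1 as regression targets, so treat it as a study aid rather than a real framing tool.

```python
def frame_problem(label_values=None):
    """Return (learning type, technique) from the label column alone.

    Simplified heuristic for study purposes: numeric class codes like 0/1
    would be misclassified as regression targets.
    """
    if label_values is None:
        # No labels at all: discover structure instead of predicting a target.
        return ("unsupervised", "clustering")
    is_numeric = all(
        isinstance(v, (int, float)) and not isinstance(v, bool)
        for v in label_values
    )
    if is_numeric:
        # Continuous numeric target, e.g. units sold or price.
        return ("supervised", "regression")
    # Discrete categories, e.g. "Yes"/"No" or department names.
    return ("supervised", "classification")


print(frame_problem(["Yes", "No", "No"]))   # churn column
print(frame_problem([12.5, 40.0, 18.25]))   # next-day demand
print(frame_problem())                      # customer segments, no labels
```

Note that the input modality never appears in the function: only the presence and kind of the target drives the decision.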

AI-900 does not require you to compute metrics by hand, but it does require you to choose and interpret them. The exam frequently contrasts accuracy with precision/recall, and expects you to know that regression uses different measures like RMSE.

Accuracy is the fraction of correct predictions. It can be misleading with imbalanced data (e.g., 99% non-fraud). If almost everything is the negative class, predicting “not fraud” always yields high accuracy but a useless model.

Precision answers: “When the model predicts positive, how often is it correct?” Precision matters when false positives are costly (flagging legitimate transactions as fraud, or incorrectly diagnosing disease).

Recall answers: “Of all actual positives, how many did we catch?” Recall matters when missing positives is costly (failing to detect fraud, missing a safety issue). Many scenarios are about trading off precision vs recall via a threshold.

Confusion matrix summarizes true positives, false positives, true negatives, and false negatives. You don’t need to memorize every formula, but you must identify which error type the business cares about.
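A worked example shows why accuracy misleads on imbalanced data. The sketch below computes accuracy, precision, and recall from the four confusion-matrix counts; the function name `classification_metrics` and the transaction counts are made up for illustration.

```python
def classification_metrics(tp, fp, tn, fn):
    """Return (accuracy, precision, recall) from confusion-matrix counts."""
    total = tp + fp + tn + fn
    accuracy = (tp + tn) / total
    # Guard against division by zero when nothing is predicted positive
    # or when there are no actual positives.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return accuracy, precision, recall


# Illustrative data: 1,000 transactions, of which 10 are fraud.
# Model A always predicts "not fraud": accuracy 0.99, precision 0, recall 0.
always_negative = classification_metrics(tp=0, fp=0, tn=990, fn=10)

# Model B catches 8 of the 10 frauds but raises 12 false alarms:
# accuracy 0.986, precision 0.4, recall 0.8.
useful_model = classification_metrics(tp=8, fp=12, tn=978, fn=2)

print(always_negative)
print(useful_model)
```

Model A has the higher accuracy yet catches no fraud at all, which is exactly the situation the exam expects you to recognize.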

RMSE (Root Mean Squared Error) measures typical prediction error magnitude in regression. Lower RMSE is better; it’s in the same unit as the target variable (e.g., dollars). It penalizes large errors more than small ones.
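The claim that RMSE penalizes large errors more than small ones is easiest to see with numbers. In this sketch (the function name `rmse` and the sample values are illustrative), both prediction sets have the same average absolute error of 10, but the set with one large miss has twice the RMSE.

```python
import math


def rmse(actual, predicted):
    """Root mean squared error, in the same unit as the target."""
    squared_errors = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


actual = [100, 200, 300, 400]
evenly_off = [110, 190, 310, 390]   # every prediction misses by 10
one_big_miss = [100, 200, 300, 440]  # three exact, one miss of 40

print(rmse(actual, evenly_off))    # 10.0
print(rmse(actual, one_big_miss))  # 20.0
```

Squaring each error before averaging is what makes the single miss of 40 dominate the result.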

Common trap: Choosing accuracy for fraud, rare disease, intrusion detection, or defect detection. In imbalanced classification, precision/recall (and sometimes F1) are usually more informative.

Exam Tip: If the scenario says “minimize missed detections,” choose recall-focused thinking. If it says “avoid false alarms,” think precision. If it predicts a number, look for RMSE (or other regression metrics) rather than confusion matrices.

For AI-900, Azure Machine Learning (Azure ML) is tested at a recognition level: know the major building blocks and what they’re for. Don’t over-index on implementation details (SDK calls, YAML syntax); focus on concepts you can map to scenario questions.

Workspace is the top-level container for Azure ML assets: datasets/data connections, models, runs, endpoints, and linked resources. If a question asks where you manage models, runs, and deployments, “workspace” is the anchor.

Compute refers to the compute targets used for training or inference. Training compute might be a CPU/GPU cluster; inference compute might be a managed endpoint. The exam often checks that you understand training is resource-intensive and can be scaled, and inference can be real-time or batch.

Experiments are organized collections of runs. A “run” is a single execution of a training script/pipeline step with tracked parameters and metrics. This supports reproducibility and comparison (e.g., which model version performed best).

Pipelines represent repeatable workflows (data prep → train → evaluate → register → deploy). Pipelines help operationalize ML and reduce “works on my machine” issues.

Common trap: Mixing Azure ML with Azure AI services. Azure AI services (prebuilt) are often used when you want no/low training; Azure ML is used when you train custom models. If the scenario emphasizes custom training with your own labeled data and model selection, Azure ML is typically the better fit.

Exam Tip: When you see “repeatable,” “automated,” “orchestrate steps,” or “MLOps,” pipelines are a strong clue. When you see “track runs and metrics,” think experiments.

This chapter’s skills come together in the exam’s scenario style. Your success depends less on memorizing definitions and more on a consistent decision process: identify the goal, identify labels, select learning type, select problem framing, then select an evaluation metric that matches business risk.

Metric-based choices: If the prompt includes rare events (fraud, defects, security incidents), treat “high accuracy” skeptically. Ask yourself which is worse: a false positive (unnecessary escalation) or a false negative (missed incident). That directly maps to precision vs recall. For numerical forecasts, select regression metrics like RMSE and look for language about “average error magnitude.”

Overfitting signals: The exam may describe “excellent training performance but poor test performance.” That’s classic overfitting. The correct remedies at AI-900 level are conceptual: more data, simpler model, regularization, or better validation—rather than obscure algorithm tweaks.
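The overfitting signal described above amounts to comparing two scores. A rough sketch, assuming a hypothetical helper `looks_overfit` and an arbitrary gap threshold of 0.10 (there is no standard cutoff; the value is only for illustration):

```python
def looks_overfit(train_score, test_score, max_gap=0.10):
    """Flag a model whose training score far exceeds its test score.

    max_gap is an arbitrary illustrative threshold, not an industry standard.
    """
    return (train_score - test_score) > max_gap


print(looks_overfit(train_score=0.99, test_score=0.72))  # True
print(looks_overfit(train_score=0.91, test_score=0.89))  # False
```

The first model memorized its training data; the second generalizes about as well as it trained.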

Training vs inference trap: If a question describes “using the model to make predictions on new images/text,” that’s inference. If it describes “learning from labeled examples,” that’s training. Many wrong options swap these terms.

Azure workflow traps: If the scenario emphasizes rapid adoption with minimal ML expertise and prebuilt capabilities, that leans toward Azure AI services. If it emphasizes custom features, custom labels, and experiment tracking, it leans toward Azure ML workspace/experiments/pipelines.

Exam Tip: When two answers both sound plausible, choose the one that aligns with the stated business cost of errors and the presence/absence of labels. These two clues usually eliminate distractors faster than technical jargon does.

1. A retail company wants to predict the next-day demand (number of units) for each product based on historical sales, promotions, and weather. Which machine learning approach should you choose?

2. You trained a binary classification model to detect fraudulent transactions. Fraud cases are rare (about 1% of transactions). Which evaluation metric is typically most useful to understand how many flagged transactions are actually fraud and how many frauds you catch?

3. A data scientist says, "Our model performs extremely well on the training dataset but significantly worse on the validation dataset." What is the most likely issue?

4. You are designing an ML workflow in Azure. You want a central place to manage datasets, experiments, models, and deployments for a team. Which Azure resource is primarily used for this purpose?

5. A team has trained a model in Azure and wants to use it to generate predictions in a business application. Which statement best describes the difference between training and inference?

AI-900 rarely tests whether you can build a full vision pipeline; it tests whether you can recognize the type of vision problem, the shape of the output (label, bounding box, text, embedding), and then pick the right Azure service. In this chapter you’ll practice that exam reflex: read a scenario, identify the core vision task, and map it to the service that produces the needed output with the least custom work.

The most common confusion on the exam is mixing up “image analysis” (understanding what’s in a picture) with “object detection” (where an object is) and “OCR” (what text is visible). Another frequent trap: choosing a training service (custom model building) when the prompt clearly wants an out-of-the-box API, or choosing a generic service when the scenario demands domain-specific extraction.

We will follow the chapter lessons in sequence: (1) identify core computer vision tasks and outputs, (2) map scenarios to Azure vision services and capabilities, (3) practice set themes around OCR/image analysis/detection, and (4) finish with a timed mini-sim mindset—how to sprint through service-selection questions without overthinking.

Exam Tip: When a question describes “identify what is in the image” and does not mention training, default to a prebuilt vision API. When it describes “find each instance and its location,” you’re in detection territory. When it describes “extract printed/handwritten text,” it’s OCR. Force yourself to say the output out loud before picking the service.

Practice note for Identify core computer vision tasks and outputs: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Map scenarios to Azure vision services and capabilities: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Practice set: OCR, image analysis, and detection questions: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Timed mini-sim: computer vision domain sprint: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Computer vision questions on AI-900 start with a deceptively simple skill: naming the workload. The exam expects you to distinguish between classification, object detection, and segmentation based on the wording and the required output.

Classification answers “What is this image?” or “Which category best describes it?” The output is typically a label (or multiple labels) with confidence scores. This is used for sorting, routing, or moderation-like categorization. Object detection answers “Where are the objects?” and returns bounding boxes (x/y/width/height) with a class label and confidence for each detected instance. Segmentation goes further: it identifies the exact pixel region of an object (a mask/polygon), which is useful when shape matters (defects, medical imaging, or background removal).

AI-900 often embeds the answer in the output requirement: if you see “bounding box” or “coordinates,” think detection; if you see “mask” or “pixels,” think segmentation; if you see “label only,” think classification. This is part of “Identify core computer vision tasks and outputs.”
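These keyword cues can be written down as a tiny rule set. The function name `vision_task_for` and the keyword list are assumptions for this example; real exam prompts vary in wording, so treat this as a memory aid rather than a parser.

```python
def vision_task_for(requirement):
    """Map the wording of an output requirement to a vision task."""
    text = requirement.lower()
    # Pixel-level output is the most specific requirement, so check it first.
    if "mask" in text or "pixel" in text:
        return "segmentation"
    # Any request for position implies detection.
    if "bounding box" in text or "coordinates" in text or "location" in text:
        return "object detection"
    # Otherwise the prompt is asking only for a label.
    return "classification"


print(vision_task_for("Return the coordinates of each hard hat"))  # object detection
print(vision_task_for("Return a pixel mask of the defect"))        # segmentation
print(vision_task_for("Return a label only"))                      # classification
```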

Common trap: confusing detection with classification when the scenario says “identify all items on a shelf.” “All items” implies multiple instances and location, which points to detection, not single-label classification. Another trap is assuming every vision scenario needs training—many exam prompts intend prebuilt analysis.

Exam Tip: In timed questions, do a two-step parse: (1) What is the user asking for—label, location, or pixels? (2) Do they mention a need to train on company-specific images? Only then consider custom models.

Image analysis scenarios on AI-900 are the “general understanding” bucket: generate tags, captions, detect common objects/attributes, and return a structured description of what’s present. In Azure, this is typically framed as using Azure AI Vision image analysis capabilities (often described as extracting tags, descriptions/captions, categories, or detecting adult/racy content depending on the wording of the exam item).

What the exam tests is your ability to match non-location understanding tasks to the right service. If the scenario says “add alt text for accessibility,” “auto-caption images,” “tag photos for search,” or “summarize what’s in the picture,” think prebuilt image analysis. The output is usually a list of tags/objects with confidence scores and possibly a natural-language caption. This differs from detection because the prompt often doesn’t require precise coordinates.

Common trap: choosing OCR when the scenario says “understand a meme” or “caption a photo.” Even if text may exist, the primary requirement is semantic understanding (caption/tags), not text extraction. Another trap is picking Custom Vision simply because the organization has images; unless the prompt explicitly calls for training on proprietary classes, prebuilt image analysis is the expected exam answer.

How to identify correct answers: Look for verbs like “describe,” “tag,” “categorize,” “generate a caption,” “identify landmarks,” or “detect inappropriate content.” These map to image analysis. If the question mentions “return JSON with tags and confidence,” that’s also a strong signal.

Exam Tip: When multiple services look plausible, prefer the one that is (a) prebuilt and (b) directly produces the requested output type. AI-900 rewards minimal-sufficient service selection, not architecture ambition.

OCR is one of the highest-frequency vision objectives: extracting printed or handwritten text from images and documents. The key phrase the exam wants you to notice is “text in an image,” “scan,” “receipt,” “invoice,” “ID card,” “PDF,” “handwritten notes,” or “convert images to editable text.” In Azure service terms for AI-900, expect Azure AI Vision OCR/Read concepts (often described simply as an OCR capability that returns lines/words with positions).

OCR outputs differ from image analysis outputs. OCR returns the extracted characters plus structure: lines, words, and often bounding boxes around words/lines, along with confidence. This “where the text is” is not object detection; it is text layout information. The exam may include hints like “store the extracted text,” “index the text,” or “enable search over scanned documents”—all OCR-aligned.

Forms-style use cases add a subtle layer: the goal is not just reading text, but extracting fields (invoice number, total, date) and sometimes tables. On AI-900, you’re usually not expected to design a complex document intelligence solution, but you should recognize that a scenario describing key-value extraction from forms is beyond generic tagging/captioning. If the prompt emphasizes structured fields from business documents, think document extraction capabilities (in Azure, Azure AI Document Intelligence, formerly Form Recognizer) rather than plain image captions.

Common trap: selecting image analysis because “it’s an image,” even though the requirement is “extract text.” Another trap is confusing OCR with translation: OCR gets you text; translation is a subsequent NLP step.

Exam Tip: If the scenario includes “handwritten” or “scanned PDF,” OCR/Read is the anchor service. Then ask: do they need raw text + coordinates (OCR) or business fields (forms/invoice-like extraction)? Pick the most direct match.

Face/person scenarios are where AI-900 blends service selection with Responsible AI. The exam may describe: detecting whether a face is present, locating faces in an image, or analyzing facial attributes in allowed contexts. In Azure, these map to Azure AI Face capabilities when the requirement is explicitly about faces (not general objects). The typical outputs are face bounding boxes (location) and face-related metadata depending on what’s permitted.

However, the exam also expects you to know there are constraints and ethical considerations around facial recognition and sensitive attribute inference. If the scenario implies identifying a specific person (“who is this?”) or performing surveillance-like tracking, this triggers Responsible AI red flags and service policy considerations. In many exam-style prompts, the “best answer” is the one that avoids inappropriate biometric identification and instead uses less intrusive alternatives (for example, detecting presence of people without identifying individuals).

Common trap: assuming “face detection” and “face recognition” are the same. Detection is “a face exists here” (location). Recognition/verification is “this is the same person” or “match to an identity.” If the question only needs “count how many people are in the photo” or “blur faces,” it’s detection/localization, not identity.

Exam Tip: When you see person-related scenarios, pause and check for policy triggers: identification, tracking across cameras, or inferring sensitive traits. On AI-900, demonstrating Responsible AI awareness can be the differentiator between two plausible choices.

How to identify correct answers: If the prompt says “detect faces” or “return face rectangles,” Face is likely. If it says “detect objects/people” without face specificity, general vision detection may be sufficient. If it asks to identify an employee by face, expect the exam to steer you toward compliance/ethics constraints rather than a purely technical selection.

The exam loves “custom vs prebuilt” because it tests judgment, not memorization. Prebuilt vision works when your task matches common concepts (tags, captions, OCR, generic detection). Custom vision is for when your organization has domain-specific classes the prebuilt model won’t reliably recognize (defects on a manufactured part, specific product SKUs, proprietary symbols, unusual safety gear types).

In Azure, custom model building is commonly associated with Azure AI Custom Vision concepts: you provide labeled images, train a model, and then run inference. The key exam indicator is the requirement for training on your images: “train,” “label,” “improve accuracy for our products,” “new categories,” “factory-specific defects,” or “detect our brand’s packaging variations.” Those phrases are signals that prebuilt image analysis will be insufficient.

Common trap: picking custom vision just because the scenario mentions “high accuracy.” The exam usually expects prebuilt services unless there’s explicit evidence that the classes are unique or that a custom dataset exists and training is feasible. Another trap is ignoring the operational split: training happens ahead of time on labeled images (and can be repeated when new data arrives); inference is the runtime prediction. AI-900 can ask which phase needs labeled data (training) versus which phase runs on new images (inference).

Exam Tip: Use the “3 U’s” test for custom vision: Unusual classes, Unique environment, Unmet by prebuilt. If at least one is clearly stated, custom becomes likely. If none are stated, default to prebuilt to match the exam’s intent.

Finally, remember output alignment: classification models output labels; detection models output bounding boxes. Custom Vision can be used for both classification and detection, and the scenario’s output requirement should dictate which you choose.

This section mirrors the chapter’s “practice set” and “timed mini-sim” goals without turning into a quiz. Your job in the exam sprint is to (1) spot the workload, (2) choose the service, and (3) verify the output matches what the scenario needs. Most wrong answers fail step (3): they pick a plausible service but the output type doesn’t satisfy the requirement.

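Service selection patterns to memorize, recapping this chapter: tags, captions, or descriptions point to prebuilt Azure AI Vision image analysis; printed or handwritten text points to OCR/Read; structured fields from business documents point to document extraction; face location points to Azure AI Face; domain-specific classes with labeled training images point to Custom Vision.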

Output interpretation: AI-900 may present short excerpts of results (confidence scores, rectangles, lists of words). Train yourself to map outputs back to tasks. Lists of “tag: confidence” imply classification/image analysis. Arrays of “rectangle: x,y,w,h” imply detection/localization. Hierarchies of “page → line → word” imply OCR. If you see confidence values, remember they are not “accuracy”; they are model certainty for that specific prediction.
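Mapping an output excerpt back to its task can be practiced with a small sketch. The dictionary shapes below are simplified stand-ins invented for this example; they are not the actual response formats of any Azure service, and `infer_task` is a hypothetical helper.

```python
def infer_task(result):
    """Guess the vision task from the shape of a (simplified) result."""
    # A page -> line -> word hierarchy is the signature of OCR.
    if "pages" in result:
        return "OCR"
    # Items that each carry a rectangle indicate detection/localization.
    objects = result.get("objects")
    if objects and all("rectangle" in item for item in objects):
        return "detection"
    # A flat list of tags with confidence indicates image analysis.
    if "tags" in result:
        return "image analysis"
    return "unknown"


# Simplified, made-up result shapes (not real service responses).
ocr_like = {"pages": [{"lines": [{"words": ["Total", "42.00"]}]}]}
detection_like = {
    "objects": [
        {"label": "hard hat", "confidence": 0.91,
         "rectangle": {"x": 10, "y": 20, "w": 50, "h": 40}}
    ]
}
tags_like = {"tags": [{"name": "backpack", "confidence": 0.97}]}

print(infer_task(ocr_like))        # OCR
print(infer_task(detection_like))  # detection
print(infer_task(tags_like))       # image analysis
```

In each case the confidence value describes one prediction only; it says nothing about the model's overall accuracy.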

Common timed-sim trap: over-reading the scenario and inventing requirements. The exam text is usually sufficient: if it never says “train,” don’t choose a training-centric solution. If it never says “identify a person,” don’t assume recognition is required. Answer what is asked, not what might be nice.

Exam Tip: In a domain sprint, spend your first pass eliminating answers that produce the wrong output type. Only then compare the remaining services on whether they are prebuilt vs custom and whether Responsible AI constraints make one choice unsafe or noncompliant.

1. A retail company wants an application that returns a short description of what is happening in a product photo (for example, “a person holding a red backpack”) and a set of tags (for example, “person”, “backpack”, “indoor”). The company does not want to train a model. Which Azure service and feature should you use?

2. A company is building a safety solution that must locate every hard hat in images taken at a construction site and return the coordinates for each hat. No custom training is requested. Which computer vision task and output best match the requirement?

3. An insurance company must extract the policy number and customer name from scanned claim forms that may include handwritten fields. The solution should return the recognized text. Which Azure service capability should you use?

4. A manufacturer wants to identify defects on a specific part type. The defects are unique to their product line, and the company has labeled images for training. They want the model to return the location of each defect in an image. Which service should you choose?

5. You are answering an AI-900-style question: “You need to process product images and return where the company logo appears in each image.” The prompt does not mention training. What is the best first step to select the correct service?

This chapter targets the AI-900 objective area that asks you to recognize NLP and generative AI workloads and select the right Azure service for each scenario. In the Mock Exam Marathon format, you’re not trying to become a language engineer—you’re trying to quickly map a business ask (“analyze reviews,” “build a support chatbot,” “translate calls,” “generate a draft email”) to the correct Azure offering and a few key concepts the exam expects you to know. The chapter is organized to match the lesson flow: first, choosing Azure NLP services for analytics, translation, and conversations; then, understanding generative AI basics (LLMs, prompts, grounding, safety); and finally, preparing you for mixed question sets and a timed mini-sim where speed and pattern recognition matter.

On AI-900, many wrong answers are “almost right” services. Your job is to spot the keywords that anchor the workload type: extract (key phrases/entities), classify (sentiment, language, intent), converse (bots), translate (Azure AI Translator), or generate (Azure OpenAI). As you read, practice turning each requirement into: (1) workload type, (2) likely Azure service family, and (3) any responsible AI or safety constraint the scenario raises.
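As a study aid, the keyword-to-workload habit can be written down as a tiny lookup. The cue words below are illustrative assumptions chosen for this sketch, not an official list, and simple substring matching is a deliberate simplification.

```python
# Cue words (assumed for illustration) mapped to the five workload types.
CUES = {
    "extract": "extract",
    "key phrase": "extract",
    "entities": "extract",
    "sentiment": "classify",
    "intent": "classify",
    "chatbot": "converse",
    "translate": "translate",
    "generate": "generate",
    "draft": "generate",
}


def workload_type(requirement: str) -> str:
    """Return the first workload type whose cue word appears in the requirement."""
    text = requirement.lower()
    for cue, workload in CUES.items():
        if cue in text:
            return workload
    return "unclear"


for ask in ("Generate a draft email", "Translate calls", "Build a support chatbot"):
    print(ask, "->", workload_type(ask))
```

On the exam you do this in your head; the point of the sketch is that the anchor word, not the surrounding story, decides the workload type.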

Practice note for Choose Azure NLP services for analytics, translation, and conversations: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Understand generative AI basics: LLMs, prompts, grounding, and safety: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Practice set: NLP and generative AI mixed questions: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Timed mini-sim: language and generative AI domain sprint: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

NLP “analytics” workloads focus on understanding text rather than generating it. AI-900 commonly tests whether you can identify tasks like entity recognition, sentiment analysis, key phrase extraction, language detection, and summarization, and associate them with Azure’s language services (often referenced as Azure AI Language capabilities). The mental model: you submit text, the service returns structured outputs—labels, scores, spans, and summaries—used downstream in dashboards, routing rules, or search.

Entities are real-world “things” pulled from text (people, organizations, locations, dates). Expect scenarios like “extract company names from news articles” or “detect mentions of medications in notes.” Sentiment analysis classifies opinion (positive/neutral/negative) and may include a confidence score. Key phrase extraction highlights the most important terms—useful for tagging, indexing, and quick insights on large document sets. Summarization (extractive or abstractive, depending on the feature set described) appears when the requirement is “reduce long text to key points” for analysts or agents.

Exam Tip: Watch for the verb in the prompt. If the user wants to pull structure out of text (entities, phrases, sentiment), it’s NLP analytics. If they want to create new text (draft, rewrite, brainstorm), that’s generative AI (Azure OpenAI).

How to identify correct answers quickly: look for outputs described as “scores,” “labels,” “detected entities,” or “extracted phrases.” Those are classic Language/Text Analytics patterns. In the practice set portion of this chapter, build speed by translating each requirement into one of these standard outputs before you ever look at the choices.

Conversation scenarios on AI-900 often hinge on the difference between recognizing user goals and responding like a human. Language understanding (LU) is about mapping an utterance to an intent (the user’s goal) and extracting entities (parameters needed to fulfill it). For example: “Book a flight to Seattle tomorrow” might map to intent “BookFlight” and entities {destination: Seattle, date: tomorrow}. These are the core exam terms: intent = action; entity = detail.
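To make the intent/entity split concrete, here is a toy, rule-based sketch of the flight-booking example. It is not how a language understanding service works internally (those use trained models); the `Understanding` class, the `understand` function, and the regular expressions are all hypothetical and exist only to show the shape of the output.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Understanding:
    intent: str                                   # the user's goal (action)
    entities: dict = field(default_factory=dict)  # details needed to fulfill it


def understand(utterance: str) -> Understanding:
    """Toy matcher: one hard-coded intent and two hard-coded entity patterns."""
    text = utterance.lower()
    if "book a flight" in text:
        entities = {}
        # Assumption: the destination is a capitalized word following "to".
        dest = re.search(r"\bto ([A-Z][a-z]+)", utterance)
        if dest:
            entities["destination"] = dest.group(1)
        # Assumption: only two relative dates are recognized.
        date = re.search(r"\b(today|tomorrow)\b", text)
        if date:
            entities["date"] = date.group(1)
        return Understanding("BookFlight", entities)
    return Understanding("None")


result = understand("Book a flight to Seattle tomorrow")
print(result.intent, result.entities)
```

The structured result (one intent plus named entities) is what downstream code uses to trigger a workflow or fill a form.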

Conversational AI commonly layers: (1) a channel (web chat, Teams, voice), (2) a bot to manage dialog, and (3) language understanding or a generative model to interpret messages. AI-900 questions frequently describe a support chatbot that must route tickets, look up order status, or capture structured fields. If the scenario emphasizes consistent, predictable routing and slot-filling (collecting required entities), think “intent/entity recognition” style solutions rather than open-ended generation.

Exam Tip: If the requirements include “trigger workflows,” “route to departments,” “fill in a form,” or “ensure consistent answers,” that’s a strong signal for intent/entity-based conversational design. Generative AI can assist, but the exam usually wants you to recognize when classic language understanding fits best.

In the timed mini-sim, you’ll often see a brief transcript-like prompt. Under time pressure, underline the words that imply intent (“I want to…”, “help me…”, “how do I…”) and the fields that must be extracted (account number, product name, date). That points you to intent/entity language understanding patterns.

Translation questions are typically straightforward if you spot the input and output modalities. Text-to-text translation points to Azure translation capabilities (often framed as Azure AI Translator). The exam also mixes in speech scenarios: converting speech to text, translating spoken conversations, or generating synthesized speech. The key is to identify whether the scenario is about language conversion (translation), audio modality (speech recognition or synthesis), or both.

Common patterns include: “Translate customer emails into English,” “Translate a website UI,” “Provide real-time translation for a call center,” or “Add captions/subtitles in another language.” For call centers and meetings, expect a chain: speech-to-text (recognition), translation, and possibly text-to-speech (voice output). Even when the question doesn’t name the services, the workflow clues are usually explicit: microphone input implies speech; multilingual output implies translation.

Exam Tip: Separate the pipeline into steps. If the prompt starts with audio, your first step is speech recognition. If it ends with spoken output, add speech synthesis. Translation sits in the middle when the language changes.
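The step-by-step decomposition in this tip can be sketched as a short planning function. The function name and step labels are hypothetical; they name generic capabilities rather than specific Azure API calls.

```python
def plan_pipeline(input_is_audio: bool, output_is_audio: bool,
                  language_changes: bool) -> list[str]:
    """List the capabilities a scenario needs, in processing order."""
    steps = []
    if input_is_audio:
        steps.append("speech-to-text")   # audio in: recognize speech first
    if language_changes:
        steps.append("translation")      # language differs: translate in the middle
    if output_is_audio:
        steps.append("text-to-speech")   # spoken output: synthesize at the end
    return steps


# Real-time translated call: audio in, audio out, language changes.
print(plan_pipeline(True, True, True))
# Translating customer emails: text in, text out, language changes.
print(plan_pipeline(False, False, True))
```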

When you face mixed NLP/generative options in the practice set, use an “accuracy vs creativity” test: translation tasks demand faithful conversion (accuracy), while generative tasks are tolerant of variation (creativity). That one heuristic eliminates many distractors quickly.

Generative AI on AI-900 is concept-heavy: you’re expected to understand core terms well enough to select correct statements and match use cases. Start with tokens: models process text in token units (pieces of words/characters). Cost and limits are often token-based, and prompts plus responses both consume tokens. Next, context window: the maximum number of tokens the model can “see” at once (prompt + conversation history + retrieved content). If a scenario mentions “the model forgets earlier messages” or “long documents don’t fit,” the concept being tested is context window limits.
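A rough budget check illustrates why long conversations or documents can exceed the context window. This is a sketch under loud assumptions: real models use their own tokenizers, so splitting on whitespace is only a crude stand-in, and the `reserved_for_response` parameter is a hypothetical way of leaving room for the model's reply.

```python
def rough_token_count(text: str) -> int:
    # Assumption: whitespace-separated words stand in for real tokens.
    return len(text.split())


def fits_context(prompt: str, history: list[str], retrieved: list[str],
                 context_window: int, reserved_for_response: int = 0) -> bool:
    """Check whether prompt + history + retrieved content fit within the window."""
    used = rough_token_count(prompt)
    used += sum(rough_token_count(message) for message in history)
    used += sum(rough_token_count(passage) for passage in retrieved)
    return used + reserved_for_response <= context_window


history = ["Hello there", "How can I help you today?"]
print(fits_context("Summarize our chat", history, [], context_window=20))
print(fits_context("Summarize our chat", history, [], context_window=10))
```

When the check fails, something has to give: older messages are dropped, documents are shortened, or only the most relevant passages are included.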

Embeddings are numeric vector representations of text (or other data) that capture semantic meaning. Embeddings are central to semantic search and retrieval-augmented generation (RAG): instead of asking the model to memorize your documents, you store embeddings for your content, retrieve the most relevant passages for a query, and include them as grounding context in the prompt. AI-900 won’t require you to implement RAG, but it may test the idea that embeddings enable similarity matching (e.g., “find the most similar support article”).
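Similarity matching over embeddings usually comes down to comparing vectors, commonly with cosine similarity. The sketch below uses hand-made three-dimensional vectors purely for illustration; real embeddings come from an embedding model and have far more dimensions, and the article titles and numbers here are invented.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (closer to 1.0 means more similar)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy "embeddings" for three support articles (made-up values).
articles = {
    "Reset your password": [0.9, 0.1, 0.0],
    "Track an order": [0.1, 0.9, 0.2],
    "Return policy": [0.0, 0.3, 0.9],
}


def most_similar(query_vec: list[float], docs: dict[str, list[float]]) -> str:
    """Return the title whose vector is closest to the query vector."""
    return max(docs, key=lambda title: cosine_similarity(query_vec, docs[title]))


query = [0.8, 0.2, 0.1]  # stand-in for an embedded question about login trouble
print(most_similar(query, articles))
```

In a RAG flow, the passages retrieved this way are added to the prompt as grounding context; the base model itself is not retrained.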

Exam Tip: If the scenario says “find similar documents,” “semantic search,” or “retrieve relevant passages,” think embeddings. If it says “generate an answer using company docs,” think grounding/RAG rather than training a new base model.

In the chapter’s weak spot repair drills, practice labeling each generative scenario with: tokens (cost/limits), context window (memory), and embeddings (retrieval/similarity). Those three terms show up repeatedly in AI-900 generative AI items.

Azure OpenAI Service is the Azure-hosted offering for using OpenAI models with enterprise controls. AI-900 typically tests what you would use it for (drafting, summarizing, Q&A, code assistance, content generation) and what constraints apply (responsible use, content filtering, data handling considerations). You should recognize three common interaction patterns: chat (multi-turn conversation), completions (single-turn prompt-to-output generation), and image generation (creating images from text prompts). The exam often describes these without naming them explicitly.

Chat is best when the user expects a conversational experience (support agent copilot, internal HR assistant, interactive tutoring). Completions fit “generate a product description” or “rewrite this paragraph” style tasks. Image generation is for marketing mockups, concept art, or creating variations—expect the question to mention “create images based on text descriptions.”

Exam Tip: When choices include both “Azure AI Language” and “Azure OpenAI,” decide if the output is structured insights (Language) or newly generated content (OpenAI). If the output is a paragraph, email, or creative text, it’s usually OpenAI.

For the timed mini-sim “domain sprint,” prioritize identifying the interaction style first (chat vs single-turn generation vs images). Then check for constraints like “must cite company policy” (grounding needed) or “must avoid disallowed content” (safety controls).

AI-900 includes responsible AI expectations across all workloads, but generative AI amplifies them because outputs can be unpredictable. The exam commonly tests that you understand the role of content filters (to reduce hateful, sexual, violent, or self-harm content), privacy/data handling (protecting sensitive inputs), and human-in-the-loop review for high-impact scenarios. Safety isn’t an add-on; it’s part of choosing and operating the solution.

Content filtering helps enforce usage policies and reduce unsafe generations, but it does not guarantee perfection. Privacy considerations include minimizing sensitive data in prompts, access control, auditing, and understanding where data is processed and stored in your Azure environment. Human-in-the-loop is the operational pattern where a person reviews or approves outputs—common for legal, healthcare, finance, or any scenario where mistakes have consequences.
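One way to picture how content filtering and human-in-the-loop review combine is a simple release gate. This is a conceptual sketch only: the function, the domain list, and the `flagged_by_filter` flag are hypothetical and do not represent an Azure API.

```python
# Assumption: domains treated as high-impact for this illustration.
HIGH_IMPACT_DOMAINS = {"legal", "healthcare", "finance"}


def release_decision(domain: str, flagged_by_filter: bool) -> str:
    """Decide what happens to a generated draft before it reaches a customer."""
    if flagged_by_filter:
        return "block"                   # the filter caught unsafe content
    if domain in HIGH_IMPACT_DOMAINS:
        return "hold for human review"   # a person approves before sending
    return "send"


print(release_decision("marketing", flagged_by_filter=False))
print(release_decision("healthcare", flagged_by_filter=False))
print(release_decision("marketing", flagged_by_filter=True))
```

The ordering matters: filtering runs first, and human review still applies to high-impact output that passes the filter, because filtering does not guarantee perfection.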

Exam Tip: If a scenario mentions “must not generate offensive content,” “regulatory compliance,” “PII,” or “review before sending,” the correct answer usually includes safety controls and/or human oversight, not just “use an LLM.”

In your mixed practice set review, mark any missed items that involved safety language. Most learners miss these not because they don’t know the concept, but because they focus on functionality first. On AI-900, a “best answer” often balances capability with responsible deployment basics.

1. A retailer wants to analyze 50,000 customer reviews to identify sentiment and extract key phrases for dashboard reporting. Which Azure service is the best fit?

2. A travel company needs to translate chat messages from customers in multiple languages to English in near real time. Which Azure service should you use?

3. A company wants to build a customer support chatbot that can handle common questions like order status and store hours and escalate to an agent when needed. Which Azure service is designed for building this conversational solution?