AI In Marketing & Sales — Intermediate

Build an AI-driven cold email system that books meetings in 30 days.

Cold email fails when teams treat it like a copywriting exercise instead of a system. This course is a short, technical book disguised as a build guide: you’ll assemble a 30-day personalization engine that produces relevant outreach at speed while protecting deliverability and turning replies into booked meetings.

You’ll start with strategy—offer, ICP, and guardrails—so the AI has clear constraints. Then you’ll build the data inputs that make personalization real: account signals, contact context, and a scoring approach that prioritizes what matters. From there, you’ll develop a repeatable copy system: prompts, frameworks, and templates that generate tight, human-sounding emails without drifting off-brand or inventing facts.

The course is structured into six chapters that build on each other like a practical playbook. You’ll define the offer and ICP, design a research and enrichment pipeline, and then implement a copy and sending system that you can run weekly. You’ll also establish a booking playbook—follow-ups, objection handling, scheduling flow, and handoff notes—so positive replies become meetings rather than dead ends.

By the end, you’ll have a complete operating model: what to send, who to send it to, how to personalize it, how to protect your domain, how to measure results, and how to scale responsibly. If you’re building outbound for a startup, agency, or internal sales team, this blueprint will help you go from “spray and pray” to a controlled pipeline machine.

You can complete the course with a spreadsheet and an email account, plus any LLM assistant you prefer. CRM usage is optional but recommended for tracking. You’ll create templates, a prompt library, and SOPs that fit your stack—without assuming expensive tooling.

Revenue Operations Lead & AI Outreach Specialist

Sofia Chen leads Revenue Operations for B2B teams, building outbound systems that balance personalization, deliverability, and pipeline efficiency. She specializes in applying LLM workflows to prospect research, copy frameworks, and experimentation while keeping compliance and domain health intact.

Most “AI cold email systems” fail for one predictable reason: they start with writing before they finish thinking. AI can accelerate research, draft personalization at scale, and help you iterate quickly—but it cannot rescue a vague offer, a fuzzy ICP, or an unrealistic volume plan. In this chapter, you’ll build the strategic foundation your 30-day engine will run on: what outcomes you can reasonably create in a month, how to frame an offer that earns replies, how to define an ICP and persona map that AI can apply consistently, and which guardrails prevent deliverability, compliance, and brand-risk surprises.

Cold outreach is not just copywriting; it’s a system with constraints. Your constraints include: list quality, sending capacity, domain reputation, time-to-research, and meeting availability. Your leverage points include: a narrow offer, a sharply defined audience, credible proof, and disciplined measurement. You’ll leave this chapter with a draft positioning statement, an ICP/persona hypothesis map, a 30-day capacity plan, a basic governance workflow, and a KPI model that lets you diagnose problems early (before you burn a domain or waste a week on the wrong segment).

Keep one principle in mind: your goal in 30 days is not to “go viral in inboxes.” Your goal is to run enough clean experiments to find repeatable traction—then scale carefully with safeguards.

Practice note for Define a clear offer and outcome-based positioning for cold outreach: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Build an ICP and persona map that AI can reliably use: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Create a 30-day outreach plan with volume, channels, and capacity: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Set guardrails: compliance, brand voice, and risk controls: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Draft your baseline KPI model (deliverability → replies → meetings): document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Cold email is a controlled experiment, not a guaranteed pipeline. In 30 days, you can reliably build a working outreach machine, learn which segments respond, and book initial meetings—if (and only if) you respect constraints. What you can do: (1) define a narrow offer, (2) contact the right people with relevant context, (3) run a short sequence with consistent voice, (4) measure deliverability and replies, and (5) iterate using evidence. What you cannot do: fix a weak product-market fit, overcome a poor sender reputation instantly, or “personalize” your way into enterprise deals with no credibility.

A useful 30-day expectation is learning velocity: you should be able to run 2–4 meaningful tests (segment, offer angle, CTA, or proof point) while protecting deliverability. You’re optimizing for signal, not vanity volume. Many teams fail by sending too much too soon, or by changing too many variables at once. If you change ICP, subject line style, CTA, and proof point in the same week, AI will happily generate thousands of emails—and you will have no idea what worked.
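
One way to enforce the one-variable rule is to compare each variant with its control before launch. The sketch below is a minimal, hypothetical check. It assumes each test arm is described by a dict whose keys (`segment`, `offer_angle`, `cta`, `proof_point`) are illustrative names, not fields from any particular tool.

```python
from typing import Dict, List

# Hypothetical variables a single test may change (from the list in the text).
TEST_VARIABLES = ("segment", "offer_angle", "cta", "proof_point")


def changed_variables(control: Dict[str, str], variant: Dict[str, str]) -> List[str]:
    """Return the test variables whose values differ between control and variant."""
    return [key for key in TEST_VARIABLES if control.get(key) != variant.get(key)]


def is_clean_test(control: Dict[str, str], variant: Dict[str, str]) -> bool:
    """A clean test changes exactly one variable, so the result can be attributed."""
    return len(changed_variables(control, variant)) == 1


control = {"segment": "b2b_saas", "offer_angle": "speed_to_lead",
           "cta": "short_call", "proof_point": "before_after"}
variant_ok = {**control, "cta": "benchmark_check"}
variant_bad = {**control, "cta": "benchmark_check", "segment": "agencies"}

print(is_clean_test(control, variant_ok))       # True
print(changed_variables(control, variant_bad))  # ['segment', 'cta']
```

If `is_clean_test` returns False, splitting the change into separate tests keeps each result interpretable.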

Engineering judgment matters here. Start with a conservative daily volume that your domains can support, and a meeting capacity that your calendar can handle. A practical constraint check looks like this: if you can only take 8 sales calls per week, your system should not be designed to create 30 meetings per week. Your aim is a stable “booked meetings per 1000 delivered emails” rate that you can trust and scale gradually.

In the next sections, you’ll define the offer and audience in a way that an AI-driven workflow can execute consistently—without turning your outreach into generic spam.

Your offer is the engine; copy is the exhaust. The most reliable cold email offers are outcome-based, specific, and low-friction. Start by naming a painful, expensive problem your buyer already recognizes. Then attach a credible outcome and a believable path to it. “We help B2B SaaS grow” is not an offer. “We cut time-to-first-demo by 25–40% in 60 days by fixing lead routing and follow-up SLAs” is much closer, because it’s measurable and operational.

Use a simple framing that AI can reuse without improvising: Pain → Promise → Proof → Differentiation → CTA. Pain is the operational symptom (not your feature). Promise is the near-term outcome you can reasonably influence. Proof is a data point, a recognizable customer type, a before/after, or a specific mechanism. Differentiation is why your approach is distinct (not “we use AI”). The CTA is the smallest next step—usually a short call, a teardown, or a benchmark check.

Common mistakes include stacking too many outcomes (“increase revenue, reduce churn, and improve SEO”), hiding behind jargon (“synergize GTM”), or using unverifiable claims (“guaranteed meetings”). AI will amplify these problems if you feed it vague inputs. Give AI structured components it can assemble: 3 pains, 3 outcomes, 3 proof points, and 2 CTAs per segment. That becomes your “offer palette.”
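
To make the offer palette something an AI workflow can consume, it can help to store it as structured data and validate it before any drafting. A minimal sketch, assuming one hypothetical `OfferPalette` record per segment. The example entries are placeholders, and the banned-phrase list is an illustrative assumption you would extend.

```python
from dataclasses import dataclass, field
from typing import List

# Counts per segment suggested in the text: 3 pains, 3 outcomes, 3 proof points, 2 CTAs.
REQUIRED_COUNTS = {"pains": 3, "outcomes": 3, "proof_points": 3, "ctas": 2}

# Illustrative phrases that signal an unverifiable claim; extend with your own list.
UNVERIFIABLE_PHRASES = ("guaranteed",)


@dataclass
class OfferPalette:
    segment: str
    pains: List[str] = field(default_factory=list)
    outcomes: List[str] = field(default_factory=list)
    proof_points: List[str] = field(default_factory=list)
    ctas: List[str] = field(default_factory=list)

    def problems(self) -> List[str]:
        """Return count mismatches and unverifiable phrasing, in field order."""
        issues = []
        for name, expected in REQUIRED_COUNTS.items():
            items = getattr(self, name)
            if len(items) != expected:
                issues.append(f"{name}: expected {expected}, got {len(items)}")
            for item in items:
                if any(phrase in item.lower() for phrase in UNVERIFIABLE_PHRASES):
                    issues.append(f"{name}: unverifiable claim {item!r}")
        return issues


palette = OfferPalette(
    segment="B2B SaaS, sales-led",
    pains=["Slow first response to inbound leads", "Routing breaks after territory changes",
           "Follow-up SLAs are not tracked"],
    outcomes=["Shorter time-to-first-demo", "Guaranteed meetings"],
    proof_points=["Before/after routing audit", "SLA alerting mechanism", "Series B SaaS customers"],
    ctas=["15-minute call", "Routing teardown"],
)
print(palette.problems())  # flags the missing outcome and the "guaranteed" claim
```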

Your practical deliverable from this section is a single sentence positioning statement: “For [ICP], we help achieve [measurable outcome] by [mechanism], proven by [proof].” Everything else in the system should remain consistent with that statement.

An AI personalization engine is only as reliable as the audience definition you give it. “Mid-market companies” is not an ICP; it’s a guess. In this course, your ICP must be described with attributes AI can check from public signals: industry, business model, size band, geography, tech environment, recent events, and buying triggers. The best ICPs for cold email have three properties: (1) the pain is common, (2) the buyer is identifiable, and (3) the outcome is provable.

Build an ICP map with two layers: Firmographics (who the company is) and Situational triggers (what just happened that makes your message timely). Firmographics include revenue or employee band, funding stage, GTM motion (PLG vs sales-led), and compliance constraints. Triggers include recent hiring, product launches, new leadership, tool migrations, security incidents, or expansion to new markets. Triggers are where AI can add genuine relevance—if you define what to look for.

Next, define persona hypotheses: for each persona, document their job-to-be-done, success metrics, top objections, and what “proof” they trust. Don’t overcomplicate: start with 2–3 primary personas. For example, a RevOps leader cares about speed-to-lead and attribution integrity, while a VP Sales cares about pipeline coverage and rep activity quality. If you send the same proof point to both, your reply rate will look “random” because the message is misaligned.
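
A persona map is easier for an AI workflow to apply consistently when it is stored as data rather than prose. The sketch below is illustrative: the metrics come from the RevOps and VP Sales examples above, while the job-to-be-done wording, objections, and proof types are placeholder assumptions, and `pick_angle` is a hypothetical helper.

```python
from typing import Dict, List, TypedDict


class Persona(TypedDict):
    job_to_be_done: str
    success_metrics: List[str]
    top_objections: List[str]
    trusted_proof: List[str]


# Metrics mirror the examples in the text; other values are placeholders.
PERSONA_MAP: Dict[str, Persona] = {
    "revops_leader": {
        "job_to_be_done": "Keep lead routing and reporting reliable",
        "success_metrics": ["speed-to-lead", "attribution integrity"],
        "top_objections": ["We already have routing tooling"],
        "trusted_proof": ["before/after routing audit"],
    },
    "vp_sales": {
        "job_to_be_done": "Hit the number with the current team",
        "success_metrics": ["pipeline coverage", "rep activity quality"],
        "top_objections": ["Reps are already at capacity"],
        "trusted_proof": ["pipeline coverage benchmark"],
    },
}


def pick_angle(persona_tag: str) -> Dict[str, str]:
    """Return the lead metric and proof for a persona; never guess for unmapped tags."""
    if persona_tag not in PERSONA_MAP:
        raise KeyError(f"No persona hypothesis for {persona_tag!r}")
    persona = PERSONA_MAP[persona_tag]
    return {"metric": persona["success_metrics"][0], "proof": persona["trusted_proof"][0]}


print(pick_angle("vp_sales"))
```

Raising on an unmapped tag is a deliberate choice: it stops the workflow from inventing relevance for a persona you have not defined.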

By the end of this section, you should have a short, testable ICP shortlist (2–3 segments) and a persona map that AI can use to select the right angle, proof point, and CTA without hallucinating relevance.

List quality is a deliverability and performance multiplier. A “bad list” doesn’t just reduce replies—it increases bounces, spam complaints, and blocks that can poison your domain. In a 30-day program, poor data is the fastest way to waste the entire month. Your standard should be: every contact has (1) a valid role fit, (2) a credible reason they’re in your ICP, and (3) a verifiable email address with acceptable risk.

Set explicit acceptance rules before you collect data, so AI and humans can follow the same checklist. For each account, require minimum fields such as: company name, URL, country, employee band, segment tag, trigger signal (or “none”), and source link. For each contact, require: full name, title, department, seniority band, email, LinkedIn URL, and role-fit score. AI can help propose role-fit scores, but you should define the rubric (e.g., 3 = direct owner, 2 = strong influencer, 1 = adjacent).
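
The acceptance rules translate directly into a check that humans and AI can both run. A minimal sketch, assuming records arrive as dicts with the hypothetical snake_case field names below, and assuming a role-fit score of 2 or higher is the acceptance bar (set your own threshold).

```python
from typing import Dict, List

# Minimum fields from the acceptance rules above (snake_case names are illustrative).
ACCOUNT_FIELDS = ["company_name", "url", "country", "employee_band",
                  "segment_tag", "trigger_signal", "source_link"]
CONTACT_FIELDS = ["full_name", "title", "department", "seniority_band",
                  "email", "linkedin_url", "role_fit_score"]

# Role-fit rubric from the text: 3 = direct owner, 2 = strong influencer, 1 = adjacent.
ROLE_FIT_RUBRIC = {3: "direct owner", 2: "strong influencer", 1: "adjacent"}


def missing_fields(record: Dict[str, object], required: List[str]) -> List[str]:
    """Return required fields that are absent or blank; the string "none" counts as filled."""
    return [name for name in required if record.get(name) in (None, "")]


def contact_is_acceptable(contact: Dict[str, object], min_role_fit: int = 2) -> bool:
    """Accept a contact only if all fields are present and role fit meets the bar."""
    if missing_fields(contact, CONTACT_FIELDS):
        return False
    score = contact["role_fit_score"]
    return score in ROLE_FIT_RUBRIC and score >= min_role_fit


contact = {"full_name": "Dana Lee", "title": "Head of RevOps", "department": "Revenue Operations",
           "seniority_band": "director", "email": "dana@prospect.example",
           "linkedin_url": "https://www.linkedin.com/in/example", "role_fit_score": 3}
print(contact_is_acceptable(contact))  # True
```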

Equally important: define exclusion criteria. Exclusions protect your brand and reduce wasted sends. Exclude companies that are competitors, existing customers (unless you have a cross-sell motion), industries you can’t serve, regions you cannot support, and roles that don’t control or influence the outcome. Also exclude risky addresses: role-based inboxes such as “info@” or “support@”, and addresses on catch-all domains (domains that accept mail for any address, so verification cannot confirm the person exists) unless you have a validation step.
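
Exclusions work best as explicit rules applied before anything reaches a sequence. A minimal sketch with a hypothetical `ExclusionRules` class. It assumes a company can be identified by its email domain and that your verification tool has already flagged catch-all domains. Industry, region, and role exclusions would need their own fields and are not shown.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class ExclusionRules:
    competitors: Set[str] = field(default_factory=set)        # lowercase company email domains
    customers: Set[str] = field(default_factory=set)          # skip unless cross-selling
    catch_all_domains: Set[str] = field(default_factory=set)  # flagged by your verifier
    role_based_local_parts: Set[str] = field(default_factory=lambda: {"info", "support"})

    def reason(self, email: str, validated: bool = False) -> str:
        """Return why an address is excluded, or an empty string if it passes."""
        local_part, _, domain = email.strip().lower().partition("@")
        if domain in self.competitors:
            return "competitor"
        if domain in self.customers:
            return "existing customer"
        if local_part in self.role_based_local_parts:
            return "role-based address"
        if domain in self.catch_all_domains and not validated:
            return "unvalidated catch-all domain"
        return ""


rules = ExclusionRules(competitors={"rival.example"}, customers={"client.example"},
                       catch_all_domains={"catchall.example"})
for address in ["ana@rival.example", "info@prospect.example",
                "ben@catchall.example", "dana@prospect.example"]:
    print(address, "->", rules.reason(address) or "ok")
```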

Your practical output is a one-page “List Quality Standard” that your research workflow must satisfy. Later chapters will turn this into an AI-assisted research checklist, but the standards must come first.

AI can write in any style, including the wrong one. Without guardrails, you’ll get tone drift across sequences: overly casual lines, exaggerated claims, or phrasing that sounds like mass spam. Create brand voice rules that are specific enough to enforce and simple enough to follow. Define: formality level, sentence length preference, taboo phrases (e.g., “circling back,” “quick win,” “guaranteed”), and how you handle confidence (“we’ve seen” vs “we will”).

Next, set compliance and risk controls. At minimum: truthful claims only, proof must be verifiable, and personalization must be based on public or first-party information (no “I saw you were struggling” unless you have evidence). Include a policy for sensitive industries (health, finance) where claims and data handling are stricter. Also define your opt-out handling and signature requirements (company identity, contact method), aligned with the regions you email.

Now build a lightweight approval workflow so speed doesn’t create chaos. A practical setup is three layers:

Common mistakes are letting each SDR “tweak” messaging independently (creating untestable variation) or asking legal/compliance to approve every single email (creating a bottleneck). Your goal is repeatability: AI drafts within constraints; humans approve the pattern, not every instance. The practical outcome is a stable voice that prospects recognize as credible, not synthetic.

You can’t improve what you can’t diagnose. Your KPI model should mirror the funnel from technical health to human response: deliverability → engagement → replies → meetings → qualified meetings. Start with leading indicators that warn you early. If your bounce rate spikes, stop sending and fix list quality or validation. If spam complaints rise, reduce volume and revisit targeting and claims. If replies are low but deliverability is strong, your offer/ICP alignment is likely the issue.

Use practical benchmark ranges as guardrails, not promises. Many teams aim for: bounce rate under ~2% (lower is better), a spam complaint rate as close to zero as possible (Google’s sender guidelines, for example, ask senders to keep reported spam rates below 0.3%, ideally under 0.1%), and a positive reply rate that supports meeting goals. Reply rates vary widely by market and offer; what matters is trend and segment comparison under consistent conditions. Meetings booked per 1000 delivered emails is a useful normalization metric, because it forces you to account for deliverability and volume.
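
The diagnostic rules in this section can be written as an ordered check, so the first failing indicator determines the next action. A minimal sketch: `WeeklyStats` and `diagnose` are hypothetical names, the 2% bounce ceiling comes from the range above, and treating any spam complaint as a trigger and using a 1% positive-reply floor are illustrative assumptions, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class WeeklyStats:
    sent: int
    bounced: int
    spam_complaints: int
    positive_replies: int
    meetings: int

    @property
    def delivered(self) -> int:
        return self.sent - self.bounced

    @property
    def bounce_rate(self) -> float:
        return self.bounced / self.sent if self.sent else 0.0

    @property
    def meetings_per_1000_delivered(self) -> float:
        return 1000 * self.meetings / self.delivered if self.delivered else 0.0


def diagnose(stats: WeeklyStats, max_bounce_rate: float = 0.02,
             min_positive_reply_rate: float = 0.01) -> str:
    """Check indicators in funnel order and return the first recommended action."""
    if stats.bounce_rate > max_bounce_rate:
        return "stop sending: fix list quality or validation"
    if stats.spam_complaints > 0:  # assumption: any complaint warrants a review
        return "reduce volume: revisit targeting and claims"
    reply_rate = stats.positive_replies / stats.delivered if stats.delivered else 0.0
    if reply_rate < min_positive_reply_rate:
        return "deliverability looks healthy: revisit offer/ICP alignment"
    return "healthy: keep testing one variable at a time"


week = WeeklyStats(sent=500, bounced=5, spam_complaints=0, positive_replies=2, meetings=1)
print(diagnose(week), round(week.meetings_per_1000_delivered, 2))
```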

Build a simple 30-day capacity model: if you can send 50 emails/day per inbox safely, and you have 2 inboxes, that’s ~100/day. Over 20 business days, that’s ~2,000 emails sent, slightly fewer delivered once bounces are subtracted. At a current rate of 3 meetings per 1,000 delivered, that volume yields roughly 6 meetings. If your target is 10 meetings/month, you’ll need ~3,333 delivered emails (more inboxes or more days, ramped safely) or a higher conversion rate through ICP/offer/CTA improvements. This makes constraints explicit and prevents wishful planning.
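
The same arithmetic can live in a small function so the plan updates whenever an input changes. A minimal sketch with hypothetical names. The 2% bounce rate is an assumed input, and `math.ceil` rounds the required volume up, so 10 meetings at 3 per 1,000 gives 3,334 rather than the approximate 3,333 above.

```python
import math


def capacity_plan(per_inbox_per_day: int, inboxes: int, business_days: int,
                  bounce_rate: float, meetings_per_1000: float,
                  target_meetings: int) -> dict:
    """Compare the delivered volume you can send with the volume the target needs."""
    sent = per_inbox_per_day * inboxes * business_days
    delivered = round(sent * (1 - bounce_rate))
    expected_meetings = delivered * meetings_per_1000 / 1000
    required_delivered = math.ceil(target_meetings * 1000 / meetings_per_1000)
    return {
        "sent": sent,
        "delivered": delivered,
        "expected_meetings": round(expected_meetings, 1),
        "required_delivered": required_delivered,
        "gap": max(0, required_delivered - delivered),
    }


plan = capacity_plan(per_inbox_per_day=50, inboxes=2, business_days=20,
                     bounce_rate=0.02, meetings_per_1000=3, target_meetings=10)
print(plan)
```

With these inputs the plan delivers about 1,960 emails and falls roughly 1,374 short of the target volume, which frames the choice between adding capacity and improving conversion.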

Finally, define a reply classification system early (positive, neutral, objection, referral, unsubscribe, not a fit). This enables structured iteration: you can see whether objections cluster around timing, relevance, trust, or authority—and update your offer framing and persona hypotheses accordingly. This chapter’s KPI model becomes the scoreboard for the rest of the course.
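
Storing each reply’s class, plus a theme for objections, turns the clustering described above into a simple count. A minimal sketch, assuming classification happens upstream (by a person or an LLM). `ReplyClass` and `objection_clusters` are hypothetical names; the classes and themes come from this paragraph.

```python
from collections import Counter
from enum import Enum
from typing import Iterable, List, Tuple


class ReplyClass(Enum):
    POSITIVE = "positive"
    NEUTRAL = "neutral"
    OBJECTION = "objection"
    REFERRAL = "referral"
    UNSUBSCRIBE = "unsubscribe"
    NOT_A_FIT = "not a fit"


# Objection themes named in the text.
OBJECTION_THEMES = ("timing", "relevance", "trust", "authority")


def objection_clusters(replies: Iterable[Tuple[ReplyClass, str]]) -> List[Tuple[str, int]]:
    """Count objection themes, most common first; non-objection replies are ignored."""
    counts: Counter = Counter()
    for reply_class, theme in replies:
        if reply_class is ReplyClass.OBJECTION:
            if theme not in OBJECTION_THEMES:
                raise ValueError(f"Unknown objection theme: {theme!r}")
            counts[theme] += 1
    return counts.most_common()


replies_this_week = [
    (ReplyClass.OBJECTION, "timing"),
    (ReplyClass.POSITIVE, ""),
    (ReplyClass.OBJECTION, "trust"),
    (ReplyClass.OBJECTION, "timing"),
    (ReplyClass.UNSUBSCRIBE, ""),
]
print(objection_clusters(replies_this_week))
```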

1. According to Chapter 1, what is the most common reason “AI cold email systems” fail?

2. Which deliverable best supports AI producing consistent personalization at scale?

3. Which set is described as key constraints of a cold outreach system?

4. What is the chapter’s recommended 30-day goal for cold outreach?

5. Why does the chapter recommend drafting a baseline KPI model (deliverability → replies → meetings)?

Personalization at scale is not a writing problem—it’s an input problem. If your data is thin, stale, or inconsistent, the best prompt in the world will produce generic lines, mismatched assumptions, or (worse) confident hallucinations. This chapter turns “research” into an engineered system: a prospect data schema, an enrichment checklist, a repeatable AI prompt stack, and a lightweight QA loop so your personalization stays accurate and usable over 30 days of sending.

The goal is practical: create a pipeline that (1) produces enough reliable context to write relevant emails quickly, (2) standardizes what you collect so it’s reusable in snippets, and (3) prioritizes who gets the highest-effort personalization. You’ll treat signals like features in a model—captured consistently, scored by strength and recency, and mapped to specific email components (hook, proof, CTA).

As you work through the sections, keep one principle in mind: every new field you add should earn its keep. If a field doesn’t change what you write, how you position, or who you prioritize, it’s noise. High reply rates come from a small number of high-value facts applied with discipline—plus the habit of verifying what matters.

Practice note for Design a prospect data schema and enrichment checklist: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Create an AI research prompt stack for accounts and contacts: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Collect and score personalization signals by strength and recency: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Build a reusable snippet library mapped to personas and triggers: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Implement a lightweight QA process to prevent hallucinations: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Your list-building workflow determines the ceiling of your results. Great copy cannot rescue a list that’s misaligned with your ICP or built from low-intent sources. Start by defining where prospects will come from, how often you refresh, and how records flow from “candidate” to “send-ready.” In practice, use two lanes: an ICP lane (broad coverage) and a trigger lane (high relevance from recent events).

Common sources include: LinkedIn Sales Navigator searches, company databases (Apollo, ZoomInfo, Clearbit), intent and website visitor tools, conference/exhibitor lists, job boards, and “built lists” from niche directories. The highest-quality combination is usually: Sales Nav (role accuracy) + a company database (email and firmographics) + your own trigger detection (news/hiring/tech).

Design your workflow as a small assembly line:

Engineering judgment: do not optimize for list size. Optimize for throughput of “high-confidence, relevant, contactable” records. A small daily batch (e.g., 25–50 accounts/day) with strong triggers will beat dumping 5,000 generic contacts into sequences.

Enrichment becomes valuable when it changes your message. Many teams over-collect: dozens of fields that never appear in an email. Instead, design a prospect data schema that supports specific email decisions: relevance (why you), timing (why now), and routing (who to email). Keep fields in three tiers: required (must have to send), recommended (improves personalization), and optional (nice to have).

Required account fields: company name, website, industry, employee range, geo/time zone, ICP fit flag, and a short “offer alignment” label (e.g., “PLG motion,” “contact center,” “revops automation”). Required contact fields: full name, title, persona tag, email, LinkedIn URL, and seniority.

Recommended fields that consistently improve replies:

Build an enrichment checklist so collection is consistent across reps or contractors. For each record, answer: (1) What’s the strongest recent signal? (2) Which persona is most accountable? (3) What proof point will land? (4) What CTA fits their stage (quick question vs. calendar ask)? If a field cannot be tied to one of those four, remove it from your schema.
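
The pruning rule becomes mechanical if you record, for each field, which of the four questions it helps answer. A minimal sketch; the field names and their mappings are hypothetical examples, not a recommended schema.

```python
from typing import Dict, List, Optional

# The four enrichment questions from the checklist above.
QUESTIONS = ("strongest_signal", "accountable_persona", "proof_point", "cta_stage")

# Hypothetical schema: each field names the question it answers, or None.
FIELD_PURPOSE: Dict[str, Optional[str]] = {
    "recent_trigger": "strongest_signal",
    "trigger_date": "strongest_signal",
    "persona_tag": "accountable_persona",
    "seniority": "accountable_persona",
    "customer_lookalike": "proof_point",
    "buying_stage": "cta_stage",
    "founding_year": None,
}


def prune_schema(field_purpose: Dict[str, Optional[str]]) -> List[str]:
    """Keep only fields that help answer one of the four questions."""
    return [name for name, purpose in field_purpose.items() if purpose in QUESTIONS]


def unanswered_questions(field_purpose: Dict[str, Optional[str]]) -> List[str]:
    """List questions that no field in the schema currently supports."""
    covered = set(field_purpose.values())
    return [q for q in QUESTIONS if q not in covered]


print(prune_schema(FIELD_PURPOSE))
print(unanswered_questions({"persona_tag": "accountable_persona"}))
```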

AI is best used as a research assistant that summarizes, extracts, and drafts hypotheses—then you verify. Create a prompt stack (a small set of reusable prompts) that produces standardized outputs for every account so your personalization is consistent. The key is to ask for structured results: bullets, categories, and “evidence lines” with URLs.

Prompt stack (accounts):

Common mistake: asking AI for conclusions without sources (“What are their pain points?”). Instead, ask for observable evidence and then write your own hypothesis. Practical outcome: your account notes become “inputs” that map cleanly to email elements—one line for the hook, one line for relevance, one line for proof, and one line for CTA.

Keep the output small. If the AI returns a page of text, it will not be used. Your target is a 60–120 second review per account: scan the bullets, pick one strong trigger, and move on.
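
Requesting JSON makes the “evidence lines with URLs” requirement checkable before anyone reads the output. A minimal sketch: the prompt wording, category list, and helper names are hypothetical, no specific LLM API is assumed (you send the prompt however your assistant allows), and the parser simply discards evidence without a URL and caps the list to keep the review short.

```python
import json
from typing import List

# Hypothetical account-research prompt; categories loosely mirror the Chapter 1 triggers.
ACCOUNT_PROMPT = """You are a research assistant. Use only the sources listed below.
Return JSON with one key, "evidence": a list of at most 5 objects, each with
"category" (hiring, launch, leadership, tooling, or expansion),
"fact" (one sentence, observable, no guesses about pain points), and
"url" (the source the fact came from).

Company: {company}
Sources:
{sources}"""


def build_account_prompt(company: str, sources: List[str]) -> str:
    """Fill the prompt template; send the result to whichever LLM assistant you use."""
    return ACCOUNT_PROMPT.format(company=company, sources="\n".join(sources))


def usable_evidence(raw_response: str, limit: int = 5) -> List[dict]:
    """Parse the model's JSON reply and keep only evidence lines that cite a URL."""
    data = json.loads(raw_response)
    cited = [item for item in data.get("evidence", [])
             if str(item.get("url", "")).startswith(("http://", "https://"))]
    return cited[:limit]


reply = ('{"evidence": ['
         '{"category": "hiring", "fact": "Three SDR roles posted.", '
         '"url": "https://jobs.example/acme"},'
         '{"category": "launch", "fact": "Seems to be struggling.", "url": ""}]}')
print(usable_evidence(reply))
```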

Account research tells you what the company is doing; contact research tells you who cares and how to speak their language. A contact-level workflow should produce: (1) persona classification, (2) likely priorities, (3) an initiative hypothesis, and (4) a safe personalization hook. The best signals are role-linked and initiative-linked, not personality-linked.

High-utility contact signals include: functional scope (owns pipeline vs. ops vs. enablement), seniority (director vs. VP), tenure (a new leader often signals a change window), prior companies (stack familiarity), public posts (topics they emphasize), and cross-functional ownership (e.g., “Sales & RevOps” titles imply process and tooling influence).

AI prompt stack (contacts) should be explicit about boundaries:

Common mistakes: over-personalizing trivialities (“Saw you like hiking”), assuming budget authority, or using vanity details that don’t connect to value. Practical outcome: you should be able to produce a persona-tagged snippet like “Noticed you’re hiring 3 SDRs—usually that’s when teams tighten lead routing and reply handling” that feels relevant and doesn’t overreach.

Not every prospect deserves the same level of personalization. A scoring model helps you allocate effort: high-signal accounts get bespoke first lines and tailored proof; low-signal accounts get standardized messaging with lighter customization. The model should be simple enough to use daily and strict enough to prevent “random personalization.”

Score signals on two axes: strength (how directly it implies need) and recency (how current it is). Use a 1–5 scale for each, then compute a priority score = strength × recency. Examples:

Then apply a fit modifier (0.5–1.5) based on ICP closeness and a contactability modifier (0–1) based on email confidence and deliverability risk, multiplying both into the score (priority = strength × recency × fit × contactability). This produces a ranked send list that naturally emphasizes the best opportunities.
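
The scoring model is small enough to express in a few lines. A minimal sketch, assuming the fit and contactability modifiers are applied multiplicatively; `Signal`, `priority_score`, `rank`, and the example accounts are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Signal:
    strength: int  # 1-5: how directly the signal implies need
    recency: int   # 1-5: how current the signal is


def priority_score(signal: Signal, fit: float, contactability: float) -> float:
    """strength x recency (1-25), scaled by ICP fit (0.5-1.5) and contactability (0-1)."""
    if not (1 <= signal.strength <= 5 and 1 <= signal.recency <= 5):
        raise ValueError("strength and recency must be on a 1-5 scale")
    if not (0.5 <= fit <= 1.5 and 0.0 <= contactability <= 1.0):
        raise ValueError("fit must be 0.5-1.5 and contactability 0-1")
    return signal.strength * signal.recency * fit * contactability


def rank(accounts: Dict[str, Tuple[Signal, float, float]]) -> List[Tuple[str, float]]:
    """Return (account, score) pairs, highest priority first."""
    scored = [(name, priority_score(*inputs)) for name, inputs in accounts.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


accounts = {
    "acme": (Signal(strength=5, recency=4), 1.2, 0.9),     # strong, fresh, good fit
    "globex": (Signal(strength=3, recency=2), 1.0, 1.0),   # moderate signal
    "initech": (Signal(strength=5, recency=5), 0.8, 0.2),  # great signal, risky email
}
for name, score in rank(accounts):
    print(f"{name}: {score:.1f}")
```

The contactability modifier pushes a strong signal with a risky address to the bottom, which matches the intent of the model: a send that bounces earns nothing and costs reputation.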

Operationally, define tiers:

Practical outcome: your snippet library (next section) becomes the execution layer—signals map to pre-built phrasing, not improvisation. This is how you maintain quality while scaling output.

Hallucinations in cold email are expensive: they damage trust, trigger spam complaints, and create internal confusion about what “worked.” You need a lightweight QA process that fits into daily production. The goal is not perfection—it’s preventing avoidable false claims and making your research traceable.

Adopt citation habits: every high-impact personalization claim should have a source URL (company site, press release, job posting, LinkedIn post). Store it in your CRM/custom field or a research note. If you can’t cite it, rephrase as a hypothesis or remove it. For example, change “Congrats on expanding to EMEA” to “Noticed roles open in London—are you expanding the team there?”
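
If each personalization claim is stored alongside its source, the citation habit becomes a pre-send check. A minimal sketch with a hypothetical `Claim` record. It only confirms that an http(s) URL is present, so a human (or an AI second pass) still has to confirm the page actually supports the claim.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Claim:
    text: str
    source_url: Optional[str] = None
    high_impact: bool = True


def citation_flags(claims: List[Claim]) -> List[str]:
    """Flag high-impact claims whose source is missing or not an http(s) URL."""
    flags = []
    for claim in claims:
        url = claim.source_url or ""
        if claim.high_impact and not url.startswith(("http://", "https://")):
            flags.append(f"cite, rephrase as a question, or remove: {claim.text!r}")
    return flags


draft_claims = [
    Claim("Congrats on expanding to EMEA"),
    Claim("Noticed roles open in London", source_url="https://jobs.example/london"),
]
print(citation_flags(draft_claims))
```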

Lightweight QA checklist (60 seconds) before an email goes live:

Use AI as a second-pass reviewer: paste your draft and ask it to flag “potentially unverifiable claims” and “places where a citation would be required.” The practical outcome is confidence: personalization becomes a repeatable capability, not a risky creative exercise. With solid inputs, your Chapter 3 work—building a snippet library and writing sequences—will be faster and noticeably more consistent.

1. According to Chapter 2, what is the primary bottleneck for personalization at scale?

2. Why does Chapter 2 emphasize creating a standardized prospect data schema and enrichment checklist?

3. How should personalization signals be handled to decide what to write and who to prioritize?

4. What is the purpose of building a reusable snippet library mapped to personas and triggers?

5. Which practice best reflects the chapter’s approach to preventing confident hallucinations?

In Chapters 1–2 you defined who you’re emailing and how you’ll research them. This chapter turns that research into a repeatable copy system: a core cold email template, persona-specific variants that still sound like you, subject lines that get opened without triggering filters, and a follow-up sequence that adds value instead of “bumping.” The goal is engineering judgment: you want a system that produces consistently readable emails at scale, not occasional “clever” drafts.

Think of your copy system as a small set of primitives you can recombine: hook (why this is worth their attention), relevance (why you chose them), proof (why you’re credible), and CTA (the smallest next step that drives replies). AI helps you draft quickly, but you’ll get better results when you constrain the model with tight formats, word limits, and a library of approved components (signals, snippets, proof points, CTA types).

As you build, enforce two constraints that protect performance: (1) each email must pass a “10-second skim test,” and (2) each email must be defensible if forwarded internally. That means short paragraphs, plain language, no hype, and claims that match what you can prove. You’re not writing an ad; you’re starting a conversation.

By the end of this chapter you should be able to open a blank doc and reliably produce a sequence that reads like a human wrote it—fast.

Practice note for Write a cold email core template: hook → relevance → proof → CTA: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Generate persona-specific variants while keeping voice consistent: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Create subject lines that avoid spam triggers and drive opens: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Build a follow-up sequence with escalation and new value: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Assemble a prompt library for drafting, rewriting, and tightening copy: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Your best-performing cold emails will look almost boring on purpose. Deliverability and reply rate both benefit from readable, low-friction text: short lines, minimal punctuation, and no “newsletter” formatting. The structural rule is simple: one idea per sentence, one purpose per paragraph, and one clear ask at the end.

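Use the core template as a fixed skeleton: a hook tied to a specific signal, a relevance line that connects that signal to a likely problem, one compact proof point, and a single reply-based CTA. This format holds up across industries.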

Readability constraints are not aesthetic—they are throughput controls. When you send 30–100 emails/day, you can’t afford copy that requires “interpretation.” Keep total length ~60–130 words. Aim for 2–4 short paragraphs. Avoid stacked clauses, jargon, and multiple asks (e.g., “book a call, watch a video, fill a form”).

Common mistakes: writing a mini-landing page, leading with your company bio, overexplaining the product, or burying the ask. Another frequent failure is “personalization that doesn’t connect” (e.g., praising an award but never bridging to a problem you solve). Structure prevents these because each block has a job.

Practical outcome: create a “core template” doc with bracketed placeholders, like [signal], [problem hypothesis], [proof snippet], [CTA type]. Your AI system will fill these slots, but the scaffolding stays constant—this is how you maintain quality at scale.
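
A minimal Python sketch of that scaffolding, assuming the slot values arrive as a dict. The names `CORE_TEMPLATE`, `fill_template`, and `lint_email` are hypothetical; the lint thresholds mirror the readability constraints above (60–130 words, 2–4 paragraphs), with the greeting counted as a paragraph.

```python
import re

# Hypothetical core template: the skeleton is fixed, only the slots change.
CORE_TEMPLATE = (
    "Hi {first_name},\n\n"
    "{signal} {problem_hypothesis}\n\n"
    "{proof_snippet}\n\n"
    "{cta}"
)


def fill_template(slots: dict) -> str:
    """Fill every slot; raises KeyError if the AI step left one out."""
    return CORE_TEMPLATE.format(**slots)


def lint_email(body: str, min_words: int = 60, max_words: int = 130) -> list:
    """Return readability problems; an empty list means the draft passes."""
    problems = []
    word_count = len(body.split())
    if not min_words <= word_count <= max_words:
        problems.append(f"word count {word_count} outside {min_words}-{max_words}")
    paragraphs = [p for p in body.split("\n\n") if p.strip()]
    if not 2 <= len(paragraphs) <= 4:
        problems.append(f"{len(paragraphs)} paragraphs (want 2-4)")
    if re.search(r"\[[^\]]+\]", body):
        problems.append("unfilled [placeholder] left in draft")
    return problems
```

Running `lint_email` on every AI draft before it enters a sequence keeps the scaffolding honest: failures go back for a rewrite instead of being patched by hand.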

Personalization is not flattery; it’s a reasoning chain. The most reliable pattern is Observation → Hypothesis → Bridge. It forces your copy to connect a real-world signal to a plausible need, then to your offer without sounding presumptive.

Observation is the factual cue: a job change, product launch, hiring trend, tech stack, recent post, pricing page language, or a metric like traffic growth. Keep it specific and verifiable. Hypothesis is your “maybe”: what that signal could imply about priorities or friction. Use softening language (“maybe,” “curious if,” “often leads to”). Bridge is the connection to your value: the outcome you help create, not a feature dump.

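Example pattern (fill-in style): Noticed [observation]. Curious if [hypothesis]. In similar teams, [bridge to the outcome you help create].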

This pattern also makes persona variants easy. The observation may stay similar, but the hypothesis changes by role: a VP Sales cares about ramp and forecast risk; RevOps cares about process consistency; a founder cares about speed and burn. Keep your voice consistent by standardizing phrasing: choose a limited set of sentence starters (e.g., “Noticed…,” “Curious if…,” “In similar teams…”) and reuse them.
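
The Observation → Hypothesis → Bridge chain and the fixed sentence starters can be sketched as one small function. The persona-to-hypothesis phrasings below are illustrative assumptions based on the priorities just listed, not tested copy.

```python
# Fixed sentence starters keep the voice consistent across persona variants.
STARTERS = {"observation": "Noticed", "hypothesis": "Curious if", "bridge": "In similar teams,"}

# Illustrative persona -> hypothesis phrasings (assumptions; replace with your own).
PERSONA_HYPOTHESES = {
    "VP Sales": "that is adding pressure on ramp time and forecast accuracy.",
    "RevOps": "keeping the process consistent is getting harder.",
    "Founder": "speed matters more than usual right now.",
}


def personalization_line(observation: str, persona: str, bridge: str) -> str:
    """Build one Observation -> Hypothesis -> Bridge beat for a given persona."""
    hypothesis = PERSONA_HYPOTHESES.get(persona)
    opening = f"{STARTERS['observation']} {observation}."
    closing = f"{STARTERS['bridge']} {bridge}"
    if hypothesis is None:
        # No credible hypothesis for this persona: do not force one.
        return f"{opening} {closing}"
    return f"{opening} {STARTERS['hypothesis']} {hypothesis} {closing}"


print(personalization_line("you're hiring 3 SDRs in Austin", "VP Sales",
                           "we help new reps reach quota faster."))
```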

Common mistakes: “random personal detail” (mentions a podcast but no relevance), overconfident assumptions (“you must be struggling”), or “AI-sounding” hedging everywhere. Apply judgment: one strong personalization beat is enough. If you can’t form a credible hypothesis, do not force it—use a broader relevance cue (industry change, common priority) and stay honest.

Proof is where cold emails often fail: either there’s none (“we’re world-class”), or it’s too long (a paragraph of logos and awards). Your proof block should be compact, specific, and aligned with the hypothesis you just made. Treat proof as a modular component you can swap in depending on persona and offer.

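There are three reliable proof types: a specific metric with context, a credibility cue that is relevant to the reader (such as a comparable customer), and a mechanism that explains how you reduce risk.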

Engineering judgment: proof should match what the reader values. A VP Sales responds to outcomes and speed; RevOps responds to repeatability and controls; Marketing responds to positioning and segmentation clarity. Store proof blocks in a library with tags (persona, industry, offer, risk level). Then your AI prompt can pull “one metric + one credibility cue” without bloating the email.

Avoid these mistakes: unverifiable superlatives (“best,” “guaranteed”), name-dropping irrelevant logos, or using metrics without context. If you can’t share a number, use a mechanism: “We use a 30-day signal library and reply classification to iterate weekly.” Mechanism-based proof is still proof because it explains how you reduce risk.

Practical outcome: build 10–20 proof blocks in a spreadsheet. Each should be one or two sentences max, written in the same voice. This becomes the fuel for consistent drafts across sequences.
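
One way to store the proof library and pull "one metric + one credibility cue" per email, sketched with a Python dataclass. The `kind` labels (metric, credibility, mechanism) and the tag fields are assumptions; in practice the rows would be loaded from your spreadsheet.

```python
from dataclasses import dataclass, field


@dataclass
class ProofBlock:
    text: str
    kind: str                                      # assumed labels: "metric", "credibility", "mechanism"
    personas: set = field(default_factory=set)
    industries: set = field(default_factory=set)   # empty set = applies to any industry


def pick_proof(library: list, persona: str, industry: str) -> list:
    """Return up to one metric and one credibility cue for this persona and industry.
    If no metric is available, fall back to mechanism-based proof instead of inventing a number."""
    candidates = [b for b in library
                  if persona in b.personas and (not b.industries or industry in b.industries)]

    def first(kind):
        return next((b for b in candidates if b.kind == kind), None)

    metric, cue = first("metric"), first("credibility")
    if metric is None:
        metric = first("mechanism")
    return [b.text for b in (metric, cue) if b is not None]
```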

Cold email success is measured in replies, not link clicks. Links add friction, sometimes reduce deliverability, and often shift the burden to the prospect. A high-performing CTA is reply-based, low-commitment, and easy to answer in 10 seconds.

Use CTAs that create a binary or small-choice response, such as “Want the 30-day outline?”, rather than an open-ended request for a call.

Escalation matters across the sequence. Start with the smallest ask (permission or routing). If they show interest, then propose a meeting. This reduces the “sales pressure” feel while still moving toward booked meetings.

Common mistakes: asking for too much (“30 minutes this week”), offering too many options, or using click-first CTAs (“here’s my calendar link”) in the first email. Calendar links can work later, after engagement, or when you have strong brand trust. Keep your CTA aligned with your proof: if your proof is about a 30-day system, your CTA can be “Want the 30-day outline?” before “Want a call?”

Practical outcome: create a CTA library with 6–10 options mapped to sequence steps (Email 1 permission, Email 2 routing, Email 3 meeting, Email 4 break-up). Your AI drafts should select from this list rather than inventing new asks each time.
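
A sketch of a CTA library keyed by sequence step, assuming the four-step mapping above. Only "Want the 30-day outline?" comes from this chapter; the other CTA strings are placeholders to replace with your own approved asks.

```python
# Step -> (CTA type, approved options). Strings other than step 1 are placeholders.
CTA_LIBRARY = {
    1: ("permission", ["Want the 30-day outline?"]),
    2: ("routing", ["Are you the right person for this, or should I ask someone else?"]),
    3: ("meeting", ["Open to a short call next week?"]),
    4: ("break-up", ["Should I close the loop on this?"]),
}


def select_cta(step: int, draft_cta: str) -> tuple:
    """Keep the drafted CTA only if it is approved for this step; otherwise use the default.
    Returns (cta_type, cta_text)."""
    if step not in CTA_LIBRARY:
        raise ValueError(f"no CTA defined for step {step}")
    cta_type, options = CTA_LIBRARY[step]
    text = draft_cta.strip()
    return cta_type, (text if text in options else options[0])
```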

Subject lines are an optimization problem with constraints. You want enough curiosity to earn the open, enough specificity to avoid looking mass-sent, and enough “safety” to avoid spam triggers and distrust. In practice, that means 3–7 words, plain text, no hype, and no bait.
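
A rough subject-line linter for the 3–7 word, plain-text, no-hype constraint. The hype list and the all-caps and fake "Re:" checks are illustrative assumptions, not a complete spam-trigger list; filters weigh many signals beyond wording.

```python
import re

# Illustrative hype words; extend with your own banned list.
HYPE_WORDS = ["free", "guaranteed", "best", "unlock", "act now", "urgent"]


def lint_subject(subject: str) -> list:
    """Return problems with a subject line; an empty list means it passes."""
    problems = []
    words = subject.split()
    if not 3 <= len(words) <= 7:
        problems.append(f"{len(words)} words (want 3-7)")
    lowered = subject.lower()
    for phrase in HYPE_WORDS:
        if re.search(rf"\b{re.escape(phrase)}\b", lowered):
            problems.append(f"hype word: {phrase}")
    if "!" in subject:
        problems.append("exclamation mark")
    if any(w.isupper() and len(w) > 3 for w in words):  # short acronyms like SDR are allowed
        problems.append("all-caps word")
    if lowered.startswith(("re:", "fwd:")):
        problems.append("fake reply/forward prefix")
    return problems
```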

Build subject lines from three components:

Examples that balance all three:

Common mistakes: trying to be too clever, stuffing too many variables, or using “marketing language” (“Unlock growth now”). Another subtle error is misalignment: a specific subject line with a generic body. If your subject references hiring, the first sentence should connect to that observation. Consistency increases trust.

Practical outcome: maintain a subject line bank of 30–50 lines categorized by angle (hiring, launch, tech stack, metric). A/B test by holding body copy constant while swapping subjects, and track opens and downstream replies—opens without replies often indicate curiosity without relevance.
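
A sketch of the per-subject tally for such an A/B test, assuming body copy is held constant and you can export one row per delivered email as (subject, opened, replied). The `replies_per_open` column makes "opens without replies" visible.

```python
from collections import defaultdict


def subject_report(events) -> dict:
    """events: iterable of (subject, opened, replied), one row per delivered email."""
    stats = defaultdict(lambda: {"delivered": 0, "opens": 0, "replies": 0})
    for subject, opened, replied in events:
        row = stats[subject]
        row["delivered"] += 1
        row["opens"] += int(opened)
        row["replies"] += int(replied)
    report = {}
    for subject, row in stats.items():
        report[subject] = {
            "open_rate": row["opens"] / row["delivered"],
            "reply_rate": row["replies"] / row["delivered"],
            # High opens with few replies suggests curiosity without relevance.
            "replies_per_open": row["replies"] / row["opens"] if row["opens"] else 0.0,
        }
    return report
```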

AI drafting only works when you treat prompts like production tooling. Your goal is not “write an email,” but “assemble an email from approved components under strict constraints.” The most important prompt elements are: role, inputs, format, constraints, and a self-check.

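Use a two-pass workflow: a draft pass that assembles the email from approved components, then a tighten pass that shortens it and flags claims that need evidence.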

Draft prompt (template):

System: “You are an SDR copywriter. Write plain-text cold emails that are concise, credible, and compliant.”

User: “Write 3 cold email options using: Hook→Relevance→Proof→CTA. Constraints: 80–120 words, 3–4 short paragraphs, no links, no attachments, no hype words (best/guaranteed/free), 8th-grade readability. Voice: direct, calm, helpful. Inputs: Prospect role={{Role}}, company={{Company}}, signal={{Signal}}, hypothesis={{Hypothesis}}, proof blocks={{ProofOptions}}, CTA options={{CTAOptions}}. Output: label each block.”

Tighten prompt (template):

“Rewrite this email to be 15% shorter without losing meaning. Keep the same structure. Replace vague phrases with concrete nouns/verbs. Ensure the CTA is reply-based. Then output a one-line ‘risk check’ listing any claims that might require evidence.”
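
A minimal sketch of the two-pass workflow in Python. It renders the `{{Placeholder}}` inputs into an abridged copy of the draft prompt, refuses to run if an input is missing, then feeds the draft through the tighten prompt. `call_llm` is a hypothetical stand-in for whichever LLM client you use; no specific vendor API is assumed.

```python
import re

# Abridged from the draft and tighten templates above.
DRAFT_PROMPT = (
    "Write 3 cold email options using: Hook→Relevance→Proof→CTA. "
    "Constraints: 80–120 words, 3–4 short paragraphs, no links, no attachments, "
    "no hype words (best/guaranteed/free). "
    "Inputs: Prospect role={{Role}}, company={{Company}}, signal={{Signal}}, "
    "hypothesis={{Hypothesis}}, proof blocks={{ProofOptions}}, CTA options={{CTAOptions}}. "
    "Output: label each block."
)
TIGHTEN_PROMPT = (
    "Rewrite this email to be 15% shorter without losing meaning. Keep the same structure. "
    "Ensure the CTA is reply-based. Then output a one-line 'risk check' listing any claims "
    "that might require evidence."
)
PLACEHOLDER = re.compile(r"\{\{(\w+)\}\}")


def render(template: str, inputs: dict) -> str:
    """Substitute {{Name}} placeholders; fail loudly instead of sending a half-filled prompt."""
    missing = sorted(set(PLACEHOLDER.findall(template)) - set(inputs))
    if missing:
        raise KeyError(f"missing inputs: {missing}")
    return PLACEHOLDER.sub(lambda m: str(inputs[m.group(1)]), template)


def two_pass(inputs: dict, call_llm) -> tuple:
    """call_llm(prompt: str) -> str is a hypothetical wrapper around your LLM assistant."""
    draft = call_llm(render(DRAFT_PROMPT, inputs))
    tightened = call_llm(f"{TIGHTEN_PROMPT}\n\nEmail:\n{draft}")
    return draft, tightened
```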

To generate persona-specific variants while keeping voice consistent, add a “voice lock”: include 3–5 example sentences that represent your house style, plus a banned phrase list. Also standardize your punctuation rules (e.g., no exclamation marks, no em-dashes) and keep greetings/signatures uniform.
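
The banned-phrase list and punctuation rules of a voice lock are easy to enforce mechanically after generation. A sketch, with a placeholder banned list standing in for your house style:

```python
# Placeholder house rules; replace with your own banned phrases.
BANNED_PHRASES = ["i hope this email finds you well", "game-changer", "synergy", "just circling back"]
# Punctuation banned by the voice lock (written as escapes so the rule is explicit).
BANNED_CHARS = {"!": "exclamation mark", "\u2014": "em-dash"}


def voice_check(text: str) -> list:
    """Return voice-lock violations; an empty list means the draft is on-voice."""
    issues = []
    lowered = text.lower()
    for phrase in BANNED_PHRASES:
        if phrase in lowered:
            issues.append(f"banned phrase: {phrase!r}")
    for char, name in BANNED_CHARS.items():
        if char in text:
            issues.append(f"banned punctuation: {name}")
    return issues
```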

Common mistakes: prompts without constraints (models ramble), mixing too many goals (email + LinkedIn + ad copy in one), or letting the model invent proof. Never allow AI to fabricate metrics, customers, or partnerships. Your prompt should explicitly state: “If proof is missing, use mechanism-based proof and mark [NEEDS DATA].”
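
A guardrail for the "never fabricate metrics" rule can be sketched as a numeric cross-check: any number in the draft that appears in no approved source (proof blocks or verified signals) is flagged, and the draft is marked [NEEDS DATA]. This heuristic only catches numbers; invented customer names or partnerships still need human review.

```python
import re

# Whole numbers, decimals, thousands separators, and percentages (e.g. 3, 1,200, 3.5%).
NUMBER = re.compile(r"\d+(?:[.,]\d+)*%?")


def unsupported_numbers(draft: str, approved_sources: list) -> list:
    """Numbers in the draft that appear in none of the approved proof blocks or verified signals."""
    approved = set()
    for source in approved_sources:
        approved.update(NUMBER.findall(source))
    return [n for n in NUMBER.findall(draft) if n not in approved]


def guard_draft(draft: str, approved_sources: list) -> str:
    """Append a [NEEDS DATA] marker listing unsupported numbers, if any."""
    bad = unsupported_numbers(draft, approved_sources)
    return draft if not bad else f"{draft}\n[NEEDS DATA: {', '.join(bad)}]"
```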

Practical outcome: create a prompt library with 6 prompt types: Draft, Variant by persona, Subject lines, Follow-up with new value, Tighten, Compliance check. This library is what turns a one-off writing session into a 30-day personalization engine.

1. What is the intended purpose of the core cold email template (hook → relevance → proof → CTA) in this chapter?

2. Which set of “primitives” does the chapter say you should recombine to build cold emails?

3. What does the chapter recommend to get better AI-generated drafts?

4. What are the two constraints the chapter says protect email performance?

5. In the follow-up sequence described in the chapter, what should follow-ups primarily do?

Your personalization engine is only as good as its ability to reach the inbox. Deliverability is not a “one-time setup”; it’s an operating system you maintain: domain strategy, authentication, warm-up, sending patterns, content hygiene, and monitoring. The goal is simple: protect your primary brand domain, build a predictable sending reputation, and keep your outreach volume sustainable so you can run consistent experiments (subject lines, CTAs, segments) without triggering spam filters or burning your domain.

This chapter treats deliverability like engineering: we’ll set guardrails first, then scale. You’ll choose where to send from (primary vs. secondary domains), configure SPF/DKIM/DMARC for alignment, ramp volume gradually, implement throttling and reply handling rules, keep content “boring” enough for filters while still personal for humans, and monitor the leading indicators (bounces, complaints, inbox placement) before problems become outages.

Common mistake: teams obsess over copy while sending from an untrusted domain with missing authentication, blasting 300 emails on day one, and then “mysteriously” landing in spam. The practical outcome by the end of this chapter is a repeatable deliverability playbook you can run every month as you add new inboxes and sequences.

Practice note for Set up domains and authentication (SPF, DKIM, DMARC) correctly: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Create a warm-up and ramp schedule for sustainable sending: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Build sending rules: throttling, timing, and reply handling: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Reduce spam risk with content hygiene and link strategy: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Establish monitoring: bounces, spam complaints, and inbox placement: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Start with a clear separation between your brand’s “asset domain” (your primary website and core email) and your “prospecting domain(s)” used for cold outreach. The primary domain is where trust, customer email, and long-term brand equity live. Cold outreach is inherently riskier: even compliant campaigns generate more bounces, more “mark as spam,” and more negative engagement than warm inbound.

A practical approach is to buy 1–3 secondary domains that are close variations of your brand (e.g., useacme.com instead of acme.com) and create dedicated sending mailboxes on those domains. This protects your primary domain reputation while still looking legitimate to recipients. Avoid deceptive lookalikes or typosquats; they increase complaints and can create legal/brand issues.

Rotation basics: don’t rotate domains to “evade” filters; rotate to distribute volume safely across multiple mailboxes with consistent behavior. A good rule is to keep each mailbox under a conservative daily cap (discussed in Section 4.3) and add mailboxes when you need more throughput. Rotation without authentication, warm-up, and stable sending patterns will fail; rotation is capacity management, not a shortcut.
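
Rotation as capacity management can be sketched as a round-robin allocator: each mailbox stays under its cap, and leftover volume is reported rather than forced through. The mailbox addresses and the cap of 40 are placeholders.

```python
def allocate_sends(total: int, mailboxes: list, daily_cap: int) -> tuple:
    """Round-robin `total` sends across mailboxes without exceeding daily_cap per mailbox.
    Returns (allocation, unsent); unsent > 0 means you need more warmed mailboxes,
    not a higher cap."""
    allocation = {m: 0 for m in mailboxes}
    remaining = total
    while remaining > 0:
        progressed = False
        for m in mailboxes:
            if remaining == 0:
                break
            if allocation[m] < daily_cap:
                allocation[m] += 1
                remaining -= 1
                progressed = True
        if not progressed:  # every mailbox is at its cap
            break
    return allocation, remaining


plan, unsent = allocate_sends(100, ["sam@useacme.com", "alex@useacme.com", "jo@useacme.com"], 40)
print(plan, unsent)  # {'sam@useacme.com': 34, 'alex@useacme.com': 33, 'jo@useacme.com': 33} 0
```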

Engineering judgment: if your product relies heavily on email (password resets, alerts, other transactional mail), be extra strict and use separate sending infrastructure for outbound so a cold campaign can’t degrade critical deliverability. Your best long-term strategy is compartmentalization.

Email authentication tells receiving providers (Google, Microsoft, Yahoo) whether your domain authorizes the sending server and whether the message was altered. Authentication is table stakes; the nuance is alignment—making sure the visible “From” domain aligns with the authenticated identity so filters see consistency.

SPF (Sender Policy Framework) is a DNS record listing which servers can send mail for your domain. Keep it tight: include only the providers you actually use (e.g., Google Workspace, Microsoft 365, your sending platform). Common mistake: stacking too many “include:” statements until you exceed the limit of 10 DNS lookups per SPF evaluation; past that limit, receivers return a permanent error (permerror) and SPF effectively fails.
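
RFC 7208 (section 4.6.4) counts these terms toward the 10-lookup limit: the `include`, `a`, `mx`, `ptr`, and `exists` mechanisms plus the `redirect` modifier. The sketch below counts them in a single record. It does not resolve nested includes, which also count toward the limit, so treat its result as a lower bound.

```python
import re

# Terms that trigger DNS queries and count toward the limit of 10 (RFC 7208, section 4.6.4).
LOOKUP_TERMS = {"include", "a", "mx", "ptr", "exists", "redirect"}


def count_spf_lookups(record: str) -> int:
    """Count DNS-querying terms in a single SPF record (top level only, no recursion)."""
    terms = record.split()
    if not terms or terms[0].lower() != "v=spf1":
        raise ValueError("not an SPF record")
    count = 0
    for term in terms[1:]:
        term = term.lower().lstrip("+-~?")              # drop the qualifier, e.g. ~all -> all
        name = re.split(r"[:=/]", term, maxsplit=1)[0]  # include:x, redirect=x, a/24
        if name in LOOKUP_TERMS:
            count += 1
    return count


record = ("v=spf1 include:_spf.google.com include:spf.protection.outlook.com "
          "ip4:203.0.113.5 mx ~all")
print(count_spf_lookups(record))  # 3 at the top level; nested includes add more
```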

DKIM (DomainKeys Identified Mail) signs messages with a cryptographic key published in DNS. Enable DKIM for every sending domain and verify signatures pass. A passing signature shows the message was not altered in transit and ties it to your domain, which helps that domain build reputation.

DMARC builds on SPF/DKIM to specify what receivers should do when authentication fails, and it provides reporting. Start with a monitoring policy (p=none) and move toward enforcement (p=quarantine, then p=reject) once aggregate reports show your legitimate mail passing and aligned.
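
A sketch of the staged DMARC records, assuming the `useacme.com` example domain and a hypothetical `dmarc-reports@useacme.com` mailbox for aggregate (`rua`) reports. The record is published as a TXT value at `_dmarc.<domain>`; `adkim` and `aspf` select relaxed (`r`) or strict (`s`) alignment.

```python
def dmarc_record(policy: str, rua: str, adkim: str = "r", aspf: str = "r") -> str:
    """Build the TXT value for _dmarc.<your-sending-domain>."""
    if policy not in ("none", "quarantine", "reject"):
        raise ValueError("policy must be none, quarantine, or reject")
    if adkim not in ("r", "s") or aspf not in ("r", "s"):
        raise ValueError("alignment modes are 'r' (relaxed) or 's' (strict)")
    return f"v=DMARC1; p={policy}; rua=mailto:{rua}; adkim={adkim}; aspf={aspf}"


# Monitor first, then tighten once reports show legitimate mail passing and aligned.
for stage in ("none", "quarantine", "reject"):
    print(f'_dmarc.useacme.com. TXT "{dmarc_record(stage, "dmarc-reports@useacme.com")}"')
```

How long to stay at each stage is a judgment call; move on only after several report cycles show no legitimate senders failing.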

Alignment essentials: DMARC passes when SPF and/or DKIM pass and align with the From domain. If you send from name@useacme.com, ensure SPF/DKIM are set for useacme.com, not just a third-party subdomain. Many deliverability “mysteries” are simply misalignment.
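
The alignment rule can be sketched as follows. `org_domain` is a deliberate simplification (last two labels) that is wrong for suffixes like `.co.uk`; real implementations consult the Public Suffix List. Here `spf_domain` means the envelope (MAIL FROM / Return-Path) domain and `dkim_domain` the signature's `d=` value; `sendtool.example` is a hypothetical third-party sender.

```python
def org_domain(domain: str) -> str:
    """Naive organizational domain (last two labels). Wrong for suffixes like .co.uk;
    production code should use the Public Suffix List."""
    labels = domain.lower().rstrip(".").split(".")
    return ".".join(labels[-2:])


def aligned(from_domain: str, auth_domain: str, mode: str = "r") -> bool:
    """Strict ('s') alignment needs an exact match; relaxed ('r') needs the same org domain."""
    if mode == "s":
        return from_domain.lower().rstrip(".") == auth_domain.lower().rstrip(".")
    return org_domain(from_domain) == org_domain(auth_domain)


def dmarc_would_pass(from_domain: str, spf_pass: bool, spf_domain: str,
                     dkim_pass: bool, dkim_domain: str) -> bool:
    """DMARC passes if SPF or DKIM both passes and aligns (relaxed) with the From domain."""
    return ((spf_pass and aligned(from_domain, spf_domain))
            or (dkim_pass and aligned(from_domain, dkim_domain)))
```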

Practical workflow: after configuring DNS, send test emails to multiple providers, then use header analyzers (many inbox tools show SPF/DKIM/DMARC results) to confirm “pass” with alignment. Only then start warm-up. Treat authentication like unit tests—you don’t deploy volume without green checks.
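
The "green checks" step can be scripted: save each test message's raw source and read its `Authentication-Results` header. A sketch using Python's standard `email` package; the regex parsing is simplified and assumes one verdict per method.

```python
import re
from email import message_from_string


def auth_results(raw_message: str) -> dict:
    """Extract spf/dkim/dmarc verdicts from a raw message's Authentication-Results headers."""
    msg = message_from_string(raw_message)
    verdicts = {}
    for header in msg.get_all("Authentication-Results", []):
        for method in ("spf", "dkim", "dmarc"):
            match = re.search(rf"\b{method}=(\w+)", str(header))
            if match and method not in verdicts:
                verdicts[method] = match.group(1).lower()
    return verdicts


def all_green(verdicts: dict) -> bool:
    """True only when SPF, DKIM, and DMARC all report pass."""
    return all(verdicts.get(m) == "pass" for m in ("spf", "dkim", "dmarc"))
```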

Warm-up is reputation building through consistent, low-risk sending before you introduce cold traffic. Providers watch behavior over time: volume spikes, low reply rates, and high deletes-without-open can push you into spam. A sustainable ramp looks boring on purpose.

Warm-up principles:

A practical ramp schedule per mailbox (example): Days 1–3: 10–15/day; Days 4–7: 20–30/day; Week 2: 35–50/day; Week 3: 60–80/day; Week 4: 80–120/day. Your safe ceiling depends on your domain age, list quality, and provider. If bounces or complaints increase, pause growth and stabilize.
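
The example ramp expressed as data, with the "pause growth if bounces or complaints increase" rule built in. The bands are the example above, not universal limits, and staying in the last band after day 28 is an assumption.

```python
# (last day of band, low, high) per mailbox, from the example schedule.
RAMP = [(3, 10, 15), (7, 20, 30), (14, 35, 50), (21, 60, 80), (28, 80, 120)]


def ramp_band(day: int) -> tuple:
    """Return the (low, high) daily send band for a mailbox on a given day (day 1 = first send)."""
    if day < 1:
        raise ValueError("day starts at 1")
    for last_day, low, high in RAMP:
        if day <= last_day:
            return low, high
    return RAMP[-1][1], RAMP[-1][2]  # after week 4, stay in the last band


def next_cap(day: int, previous_cap: int, health_worsened: bool) -> int:
    """Grow to at least the band's low end, unless bounces or complaints rose; then hold."""
    if health_worsened:
        return previous_cap
    low, _high = ramp_band(day)
    return max(previous_cap, low)
```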

Daily send caps are not just about provider limits; they’re about protecting reputation while your AI system iterates. If you’re testing new subject lines or personalization patterns, run smaller batches first. Many teams scale the wrong variable: they increase volume while still uncertain about targeting and data hygiene, which amplifies negative signals.

Sending rules (throttling and timing): throttle at both the mailbox and campaign level. Spread sends across a window (e.g., 3–5 hours) rather than sending everything at once, avoid sending large volumes at the top of the hour, and schedule around recipient time zones when possible.

Reply handling is also part of deliverability: when someone replies, stop the sequence for that contact immediately and route the thread to a human (or to a monitored inbox) so they get a timely response. Slow or missing responses to replies can reduce engagement quality over time.
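
A sketch of the throttling and reply-handling rules, assuming `window_start` is already in the recipient's local time. The jitter amount and the "skip the first three minutes of an hour" rule are illustrative choices for avoiding top-of-the-hour bursts; `on_reply` uses a plain dict and list as stand-ins for your sequencing tool.

```python
import random
from datetime import datetime, timedelta


def spread_sends(n: int, window_start: datetime, window_hours: float = 4, seed=None) -> list:
    """Spread n sends evenly across the window with random jitter inside each slot."""
    if n <= 0:
        return []
    rng = random.Random(seed)
    slot = timedelta(hours=window_hours) / n
    times = []
    for i in range(n):
        jitter = timedelta(seconds=rng.uniform(0, slot.total_seconds() * 0.8))
        t = window_start + slot * i + jitter
        if t.minute < 3:  # nudge sends off the top of the hour
            t += timedelta(minutes=3)
        times.append(t)
    return sorted(times)


def on_reply(contact_id: str, pending_steps: dict, human_queue: list) -> None:
    """Stop all remaining steps for this contact and route the thread to a person."""
    pending_steps.pop(contact_id, None)
    human_queue.append(contact_id)
```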

Content does not “fix” a poor sending reputation, but bad content can absolutely create spam triggers. Content hygiene is the set of choices that reduce filter suspicion while keeping your email clear and human.

Formatting: keep cold emails plain and readable. Use short paragraphs, minimal styling, and one clear ask. Over-designed HTML templates, heavy formatting, or tracking pixels can increase risk—especially early in ramp. A safe default is plain text or very light HTML that looks like plain text.

Personalization hygiene matters too. AI-generated personalization that is wrong (misstated role, incorrect company facts, invented achievements) increases spam complaints because it feels deceptive. Use your personalization library (signals, snippets, proof points) with verification rules: only include a claim if the source is known, recent, and specific. “I saw your Series B” is risky if your data is stale; “I noticed you’re hiring 3 SDRs in Austin” is strong if verified and time-bound.
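
The verification rule (known source, recent, specific) can be partly automated. The sketch checks the first two conditions; specificity still needs a human eye. The `Signal` fields and the 30-day freshness window are assumptions.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Signal:
    text: str          # e.g. "hiring 3 SDRs in Austin"
    source_url: str    # where it was observed; empty string means unknown
    observed_on: date


def usable_signals(signals: list, today: date, max_age_days: int = 30) -> list:
    """Keep only signals with a known source that are recent enough to cite."""
    return [s for s in signals
            if s.source_url and (today - s.observed_on).days <= max_age_days]
```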

Link strategy: if you need a calendar link, consider holding it until a reply, or present it as optional (“If easier, I can send a link”). Early-stage deliverability improves when the first message is reply-oriented rather than click-oriented.
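
A pre-send content check that encodes these defaults: no links in the first email, at most one in later steps, and no HTML markup or image tags. The regexes and the one-link limit are rough illustrative assumptions, not provider rules.

```python
import re

URL = re.compile(r"https?://\S+|www\.\S+", re.IGNORECASE)
HTML_TAG = re.compile(r"<\s*(img|table|div|style|a)\b", re.IGNORECASE)


def hygiene_issues(body: str, step: int, max_links_later: int = 1) -> list:
    """Return content-hygiene issues for a message at a given sequence step."""
    issues = []
    links = URL.findall(body)
    if step == 1 and links:
        issues.append("first email contains a link; keep it reply-oriented")
    elif len(links) > max_links_later:
        issues.append(f"{len(links)} links (max {max_links_later})")
    if HTML_TAG.search(body):
        issues.append("HTML markup or image found; send plain text")
    return issues
```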

Bounces are among the fastest ways to damage reputation because they signal poor list quality. Treat bounce management as a core subsystem of your 30-day engine, not an afterthought. Your objective: keep hard bounces near zero and react quickly when bounce rates rise.

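First, know the difference: a hard bounce is a permanent delivery failure (for example, the mailbox or domain does not exist), so the address should never be emailed again; a soft bounce is a temporary failure (for example, a full mailbox or a server that is temporarily unavailable) that may succeed on a later attempt.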
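
Bounce notifications usually carry an SMTP reply code and often an enhanced status code (RFC 3463), where class 5 means a permanent failure and class 4 a transient one. A sketch classifier follows; the separate `policy` bucket for 5.7.x (security or policy) rejections is an assumption worth keeping, because those often point to a sending or content problem rather than a bad address.

```python
import re


def classify_bounce(diagnostic: str) -> str:
    """Classify a bounce as 'hard', 'soft', 'policy', 'not_a_bounce', or 'unknown'."""
    enhanced = re.search(r"\b([245])\.(\d{1,3})\.\d{1,3}\b", diagnostic)
    if enhanced:
        cls, subject = enhanced.group(1), enhanced.group(2)
        if cls == "5" and subject == "7":
            return "policy"  # 5.7.x: investigate before suppressing the address
        return {"5": "hard", "4": "soft"}.get(cls, "not_a_bounce")
    basic = re.search(r"\b([245])\d\d\b", diagnostic)  # fall back to the 3-digit reply code
    if basic:
        return {"5": "hard", "4": "soft"}.get(basic.group(1), "not_a_bounce")
    return "unknown"
```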

Build suppression lists that operate across tools: your sequencing platform, your CRM, and any enrichment systems. When an address hard-bounces, it should be globally blocked so it can’t re-enter the pipeline next week via a new import. Likewise, if someone requests not to be contacted, suppress them and, where appropriate, their domain to prevent re-targeting through a different contact.
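
A minimal sketch of one global suppression list that every import passes through. In practice it would live in a shared table or CRM field; the in-memory class here is a hypothetical stand-in.

```python
class SuppressionList:
    """One global list consulted by every tool before a contact enters a sequence."""

    def __init__(self):
        self.addresses = set()
        self.domains = set()

    def suppress_address(self, email: str) -> None:
        self.addresses.add(email.strip().lower())

    def suppress_domain(self, domain: str) -> None:
        self.domains.add(domain.strip().lower())

    def is_suppressed(self, email: str) -> bool:
        email = email.strip().lower()
        return email in self.addresses or email.rsplit("@", 1)[-1] in self.domains

    def filter_import(self, emails: list) -> list:
        """Drop suppressed contacts from a new import before it reaches sequencing."""
        return [e for e in emails if not self.is_suppressed(e)]
```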

Practical workflow: validate emails before sending (especially for newly scraped or enriched contacts). Use a validation service or at minimum apply rules (reject role accounts if they historically bounce, reject domains with no MX records, flag “accept-all” domains for caution). Then, during sending, automatically label bounce types, sync those labels back to your database, and exclude them from future steps.
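
These rules can be sketched as a function with the MX lookup injected, so you can plug in a validation service or a DNS library (for example, dnspython's `dns.resolver.resolve(domain, "MX")`). The role-prefix list is illustrative; per the rule above, reject only the role accounts your own history shows tend to bounce.

```python
from typing import Callable

# Illustrative role-account prefixes; adjust based on your own bounce history.
ROLE_PREFIXES = {"info", "admin", "sales", "support", "noreply", "no-reply"}


def validate_contact(email: str, has_mx: Callable[[str], bool], accept_all_domains: set) -> str:
    """Return 'reject', 'caution', or 'ok'. has_mx is an injected (hypothetical) dependency."""
    local, _, domain = email.strip().lower().rpartition("@")
    if not local or not domain:
        return "reject"   # not a usable address
    if local in ROLE_PREFIXES:
        return "reject"
    if not has_mx(domain):
        return "reject"   # domain cannot receive mail
    if domain in accept_all_domains:
        return "caution"  # accept-all: validation cannot confirm the mailbox exists
    return "ok"
```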

Common mistake: continuing to send follow-ups to a contact after a soft bounce without tracking how often that address soft-bounces. Another mistake is ignoring bounce spikes by segment: if one data source has higher bounces, quarantine that source and investigate rather than letting it contaminate the whole domain’s reputation.
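
Both mistakes can be caught with simple counts. A sketch, assuming you can export (data_source, bounced) pairs and per-address bounce events; the 3% threshold, 50-send minimum, and two-soft-bounce limit are placeholder values to calibrate against your own baseline.

```python
from collections import Counter


def sources_to_quarantine(sends, threshold: float = 0.03, min_sends: int = 50) -> list:
    """sends: iterable of (data_source, bounced). Flags sources whose bounce rate exceeds
    the threshold once they have enough volume to judge."""
    totals, bounces = Counter(), Counter()
    for source, bounced in sends:
        totals[source] += 1
        bounces[source] += int(bounced)
    return sorted(s for s in totals
                  if totals[s] >= min_sends and bounces[s] / totals[s] > threshold)


def repeat_soft_bouncers(events, limit: int = 2) -> list:
    """events: iterable of (address, bounce_kind). Addresses that soft-bounced `limit`+ times."""
    counts = Counter(addr for addr, kind in events if kind == "soft")
    return sorted(a for a, n in counts.items() if n >= limit)
```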

Deliverability problems compound quietly. By the time the team notices “replies are down,” you may already be partially throttled or routed to spam. Monitoring turns deliverability into an observable system with leading indicators and clear actions.

Track these metrics per mailbox, per domain, and per campaign: bounce rate (hard and soft), spam complaint rate, and inbox placement.
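
A sketch of per-group rates from a send log, assuming each send row carries `mailbox`, `domain`, and `campaign` fields plus one final `outcome` (delivered, bounced, or complaint). Inbox placement can't be computed from send logs alone; it typically comes from seed or placement tests.

```python
from collections import defaultdict


def health_by(sends, key: str) -> dict:
    """Bounce and complaint rates per group; key is 'mailbox', 'domain', or 'campaign'."""
    counts = defaultdict(lambda: {"sent": 0, "bounced": 0, "complaints": 0})
    for send in sends:
        c = counts[send[key]]
        c["sent"] += 1
        if send["outcome"] == "bounced":
            c["bounced"] += 1
        elif send["outcome"] == "complaint":
            c["complaints"] += 1
    report = {}
    for group, c in counts.items():
        delivered = c["sent"] - c["bounced"]
        report[group] = {
            "bounce_rate": c["bounced"] / c["sent"],
            # A complaint implies the email was delivered, so divide by delivered.
            "complaint_rate": c["complaints"] / delivered if delivered else 0.0,
        }
    return report
```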

Troubleshooting sequence (use this order): (1) Stop scaling—freeze volume increases and reduce daily sends if needed. (2) Check authentication—SPF/DKIM/DMARC still passing and aligned; DNS changes can break things. (3) Inspect list quality—did a new segment, data vendor, or enrichment step increase bounces? (4) Review content changes—new links, new tracking, new phrasing, or an overly templated structure. (5) Evaluate sending patterns—bursty sends, too many new inboxes at once, or sending outside normal business hours for the recipient.

Practical outcomes: create an “email health dashboard” and a weekly deliverability review. When an issue appears, make one change at a time, then observe for 48–72 hours. Deliverability is probabilistic; multiple simultaneous changes destroy your ability to learn. If you detect a domain-level issue (widespread spam placement), shift campaigns to other warmed mailboxes, lower volume, and rebuild reputation rather than pushing harder.
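
The "one change at a time, then observe for 48–72 hours" rule can be enforced in the change log itself. A sketch, where `scope` is whatever you change at (a mailbox, domain, or campaign) and the 48-hour default is the low end of that window:

```python
from datetime import datetime, timedelta


class ChangeLog:
    """Enforce one change at a time per scope, with an observation window between changes."""

    def __init__(self, observe_hours: int = 48):
        self.observe = timedelta(hours=observe_hours)
        self.entries = []  # (when, scope, change)

    def record(self, when: datetime, scope: str, change: str) -> bool:
        """Append a change unless the last change on the same scope is still being observed."""
        for prev_when, prev_scope, _ in reversed(self.entries):
            if prev_scope == scope:
                if when - prev_when < self.observe:
                    return False
                break
        self.entries.append((when, scope, change))
        return True
```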

Finally, remember that deliverability is a function of trust. The system that wins long term is the one that behaves like a careful human sender: accurate targeting, respectful volume, fast suppression, and consistent engagement.

1. Why does the chapter describe deliverability as an “operating system” rather than a one-time setup?

2. What is the primary deliverability goal stated in the chapter?

3. Which set of controls does the chapter treat as key “guardrails” before scaling outreach volume?

4. What is the risk described by the common mistake of blasting high volume on day one from an untrusted domain without authentication?

5. Which metrics are highlighted as leading indicators to monitor before deliverability problems become outages?

Cold email is not a creative writing contest; it’s a controlled learning system. The difference between “we tried outbound” and “outbound is a predictable meeting engine” is instrumentation, classification, and disciplined iteration. In earlier chapters you built your ICP, research workflow, and personalization library, then wrote sequences with deliverability safeguards. Now you need a measurement loop that tells you what to change—and what not to touch.

This chapter turns your campaign into an experiment pipeline. You’ll define a tracking taxonomy (so metrics mean the same thing each week), use AI to classify replies at scale (so you can interpret signal instead of drowning in inbox noise), design A/B tests that isolate variables (so you don’t change five things and learn nothing), and run weekly iteration sprints with decision rules (so improvements compound).

The mindset shift: stop “tweaking” and start “versioning.” Every meaningful change becomes a new version with a hypothesis, expected impact, and a place in your change log. That discipline is what prevents random edits from quietly damaging a sequence that was working.

Practice note for Instrument your funnel metrics and tracking taxonomy: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Classify replies with AI (positive, neutral, objection, unsubscribe): document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Design A/B tests for subject, hook, proof, CTA, and sequence length: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Run weekly iteration sprints using insights and decision rules: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Build a reporting dashboard and change log to prevent random tweaks: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Outbound metrics are a funnel, not a scoreboard. You need a small set of metrics that map to business outcomes and can be trusted week to week. Start with four: open rate, reply rate, positive reply rate, and meetings booked rate. Track them per sequence version, per ICP segment, and per sender domain, because averages hide problems.

Open rate is primarily a deliverability and subject-line proxy, but treat it cautiously: privacy features can inflate or distort it (Apple Mail Privacy Protection, for example, preloads remote images, so tracking pixels can register opens that never happened). Use opens as an early warning signal (e.g., “something broke”) rather than a success KPI. Reply rate is a stronger indicator that your targeting and message are relevant enough to provoke a response. Positive reply rate is your core “message-market fit” signal: how many replies actually move you forward. Meetings booked rate ties everything to revenue workflow and exposes where handoffs fail (e.g., slow follow-up, unclear CTA, poor scheduling options).

Common mistake: optimizing for open rate by writing clickbait subjects. It may increase opens while decreasing positive replies. Engineering judgment here is to optimize the metric closest to revenue that you can measure reliably. For most teams, that is positive replies and meetings, with deliverability metrics as guardrails.
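
A sketch of the four funnel metrics for one sequence version. Using delivered emails as the common denominator is an assumption that keeps versions comparable; some teams instead report positive replies as a share of all replies.

```python
from dataclasses import dataclass


@dataclass
class FunnelCounts:
    delivered: int
    opens: int
    replies: int
    positive_replies: int
    meetings: int


def funnel_rates(c: FunnelCounts) -> dict:
    """All rates use delivered emails as the denominator so versions are comparable."""
    names = ("open_rate", "reply_rate", "positive_reply_rate", "meeting_rate")
    if c.delivered == 0:
        return {name: 0.0 for name in names}
    return {
        "open_rate": c.opens / c.delivered,  # early-warning signal only
        "reply_rate": c.replies / c.delivered,
        "positive_reply_rate": c.positive_replies / c.delivered,
        "meeting_rate": c.meetings / c.delivered,
    }
```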

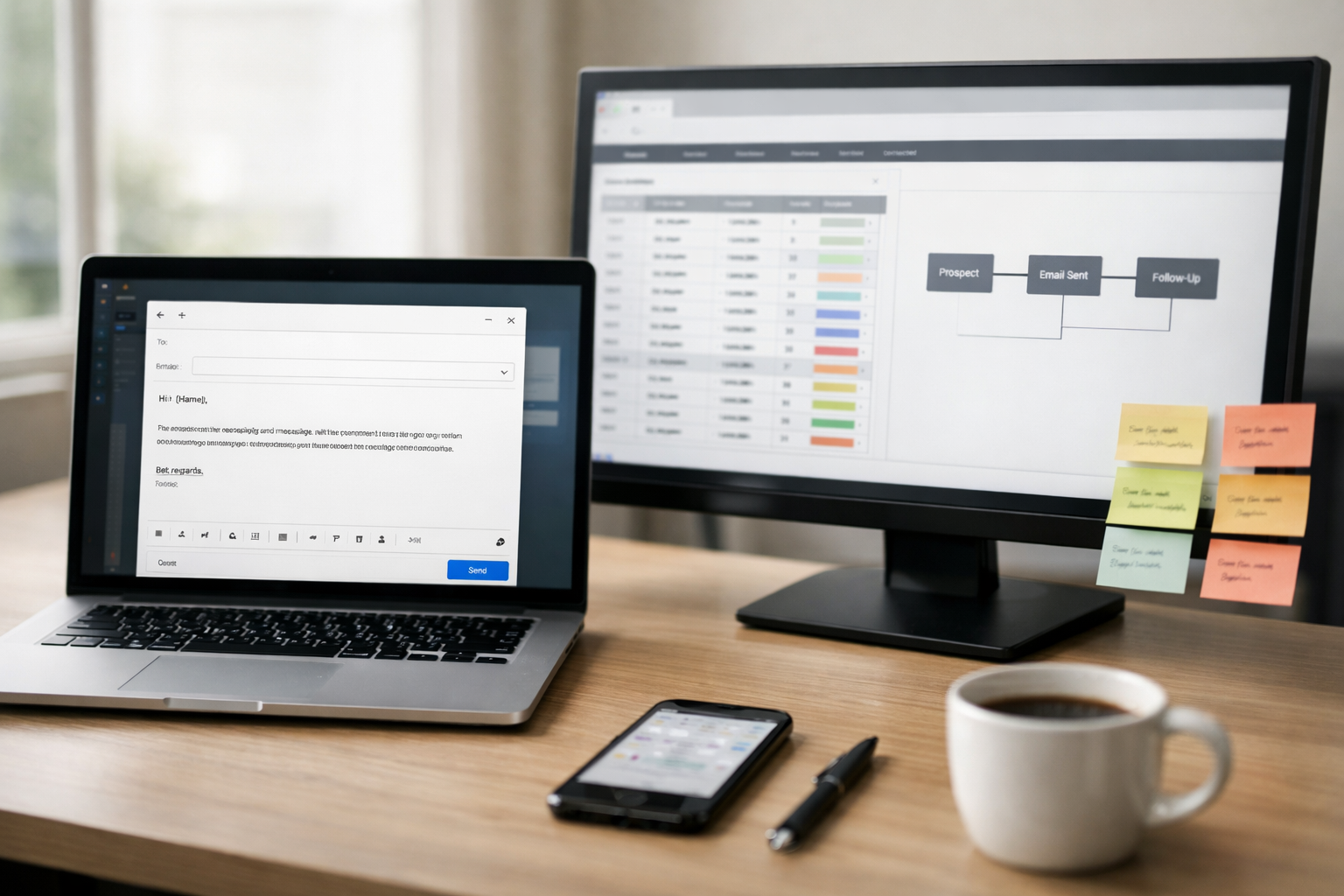

Your measurement system is only as good as your tracking taxonomy. If one person tags a reply as “Interested” and another tags it as “Maybe,” your data becomes unusable. Design a simple, enforced schema that works in either a CRM or a spreadsheet, and connect it to your sending tool through consistent campaign naming.

Start with a campaign naming convention that encodes the variables you’ll analyze later. Example: ICP-Persona | Offer | SequenceVersion | SenderDomain | Month. A concrete string might be: SaaS-RevOps | DataHygieneAudit | V3 | dom2 | 2026-03. This makes exports and pivot tables immediately actionable.
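
Enforcing the naming convention in code keeps exports parseable. A sketch; the `V<number>` and `YYYY-MM` format checks are assumptions inferred from the example string.

```python
import re

FIELDS = ("icp_persona", "offer", "version", "sender_domain", "month")


def build_campaign_name(icp_persona: str, offer: str, version: str,
                        sender_domain: str, month: str) -> str:
    """Build 'ICP-Persona | Offer | SequenceVersion | SenderDomain | Month'."""
    if not re.fullmatch(r"V\d+", version):
        raise ValueError("version must look like V3")
    if not re.fullmatch(r"\d{4}-\d{2}", month):
        raise ValueError("month must look like 2026-03")
    parts = [icp_persona, offer, version, sender_domain, month]
    if any("|" in p for p in parts):
        raise ValueError("fields cannot contain '|'")
    return " | ".join(parts)


def parse_campaign_name(name: str) -> dict:
    """Split a campaign name back into its fields for pivot tables."""
    parts = [p.strip() for p in name.split("|")]
    if len(parts) != len(FIELDS):
        raise ValueError(f"expected {len(FIELDS)} fields, got {len(parts)}")
    return dict(zip(FIELDS, parts))
```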

Then define required fields at the contact-level (who you emailed) and message-level (what they saw). Minimum viable tracking fields:

Include a “Version” field and never overwrite it. When you change the hook, proof point, or CTA, that’s a new version. Do not run two different bodies under the same “V3” label. If you do, you won’t know which change caused the result.

Practical workflow: maintain a spreadsheet “campaign ledger” even if you use a CRM. The ledger is your experimental control panel: each row is a version, with columns for hypothesis, change description, start/end dates, sample size, and results. The CRM remains the source of truth for contacts and meetings, while the ledger prevents the classic failure mode: random tweaks without traceability.
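
The "never overwrite a version" rule can be enforced by fingerprinting each version's copy. A sketch of an append-only ledger; the columns follow the ledger described above, and hashing subject plus body is one assumed way to detect a changed email.

```python
import hashlib


class CampaignLedger:
    """Append-only ledger: one row per version; a version label can never be reused
    for different copy."""

    def __init__(self):
        self.rows = {}

    @staticmethod
    def fingerprint(subject: str, body: str) -> str:
        return hashlib.sha256((subject + "\n" + body).encode("utf-8")).hexdigest()[:12]

    def register(self, version: str, subject: str, body: str,
                 hypothesis: str, start: str) -> dict:
        fp = self.fingerprint(subject, body)
        existing = self.rows.get(version)
        if existing is not None:
            if existing["fingerprint"] != fp:
                raise ValueError(f"{version} already exists with different copy; create a new version")
            return existing
        row = {"version": version, "fingerprint": fp, "hypothesis": hypothesis,
               "start": start, "end": None, "sample_size": 0, "results": None}
        self.rows[version] = row
        return row
```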

Reply classification is where AI provides immediate leverage. Human-in-the-loop is still important, but AI can triage thousands of replies into consistent categories so you can measure outcomes and mine objections. The goal is not “sentiment analysis for fun”; it’s turning inbox text into structured data that drives decisions.

Define a small set of mutually exclusive categories aligned to your funnel. A practical set is: Positive (open to meeting), Neutral (not now / ask for info / forward to someone), Objection (explicit reason to decline), Unsubscribe (opt-out request), plus operational tags like Auto-reply, Bounce, and Not the right person. Keep the primary label single-choice; you can add secondary labels for richer analysis.

Implementation approach: export replies daily (or via webhook) and run them through an AI classification prompt that outputs JSON with fields like category, intent, urgency, next_step, and objection_type. For example, “We already use Vendor X” becomes category=Objection and objection_type=ExistingSolution. “Send details” becomes category=Neutral and intent=InfoRequest.
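
The model's JSON should be validated before it reaches your tracker. A sketch that parses it and routes anything malformed to human review; the objection labels are assumed PascalCase versions of the buckets used in this chapter, and `NeedsReview` is a hypothetical fallback label.

```python
import json

CATEGORIES = {"Positive", "Neutral", "Objection", "Unsubscribe",
              "Auto-reply", "Bounce", "Not the right person"}
# Assumed labels for the objection buckets used in this chapter.
OBJECTION_TYPES = {"Timing", "ExistingSolution", "NoPriority", "Budget",
                   "Authority", "Skepticism", "Compliance"}


def review(reason: str) -> dict:
    return {"category": "NeedsReview", "reason": reason}


def parse_classification(raw: str) -> dict:
    """Validate one model response; anything malformed goes to a human instead of the tracker."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return review("invalid JSON")
    if not isinstance(data, dict) or data.get("category") not in CATEGORIES:
        return review("unknown category")
    if data["category"] == "Objection" and data.get("objection_type") not in OBJECTION_TYPES:
        return review("objection without a valid objection_type")
    return data
```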

Common mistake: collapsing “neutral” and “objection” into one bucket. You lose the difference between “busy this quarter” (timing) and “not relevant” (targeting). Capturing intent and objection type lets you improve your list, your offer, and your sequence steps with evidence instead of guesswork.

A/B testing in outbound is about isolating variables under noisy conditions. You’re dealing with small samples, changing market conditions, and deliverability variance across domains. The antidote is disciplined test design: test one variable at a time, define a success metric in advance, and run the test long enough to reduce randomness.

What to test first depends on where the funnel leaks. If opens are low (and deliverability is healthy), test subject lines. If opens are fine but replies are low, test the hook and first-sentence relevance. If replies exist but positive replies are low, test your proof and offer framing. If positive replies exist but meetings lag, test the CTA and scheduling friction.
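The decision rule above is ordered: fix the earliest leaking stage first. A small sketch of that logic, assuming you compute these stage rates (open = opened/delivered, reply = replied/delivered, positive = positive replies/replies, meeting = booked/positive replies) and set your own target for each. The target numbers in any usage are placeholders you choose, not benchmarks from this course.

```python
# Funnel stages in order, each paired with the element the text says to test.
FUNNEL = [
    ("open", "subject lines"),
    ("reply", "hook and first-sentence relevance"),
    ("positive", "proof and offer framing"),
    ("meeting", "CTA and scheduling friction"),
]


def next_test(rates: dict[str, float], targets: dict[str, float],
              deliverability_ok: bool = True) -> str:
    """Return what to test first: the element tied to the earliest stage below target."""
    for stage, element in FUNNEL:
        if rates[stage] < targets[stage]:
            # Low opens with unhealthy deliverability is an infrastructure problem, not a copy problem.
            if stage == "open" and not deliverability_ok:
                return "fix deliverability before testing copy"
            return element
    return "no stage below target"
```

Walking the stages in order keeps you from polishing a CTA when the real leak is upstream.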

Engineering judgment: treat A/B tests as versioned experiments. Document the hypothesis (“More specific proof will increase positive replies for the RevOps persona”) and set a stop rule (“run until 200 delivered per variant or 2 weeks”). Avoid simultaneous multi-variable changes. If you change subject, hook, and CTA together, you may get a lift, but you won’t know why, and you can’t replicate it.
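The example stop rule translates directly into a check you can run each day. A minimal sketch, assuming you track delivered counts per variant and positive replies as the pre-declared success metric; the function names are hypothetical. The test stops when every variant reaches the sample size or when the time limit passes, whichever comes first.

```python
from datetime import date, timedelta


def should_stop(delivered_per_variant: dict[str, int], started: date, today: date,
                min_delivered: int = 200,
                max_duration: timedelta = timedelta(weeks=2)) -> bool:
    """Stop when every variant has enough deliveries, or when the time limit is reached."""
    enough_sample = all(n >= min_delivered for n in delivered_per_variant.values())
    out_of_time = today - started >= max_duration
    return enough_sample or out_of_time


def positive_reply_rates(results: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map variant -> (positive_replies, delivered) to the success metric declared up front."""
    return {v: (pos / dlv if dlv else 0.0) for v, (pos, dlv) in results.items()}
```

Declaring the metric and the stop rule in code (or in the ledger row) before the test starts removes the temptation to stop early when one variant happens to look good.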

Every objection is product research you didn’t have to pay for. When you systematically mine objections, you learn what your market actually hears when you describe your offer. The objective is to convert qualitative replies into a ranked list of friction points you can address through targeting, positioning, proof, or process.

Use your AI classification output to aggregate objection types weekly. Typical buckets include: Timing (“not this quarter”), Existing solution (“we use X”), No priority (“not a focus”), Budget, Authority (“not my area”), Skepticism (“sounds spammy/too good to be true”), and Compliance/security. Count them by persona and segment; objections are often segment-specific.
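A weekly roll-up of that kind takes only a few lines once replies are classified. This sketch assumes each classified reply is a dict that also carries hypothetical `persona` and `segment` keys joined in from your list, and it ranks objection types within each persona and segment pair.

```python
from collections import Counter, defaultdict


def objections_by_segment(classified: list[dict]) -> dict[str, list[tuple[str, int]]]:
    """Count objection types per persona/segment and rank them, most frequent first."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for reply in classified:
        if reply.get("category") != "Objection":
            continue  # Only objections feed this view.
        key = f"{reply.get('persona', 'unknown')} / {reply.get('segment', 'unknown')}"
        counts[key][reply.get("objection_type") or "Unlabeled"] += 1
    return {key: c.most_common() for key, c in counts.items()}
```

Grouping before ranking is deliberate: a "Budget" objection that dominates one segment can disappear in an overall count.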

Then translate each top objection into a specific change in targeting, positioning, proof, or process.

Message-market fit shows up as a pattern: higher positive reply rates, fewer “not relevant” replies, and objections that shift from relevance-based (“no”) to logistics-based (“timing,” “send info,” “loop in X”). Logistics objections are often a good sign—you’re in the right neighborhood. Relevance objections mean your ICP, list, or hook needs work more than your follow-up cadence.
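You can track that shift as a single number per week: the share of objections that are logistics-based rather than relevance-based. In the sketch below, the label sets are an assumed mapping onto your own `objection_type` values (for example, "Referral" standing in for "loop in X"). Labels in neither set, such as Budget, are ignored.

```python
from typing import Optional

# Assumed label mapping; align these with the objection_type values your classifier emits.
RELEVANCE = {"NotRelevant", "NoPriority"}            # the "no" family
LOGISTICS = {"Timing", "InfoRequest", "Referral"}    # "timing", "send info", "loop in X"


def logistics_share(labels: list[str]) -> Optional[float]:
    """Fraction of relevance-or-logistics objections that are logistics; None if there are none."""
    relevance = sum(1 for label in labels if label in RELEVANCE)
    logistics = sum(1 for label in labels if label in LOGISTICS)
    total = relevance + logistics
    return logistics / total if total else None
```

A share that rises week over week for the same segment is the pattern described above; a share that stays low points back at the ICP, list, or hook.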

Common mistake: arguing with objections in long emails. The better move is to log the objection, adjust the sequence for future sends, and respond with one clarifying question or a single proof point—keeping tone respectful and compliant.

Fast improvement comes from a predictable cadence. Run your outbound program like a weekly sprint: measure, decide, change, and document. The discipline is to make few, high-quality changes rather than many reactive tweaks.

A practical weekly sprint structure:

Your dashboard should be simple enough to read in five minutes: rows as versions/segments, columns for the four core metrics plus unsubscribes and bounces. Pair it with a change log (date, version, hypothesis, diff summary, owner). This prevents the most expensive failure mode in outbound: someone “improves” the email, performance drops, and no one can trace what changed.
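If you generate the dashboard rather than maintaining it by hand, each row can be computed from raw counts. This sketch assumes the four core metrics are open rate, reply rate, positive reply rate, and meeting rate, with the denominators noted in the code; `VersionStats` and `dashboard_row` are hypothetical names. Adjust the definitions if your chapter's metrics differ.

```python
from dataclasses import dataclass


@dataclass
class VersionStats:
    version: str
    segment: str
    sent: int
    delivered: int
    opened: int
    replied: int
    positive: int
    booked: int
    unsubscribed: int
    bounced: int


def _rate(num: int, den: int) -> float:
    # Guard against empty rows (for example, a version that has not sent yet).
    return round(num / den, 3) if den else 0.0


def dashboard_row(s: VersionStats) -> dict:
    """One dashboard row: four core metrics plus unsubscribes and bounces."""
    return {
        "version": s.version,
        "segment": s.segment,
        "open_rate": _rate(s.opened, s.delivered),
        "reply_rate": _rate(s.replied, s.delivered),
        "positive_rate": _rate(s.positive, s.replied),
        "meeting_rate": _rate(s.booked, s.positive),
        "unsub_rate": _rate(s.unsubscribed, s.delivered),
        "bounce_rate": _rate(s.bounced, s.sent),
    }
```

Bounce rate uses sent rather than delivered as its denominator, because bounced messages never count as delivered.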

Treat sequences like code. Version your assets (even in a shared doc) and keep an archive of retired versions. When you find a winner, lock it and scale volume cautiously, preserving deliverability patterns. When performance dips, you’ll know whether it’s list quality, message relevance, or operational breakdown—because your system was built to learn.

1. What turns cold email from “we tried outbound” into a predictable meeting engine, according to the chapter?

2. Why does the chapter emphasize defining a tracking taxonomy before iterating?

3. What is the main reason to use AI to classify replies (positive, neutral, objection, unsubscribe)?

4. When designing an A/B test for a sequence, what principle does the chapter stress to ensure you actually learn something?

5. What mindset shift does the chapter recommend to prevent random tweaks from harming a working sequence?

You do not “win” cold email when you press send. You win when a prospect replies, a meeting gets booked, and the conversation arrives with enough context that it can convert. This chapter turns your personalization engine into a booking machine by defining follow-up logic, handling objections consistently, and reducing scheduling friction. Then we operationalize the full 30-day system with SOPs so it can run repeatedly without drifting in tone, quality, or compliance.

The core mindset shift: treat your outbound like a small product. It has a funnel (sent → delivered → opened → replied → booked → showed), an operating cadence, and clear escalation rules. Follow-ups are not “nagging.” They are an engineered sequence of helpful touches, new information, and easy next steps. Scheduling is not “send a calendar link.” It is a friction-reduction flow that pre-qualifies, sets an agenda, and prevents no-shows. SOPs are not bureaucracy. They are guardrails that allow you to scale volume while preserving the personalization that makes cold email work.

Throughout this chapter you will build: (1) a follow-up and escalation playbook that drives responses, (2) objection-handling macros powered by AI prompts, (3) a scheduling flow that makes saying “yes” effortless, (4) a handoff package that makes sales calls sharper, (5) a set of checklists and roles to run the engine weekly, and (6) a 60-day scaling plan with automation boundaries and quality controls.

Practice note for Create a follow-up and escalation playbook that drives responses: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Write objection-handling macros and AI-assisted response prompts: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Implement scheduling flows that reduce friction and no-shows: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Operationalize your 30-day engine with SOPs, templates, and roles: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Plan the next 60 days: scaling volume without losing personalization: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Most sequences fail because follow-ups are treated as afterthoughts. Your follow-up playbook should specify timing, intent, and content type for each touch. A simple and effective structure is: bump (light reminder), nudge (reframe value), and new information (fresh signal, proof, or asset). Each follow-up should feel like it advances the conversation, not like it repeats the first email louder.

Start by defining an escalation ladder. Example: Day 1 initial email; Day 3 bump; Day 6 nudge with a different angle; Day 10 new information (case study or relevant benchmark); Day 14 breakup-style close; Day 21 channel switch (LinkedIn comment/DM or voicemail) if appropriate. The exact intervals depend on your ICP’s sales cycle, but the pattern matters: early touches are short and polite; later touches add substance or change the frame.
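Writing the ladder down as data makes it easy to review and to change intervals without rewriting logic. A minimal sketch using the example days above; `Step`, `LADDER`, and `due_step` are hypothetical names, and stop conditions (replies, bounces, unsubscribes) are assumed to be checked separately before a step is sent.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Step:
    day: int
    kind: str
    note: str


# Example ladder from the text; tune the days to your ICP's sales cycle.
LADDER = [
    Step(1, "initial", "first email"),
    Step(3, "bump", "short, polite reminder"),
    Step(6, "nudge", "different angle on the value"),
    Step(10, "new_info", "case study or relevant benchmark"),
    Step(14, "breakup", "breakup-style close"),
    Step(21, "channel_switch", "LinkedIn comment/DM or voicemail, if appropriate"),
]


def due_step(day: int, completed: set[str]) -> Optional[Step]:
    """Return the earliest step due by `day` that has not been completed yet."""
    for step in LADDER:
        if step.day <= day and step.kind not in completed:
            return step
    return None
```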

Engineering judgment: avoid over-optimizing for opens. Optimize for replies from the right people. A common mistake is sending too many “checking in” emails with no added value, which trains the recipient to ignore you and can increase complaint risk. Another mistake is including heavy assets (large images, attachments) that can trigger spam filters; keep follow-ups light, with links used sparingly and consistently.

AI can help you generate “new information” touches by transforming your research notes into alternate angles. Keep a fixed structure so your voice remains consistent: opening line referencing the previous note, one new insight, one proof point, and one CTA. Your escalation rules should be documented and enforced in your sending tool (sequence steps, stop conditions on reply, and suppression rules for bounces/unsubscribes).
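Most sending tools enforce these stop and suppression rules for you. If you run part of the engine from a spreadsheet, though, the same rules are worth expressing explicitly. The sketch below assumes a hypothetical `Contact` record and a suppression list stored as lowercase email addresses.

```python
from dataclasses import dataclass


@dataclass
class Contact:
    email: str
    replied: bool = False
    bounced: bool = False
    unsubscribed: bool = False


def update_suppression(contacts: list[Contact], suppression: set[str]) -> set[str]:
    """Add bounced and unsubscribed addresses so they stay excluded from every future send."""
    return suppression | {c.email.lower() for c in contacts if c.bounced or c.unsubscribed}


def eligible_for_next_touch(contact: Contact, suppression: set[str]) -> bool:
    """Stop the sequence on any reply; never email suppressed, bounced, or unsubscribed addresses."""
    if contact.email.lower() in suppression:
        return False
    if contact.bounced or contact.unsubscribed:
        return False
    return not contact.replied
```

Keeping suppression as its own list, separate from per-campaign contact rows, means an opt-out in one campaign also protects you in the next.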

Once replies start coming in, speed and consistency matter. Objection-handling macros turn reactive inbox work into a repeatable system. Your goal is not to “win” every objection; it is to classify it quickly, respond with clarity, and move to the next step (book, disqualify, or nurture). Build response trees for the most common patterns, and make your macros editable so they never sound robotic.

Start with a simple taxonomy: Not interested, Already have a vendor, No time, Send info, Too expensive, Wrong person, Not now, and Curious. For each, define: (1) the best next question, (2) the minimum proof needed, and (3) the recommended CTA (meeting vs. async info). Example: “Already have a vendor” is often a timing and differentiation problem; the next question is “What are you optimizing for this quarter—cost, speed, or coverage?” Then you offer one differentiator and a low-friction comparison call.
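A response tree can live in a small lookup table keyed by the taxonomy labels. In this sketch, only the “Already have a vendor” branch comes from the text; the other labels are deliberately left empty, so routing returns `None` and a human writes the reply until you define a branch. `Branch`, `route`, and `missing_branches` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional

TAXONOMY = ["Not interested", "Already have a vendor", "No time", "Send info",
            "Too expensive", "Wrong person", "Not now", "Curious"]


@dataclass(frozen=True)
class Branch:
    next_question: str
    minimum_proof: str
    cta: str  # "meeting" or "async_info"


# Only this branch is taken from the text; add the others as you write them.
RESPONSE_TREE: dict[str, Branch] = {
    "Already have a vendor": Branch(
        next_question="What are you optimizing for this quarter: cost, speed, or coverage?",
        minimum_proof="one differentiator",
        cta="meeting",  # framed as a low-friction comparison call
    ),
}


def route(objection: str) -> Optional[Branch]:
    """Return the branch for a label, or None when no macro exists yet (human writes the reply)."""
    if objection not in TAXONOMY:
        raise ValueError(f"unknown objection label: {objection!r}")
    return RESPONSE_TREE.get(objection)


def missing_branches() -> list[str]:
    """Taxonomy labels that still have no branch defined."""
    return [label for label in TAXONOMY if label not in RESPONSE_TREE]
```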

AI-assisted response prompts should be constrained to your brand voice and offer structure rather than creativity. Use a template prompt that includes: the original outbound email, the prospect’s reply, your product constraints (what you can/can’t promise), and the desired outcome (book/disqualify/nurture). Instruct the model to produce two versions: a “short” reply (under 80 words) and a “meeting-forward” reply (with two time options). Then you select and lightly edit.
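A fixed template keeps every drafted reply anchored to the same four inputs. The prompt wording below is a hypothetical starting point, not a tested prompt. The word-count check is a cheap guardrail on the “short” version before you edit it. Any constraint text you pass in is your own; none is supplied here.

```python
PROMPT_TEMPLATE = """You are drafting a reply on behalf of our sales team.
Follow our brand voice and offer structure. Do not invent facts or promises.

Original outbound email:
{outbound}

Prospect's reply:
{reply}

Product constraints (what we can and cannot promise):
{constraints}

Desired outcome: {outcome}

Write two versions:
1. SHORT: under 80 words.
2. MEETING-FORWARD: propose exactly two specific time options.
"""

OUTCOMES = {"book", "disqualify", "nurture"}


def build_reply_prompt(outbound: str, reply: str, constraints: list[str], outcome: str) -> str:
    if outcome not in OUTCOMES:
        raise ValueError(f"outcome must be one of {sorted(OUTCOMES)}")
    return PROMPT_TEMPLATE.format(
        outbound=outbound.strip(),
        reply=reply.strip(),
        constraints="\n".join(f"- {c}" for c in constraints),
        outcome=outcome,
    )


def short_reply_ok(text: str, limit: int = 80) -> bool:
    """True when the draft stays under the word limit."""
    return len(text.split()) < limit
```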

Common mistakes: replying with long paragraphs that feel like a pitch deck; asking multiple questions at once; and pushing for a meeting when the reply clearly indicates a mismatch. Your response trees should include exit paths (e.g., “Understood—should I reach back out next quarter?”) so you preserve deliverability and brand trust. Over time, track which objection macros lead to booked meetings and refine the tree based on outcomes, not opinions.
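Tracking outcomes per macro needs only two counts: how often it was used and how often it led to a booking. In this sketch, the event format and the `min_uses` threshold are assumptions. Macros with too few uses are left out, so one lucky booking does not crown a winner.

```python
from collections import defaultdict


def macro_booking_rates(events: list[tuple[str, bool]], min_uses: int = 10) -> list[tuple[str, float]]:
    """events: (macro_id, booked) pairs. Returns (macro_id, booking_rate), best first."""
    uses: dict[str, int] = defaultdict(int)
    booked: dict[str, int] = defaultdict(int)
    for macro_id, was_booked in events:
        uses[macro_id] += 1
        booked[macro_id] += int(was_booked)
    rates = [(m, booked[m] / uses[m]) for m in uses if uses[m] >= min_uses]
    return sorted(rates, key=lambda kv: kv[1], reverse=True)
```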

Scheduling is a conversion step. Treat it like checkout: reduce friction, make choices easy, and confirm the value of moving forward. The simplest high-performing flow is: (1) confirm relevance, (2) propose a specific format and duration, (3) provide scheduling options, and (4) set a clear agenda so the prospect knows what they will get.

Calendar links are useful, but not always best as the only option. Many executives prefer you propose two specific times. A practical pattern is: “I can do Tue 11:00 or Wed 2:30 ET—if easier, here’s my calendar link.” This gives control without forcing a click. If you rely on a calendar link, ensure it respects time zones, avoids overly large availability (which looks low-demand), and includes buffers to prevent back-to-back meetings.
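Choosing the two times to propose is a small scheduling problem: skip any slot that would sit inside the buffer around an existing meeting. A minimal sketch under these assumptions: all datetimes are naive and expressed in one time zone (labeled ET here), the durations and buffers are placeholders, and the function names are hypothetical. If the prospect is in another zone, convert with the standard-library `zoneinfo` module before formatting.

```python
from datetime import datetime, timedelta


def propose_two_times(busy: list[tuple[datetime, datetime]], candidates: list[datetime],
                      duration: timedelta = timedelta(minutes=30),
                      buffer: timedelta = timedelta(minutes=15)) -> list[datetime]:
    """Pick the first two candidate starts whose buffered window avoids every busy block."""
    picks: list[datetime] = []
    for start in sorted(candidates):
        end = start + duration
        # Clash if [start - buffer, end + buffer] overlaps any existing meeting.
        clashes = any(start < b_end + buffer and end + buffer > b_start
                      for b_start, b_end in busy)
        if not clashes:
            picks.append(start)
        if len(picks) == 2:
            break
    return picks


def format_offer(slots: list[datetime], tz_label: str = "ET", calendar_url: str = "") -> str:
    """Render slots as, e.g., 'I can do Tue 11:00 or Wed 2:30 ET.'"""
    times = " or ".join(f"{s:%a} {s.hour % 12 or 12}:{s:%M}" for s in slots)
    text = f"I can do {times} {tz_label}"
    if calendar_url:
        text += f", or if easier, here's my calendar link: {calendar_url}"
    return text + "."
```

Usage: with a meeting booked Tuesday 10:00 to 10:45, a 10:30 candidate is skipped and 11:00 is offered, which produces the same shape of message as the example in the text.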

Operational detail: standardize your event type descriptions so every invite reinforces positioning. Include a short “what we’ll cover” and a one-line credibility marker (e.g., “We’ll share what we’re seeing across teams like X”). Add meeting logistics: video link, dial-in, and what you need from them (optional). If you serve regulated industries, ensure the invite language stays compliant and avoids over-claiming.

Common mistakes: asking for a meeting before confirming the prospect is the right persona; sending a calendar link without context; and making the first call too long. Your booking goal is to create momentum. A tight, well-framed first meeting increases show rates and reduces the chance that the prospect treats it as a low-priority “maybe.”

A booked meeting is only valuable if the salesperson arrives informed. Cold email systems often break here: the SDR/marketer books a call, but the AE has no context, asks basic questions, and the prospect feels like they are starting over. Your handoff SOP should package the “why this meeting exists” in a one-page brief that is attached to the CRM record and the calendar invite (or sent internally).
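A one-page brief is easiest to keep consistent when it is generated from the same fields every time. The fields below are a hypothetical selection drawn from data this system already collects (persona, the reply, objections, sequence version); they are not a prescribed schema, so replace them with whatever your handoff SOP specifies. The rendered text can be pasted into the CRM record or the internal invite notes.

```python
from dataclasses import dataclass, field


@dataclass
class HandoffBrief:
    company: str
    contact: str
    persona: str
    why_now: str         # the signal or trigger that made this account a fit
    what_they_said: str  # the prospect's reply, quoted or summarized
    objections: list[str] = field(default_factory=list)
    agenda: list[str] = field(default_factory=list)
    sequence_version: str = ""

    def render(self) -> str:
        lines = [
            f"Meeting brief: {self.contact} ({self.persona}) at {self.company}",
            f"Why this meeting exists: {self.why_now}",
            f"What they said: {self.what_they_said}",
            f"Objections raised: {', '.join(self.objections) or 'none'}",
            "Agenda:",
            *[f"  - {item}" for item in self.agenda],
            f"Sequence version: {self.sequence_version or 'n/a'}",
        ]
        return "\n".join(lines)
```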