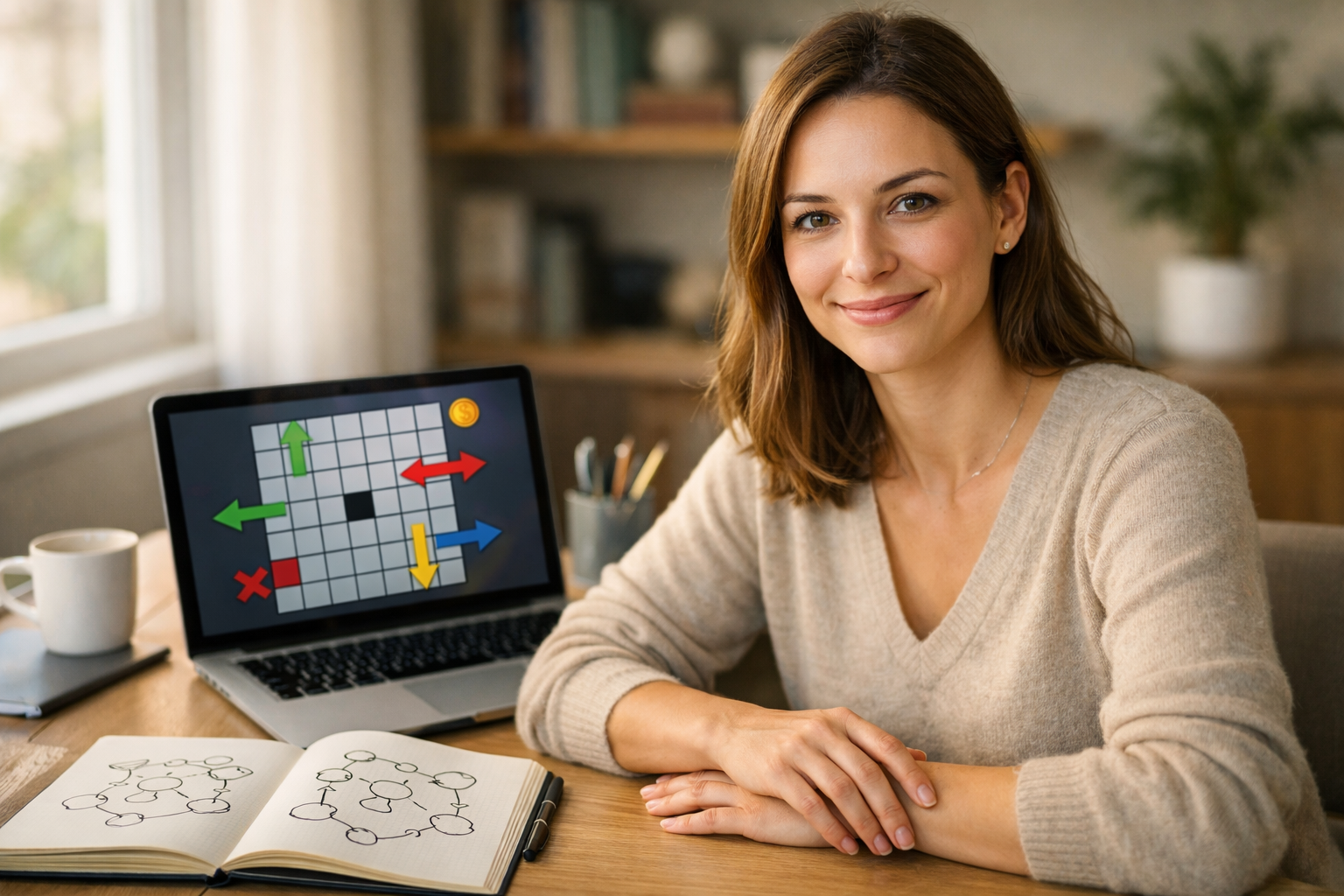

Reinforcement Learning — Beginner

Learn RL from zero and build a simple reward-based agent

This beginner course is designed as a short, clear technical book that teaches reinforcement learning in the simplest possible way. If you have heard that AI can learn by getting rewards and penalties, but you do not know where to begin, this course will guide you step by step. You do not need a background in coding, math, machine learning, or data science. Everything is introduced from first principles using plain language and small examples.

The main goal of this course is simple: help you build your first reward-based agent. Along the way, you will understand what an agent is, what an environment is, why rewards matter, and how a machine can slowly improve through trial and error. Instead of throwing complex formulas at you, the course explains the core ideas in a practical, beginner-friendly way.

Many reinforcement learning courses jump too quickly into advanced terms, libraries, and theory. This course takes the opposite approach. It starts with everyday examples, then moves into a tiny grid world project that you can understand fully. By keeping the problem small, you can focus on the big ideas without feeling lost.

The six chapters are arranged like a short technical book with a clear learning path. First, you discover what reinforcement learning really means and how it differs from other types of AI. Next, you learn how to describe a simple world with rules, goals, and rewards. Then you set up a beginner-friendly Python workspace and write just enough code to represent a tiny environment.

Once that foundation is ready, the course shows how a basic agent learns from rewards. You will build a simple action-value table, understand the logic behind Q-learning, and update the agent step by step. After that, you will connect everything together, train your first reward-based agent over many episodes, and observe how it improves. Finally, you will test the agent, troubleshoot common mistakes, and explore easy ways to expand the project.

By the end of the course, you will have more than just definitions. You will have a mental model of reinforcement learning and a working beginner project you can explain. This makes the subject feel less abstract and gives you a practical base for future study.

This course is for curious beginners, students, career changers, and professionals who want a gentle introduction to reinforcement learning. It is also a good fit if you have tried other AI content and found it too advanced or too abstract. If you want a clear first win in AI, this course is built for you.

Reinforcement learning can seem intimidating at first, but it becomes much easier when you learn it through a small, complete project. This course gives you that first practical step. By the final chapter, you will not just know what a reward-based agent is; you will have built one yourself.

Senior Machine Learning Engineer

Sofia Chen is a machine learning engineer who designs beginner-friendly AI learning programs and practical training labs. She has helped new learners move from zero technical background to building simple AI projects with confidence.

Reinforcement learning, often shortened to RL, is one of the most intuitive ideas in artificial intelligence once you strip away the jargon. At its core, it is about learning from consequences. An agent tries something, the world responds, and the agent uses that response to choose better actions next time. That sounds technical, but it is also deeply familiar. People learn this way when they practice a sport, train a pet, play a video game, or figure out the fastest route home. They do not receive a giant answer key before they begin. Instead, they act, observe results, and gradually improve.

This chapter gives you a clear mental model for what reinforcement learning really is before you write any serious code. We will separate RL from other common AI approaches, define the basic parts of the learning setup, and map the loop that repeats again and again while an agent learns. You will meet the key ideas you will use throughout the course: agent, environment, state, action, reward, goal, episode, and feedback. By the end of the chapter, you should be able to explain RL in plain everyday language and recognize RL patterns in ordinary life.

Just as importantly, we will keep an engineering mindset from the very beginning. In practice, building a reward-based agent is not just about knowing definitions. It is about making sensible choices. What counts as success? What feedback should the agent receive? When should it try something new instead of repeating the best option it already knows? Beginners often think RL is magic because the examples can look impressive. In reality, good RL systems come from careful design, simple loops, and clear rewards.

You will also start preparing for the practical work ahead. In later chapters you will set up a beginner-friendly Python workspace and build a tiny reward-based agent step by step. For now, focus on understanding the structure of the problem. Once that structure is clear, the code becomes much easier to read and write.

Keep one sentence in mind as you read: reinforcement learning is learning what to do by trying actions and measuring outcomes. Everything else in the chapter builds on that simple idea.

Practice note for each lesson in this chapter (seeing how reward-based learning differs from other AI types; understanding the agent, environment, and goal; recognizing states, actions, and rewards in daily life; and mapping a simple learning loop from start to finish): document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Many beginners hear the term artificial intelligence and imagine one giant field where every system works the same way. That is not how it works in practice. Different AI methods solve different kinds of problems. A useful first step is to contrast reinforcement learning with two other common patterns: supervised learning and unsupervised learning.

In supervised learning, a model learns from labeled examples. You show it inputs and correct answers. For example, you might train a system to recognize spam emails by giving it many emails already marked as spam or not spam. The model learns the pattern that connects each input to its label so that it can predict labels for new inputs. In unsupervised learning, there may be no correct answer labels at all. The system tries to find structure, such as grouping similar customers or reducing the complexity of data.

Reinforcement learning is different because the agent is not usually handed the right action for every situation. Instead, it acts first and receives feedback afterward. That feedback may be immediate, delayed, noisy, or incomplete. The agent must discover which actions lead to good outcomes over time. This makes RL feel more like practice than memorization. It is less like solving a worksheet with answers at the back and more like learning to ride a bicycle by wobbling, adjusting, and improving.

In plain language, RL is reward-based learning. If an action helps the agent move toward its goal, it receives a positive signal. If it does something unhelpful, it may receive no reward or a negative one. Over repeated attempts, the agent learns a policy, which is just a rule for what to do in different situations.
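
To make the word "policy" concrete, here is a minimal sketch that treats a policy as a plain lookup from situation to action. The state and action names are invented for illustration and are not part of the course project.

```python
# A policy maps each situation (state) to the action the agent will take.
# These state and action names are hypothetical, chosen only for illustration.
policy = {
    "hungry_with_time": "cook_at_home",
    "hungry_in_a_rush": "order_delivery",
    "not_hungry": "keep_working",
}

def choose_action(state):
    """Look up the action the policy prescribes for the given state."""
    return policy[state]

print(choose_action("hungry_in_a_rush"))  # order_delivery
```

A learning agent does not start with a filled-in lookup like this one. It builds and revises it from the rewards it receives.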

A common beginner mistake is to think reward means money, points, or praise in a human sense. In RL, reward is simply a number that represents whether the outcome was better or worse according to the task design. Another common mistake is assuming the agent understands the goal the way a person does. It does not. It only follows the reward structure you define. That is why engineering judgment matters. If the reward is poorly designed, the agent may learn strange behavior that technically earns reward but does not solve the real problem you care about.

So when you hear reinforcement learning, think: an AI system learns by trial, feedback, and improvement, not by being shown the correct move every time.

Trial and error is the heartbeat of reinforcement learning. The agent starts with limited knowledge. It tries an action, sees what happens, and updates its future choices. Then it repeats. This loop may look almost too simple, but it is the foundation of many powerful systems.

Think about a child learning which door in a hallway leads outside, or a person testing different shortcuts through a city. At first, there is uncertainty. Some choices work well, some waste time, and some may even make the situation worse. Over repeated attempts, better decisions emerge because past outcomes leave a trace. Reinforcement learning formalizes this process so that a computer can do it systematically.

The key challenge is that actions can have delayed effects. Suppose a game gives no reward for the first several moves, but a smart early move later leads to winning. The agent must learn that the early move was valuable even though the reward arrived later. This is one reason RL can be more subtle than it first appears. The agent is not only reacting to the last action. It is learning patterns across sequences of decisions.

There is also a practical tension between exploration and exploitation. Exploration means trying actions that might be useful but are not yet proven. Exploitation means choosing the action that currently seems best. If an agent only exploits, it may get stuck with a decent option and never discover a better one. If it only explores, it keeps experimenting and never settles on a strong strategy. Good RL systems balance both.
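
One common way to balance the two is a rule often called epsilon-greedy. The text above does not prescribe a specific rule, so treat the following as one possible sketch; the function name, the `epsilon` parameter, and the example values are assumptions made for this illustration.

```python
import random

def epsilon_greedy(values, epsilon, rng=random):
    """Pick an action from `values`, a dict of action -> estimated value.

    With probability `epsilon` the agent explores (uniform random action).
    Otherwise it exploits (the action with the highest current estimate).
    """
    if rng.random() < epsilon:
        return rng.choice(list(values))
    return max(values, key=values.get)

# Hypothetical estimates the agent has built up so far.
estimates = {"left": 0.2, "right": 0.7}

print(epsilon_greedy(estimates, epsilon=0.0))  # never explores, so: right
print(epsilon_greedy(estimates, epsilon=0.1))  # usually right, occasionally random
```

Setting `epsilon` to 0 gives pure exploitation and setting it to 1 gives pure exploration. Small values in between are a typical starting point.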

For beginners, a useful engineering instinct is to start with very small environments where trial and error is easy to observe. If the setup is too complex, it becomes hard to tell whether the agent is truly learning or just behaving randomly. Small examples make the learning loop visible. You can inspect each step, watch rewards change, and verify that your code matches your concept.

Another common mistake is expecting fast perfection. Early learning often looks messy. Agents make poor choices before they improve. That is normal. In RL, messy early behavior is not necessarily failure. It is part of the process of gathering information.

In the chapters ahead, when you build a tiny reward-based agent step by step, this trial-and-error loop will be the main engine. The code will simply automate what this section describes in words.

Every reinforcement learning problem has two central parts: the agent and the environment. The agent is the learner or decision-maker. The environment is everything the agent interacts with. When the agent acts, the environment changes or responds. Then the agent observes the result and chooses again.

This simple split is powerful because it helps you frame almost any RL problem. In a game, the agent could be the player controlled by the algorithm, while the environment includes the game board, rules, and other elements. In a robot problem, the agent is the controller and the environment includes the room, objects, and physical forces. In a recommendation setting, the agent may choose which item to show, while the environment includes the user’s response.

Beginners sometimes blur the line between agent and environment, which leads to confusion. A practical rule is this: the agent chooses actions; the environment returns consequences. The environment may also include randomness. The same action does not always produce exactly the same result. That is realistic, and RL methods are built to handle uncertainty.

It is also useful to distinguish the goal from the world itself. The goal is not a physical object. It is the target outcome encoded through rewards. For example, in a navigation task, the environment is the map, walls, and movement rules. The goal might be reaching the exit quickly. If you change the rewards, you can change the goal without changing the physical world.

From an engineering perspective, defining the environment clearly is one of the first important design tasks. What information can the agent observe? What actions are available? When does the environment reset? These choices strongly affect whether learning is easy, hard, or impossible. If the environment hides critical information, the agent may struggle. If the action choices are too broad too early, the problem may be needlessly difficult.

This is why small teaching environments are so valuable. They let you isolate the core parts of the interaction. In this course, you will soon use a simple Python workspace to create tiny experiments where the agent and environment are easy to inspect. That setup is beginner-friendly on purpose. It allows you to see the decision loop directly instead of getting lost in software complexity.

Whenever you study a new RL example, ask two practical questions first: who is making the choice, and what world is responding? That habit keeps the whole problem grounded.

Once you understand agent and environment, the next step is to name the three pieces that drive the learning loop: states, actions, and rewards. These are the core vocabulary words of reinforcement learning.

A state is the situation the agent is currently in. It is the information used to decide what to do next. In a board game, the state might be the current arrangement of pieces. In a traffic example, it could include your location and the traffic level on nearby roads. In everyday life, a state might be as simple as being hungry at noon with limited time for lunch.

An action is a choice the agent can make. In a game, move left or move right. In a recommendation system, show item A or item B. In daily life, cook at home, order delivery, or walk to a nearby cafe. RL becomes practical when actions are clear and limited enough to test and compare.

A reward is the feedback signal after an action. It tells the agent whether the result was better or worse relative to the goal. If a robot reaches a charging station, reward may be positive. If it crashes into a wall, reward may be negative. In a delivery task, arriving quickly may give higher reward than arriving late. Reward is not the same thing as emotion or intention. It is a design signal.

To recognize these ideas in ordinary life, consider studying habits. The state could be your current energy level and time of day. The actions might be review notes, watch a lesson, or take a short break. The reward might reflect whether you completed a useful study block and retained the material. Or think about choosing a checkout line at a grocery store. The state includes line lengths, basket sizes, and cashier speed. The action is which line you join. The reward is how quickly you finish.

Engineering judgment matters here because the agent can only learn from the signals you provide. If states leave out important information, the agent may not distinguish good situations from bad ones. If actions are vague, the agent has nothing concrete to learn. If rewards are inconsistent, learning becomes unstable. A classic beginner mistake is giving reward only at the very end and nothing in between, which can make the task hard to learn because the agent gets too little guidance. Another mistake is rewarding the wrong shortcut behavior by accident.

These definitions are simple, but they are the practical building blocks for everything that follows. When we later use a simple table to help an agent choose better actions, that table will organize expected value by state and action. So if you understand these three pieces well, you are already preparing for the first real implementation.

Reinforcement learning does not happen in one giant uninterrupted blur. It is usually organized into episodes. An episode is one full run of interaction from a starting point to some stopping condition. In a maze, one episode may start at the entrance and end when the agent reaches the exit or runs out of steps. In a game, one episode may be one complete match. Episodes make learning measurable because you can compare performance across many attempts.

The goal of the agent is to maximize total reward over time, not just grab the biggest immediate reward at the current step. This distinction is crucial. Sometimes a small short-term loss leads to a larger long-term gain. For example, taking a slightly longer route now may avoid traffic later. In RL, the best action is often the one that improves the overall outcome of the episode, not just the next moment.
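
The route example can be checked with simple arithmetic. In the sketch below, the per-step rewards are made-up numbers: the short route looks better on the first step but ends in heavy traffic, while the long route costs more at first and less overall.

```python
# Hypothetical per-step rewards for two routes home (numbers invented for illustration).
short_route = [-1, -1, -8]     # cheap first steps, then heavy traffic
long_route = [-2, -2, -1, -1]  # costlier first steps, no traffic later

print(short_route[0] > long_route[0])  # True: short route wins on the first step
print(sum(short_route))                # -10
print(sum(long_route))                 # -6: long route wins on total reward
```

An agent that only compared first-step rewards would pick the short route. An agent that compares totals picks the long one.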

Feedback can be immediate or delayed, dense or sparse. Dense feedback means the agent gets frequent signals, such as small positive or negative rewards at many steps. Sparse feedback means reward appears rarely, perhaps only at success or failure. Dense feedback can make learning easier because the agent gets more guidance. Sparse feedback may be more realistic but often requires more careful design and more learning time.

One practical workflow is to start with clearer feedback than you might use in a final system. In a teaching example, you may reward progress toward the goal so that the agent has a stronger signal. Later, once the basic loop works, you can simplify or toughen the reward structure. This is a sensible engineering move, not a shortcut. It helps you confirm that the agent and environment are connected correctly before tackling harder reward setups.

Common beginner mistakes in this area include ending episodes too early, making the goal ambiguous, or mixing up reward with scorekeeping that does not match the real objective. If your real goal is efficiency but your reward favors taking many unnecessary actions, the agent will optimize the wrong thing. RL systems do what the reward encourages, not what the designer vaguely intended.

So think of an episode as one learning attempt, the goal as the pattern of outcomes you want, and feedback as the signals that shape the agent’s behavior. Once those three are aligned, the learning loop becomes much easier to reason about and debug.

Let us end the chapter with a tiny mental model you can carry into the coding chapters. Imagine an agent in a one-room world with two buttons: blue and green. The environment responds in a simple way. Pressing blue usually gives a reward of 1. Pressing green usually gives a reward of 0. The agent does not know this at the start. It must learn by trying both.

The state is very simple: there is only one situation, standing in front of the two buttons. The actions are press blue or press green. The reward is the score returned by the environment after the press. One episode could be a fixed number of button presses, such as ten attempts. The goal is to maximize total reward during those attempts.

At first, the agent should explore. If it presses only one button forever without trying the other, it cannot learn which is better. After enough experience, it should exploit more often by choosing the button that has produced higher reward on average. This is the simplest form of the exploration versus exploitation trade-off. It is not abstract at all. It is the practical question of whether to test uncertain options or use the best-known option.

Now imagine storing what the agent has learned in a tiny table. The table records an estimate for each action in the current state. If blue has earned more reward across experience, its table value rises. If green performs poorly, its value stays low. The agent can then consult the table before acting. This is the beginning of value-based reinforcement learning, and it is exactly the kind of beginner-friendly idea you will implement later in Python.

Notice how complete the learning loop already is: observe state, choose action, receive reward, update knowledge, repeat. That is the full skeleton of reinforcement learning. More advanced systems add richer states, larger action spaces, and smarter update rules, but the basic pattern remains the same.
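
The two-button world is small enough to sketch in a few lines. This is an illustrative version, not the course's own implementation: the payout probabilities (0.9 and 0.1), the exploration rate, the number of presses, and the running-average update are all assumptions chosen to match the word "usually" in the description.

```python
import random

def press(button, rng):
    """Hypothetical environment: blue usually pays 1, green usually pays 0."""
    p_reward = 0.9 if button == "blue" else 0.1  # assumed probabilities
    return 1 if rng.random() < p_reward else 0

def run(presses=500, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    values = {"blue": 0.0, "green": 0.0}  # the tiny table: one estimate per action
    counts = {"blue": 0, "green": 0}
    for _ in range(presses):
        # Choose action: explore with probability epsilon, otherwise exploit.
        if rng.random() < epsilon:
            action = rng.choice(["blue", "green"])
        else:
            action = max(values, key=values.get)
        # Receive reward from the environment.
        reward = press(action, rng)
        # Update knowledge: keep a running average of the rewards seen per action.
        counts[action] += 1
        values[action] += (reward - values[action]) / counts[action]
    return values

values = run()
print(values)
```

After enough presses, the estimate for blue should settle well above the estimate for green, which is what lets the agent exploit blue most of the time.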

This tiny example may look almost trivial, but that is exactly why it is useful. Good engineering starts with simple cases you can understand fully. In the next steps of this course, you will move from this conceptual model into a real beginner-friendly Python workspace and build a small agent that updates a table and improves through experience. If this chapter is clear, the code will feel like a direct translation of ideas you already understand.

1. What is the core idea of reinforcement learning in this chapter?

2. In reinforcement learning, what best describes the agent?

3. Which example best matches reinforcement learning as described in the chapter?

4. Why does the chapter emphasize careful design in building a reward agent?

5. What is the basic learning loop in reinforcement learning?

Before an agent can learn, it needs a world to live in. In reinforcement learning, that world is called an environment. An environment is not just a picture or a game board. It is a set of rules that explains what the agent can observe, what actions it can take, what happens next, and what reward it receives. If the environment is vague, the learning problem becomes vague too. If the environment is clear, the agent has a fair chance to improve.

For beginners, the best environment is a small one. A tiny grid world is ideal because it is easy to imagine, easy to code, and easy to debug. You do not need advanced math to understand it. Think of a robot standing on a set of floor tiles. Each tile is a position. From one tile, the robot may move up, down, left, or right. Some tiles are safe. Some are blocked. One tile is the goal. The agent does not need a story to learn, but a story helps us reason about the design.

This chapter prepares the exact problem your first reward-based agent will solve. We will define a simple environment as a set of rules, build the idea of a grid world, describe wins, losses, and step penalties, and make sure the task is written clearly enough to implement in Python later. This may sound simple, but this step is where good reinforcement learning projects begin. Most beginner confusion does not come from the learning algorithm. It comes from poorly defined rules.

When you design an environment, you are making engineering choices. You decide what counts as progress, what counts as failure, and when the agent gets feedback. These choices shape what the agent learns. For example, if every move gives zero reward and only the final goal gives a positive reward, the agent may learn slowly because feedback is rare. If every move has a small penalty, the agent has a reason to reach the goal efficiently. That is a practical design choice, not abstract theory.

By the end of this chapter, you should be able to describe your first environment in plain language: where the agent starts, what actions exist, what the goal is, what the dangerous or blocked places are, what rewards are given, and when an episode ends. That description becomes the foundation for the code you will write next.

In short, Chapter 2 is about building the stage before the actor starts learning. A strong environment design makes everything that follows easier: the code, the action-value table, the exploration choices, and the interpretation of results. Let us now break that world into concrete parts.

Practice note for each lesson in this chapter (describing a small environment as a set of simple rules; building a grid world idea without advanced math; understanding wins, losses, and step penalties; and preparing the problem your first agent will solve): document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

A simple environment is a small, rule-based world in which an agent can act and receive feedback. The key word is simple. As a beginner, you do not want randomness everywhere, dozens of actions, or complicated reward formulas. You want an environment that is small enough to understand fully. That way, when the agent behaves strangely, you can tell whether the problem is in the rules, the rewards, or the learning code.

The easiest way to think about environment design is to answer four questions. First, what can the agent see? Second, what can the agent do? Third, what happens after each action? Fourth, what reward does it get? If you can answer these clearly, your environment is already taking shape. In our beginner setting, the agent sees its location on a small grid. It can choose one of four actions: up, down, left, or right. The environment updates the location if the move is allowed. Then it returns a reward based on what happened.

Good engineering judgment means reducing unnecessary complexity. For a first project, avoid diagonal movement, avoid hidden state, and avoid too many special exceptions. You are not trying to build the most realistic world. You are trying to build the clearest world. A clear environment helps you learn the main reinforcement learning ideas: states, actions, rewards, transitions, and goals.

A common beginner mistake is designing an environment that sounds fun but is hard to test. For example, a maze with moving enemies, random teleporting, and changing rewards may sound exciting, but it hides the core lesson. A 4x4 or 5x5 grid with one start cell, one goal cell, and a few blocked cells is enough. You can draw it on paper, reason about every possible move, and later represent it with simple Python data structures.

Practical outcome: after this section, you should be able to describe your environment in one paragraph. If you cannot explain it plainly, the agent will not learn from it plainly either.

A grid world turns the environment into a set of cells arranged in rows and columns. Each cell represents a possible state, or at least part of the state. If the agent is standing in row 2, column 3, that position is the current state. This is one of the cleanest ways to introduce reinforcement learning because you can literally point to where the agent is and where it wants to go.

Movement rules should be predictable. In the basic version, the agent can move up, down, left, or right. If it tries to leave the grid, the move fails and the agent stays where it is. If it tries to move into an obstacle, the move also fails. These “stay in place” rules are useful because they keep the world consistent. The agent learns that some actions are ineffective in some cells.

You do not need advanced math to work with a grid. In code, a position can simply be a pair like (row, col). An action can be a short string such as "up" or "left". The transition rule is just a function that takes the current position and action, then returns the next position. That is the heart of the environment.
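
As a minimal sketch of that transition function, assuming a 4x4 grid and two obstacle cells whose coordinates are picked only for illustration:

```python
# Assumed layout: a 4x4 grid with two obstacle cells (coordinates are illustrative).
N_ROWS, N_COLS = 4, 4
OBSTACLES = {(1, 1), (2, 3)}
MOVES = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}

def next_position(position, action):
    """Return the cell reached by taking `action` from `position`.

    Moves that would leave the grid or enter an obstacle fail,
    and the agent stays where it is.
    """
    row, col = position
    d_row, d_col = MOVES[action]
    candidate = (row + d_row, col + d_col)
    inside = 0 <= candidate[0] < N_ROWS and 0 <= candidate[1] < N_COLS
    if not inside or candidate in OBSTACLES:
        return position
    return candidate

print(next_position((0, 0), "up"))     # (0, 0): off the grid, so stay
print(next_position((0, 0), "right"))  # (0, 1)
print(next_position((1, 0), "right"))  # (1, 0): obstacle at (1, 1), so stay
```

Row 0 is treated as the top of the grid here, so "up" decreases the row index. That convention is a choice, and any consistent one works.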

A practical workflow is to draw the grid before coding it. Label the start cell, goal cell, and obstacles. Then test a few examples by hand. If the agent is at the top row and moves up, what should happen? If it is next to a wall and moves into it, what should happen? Writing down these examples helps catch rule gaps early.

Common mistake: beginners sometimes mix up the visual map and the movement logic. A grid picture is not enough. You must define what each action does from each location. Practical outcome: by building the grid world idea now, you prepare a clean set of states and actions for the agent to learn from later.

Once the grid exists, the next step is to give the task meaning. The simplest way is to define a start cell, a goal cell, and a few obstacles. The start is where each episode begins. The goal is the desired destination. Obstacles are cells the agent cannot enter. This creates a problem worth solving: find a path from start to goal while avoiding blocked spaces.

The start location should be fixed at first. A fixed start makes debugging easier because you can compare runs more clearly. Later, you can randomize the start to make the problem richer. The goal should also be obvious and reachable. If the goal is impossible to reach because obstacles block every path, the agent receives no useful success signal. That is not a learning challenge. It is a broken task.
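
Before training anything, it can help to confirm that the goal is actually reachable. One possible sanity check is a breadth-first search over the free cells. The function name and the example layouts below are hypothetical, and this check is a suggestion rather than a step the course requires.

```python
from collections import deque

def goal_is_reachable(n_rows, n_cols, start, goal, obstacles):
    """Breadth-first search over free cells using up/down/left/right moves."""
    frontier = deque([start])
    seen = {start}
    while frontier:
        row, col = frontier.popleft()
        if (row, col) == goal:
            return True
        for d_row, d_col in ((-1, 0), (1, 0), (0, -1), (0, 1)):
            cell = (row + d_row, col + d_col)
            inside = 0 <= cell[0] < n_rows and 0 <= cell[1] < n_cols
            if inside and cell not in obstacles and cell not in seen:
                seen.add(cell)
                frontier.append(cell)
    return False

# A solvable layout: the agent can walk around the obstacles.
print(goal_is_reachable(4, 4, (0, 0), (3, 3), {(1, 1), (2, 3)}))  # True
# A broken layout: both neighbors of the goal are blocked.
print(goal_is_reachable(4, 4, (0, 0), (3, 3), {(2, 3), (3, 2)}))  # False
```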

Obstacles matter because they force decisions. Without obstacles, the shortest route to the goal may be too obvious, and the environment teaches very little. With a few carefully placed blocked cells, the agent must try actions, discover dead ends, and find a better route. This is where reinforcement learning starts to feel purposeful.

Use engineering judgment here. Too many obstacles make the world frustrating and hard to solve. Too few make it trivial. For a first environment, choose only enough obstacles to create at least two possible paths, one better than the other. That makes rewards and policy improvement easier to observe later.

A common mistake is treating obstacles and failure states as the same thing. They are different. An obstacle usually means “you cannot move there.” A failure state means “you moved into a bad cell and the episode may end.” Keeping these ideas separate makes the rules cleaner. Practical outcome: your first agent now has a concrete job, not just movement for its own sake.

Rewards are the teaching signal of reinforcement learning. They tell the agent what outcomes are good and what outcomes are bad. In a beginner grid world, rewards do not need to be complicated. A common setup is: a positive reward for reaching the goal, a negative reward for stepping into a losing state if you have one, and a small step penalty for each move. This combination encourages success, discourages failure, and pushes the agent to solve the task efficiently.

The step penalty is especially important. If every normal move gives zero reward, the agent may wander without pressure to improve. A small penalty such as -1 per step gives the agent a reason to reach the goal in fewer moves. This is one of the most practical ideas in environment design. You are shaping behavior with simple feedback.

Wins, losses, and penalties should match the real task you want the agent to solve. If the goal reward is too small, the agent may not care enough about reaching it. If the penalty is too harsh, the agent may avoid exploring useful paths. If the losing reward is unclear or inconsistent, learning becomes unstable. Good reward design is less about fancy formulas and more about clear intent.

A common beginner mistake is rewarding the wrong behavior by accident. For example, if bumping into a wall gives the same reward as making progress, the agent has no reason to avoid useless actions. Another mistake is making the goal reward so huge that it hides everything else during debugging. Start with simple, interpretable numbers and adjust only if behavior is clearly off.

Practical outcome: you should now be able to define a reward scheme in one sentence, such as “+10 for the goal, -10 for a losing cell, and -1 for every step.” That is enough to begin learning in a meaningful way.
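As a minimal sketch, the one-sentence reward scheme above can be written as a single Python function. The names `reward_for`, `goal`, and `losing_cells`, and the example cell positions, are illustrative assumptions rather than part of the course project.

```python
# Reward scheme from the sentence above: +10 goal, -10 losing cell, -1 per step.
GOAL_REWARD = 10
LOSS_REWARD = -10
STEP_REWARD = -1


def reward_for(cell, goal, losing_cells):
    """Return the reward for arriving in `cell`."""
    if cell == goal:
        return GOAL_REWARD
    if cell in losing_cells:
        return LOSS_REWARD
    return STEP_REWARD


goal = (3, 3)             # hypothetical goal position
losing_cells = {(2, 2)}   # hypothetical losing cell

print(reward_for((3, 3), goal, losing_cells))  # 10
print(reward_for((2, 2), goal, losing_cells))  # -10
print(reward_for((0, 1), goal, losing_cells))  # -1
```

Keeping the three numbers as named constants at the top makes them easy to adjust later if the agent's behavior is clearly off.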

Reinforcement learning usually runs in episodes. An episode is one full attempt at the task, from a starting state until some ending condition is reached. In a grid world, the most common end conditions are simple: the agent reaches the goal, the agent enters a losing state, or the agent exceeds a maximum number of steps. This last condition is important because it prevents endless wandering.

Clear episode boundaries make training easier to reason about. Each episode gives the agent one chance to try, collect rewards, and stop. Then the environment resets, usually back to the start cell. This reset process is not a small detail. It creates repeated practice. The agent improves by trying many episodes, not by making one endlessly long journey.

The maximum-step rule is a practical safety tool. Imagine the agent gets stuck looping between two cells. Without a step limit, your program could waste time and make debugging harder. A limit such as 20, 30, or 50 steps in a small grid is usually enough. If the agent has not found the goal by then, that episode ends and a new attempt begins.

Common mistake: forgetting to define what happens after a terminal event. If the agent reaches the goal, the environment should not keep moving as if nothing happened. The same is true for a losing state. Terminal means stop this run, record the result, and reset. That clarity helps both coding and interpretation.

Practical outcome: by defining end conditions now, you prepare the exact loop your future Python code will use: reset environment, take actions, collect rewards, stop at terminal state or step limit, then start again.
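The loop described above might look roughly like the sketch below. To keep it short, it assumes a hypothetical five-cell corridor instead of a grid, with actions -1 (left) and +1 (right); the function names and the reward numbers are placeholders for illustration.

```python
import random

MAX_STEPS = 20
START, GOAL = 0, 4   # hypothetical corridor: cells 0..4, goal at the right end


def reset():
    """Begin a new episode at the start cell."""
    return START


def step(state, action):
    """Move left (-1) or right (+1), staying inside the corridor."""
    next_state = min(max(state + action, 0), GOAL)
    done = next_state == GOAL
    reward = 10 if done else -1
    return next_state, reward, done


def run_episode(rng):
    """One full attempt: stop at the goal or when the step limit is hit."""
    state = reset()
    total_reward, steps, done = 0, 0, False
    while not done and steps < MAX_STEPS:
        action = rng.choice([-1, 1])          # random actions, no learning yet
        state, reward, done = step(state, action)
        total_reward += reward
        steps += 1
    return total_reward, steps, done


rng = random.Random(0)
for episode in range(3):
    total, steps, reached = run_episode(rng)
    print(f"episode {episode}: reward={total}, steps={steps}, reached_goal={reached}")
```

The `while` condition checks both end conditions, so an agent that loops between two cells still ends its episode after `MAX_STEPS` moves.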

The final step in preparing your first agent problem is to write the rules clearly enough that another person could implement them without guessing. This is where many beginner projects improve immediately. Instead of saying, “The agent moves around and tries to win,” write a precise description. For example: “The world is a 4x4 grid. The agent starts at cell (0,0). The goal is at (3,3). Obstacles are at (1,1) and (2,1). Available actions are up, down, left, and right. Invalid moves leave the agent in the same cell. Each move gives -1 reward. Reaching the goal gives +10 and ends the episode. The episode also ends after 20 steps.”

That kind of rule list is powerful because it removes ambiguity. It also creates a direct bridge to code. You can store the grid size, the start, the goal, and the obstacles as variables. You can write one transition function and one reward function. Later, when you build a table-based agent, those rules determine exactly what state-action experience means.

Good engineering judgment means being explicit about edge cases. What happens at boundaries? What happens on invalid moves? Are obstacle cells entered or blocked? Is the goal reward given only once? Does the episode stop immediately at the goal? These are not boring details. They are the environment.

A practical workflow is to test your written rules against a few imagined action sequences. If the agent starts at (0,0) and chooses right, right, down, what should the positions and rewards be after each step? If you can answer that without hesitation, your environment is ready. If not, keep refining the rules before coding.
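One way to check the example rule list is to store it as variables and replay the imagined sequence in code. This sketch makes two assumptions the rule list does not spell out: cells are written as (row, col) with row 0 at the top, and the move into the goal earns +10 in place of the usual -1. The function names are illustrative.

```python
# Rules from the written description, stored as plain variables.
GRID_SIZE = 4
START = (0, 0)
GOAL = (3, 3)
OBSTACLES = {(1, 1), (2, 1)}

# Assumption: cells are (row, col) and row 0 is the top row.
MOVES = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}


def transition(state, action):
    """Return the next cell; invalid moves leave the agent in place."""
    d_row, d_col = MOVES[action]
    row, col = state[0] + d_row, state[1] + d_col
    off_grid = not (0 <= row < GRID_SIZE and 0 <= col < GRID_SIZE)
    if off_grid or (row, col) in OBSTACLES:
        return state
    return (row, col)


def reward_for(next_state):
    """Assumption: the move into the goal earns +10 instead of -1."""
    return 10 if next_state == GOAL else -1


def trace(actions, state=START):
    """Replay a fixed action sequence and collect (action, state, reward)."""
    history = []
    for action in actions:
        state = transition(state, action)
        history.append((action, state, reward_for(state)))
    return history


for action, state, reward in trace(["right", "right", "down"]):
    print(action, state, reward)
# right (0, 1) -1
# right (0, 2) -1
# down (1, 2) -1
```

If the printed positions and rewards match what you predicted on paper, the written rules and the code agree.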

Practical outcome: you now have a fully specified beginner-friendly problem. That means the next chapter can focus on the agent itself, because the world it lives in is finally clear, stable, and teachable.

1. In this chapter, what is an environment in reinforcement learning?

2. Why is a tiny grid world a good beginner environment?

3. What is the purpose of adding a small step penalty to each move?

4. According to the chapter, what often causes beginner confusion in reinforcement learning projects?

5. Which description best shows that an environment is written clearly enough to implement later?

Reinforcement learning becomes much easier when you can turn ideas into small experiments. In the previous chapters, you learned the core language of RL: an agent, a state, an action, a reward, and a goal. In this chapter, we move from ideas to code. The goal is not to become a professional Python programmer in one sitting. The goal is to build a small, comfortable coding foundation that is just enough for this course.

Many beginners get stuck because they think they must learn all of Python before they can build anything in reinforcement learning. That is not true. RL at a beginner level uses a small and very practical slice of Python: variables to store values, lists to hold options, loops to repeat actions, functions to organize behavior, and simple print statements to inspect what is happening. If you can read a few lines of code and make small changes with confidence, you already have enough to build your first reward agent.

This chapter also introduces an important engineering habit: inspect everything. In reinforcement learning, bugs often hide in the interaction between the agent and the environment. An action may move in the wrong direction. A reward may be given at the wrong time. A state may be represented inconsistently. So before we train anything, we will learn how to run a tiny environment, step through its behavior, and verify that it follows the rules we intended.

We will start by setting up a beginner-friendly workspace. Then we will review only the Python pieces you truly need. After that, we will represent states and actions directly in code, build a tiny grid world environment, and test it by hand. By the end of the chapter, you will have a working sandbox where an agent can move, receive rewards, and report what happened after each step. That is the foundation for the reward-based agent we will build next.

Think of this chapter as preparing your workshop before building a machine. Good tools and clear organization reduce friction. Small tests build confidence. And once the environment behaves correctly, the learning algorithm has a fair chance to succeed.

Practice note for Set up a beginner-friendly coding workspace: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Write basic Python needed for this course: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Represent states and actions in code: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Run a tiny environment and inspect its behavior: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Your coding setup should feel calm, not complicated. For this course, a beginner-friendly workspace means three things: Python installed, a code editor you can trust, and one simple way to run your file. The easiest path is usually to install Python 3 from the official website and use Visual Studio Code as your editor. If you prefer, a Jupyter notebook can also work, but for reinforcement learning, plain Python files are often cleaner because you will define functions, classes, and step-by-step environment logic in one place.

After installing Python, open a terminal or command prompt and type python --version. If your system uses python3 instead, try that. Seeing a version number is your first success signal. Next, create a project folder such as first_rl_agent. Inside it, create a file named grid_world.py. Run it with python grid_world.py. At first, the file can contain only print("Hello RL"). This tiny test matters because it confirms your tools work together before any real coding begins.

A good engineering habit is to keep your workspace simple. Put all chapter files in one folder. Use clear names. Save often. Run small tests frequently. Beginners often write many lines before pressing Run, then face a wall of errors and do not know where things broke. Instead, write a little, run a little, and verify each step. This approach is especially useful in RL because your code will involve several moving parts: states, actions, rewards, and transitions.

If you want an even cleaner setup, create a virtual environment, though it is optional at this stage. The main benefit is keeping project packages separate from other Python work on your machine. For now, the most important practical outcome is this: you should be able to open one file, write Python code, and run it reliably. Once that loop is working, learning becomes much faster because you can immediately test every idea from the chapter.

You do not need advanced Python to understand beginner reinforcement learning. You need only a small set of tools used over and over. First, you need variables. A variable stores something the program must remember, such as the current position of the agent, the reward from the last step, or whether the episode is finished. In Python, writing state = 0 or reward = 1 is enough to create a variable. Python is friendly in this way: it lets you focus on ideas quickly.

Next, you need functions. A function groups instructions into a reusable piece of logic. In RL, functions are natural for actions like resetting the environment or stepping once after an action. A function such as def reset(): can return the starting state. Another function such as def step(action): can update the environment and return the new state, reward, and done flag. This function-based design keeps your code readable and prepares you for more structured agents later.

You also need conditionals. An if statement lets the environment react differently depending on what the agent does. For example, if the action is "up", move one way; if it is "left", move another. Conditionals are also useful for reward logic. If the agent reaches the goal, give a positive reward. If not, maybe give zero or a small penalty. This is how game rules become program rules.

Finally, use print() generously. In beginner RL, printing the state, action, and reward after each step is not messy; it is smart. A common mistake is to assume the environment is behaving correctly because the code runs without crashing. Running is not the same as being correct. Your practical target is simple: understand every line that updates the environment, and verify it by printing what happened.

Reinforcement learning uses repeated interaction, so loops are one of the most important Python ideas in this course. But before loops, we need data to loop over. Variables store single values. Lists store collections. For example, you might store possible actions in a list such as actions = ["up", "down", "left", "right"]. This is useful because your agent can later choose one action from that list. Lists also help represent rows of a grid, stored rewards, or histories of what happened during an episode.

A for loop repeats a block of code a known number of times or once for each item in a list. For example, for action in actions: lets you inspect every possible move. A while loop repeats as long as its condition stays true, which matches the idea of an episode continuing until the goal is reached. You may write while not done: to keep stepping until the episode ends. This simple pattern appears everywhere in RL.

Another practical Python habit is updating variables carefully. Suppose the agent is at position (0, 0). After an action, you compute a new position and assign it back to the state. If you forget to update the state, the environment appears frozen. If you update it incorrectly, the agent may jump in impossible ways. That is why small loops with printed output are so valuable. Run five steps. Print each state. Check whether the path makes sense.
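Here is a small sketch of these ideas together: a list of actions, a for loop over it, and a while loop that updates a position and prints it after every step. The stopping column `goal_col` and the five-step cap are made-up values for this demo.

```python
actions = ["up", "down", "left", "right"]

# A for loop visits each item in the list once.
for action in actions:
    print("Possible action:", action)

# A while loop keeps going as long as its condition stays true.
state = (0, 0)      # (row, col)
goal_col = 3        # hypothetical stopping point for this demo
done = False
steps = 0
path = [state]

while not done and steps < 5:
    row, col = state
    state = (row, col + 1)       # compute the new position and assign it back
    path.append(state)
    steps += 1
    done = state[1] == goal_col
    print("step", steps, "state", state, "done", done)
```

If you delete the line that assigns the new tuple back to `state`, the printed state never changes, which is exactly the frozen-environment symptom described above.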

Common beginner mistakes include mixing up the current state and next state, using the wrong list index, or writing loops that never stop. The fix is usually to make the code smaller and more visible. Use short variable names that remain meaningful, keep your action list explicit, and test one loop at a time. By the end of this section, you should feel comfortable reading code that stores an agent state, chooses from a list of actions, and repeats a step several times in sequence.

Now we connect Python directly to reinforcement learning language. A state is the information that describes where the agent currently is, or what situation it is facing. In a tiny grid world, a state can be represented as a pair of numbers like (row, col). For example, (0, 0) can mean top-left, while (1, 2) means one row down and two columns across. This is a good beginner representation because it is easy to print, compare, and update.

An action is a choice the agent can make. In code, actions can be strings such as "up", "down", "left", and "right". Strings are readable, which is valuable for learning. Later, more advanced systems often encode actions as numbers for speed, but readability matters more than optimization at this stage. When you print Action: right, you instantly understand what happened.

Good engineering judgment means choosing representations that are simple and consistent. If a state is sometimes a tuple and sometimes a list, comparison bugs may appear. If actions are sometimes strings and sometimes numbers, your logic becomes harder to follow. Pick one representation and stick to it. For this course, tuples for states and strings for actions are a practical choice.

You also need a rule that translates an action into movement. If the state is (1, 1) and the action is "up", the next state may become (0, 1). But what if the agent is already at the top edge? Should it stay in place? Should it receive a penalty? This is where RL design begins. The environment must define the consequences of actions clearly. Our tiny environment will keep the agent inside the grid. That means invalid moves do not crash the program; they simply leave the agent at the boundary. This kind of design is beginner-friendly, predictable, and easy to test.
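A minimal sketch of that movement rule follows, assuming a 3x3 grid and (row, col) tuples. The helper names `clamp` and `move` are illustrative choices, not fixed parts of the course code.

```python
GRID_SIZE = 3  # assumed 3x3 grid; rows and columns run from 0 to 2

# Each action is a (row change, column change) pair.
MOVES = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}


def clamp(value, low, high):
    """Keep value inside the range low..high."""
    return max(low, min(value, high))


def move(state, action):
    """Return the next (row, col) state, never leaving the grid."""
    row, col = state
    d_row, d_col = MOVES[action]
    new_row = clamp(row + d_row, 0, GRID_SIZE - 1)
    new_col = clamp(col + d_col, 0, GRID_SIZE - 1)
    return (new_row, new_col)


print(move((1, 1), "up"))     # (0, 1)
print(move((0, 1), "up"))     # (0, 1): already on the top edge, so it stays put
print(move((2, 2), "right"))  # (2, 2)
```

Because `move` returns a new tuple instead of changing the old one, the state stays a tuple everywhere, which keeps comparisons consistent.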

A tiny grid world is one of the best first environments for reinforcement learning because everything is visible. Imagine a 3x3 grid. The agent starts at the top-left corner, and the goal is at the bottom-right corner. Each step changes the state based on the chosen action. Reaching the goal gives a reward, and the episode ends. This setup is small enough to understand completely, yet rich enough to teach the core RL workflow.

In code, start by defining the grid size, start state, goal state, and current state. Then create a reset() function that puts the agent back at the start and returns that starting state. Next, create a step(action) function. Inside it, read the current row and column, update them according to the action, clamp them so they stay inside the grid, build the next state tuple, and assign it back to the current state. After that, calculate the reward and whether the episode is done.

A practical design for the first version is this: every normal move gives reward 0, and reaching the goal gives reward 1. That keeps the reward signal easy to understand. Later, you may add a small step penalty such as -0.01 to encourage shorter paths, but for now simplicity is more important than realism. The environment should return three things after each step: next state, reward, and done. You can add a short text message as an optional fourth value if you want to inspect behavior more easily.
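Put together, a first version of grid_world.py could look something like this sketch. It assumes a 3x3 grid with the goal at (2, 2), keeps the current state in a module-level variable, and returns only the state, reward, and done flag, leaving out the optional text message.

```python
GRID_SIZE = 3
START = (0, 0)
GOAL = (2, 2)
MOVES = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}

current_state = START


def reset():
    """Put the agent back at the start and return that state."""
    global current_state
    current_state = START
    return current_state


def step(action):
    """Apply one action and return (next_state, reward, done)."""
    global current_state
    row, col = current_state
    d_row, d_col = MOVES[action]
    # Clamp so the agent stays inside the grid.
    row = max(0, min(row + d_row, GRID_SIZE - 1))
    col = max(0, min(col + d_col, GRID_SIZE - 1))
    current_state = (row, col)
    done = current_state == GOAL
    reward = 1 if done else 0
    return current_state, reward, done


state = reset()
print("Start:", state)
for action in ["right", "right", "down", "down"]:
    state, reward, done = step(action)
    print("Action:", action, "State:", state, "Reward:", reward, "Done:", done)
```

The last printed line should show state (2, 2), reward 1, and done True. This sketch does not block further steps after done becomes True, so the calling loop is responsible for stopping and calling `reset()`.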

One common mistake is packing too much into the first environment. Avoid walls, traps, random events, and complicated scoring until the basic grid works perfectly. A tiny environment is not childish; it is disciplined. It isolates the learning problem. If the agent behaves oddly later, you can ask whether the bug is in the learning rule or the environment. That question is answerable only when the environment itself is simple and trustworthy.

Before building an agent that learns, test the environment like a careful engineer. This means taking manual actions and checking whether the environment responds exactly as expected. Start with a reset. Confirm that the state is the start state. Then apply a few actions one by one: right, right, down, down. After each action, print the action taken, the new state, the reward, and the done value. If the goal is in the bottom-right corner of a 3x3 grid, that path should eventually end there and produce the goal reward.

You should also test edge cases. Try moving left when the agent is already in the leftmost column. Try moving up from the top row. The agent should stay inside the grid. If it moves outside, your boundary logic is wrong. If it crashes, your code is not robust yet. These simple edge tests matter because an RL agent will eventually try many actions, including unhelpful ones. The environment must handle them safely.

Another useful habit is to test a short fixed action sequence rather than random actions at first. Fixed sequences make debugging easier because the same inputs should produce the same outputs every time. Once the basic behavior is correct, you can sample random actions from the action list and observe how the state changes. Random testing helps reveal hidden mistakes, but deterministic testing should come first.

The practical outcome of this chapter is not just a Python file. It is a working mental model. You now have a beginner-friendly coding workspace, the Python basics required for the course, a clean representation of states and actions, and a tiny environment you can run and inspect. This is the bridge between RL concepts and RL implementation. In the next chapter, that bridge becomes even more useful when we let an agent interact with this environment repeatedly and begin improving its choices.

1. What is the main coding goal of Chapter 3?

2. Which set of Python skills does the chapter say is enough for beginner RL work?

3. Why does the chapter stress the habit of inspecting everything?

4. Before training an agent, what should you do with a tiny environment?

5. How does Chapter 3 describe the role of a beginner-friendly workspace and small tests?

In the previous parts of this course, you met the basic pieces of reinforcement learning: an agent, an environment, actions, states, rewards, and a goal. Now we bring those pieces together into the first real learning loop. This chapter explains how a beginner-friendly agent can start with no knowledge at all, try actions, receive rewards, and slowly improve its behavior. The key idea is simple: the agent does not need to be told the correct move in advance. Instead, it learns by interacting with the environment and remembering which choices seem to lead to better outcomes.

This matters because reinforcement learning is different from many other forms of machine learning. In supervised learning, a model is given many examples with correct answers. In reinforcement learning, the agent often has to discover useful behavior by trial and error. That can sound inefficient at first, but it mirrors how many real systems work. A game-playing agent, a robot, or a route-finding system may need to test actions, observe results, and adapt. Early behavior may look random or even foolish, yet those early steps generate the experience that learning depends on.

In this chapter, you will learn why random action can still teach an agent, how to build a simple action-value table, and how the core idea behind Q-learning works without heavy math. You will also see how the table is updated after every move. The engineering mindset here is important: keep the environment tiny, make the feedback clear, and inspect the agent's memory often. A small working example teaches more than a complex theory-heavy setup.

Think of the chapter as the moment when a collection of vocabulary words becomes a usable machine. We are moving from definitions into workflow. The workflow is: observe the current state, choose an action, receive a reward, move to a new state, and update memory. Repeat this cycle many times. Over time, a table of action values becomes a practical guide that helps the agent choose better actions more often.

The sections that follow walk through this process in the same order that a simple agent experiences it. We begin with ignorance, move into random exploration, introduce the Q-table, then show how reward and memory interact. Finally, we describe the Q-learning update in plain English and show what training looks like one step at a time. By the end of the chapter, you should be able to explain not just what Q-learning is, but why this style of learning works at all for a tiny beginner agent.

Practice note for Understand why random action can still teach an agent: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Create a simple action-value table: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Learn the idea behind Q-learning without heavy math: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Update the table after each move: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

A beginner reinforcement learning agent starts out with no understanding of its world. It does not know which action is smart, which path is risky, or which state is valuable. This is not a flaw in the design. It is the natural starting point. If an agent already knew the best action in every situation, there would be nothing left to learn.

Imagine a tiny grid world where the agent can move up, down, left, or right. One square gives a reward, another gives a penalty, and the rest are neutral. At the beginning, every move is just a guess. The agent has not yet connected any state-action pair with a useful outcome. In practical terms, its internal memory might be a table full of zeros. Zero does not mean the action is bad. It means the agent has no evidence yet.

This starting condition is useful because it keeps the learning process honest. The agent must earn knowledge through experience. For beginners, this is an important mindset shift. Reinforcement learning is not about programming every rule by hand. It is about setting up feedback so the agent can discover patterns for itself.

A common mistake is expecting improvement after only a few actions. Early training often looks messy. The agent may walk into bad states, repeat unhelpful moves, or fail to reach the goal. That is normal. The key engineering judgment is to make sure the environment is simple enough and the rewards are clear enough that learning is possible. If the environment is confusing, the agent's cluelessness lasts longer than necessary.

When you inspect a beginner agent, ask practical questions:

If those pieces are in place, starting clueless is not a problem. It is the blank page that learning writes on.

One of the most surprising ideas in reinforcement learning is that random action can still teach an agent. At first, randomness may seem wasteful. Why let the agent make poor choices on purpose? The answer is that without trying different actions, the agent cannot gather evidence about what works.

Suppose the agent always picked the first action in its list. It might accidentally repeat a weak behavior forever and never discover a better option. Random choice solves this early learning problem by creating exploration. The agent samples different actions, sees the consequences, and begins to connect decisions with rewards.

In everyday terms, this is like trying different routes to school before deciding which one is fastest. If you only ever use the first route you think of, you may never learn that another route is shorter or safer. Random exploration creates the opportunity to compare.

For a beginner project, the practical version is simple: in the early stages of training, let the agent sometimes pick an action at random. This does not mean the whole system is random forever. It means the agent is collecting data. Those random moves produce state transitions and rewards, and that experience is what fills the action-value table with useful estimates.

A common mistake is turning off exploration too early. If the agent stops exploring before it has seen enough of the environment, it may lock into mediocre behavior. Another mistake is using pure randomness for too long. If every move stays random, the agent cannot reliably use what it has learned. Good training balances exploration and exploitation: sometimes try something new, but often use the best-known option so far.
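One common way to get this balance is to pick a random action with a small probability and the best-known action otherwise. The sketch below assumes that probability is stored in a parameter called `epsilon`; that name, the function name, and the example values are illustrative and not something the course has introduced yet.

```python
import random

ACTIONS = ["up", "down", "left", "right"]


def choose_action(action_values, epsilon, rng):
    """Pick a random action with probability epsilon, else the best-known one.

    action_values maps each action name to its current value estimate.
    """
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)                    # explore
    best = max(action_values.values())
    best_actions = [a for a in ACTIONS if action_values[a] == best]
    return rng.choice(best_actions)                   # exploit, ties broken randomly


rng = random.Random(0)
values = {"up": 0.0, "down": 0.2, "left": 0.0, "right": 0.7}  # made-up estimates
picks = [choose_action(values, epsilon=0.2, rng=rng) for _ in range(1000)]
print("share of 'right':", picks.count("right") / len(picks))
```

With `epsilon=0.2`, most picks are "right" because it has the highest estimate, but the other actions still get tried now and then. Setting `epsilon` to 1.0 gives pure randomness, and 0.0 turns exploration off.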

The practical outcome of random early action is not immediate skill. The practical outcome is information. And in reinforcement learning, information is the raw material from which better choices are built.

Now we need a place to store what the agent is learning. A simple way to do that is with a Q-table. The letter Q is often used for action value. The basic idea is straightforward: for each state and each possible action, the table stores a number that estimates how good that action is in that state.

If the agent is in state A and can choose left or right, the Q-table might hold two values: one for A-left and one for A-right. Larger numbers suggest better long-term outcomes. Smaller numbers suggest weaker choices. At the beginning, the table is usually filled with zeros because the agent has not learned anything yet.

This table is useful because it turns learning into something visible. Instead of saying the agent is becoming smarter in an abstract way, you can inspect the numbers and see which state-action pairs are gaining value. For beginners, this is one of the best ways to understand reinforcement learning. The Q-table acts like the agent's memory notebook.

In a tiny environment, a Q-table is practical and easy to debug. You can print it after each episode and watch the values change. If the values never change, something is wrong in the training loop. If the wrong actions keep getting large values, your reward design or update logic may need checking.
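As a rough sketch, a Q-table for a 3x3 grid can be a plain dictionary keyed by (state, action) pairs. The dictionary layout and the helper `print_q_table` are one possible choice made for readability, not the only way to store the table.

```python
GRID_SIZE = 3
ACTIONS = ["up", "down", "left", "right"]

# One entry per (state, action) pair, all starting at zero: no evidence yet.
q_table = {
    ((row, col), action): 0.0
    for row in range(GRID_SIZE)
    for col in range(GRID_SIZE)
    for action in ACTIONS
}


def print_q_table(table):
    """Print one line per state so changes are easy to spot between episodes."""
    for row in range(GRID_SIZE):
        for col in range(GRID_SIZE):
            values = ", ".join(
                f"{action}={table[((row, col), action)]:.2f}" for action in ACTIONS
            )
            print(f"state ({row}, {col}): {values}")


q_table[((2, 1), "right")] = 1.0   # pretend one update has happened
print_q_table(q_table)
print("entries:", len(q_table))    # 9 states x 4 actions = 36
```

Printing the whole table is only practical because the grid is tiny, which is one more reason to keep the first environment small.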

Engineering judgment matters here. A Q-table works well when the number of states and actions is small. If the environment becomes very large, the table becomes too big to manage, and other methods are needed. But for a beginner reward agent, the table is ideal because it is concrete, transparent, and directly tied to behavior.

When people say the agent has learned, in this setup they usually mean one practical thing: the Q-table now contains better estimates than it did at the start, and the agent can use those estimates to choose stronger actions.

A reward by itself is just a signal. It becomes useful only when the agent stores and uses it. This is where reward and memory work together. The environment gives feedback after an action, and the agent updates its Q-table so future choices can improve.

Consider a simple example. The agent moves right from a state and reaches the goal, receiving a reward of +10. That single reward should increase the value of choosing right in that state. On the other hand, if moving left hits a trap and gives -5, the value of left should decrease. Over repeated experiences, the table begins to reflect the patterns in the environment.

The important idea is that the agent is not just reacting to the latest reward. It is building a memory of which actions tend to lead to good outcomes. That memory supports better decisions later. In practice, this means the agent gradually shifts from uninformed trial and error toward more purposeful action selection.

Beginners often make two mistakes here. First, they think a reward changes behavior instantly and perfectly. In reality, learning is gradual. A single lucky reward should not always dominate the table. Second, they focus only on immediate reward and forget that some actions are valuable because they lead to better future states. Reinforcement learning usually cares about both the current reward and what might happen next.

Good reward design is also essential. If rewards are too sparse, the agent may struggle to learn because useful feedback is rare. If rewards are inconsistent, the table may fill with confusing values. A small clean environment with understandable rewards makes the learning process much easier to observe and trust.

In practical terms, the Q-table becomes the bridge between past rewards and future choices. Memory turns feedback into strategy.

Q-learning sounds mathematical, but the core update can be explained in plain English. After the agent takes an action, it looks at three things: what it thought about that action before, what reward it just received, and how promising the next state seems. Then it adjusts the table entry a little in that direction.

You can think of the update as a correction step. The old Q-value is the agent's current guess. The reward plus future promise is better evidence. The update nudges the old guess toward the new evidence. If the outcome was better than expected, the value goes up. If the outcome was worse than expected, the value goes down.

This idea contains an important engineering choice: the value should not usually jump all the way to the latest result. Instead, it should move part of the way. That partial movement makes learning more stable because one unusual event does not completely rewrite memory. Over many training steps, the values become better estimates.
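To see why partial movement helps, here is a small sketch that applies the same made-up sequence of rewards with two different step fractions. The parameter is called `learning_rate` here because that is the name this course uses for the setting later on. The reward numbers and the helper `run_updates` are assumptions for illustration only.

```python
def run_updates(rewards, learning_rate):
    """Apply each reward in turn and return the value after every update."""
    value = 0.0
    history = []
    for reward in rewards:
        # Move part of the way from the old value toward the new reward.
        value = value + learning_rate * (reward - value)
        history.append(round(value, 3))
    return history


# Four good outcomes and one unusual bad one (made-up numbers).
rewards = [10, 10, 10, -5, 10]

full_jump = run_updates(rewards, learning_rate=1.0)
small_step = run_updates(rewards, learning_rate=0.1)

print("learning_rate=1.0:", full_jump)
print("learning_rate=0.1:", small_step)
```

With a rate of 1.0 the value swings all the way to -5 after the single bad outcome. With a rate of 0.1 it dips only slightly and stays positive, which is the stability the text describes.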

Another key idea is future promise. If an action leads to a state where the agent has strong options later, that action should get credit for setting up future success. This is why Q-learning is powerful even in simple environments. It does not only learn from immediate reward. It also learns from the best value available in the next state.

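In plain workflow terms, the update is:

1. Look up the old Q-value for the state and action the agent just used.

2. Add the reward just received to the future promise, meaning the best Q-value available in the next state.

3. Move the old Q-value part of the way toward that total.

4. Store the result back in the same table cell.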
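The update described in this section can be sketched in Python as follows. This is one possible way to write it, not the only one. It assumes a nested dictionary for the Q-table, a learning rate of 0.1, a discount of 0.9 (both settings are discussed later in the course), and a `done` flag that marks the end of an episode. The state names `"A"` and `"B"` are made up.

```python
def q_update(q_table, state, action, reward, next_state, done,
             learning_rate=0.1, discount=0.9):
    """Apply one Q-learning update and return (old_value, new_value)."""
    old_value = q_table[state][action]
    # Future promise: the best value available in the next state.
    # A finished episode has no future, so the promise is zero.
    future = 0.0 if done else max(q_table[next_state].values())
    target = reward + discount * future
    # Nudge the old guess part of the way toward the new evidence.
    new_value = old_value + learning_rate * (target - old_value)
    q_table[state][action] = new_value
    return old_value, new_value


# Tiny made-up table: two states, two actions each.
q_table = {
    "A": {"left": 0.0, "right": 0.0},
    "B": {"left": 1.0, "right": 4.0},
}

old, new = q_update(q_table, "A", "right", reward=0, next_state="B", done=False)
print(f"Q[A][right]: {old} -> {new:.2f}")
```

Even though the reward here is 0, the value of moving right from A goes up, because state B already holds a promising action.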

A common mistake is updating the wrong cell in the table. Another is forgetting to use the next state when computing future value. When debugging, print the state, action, reward, next state, and changed table value after each move. If you can explain each update in words, you usually understand the algorithm well enough to trust your implementation.
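One way to follow this debugging advice is to wrap the update in a helper that prints everything needed to check it by hand. The sketch below assumes a table keyed by (state, action) tuples and default settings of 0.1 and 0.9. The name `traced_update` is hypothetical.

```python
def traced_update(q_table, state, action, reward, next_state,
                  learning_rate=0.1, discount=0.9):
    """Update one cell and print the details of the change."""
    old_value = q_table[(state, action)]
    # Best value in the next state; a state with no entries counts as 0.
    next_values = [v for (s, _), v in q_table.items() if s == next_state]
    best_next = max(next_values, default=0.0)
    new_value = old_value + learning_rate * (
        reward + discount * best_next - old_value
    )
    q_table[(state, action)] = new_value
    print(
        f"state={state} action={action} reward={reward} "
        f"next_state={next_state} best_next={best_next:.2f} "
        f"Q: {old_value:.2f} -> {new_value:.2f}"
    )
    return new_value


# Made-up table with two states and two actions.
q_table = {
    (0, "left"): 0.0, (0, "right"): 0.0,
    (1, "left"): 0.0, (1, "right"): 2.0,
}
new_value = traced_update(q_table, 0, "right", reward=-1, next_state=1)
```

If the printed line names a different cell than the one you expected to change, or `best_next` is always zero, the bug is usually in how the state or next state is passed in.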

The full training loop becomes much easier to understand when you see it as a repeated sequence of small steps. Reinforcement learning is not magic. It is a disciplined cycle that runs again and again. The agent observes a state, chooses an action, receives a reward, moves to a next state, updates the Q-table, and repeats until the episode ends. Then a new episode begins.

For a beginner project, train one step at a time and inspect the process. Suppose the agent starts in a grid square. It chooses an action using a simple rule such as: sometimes explore randomly, otherwise pick the action with the highest Q-value. The environment returns a reward and new state. The agent updates the table entry for the action it just took. That single update is one unit of learning.

Over many steps, the pattern becomes clear. Productive actions slowly gain higher values. Harmful actions lose value. The policy, meaning the agent's action choices, becomes better because the table behind those choices becomes better. This is the practical outcome of training.

There is also an important discipline in how you build and test the loop. Keep logs. Print small tables. Run short episodes first. Confirm that rewards are actually being delivered. Check that terminal states stop the episode when expected. If you skip these checks, it becomes hard to tell whether the agent is learning or whether the code is just running.

A useful mental model is that each move teaches a tiny lesson. Most individual lessons are small, but together they create a behavior pattern. Do not expect perfection after ten steps. Expect gradual improvement after many consistent updates. That is the rhythm of reinforcement learning.

By the end of this chapter, the main story should feel concrete: the agent starts clueless, learns through random exploration, stores experience in a Q-table, updates that table after each move, and gradually chooses better actions. This step-by-step training loop is the foundation for building your first working reward agent in Python.

1. Why can random actions still help a beginner reinforcement learning agent learn?

2. What is the main role of a simple action-value table in this chapter?

3. Which sequence best matches the learning workflow described in Chapter 4?

4. How is the idea behind Q-learning presented in this chapter?

5. What engineering mindset does the chapter recommend for beginners building a learning agent?

In this chapter, everything comes together. In earlier parts of the course, you learned the basic language of reinforcement learning: an agent observes a state, chooses an action, receives a reward, and tries to improve over time. Now we will turn those ideas into a complete working loop. This is the moment where reinforcement learning stops feeling abstract and starts feeling like engineering.

A reward-based agent does not begin with wisdom. At first, it mostly guesses. It moves through the environment, receives good or bad feedback, and stores what it learns in a simple table. Over many episodes, that table becomes a rough map of which actions are promising in each state. The key idea is simple: repeat the cycle enough times, and the agent can discover a better path without being directly told the answer.

This chapter focuses on four practical lessons. First, we will combine the environment and the learning loop so the agent can actually interact with the world. Second, we will train over many episodes because one or two tries are rarely enough. Third, we will tune a few small settings, such as exploration, learning rate, and discount, to improve results. Fourth, we will watch the agent learn, which is important because training is easier to trust when you can see progress.

You do not need a complicated game engine or advanced math to understand this workflow. A tiny grid world or a few connected locations are enough. The same pattern appears in larger systems too: reset the environment, let the agent act step by step, update its table using rewards, and repeat. When beginners understand this loop clearly, they are ready for much bigger reinforcement learning ideas later.

As you read, notice the engineering judgement behind each decision. We are not only writing code that runs. We are choosing defaults that make learning stable, inspecting progress instead of guessing, and avoiding common mistakes such as training too briefly or using rewards that give no useful signal. A small agent can teach big lessons.

By the end of this chapter, you should be able to describe not just what a reward-based agent is, but how to build one in a beginner-friendly way. More importantly, you should understand why each moving part exists. Reinforcement learning is often introduced as a collection of formulas, but in practice it is a repeated decision process with feedback. When that process is clear, the formulas become much less intimidating.

Practice note for the four lessons in this chapter (combining the environment and learning loop, training the agent over many episodes, tuning a few simple settings to improve results, and watching the agent learn a better path): for each one, document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

The first practical milestone is connecting the agent to the environment in a full learning loop. Until now, you may have looked at states, actions, and rewards as separate ideas. Training begins when they are chained together in the correct order. A typical episode starts by resetting the environment to a starting state. Then, while the episode is not finished, the agent selects an action, the environment responds with a new state and reward, and the agent updates its value table using that experience.

For a beginner-friendly table-based agent, the workflow often looks like this in plain language: look up the current state in the table, choose an action, perform that action, observe the reward and next state, update the table entry for the old state and chosen action, then move to the next state. Repeat until the goal is reached or a step limit is hit. That is the heart of training.

Engineering judgement matters here. It is wise to include a maximum number of steps per episode, even in a tiny environment. Otherwise, if the agent gets stuck wandering, your training loop may never end. It is also useful to define terminal states clearly. If the goal is reached, the episode should stop cleanly so the reward signal has meaning.
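The loop described above, including the step limit and the terminal states, might look like this for a single episode. Everything specific here is an assumption for illustration: a five-square corridor with a trap at square 0 and a goal at square 4, the function names `reset`, `step`, and `run_episode`, and the settings 0.2, 0.1, and 0.9.

```python
import random

START, TRAP, GOAL = 2, 0, 4   # hypothetical corridor: squares 0..4
ACTIONS = [-1, +1]            # -1 moves left, +1 moves right
MAX_STEPS = 20                # safety limit so every episode ends


def reset():
    """Put the agent back on the starting square."""
    return START


def step(state, action):
    """Return (next_state, reward, done) for one move."""
    next_state = state + action
    if next_state == GOAL:
        return next_state, 10, True
    if next_state == TRAP:
        return next_state, -5, True
    return next_state, 0, False


def run_episode(q_table, epsilon=0.2, learning_rate=0.1, discount=0.9):
    """Run one episode, updating q_table in place; return visited squares."""
    state = reset()
    path = [state]
    for _ in range(MAX_STEPS):
        # 1. Choose: sometimes explore, otherwise follow the table.
        #    (max() picks the first listed action when values are tied.)
        if random.random() < epsilon:
            action = random.choice(ACTIONS)
        else:
            action = max(ACTIONS, key=lambda a: q_table[(state, a)])
        # 2. Act and observe the result.
        next_state, reward, done = step(state, action)
        # 3. Update the entry for the OLD state and the chosen action.
        future = 0.0 if done else max(q_table[(next_state, a)] for a in ACTIONS)
        old = q_table[(state, action)]
        q_table[(state, action)] = old + learning_rate * (
            reward + discount * future - old
        )
        # 4. Move on, and stop cleanly at a terminal state.
        state = next_state
        path.append(state)
        if done:
            break
    return path


q_table = {(s, a): 0.0 for s in range(5) for a in ACTIONS}
print("visited squares:", run_episode(q_table))
```

With an all-zero table the first episode is mostly guesswork, so the printed path may well end in the trap. That is expected at this stage.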

A common mistake is updating the table at the wrong time. The update should use the reward and next state produced by the action the agent just took. Another common mistake is forgetting to reset the environment at the start of each episode. Without reset, episodes blend together and learning becomes hard to interpret. Keep the loop simple and explicit. When each part is visible, debugging becomes much easier.

The practical outcome of this section is confidence: you can now build a full interaction cycle, not just isolated code fragments. Once the environment and agent are connected correctly, repeated learning becomes possible.

One episode rarely teaches enough. Even if the agent accidentally finds the goal once, that single path could be luck. Reinforcement learning depends on repeated experience, so training must run over many episodes. Each episode is one fresh attempt from the start. Across dozens, hundreds, or even thousands of attempts, the value table gradually reflects what tends to work.

Think of a child learning to navigate a maze. On the first try, they bump into walls and make random turns. By the tenth try, some routes feel familiar. By the hundredth try, they often head in the right direction quickly. Your agent learns in a similar way, except its memory is stored in numbers inside a table rather than in human intuition.

In code, this means wrapping the full step-by-step loop inside an outer loop over episodes. During each episode, collect basic information such as total reward, steps taken, and whether the goal was reached. These measurements help you understand if learning is improving. If you only train blindly and inspect the final result, you may miss useful clues about what happened along the way.
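As a sketch of that outer loop, the example below trains on a made-up five-square corridor (trap at square 0, goal at square 4) and records total reward, steps taken, and whether the goal was reached for every episode. The environment, the helper names, and the settings are all assumptions for illustration.

```python
import random

START, TRAP, GOAL = 2, 0, 4     # hypothetical corridor: squares 0..4
ACTIONS = [-1, +1]              # move left or move right
EPSILON, LEARNING_RATE, DISCOUNT = 0.2, 0.1, 0.9   # assumed settings
MAX_STEPS = 20


def step(state, action):
    """Return (next_state, reward, done) for one move."""
    next_state = state + action
    if next_state == GOAL:
        return next_state, 10, True
    if next_state == TRAP:
        return next_state, -5, True
    return next_state, 0, False


def choose_action(q_table, state):
    """Epsilon-greedy choice; equal values are broken randomly."""
    if random.random() < EPSILON:
        return random.choice(ACTIONS)
    best = max(q_table[(state, a)] for a in ACTIONS)
    return random.choice([a for a in ACTIONS if q_table[(state, a)] == best])


def run_episode(q_table):
    """Play one episode and return (total_reward, steps, reached_goal)."""
    state, total_reward = START, 0
    for steps in range(1, MAX_STEPS + 1):
        action = choose_action(q_table, state)
        next_state, reward, done = step(state, action)
        future = 0.0 if done else max(q_table[(next_state, a)] for a in ACTIONS)
        old = q_table[(state, action)]
        q_table[(state, action)] = old + LEARNING_RATE * (
            reward + DISCOUNT * future - old
        )
        total_reward += reward
        state = next_state
        if done:
            break
    return total_reward, steps, state == GOAL


random.seed(1)
q_table = {(s, a): 0.0 for s in range(5) for a in ACTIONS}

log = []
for episode in range(500):          # outer loop: one fresh attempt each time
    log.append(run_episode(q_table))

# Compare the first and last 100 episodes instead of only the final result.
for first in (0, 400):
    chunk = log[first:first + 100]
    goals = sum(1 for _, _, reached in chunk if reached)
    avg_steps = sum(steps for _, steps, _ in chunk) / len(chunk)
    print(f"episodes {first}-{first + 99}: goals={goals}, avg steps={avg_steps:.1f}")
```

Keeping the per-episode log makes it possible to compare early and late training, rather than trusting a single final number.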

A practical decision is choosing how many episodes to run. In a tiny environment, 200 to 1000 episodes is often enough to see meaningful change. Too few episodes can make a good algorithm look broken. Too many episodes are usually less harmful in a small setting, although they may waste time. Start with a moderate number, observe the trend, then adjust.

A common beginner mistake is expecting steady improvement every episode. Learning is noisy. Some episodes will be worse than earlier ones, especially when exploration is active. That is normal. Focus on trends across many episodes, not on perfect short-term behavior. The practical outcome is that you now understand why repetition is essential: the agent becomes better not because it is told the answer, but because it accumulates enough experience to estimate better choices.
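A simple way to look at trends instead of single episodes is a moving average. The sketch below uses a made-up list of episode rewards and a hypothetical helper named `moving_average`. The window size of 4 is only for the example, and a real training run would usually use a larger window.

```python
def moving_average(values, window):
    """Average each run of `window` consecutive values."""
    if window <= 0 or window > len(values):
        raise ValueError("window must be between 1 and len(values)")
    return [
        sum(values[i:i + window]) / window
        for i in range(len(values) - window + 1)
    ]


# Made-up episode rewards: noisy from one episode to the next,
# but improving overall.
episode_rewards = [-5, -5, 10, -5, 10, -5, 10, 10, -5, 10, 10, 10]

smoothed = moving_average(episode_rewards, window=4)
print([round(x, 2) for x in smoothed])
```

The raw list jumps between -5 and 10, while the smoothed list starts at -1.25 and ends at 6.25, which makes the upward trend much easier to see.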

Exploration versus exploitation is one of the most important ideas in reinforcement learning. Exploration means trying actions that may not currently look best, just to gather information. Exploitation means choosing the action that the table currently believes is best. A good learning system needs both. If the agent only exploits from the beginning, it may repeat poor early guesses forever. If it only explores, it may never settle into a strong path.

A common beginner strategy is epsilon-greedy action selection. With probability epsilon, the agent explores by choosing a random action. With probability 1 minus epsilon, it exploits by choosing the action with the highest current value in the table. This simple rule works well in small examples because it is easy to explain and easy to implement.
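Here is a minimal sketch of epsilon-greedy selection, assuming the values for one state are held in a dictionary of action names. The function name `epsilon_greedy`, the action names, and the numbers are made up for illustration.

```python
import random


def epsilon_greedy(action_values, epsilon, rng=random):
    """Pick an action name from a dict of {action: value}."""
    if rng.random() < epsilon:
        # Explore: ignore the table and pick any action.
        return rng.choice(list(action_values))
    # Exploit: pick the action with the highest current value.
    return max(action_values, key=action_values.get)


# Made-up values for a single state.
values = {"up": 0.1, "down": -0.4, "left": 0.0, "right": 2.5}

rng = random.Random(42)  # seeded generator so runs are repeatable
picks = [epsilon_greedy(values, epsilon=0.2, rng=rng) for _ in range(1000)]
share_right = picks.count("right") / len(picks)
print(f"chose 'right' in {share_right:.0%} of 1000 picks")
```

With epsilon at 0.2 and four actions, the best action is expected roughly 85% of the time: 80% from exploiting, plus a quarter of the 20% spent exploring.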

Suppose epsilon is 0.2. That means about 20% of the time the agent tries something random, and about 80% of the time it follows its current best idea. Early in training, a higher epsilon can help the agent discover useful routes. Later, reducing epsilon can help it use what it has learned more consistently. This gradual reduction is often called epsilon decay.

The engineering judgement here is balance. If epsilon is too low too early, the agent may become stubborn and never find a better path. If epsilon stays too high forever, the agent behaves unpredictably and performance may stop improving. In a tiny grid world, a sensible starting point might be 0.1 to 0.3, then slowly decay it over episodes.
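One common way to decay epsilon is to multiply it by a fixed factor each episode and never let it fall below a small floor. The sketch below assumes a start of 0.3, a floor of 0.05, and a factor of 0.99. These numbers and the name `decayed_epsilon` are illustrative choices, not recommendations from this course.

```python
def decayed_epsilon(episode, start=0.3, minimum=0.05, decay=0.99):
    """Shrink epsilon by a fixed factor each episode, with a floor."""
    return max(minimum, start * decay ** episode)


for episode in (0, 50, 100, 200, 400):
    print(f"episode {episode}: epsilon = {decayed_epsilon(episode):.3f}")
```

The floor keeps a little exploration alive late in training, so the agent can still notice a better path if one exists.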

A common mistake is assuming random actions are bad. In learning, random actions are often the reason the agent discovers the goal at all. Exploration is not wasted effort; it is an investment in future knowledge. The practical outcome is that you can now explain why good agents do not always choose the current best-looking action while training. Sometimes a small amount of curiosity leads to a much better final policy.

Two settings strongly shape how a table-based reward agent learns: the learning rate and the discount factor. These names sound technical, but their meanings are intuitive. The learning rate controls how strongly new experience changes old estimates. The discount factor controls how much the agent cares about future rewards compared with immediate rewards.
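To make the discount factor concrete, the sketch below shows how much a reward of 10 is worth today when it lies a few moves in the future. The function name `discounted_value` and the three discount values are assumptions chosen for illustration.

```python
def discounted_value(reward, steps_away, discount):
    """Worth today of a reward received `steps_away` moves in the future."""
    return reward * discount ** steps_away


for discount in (0.5, 0.9, 0.99):
    worth = [round(discounted_value(10, n, discount), 2) for n in (0, 1, 3, 5)]
    print(f"discount={discount}: {worth}")
```

A low discount makes distant rewards fade quickly, so the agent behaves as if only the next move or two matter. A discount close to 1 keeps distant rewards almost at full value.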