Computer Vision — Beginner

Build simple AI tools that organize photos and find objects

"AI That Sees for Beginners: Create Photo Sorting and Object Spotting Tools" is a short, book-style course designed for complete beginners. If you have never studied AI, written code, or worked with data before, this course gives you a clear and gentle path into computer vision. You will start with the most basic idea: how a computer looks at an image differently from a person. From there, you will move step by step toward building two simple and practical AI tools: one that sorts photos into categories and one that spots objects inside a picture.

This course avoids heavy jargon and explains every concept in plain language. Instead of assuming technical experience, it focuses on first principles. You will learn what images are made of, why labels matter, how examples teach an AI system, and how to tell whether your results are useful. Every chapter builds on the previous one, so you will never feel lost or thrown into advanced material too early.

Many AI courses move too fast or assume you already know programming, math, or machine learning terms. This course is different. It is structured like a short technical book with six connected chapters, each one adding a small new layer of understanding. By the end, you will not only know what computer vision is, but also how to think through a tiny real-world project from beginning to end.

In the first half of the course, you will focus on image classification, which means teaching AI to sort photos into groups such as pets, food, travel, or objects. You will learn how to gather image examples, organize them into categories, and check whether the model is making sensible choices. You will also learn how to improve results by fixing messy data and reviewing wrong predictions.

In the second half, you will move into object spotting, also called object detection. Instead of giving one label to a whole image, this kind of AI finds specific items inside the picture and marks where they are. You will learn the idea of bounding boxes, confidence scores, and what makes object spotting more challenging than simple classification. By the end, you will understand how both tools work and when to use each one.

This course is made for true beginners who want practical AI skills without being overwhelmed. It is ideal for learners who are curious about visual AI, professionals who want to understand what modern image tools can do, and creators who want to organize photos or identify objects in simple workflows. If you have basic computer skills and are willing to learn by doing, you are ready to begin.

You do not need any background in coding, mathematics, statistics, or data science. The goal is to make computer vision feel approachable, useful, and exciting from day one. If you are ready to begin your first AI journey, register for free and start building visual tools with confidence.

You will understand the core ideas behind computer vision and be able to describe a simple image project from start to finish. You will know how to prepare beginner-friendly image data, train a basic sorter, review results, and understand the foundations of object spotting. Just as important, you will be able to spot common beginner mistakes and make sensible improvements.

This course also prepares you for the next step. Once you finish, you can continue exploring related topics in AI, machine learning, and practical automation. To discover more learning paths after this course, you can browse all courses on Edu AI.

Computer vision can sound advanced, but its basic ideas are easier to understand than many people think. With the right structure, examples, and explanations, absolute beginners can learn to build useful photo sorting and object spotting tools. This course gives you that structure in a friendly, practical format so you can move from curiosity to capability one chapter at a time.

Machine Learning Engineer and Computer Vision Educator

Sofia Chen designs beginner-friendly AI learning programs focused on practical visual tools. She has helped new learners build their first image classifiers and object spotting systems using clear, step-by-step methods. Her teaching style turns complex ideas into simple actions anyone can follow.

Computer vision is the part of artificial intelligence that helps machines work with pictures and video. A person can look at a photo and quickly say, “That is a dog on a couch,” but a computer does not begin with that kind of understanding. It receives numbers, patterns, and pixel values. The job of computer vision is to turn those raw picture signals into useful decisions, such as sorting vacation photos into folders, checking whether a package arrived, or finding a face in a camera frame.

For beginners, the most important idea is that computer vision is not magic. It is a process. First, we collect images. Then we organize them with names or labels. Next, we train a model to notice repeating patterns. Finally, we test the model, review mistakes, and improve the system. This chapter introduces the language and mental model you will use throughout the course. You will learn what images, labels, and objects mean, how a computer reads picture patterns, and how to plan a first project with realistic expectations.

In this course, we focus on two beginner-friendly tasks. The first is photo sorting, which means grouping whole images into categories such as cats, dogs, food, or cars. The second is object spotting, which means locating a specific item inside an image, such as identifying where a cup appears on a table. These tasks sound similar, but they solve different problems and require different kinds of thinking. Understanding that difference early will save time and frustration later.

Good computer vision work also depends on engineering judgment. A model can only learn from the examples you give it. If your photos are blurry, mixed up, or labeled carelessly, the results will be weak. If your project goal is too broad, such as “recognize everything in every picture,” you will create a difficult problem before you have learned the basics. Strong beginner projects are small, clear, and measurable. They use a limited number of categories, consistent images, and a simple method for checking correct and incorrect predictions.

As you read this chapter, keep one practical question in mind: what should a machine be able to do with an image by the end of my project? That question helps you choose the right data, the right labels, and the right type of model. It also helps you understand mistakes. If the model sorts beach photos well but fails on dark indoor photos, that is not random. It usually means the training examples did not prepare it for that case. Computer vision improves when we inspect failures and make small, targeted fixes.

By the end of this chapter, you should be able to describe computer vision in plain language, explain how digital pictures become machine-readable data, separate the ideas of images, labels, and objects, and sketch a beginner project plan. That foundation is essential because later chapters will build on it when you prepare data, train a model, and evaluate results. If you can think clearly about the problem before touching any tool, you will learn faster and make better choices.

Practice note for this chapter's two lessons (understanding what computer vision does in everyday life, and recognizing the difference between images, labels, and objects): document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Computer vision means teaching a machine to use images as input and produce a useful output. The output could be a category, a location, a warning, or a count. In plain language, it is the skill that lets software “look at” a picture and do something helpful with what it finds. That does not mean the computer sees like a human. People understand context, meaning, and common sense. A computer starts with data and patterns. It learns by comparing many examples and adjusting itself to recognize similarities.

Think of a beginner photo sorting app. You give it many photos labeled “cat” and many photos labeled “dog.” Over time, the model learns visual patterns that often appear in one group and not the other. It may notice ear shapes, fur textures, body outlines, or color patterns. The model is not thinking about pets the way a person does. It is learning statistical relationships between image patterns and labels.

This is why project definition matters so much. If your goal is vague, the model has no clear target. A good beginner vision goal sounds like this: “Sort phone photos into food, pets, and outdoor scenes,” or “Spot whether a red backpack appears in the image.” Clear goals create a clear workflow. You know what images to collect, what labels to assign, and what success should look like. A common mistake is trying to solve a giant problem immediately. Start narrow. A smaller, cleaner task teaches the core ideas faster and produces more useful results.

In everyday life, computer vision powers face unlock, package scanners, quality checks in factories, shopping search tools, and camera filters. The same basic idea is underneath all of them: turn pictures into decisions. For beginners, the important habit is to ask not only “Can AI see this?” but also “What exact decision do I want the system to make?”

A digital image is a grid of tiny squares called pixels. Each pixel stores numeric values that represent color and brightness. In a simple color image, each pixel usually contains red, green, and blue values. A computer does not receive “a beach” or “a bicycle.” It receives a large table of numbers. That is the first big shift in thinking for computer vision: meaningful pictures for humans are numeric patterns for machines.
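To make the "table of numbers" idea concrete, here is a minimal sketch in Python. It assumes a tiny hand-written image stored as nested lists; the names `tiny_image`, `image_shape`, and `brightness` are invented for this illustration, and real projects would normally load pixels with an image library instead.

```python
# A tiny 2x3 "image": 2 rows, 3 columns.
# Each pixel is a (red, green, blue) tuple with values from 0 to 255.
tiny_image = [
    [(255, 0, 0), (0, 255, 0), (0, 0, 255)],        # red, green, blue
    [(0, 0, 0), (128, 128, 128), (255, 255, 255)],  # black, gray, white
]


def image_shape(image):
    """Return (height, width) of a nested-list image."""
    return len(image), len(image[0])


def brightness(pixel):
    """Average the three color channels as a rough brightness value."""
    red, green, blue = pixel
    return (red + green + blue) / 3


height, width = image_shape(tiny_image)
print("height:", height, "width:", width)
print("brightness of the gray pixel:", brightness(tiny_image[1][1]))
```

Nothing in this structure says "beach" or "bicycle". Any meaning has to be learned from patterns in the numbers.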

Image size matters because it changes how much detail is available. A high-resolution image contains more pixels, which can help show small features, but it also requires more memory and computation. A low-resolution image is faster to process, but fine details may disappear. In beginner projects, it is common to resize images to a consistent size so the model sees comparable inputs. This is a practical engineering choice. Standardized images make training simpler and reduce unexpected errors.
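The resizing idea can be sketched with a very simple method called nearest-neighbor sampling, where each pixel in the new image copies the closest pixel in the old one. This is only an illustration under that assumption: the function name `resize_nearest` is made up here, and image libraries offer smoother resizing methods that are usually a better choice in practice.

```python
def resize_nearest(image, new_height, new_width):
    """Resize a nested-list image by copying the nearest source pixel."""
    old_height = len(image)
    old_width = len(image[0])
    resized = []
    for y in range(new_height):
        # Map the new row index back to a row in the original image.
        source_y = y * old_height // new_height
        row = []
        for x in range(new_width):
            # Map the new column index back to a column in the original.
            source_x = x * old_width // new_width
            row.append(image[source_y][source_x])
        resized.append(row)
    return resized


# A 4x4 grayscale image where each value encodes its row and column.
image = [[10 * r + c for c in range(4)] for r in range(4)]
small = resize_nearest(image, 2, 2)
print(small)
```

Shrinking the 4x4 grid to 2x2 keeps only one pixel out of every four, which shows why fine details can disappear at low resolution.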

Computers look for patterns across nearby pixels. Edges, corners, textures, and shapes emerge when pixel values change in meaningful ways. For example, a sharp boundary between dark and light pixels may indicate the edge of an object. Repeating colors and textures may help identify grass, fur, or sky. Models do not inspect pixels one by one in isolation. They learn combinations and arrangements of pixels that often match certain categories.
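As a rough illustration of how a boundary between dark and light pixels shows up in the numbers, the sketch below compares each pixel with its right-hand neighbor. The function name `horizontal_edge_strength` is hypothetical, and trained models learn far richer patterns than this single hand-written rule.

```python
def horizontal_edge_strength(gray):
    """Return the absolute difference between each pixel and its right neighbor."""
    return [
        [abs(row[x + 1] - row[x]) for x in range(len(row) - 1)]
        for row in gray
    ]


# Grayscale image: dark region on the left, bright region on the right.
gray = [
    [10, 10, 200, 200],
    [10, 10, 200, 200],
]
edges = horizontal_edge_strength(gray)
print(edges)
```

The large values appear exactly where dark meets light, while flat regions produce zeros.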

A common beginner mistake is assuming the model understands an image the same way a person does. If your photos vary wildly in lighting, angle, blur, and background, the model may focus on the wrong pattern. It might associate “indoor yellow lighting” with one class and “bright blue sky” with another, even when that is not the real concept you want. That is why image preparation matters. Try to keep image quality reasonable, remove broken files, and use examples that represent the true task. Clean pixel data leads to more reliable learning.

Labels are the answers you give the model during training. If an image shows an apple and you assign the label “fruit,” you are telling the system what that example should represent. Categories are the set of possible labels, such as cat, dog, car, or flower. In a sorting project, each full image usually gets one main label. In a spotting project, labels may also include the object location, such as a box around the item.

Labels matter because they define what the model is learning. If labels are inconsistent, the model becomes confused. Suppose one image of a muffin is labeled “dessert,” another similar image is labeled “breakfast,” and another is labeled “bread.” That may be reasonable in real life, but for a beginner model it creates mixed signals. The model learns best when categories are clear and practical. Good categories are distinct, easy to explain, and supported by enough examples.

Another important distinction is between the image, the label, and the object. The image is the entire picture file. The label is the name assigned for learning. The object is the actual thing inside the image, such as a bottle in the corner or a person near the center. Beginners often mix these ideas together. If your task is photo sorting, the label usually applies to the whole image. If your task is object spotting, the object location becomes part of the training information.
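One way to keep the three ideas apart is to write them down as separate fields in a small record. The sketch below is only an assumed layout: the class names `ImageExample` and `SpottedObject`, the file paths, and the box format (left, top, right, bottom in pixels) are all invented for illustration and are not tied to any particular tool.

```python
from dataclasses import dataclass, field


@dataclass
class SpottedObject:
    """One object inside an image, with its location."""
    name: str
    box: tuple  # assumed format: (left, top, right, bottom) in pixels


@dataclass
class ImageExample:
    """One training example: the image file, its label, and any objects."""
    path: str                                    # the image (whole file)
    label: str                                   # the label for the whole image
    objects: list = field(default_factory=list)  # only needed for spotting


def box_size(box):
    """Return (width, height) of a box in pixels."""
    left, top, right, bottom = box
    return right - left, bottom - top


# Photo sorting: one label for the whole image, no object locations.
sorting_example = ImageExample(path="photos/pets/cat_001.jpg", label="pets")

# Object spotting: the same kind of record, plus where the object is.
spotting_example = ImageExample(
    path="photos/kitchen_12.jpg",
    label="kitchen",
    objects=[SpottedObject(name="cup", box=(40, 60, 120, 150))],
)

print(sorting_example.label, len(sorting_example.objects))
print(spotting_example.objects[0].name, box_size(spotting_example.objects[0].box))
```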

Use engineering judgment when choosing categories. Start with a small number that makes sense for your data. Avoid overlapping labels, vague names, or categories with very few examples. Also watch for accidental shortcuts. If every “cat” photo is indoors and every “dog” photo is outdoors, the model may learn the background instead of the animal. Label quality is one of the strongest factors in project success. Good labels teach the right lesson; weak labels teach noise.

Sorting and spotting are related, but they answer different questions. Sorting asks, “What kind of image is this?” The model looks at the entire photo and chooses a category. For example, it might decide whether a picture belongs in pets, food, or travel. This is often called image classification. It is one of the best starting points for beginners because the setup is simpler: collect images, assign one label per image, and train the model to predict the category.

Spotting asks, “Where is the object in this image?” The model must do more than name the object. It must identify its location, often using a box or region. For example, instead of saying only “there is a cup,” the system points to the cup on the table. This task is usually called object detection. It is more demanding because the training data needs more detail. You must mark not just what appears, but where it appears.

Understanding the difference helps you choose the right first project. If your goal is to organize your photo library, sorting is the natural fit. If your goal is to build a tool that finds items in a scene, such as locating packages on a shelf, spotting is the better match. A common mistake is using a sorting model for a spotting problem and then wondering why the system cannot show the object location. The model is only solving the task it was trained for.

There is also a practical workflow difference. Sorting projects usually need consistent category folders and a balanced set of examples. Spotting projects need image annotations that define object positions. For beginners, it often makes sense to start with sorting, learn how predictions and errors behave, and then move to spotting. That progression builds intuition. Once you understand how image patterns map to labels, it becomes easier to understand how location information adds another layer to the problem.

Computer vision becomes easier to understand when you connect it to ordinary situations. On phones, vision helps sort photo galleries, blur backgrounds, unlock screens with faces, and search for pictures by subject. When your phone groups images of pets or screenshots, it is doing a form of visual sorting. When it places a box around a face or tracks a person in video, it is doing a form of spotting. The same ideas you will learn in a beginner project already appear in tools many people use every day.

In shops, computer vision supports barcode scanning, shelf monitoring, self-checkout assistance, and product search. A store might use sorting to classify product images by type, or spotting to locate missing items on a shelf. In these settings, image quality matters a lot. Reflections, crowded backgrounds, and poor camera angles can cause errors. This teaches an important engineering lesson: a model’s performance is linked to the environment in which it operates. A system trained on neat studio photos may struggle in a busy real store.

At home, vision can help with smart doorbells, pet cameras, cleaning robots, and home inventory tools. A camera may spot a package near the front door or distinguish a person from a passing car. A robot vacuum may use vision to avoid shoes or cords. These examples show practical outcomes, but they also reveal limits. If lighting changes at night, if the camera lens is dirty, or if the object is partly hidden, mistakes become more likely. Real-world vision is always tied to data conditions.

For beginners, these examples offer two useful lessons. First, choose a project connected to a real need, even a small one. That keeps the task concrete. Second, define the environment clearly. Will your images come from a phone indoors? From product photos on a plain background? From a single room in daylight? The narrower and more realistic the setting, the easier it is to build something that works reliably. Good computer vision is not about solving every case. It is about solving a specific case well enough to be useful.

Your first vision project should be small, focused, and easy to evaluate. A strong beginner roadmap has four steps: define the task, gather and organize images, choose labels carefully, and plan how to check results. For example, you might decide to sort personal photos into three categories: pets, food, and outdoor scenes. That is much better than starting with twenty vague categories. The goal is to finish a complete learning cycle, not to build the biggest possible system.

Start by writing one clear project sentence: “I want a model that can sort phone photos into pets, food, and outdoor scenes.” Next, collect examples for each category. Try to include variety, but keep the categories distinct. Organize the images into folders or a simple dataset structure. Remove duplicate images, corrupted files, and examples that do not fit the chosen labels. This preparation stage is not boring cleanup; it is part of the model-building process. Better data usually beats clever tricks.

Then decide how you will measure results. A simple plan is enough at first: count correct predictions and incorrect predictions for each category. Review mistakes by hand. Ask practical questions. Did the model confuse food with outdoor picnic photos? Did it fail on dark pet images? Did the background dominate the decision? This mistake-checking step is where improvement begins. Instead of guessing, you inspect failure patterns and make small fixes, such as adding more examples, balancing categories, or refining labels.
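The counting plan above can be sketched in a few lines. The labels and predictions below are made-up sample data, and the function name `per_category_results` is hypothetical. In a real project the predictions would come from your trained model.

```python
from collections import Counter


def per_category_results(true_labels, predicted_labels):
    """Count correct and incorrect predictions for each true category."""
    correct = Counter()
    wrong = Counter()
    confusions = Counter()  # (true label, predicted label) pairs
    for truth, guess in zip(true_labels, predicted_labels):
        if truth == guess:
            correct[truth] += 1
        else:
            wrong[truth] += 1
            confusions[(truth, guess)] += 1
    return correct, wrong, confusions


# Made-up example data for illustration only.
true_labels = ["pets", "pets", "food", "food", "outdoor", "outdoor"]
predicted = ["pets", "food", "food", "outdoor", "outdoor", "outdoor"]

correct, wrong, confusions = per_category_results(true_labels, predicted)
for category in sorted(set(true_labels)):
    print(category, "correct:", correct[category], "wrong:", wrong[category])
for (truth, guess), count in confusions.items():
    print("true", truth, "was predicted as", guess, "x", count)
```

The confusion pairs are often the most useful part, because they tell you which mistakes to open and review by hand.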

If you want to prepare for object spotting later, keep notes about which images contain clear objects and where they appear. You do not need to build detection immediately, but you can begin thinking in that direction. The key beginner habit is disciplined planning. Choose one task, define success, organize data carefully, and learn from errors. That workflow reflects how real computer vision projects are built. Even simple projects teach the full cycle of problem definition, data preparation, training, evaluation, and refinement. That is the right foundation for everything that comes next.

1. What is the main job of computer vision described in this chapter?

2. Which choice correctly matches the terms image, label, and object?

3. What is the difference between photo sorting and object spotting?

4. Why might a model do well on beach photos but fail on dark indoor photos?

5. Which beginner project plan best fits the chapter's advice?

Before a computer vision model can learn anything useful, it needs images that are organized, understandable, and matched to a clear goal. Beginners often want to jump straight into training, but the quality of a project is usually decided earlier, during data preparation. In this chapter, you will learn how to collect useful photos for a beginner dataset, organize images into clear groups, clean messy data by removing confusing examples, and prepare a small training set the right way. These steps may sound simple, but they are the foundation of every successful image project.

Think of image preparation as teaching setup. If you show a learner random pictures with unclear labels, the learner will struggle. AI behaves the same way. A model can only discover patterns from the examples you provide. If one folder contains dog photos, another contains cat photos, and a third accidentally contains cartoons, screenshots, and duplicate files, the model receives mixed signals. It may still produce answers, but those answers will be unreliable. Good preparation reduces confusion and makes results easier to interpret later.

For a beginner project, your goal is not to build the biggest dataset. Your goal is to build a small, clean, understandable one. A good starter dataset is simple enough that you can inspect it yourself. You should be able to open every folder, scan the contents, and explain why each image belongs there. This habit builds engineering judgment. In real computer vision work, teams spend a large amount of time reviewing data because strong models depend on strong examples.

A practical workflow looks like this: first choose a narrow problem, then collect or create images that match that problem, then place them into clearly named classes, then remove weak or confusing images, and finally split the data into training and testing groups. If you follow that order, you avoid many beginner mistakes. You also make later stages easier, including reading model results, understanding incorrect predictions, and improving the system through small fixes.

This chapter also prepares you for later ideas like object spotting. Even when your first task is simple photo sorting, the same discipline applies. If you eventually want to detect objects inside an image, you still need useful images, clear categories, and careful review. Clean data is not a separate step from AI learning; it is part of how AI learning works.

As you read the sections in this chapter, focus on practical choices. Ask yourself: what is the model supposed to notice, what might confuse it, and how can I make the dataset match the real task? That mindset will help you move from random image collection to purposeful dataset design. For beginners, this is one of the most valuable habits in computer vision.

Practice note for this chapter's four lessons (collecting useful photos for a beginner dataset, organizing images into clear groups, cleaning messy data by removing confusing examples, and preparing a small training set the right way): document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

The best beginner computer vision projects start with a problem that is small, clear, and visually distinct. Instead of trying to classify ten similar animal species or detect many object types at once, choose something like apples versus bananas, indoor plants versus outdoor plants, or mugs versus bottles. A simple problem helps you understand the full workflow without getting buried in complexity. It also makes errors easier to inspect later, because you can usually tell whether the model was confused by the background, the object shape, or the image quality.

A useful rule is this: if a human beginner would struggle to sort the images quickly, the problem may be too hard for a first project. You want categories that look meaningfully different. This helps the model learn visible patterns such as color, outline, texture, or common arrangement. It also helps you collect data faster because it is easier to judge whether a photo fits the category.

Good engineering judgment means defining the task before collecting images. Write the task in one sentence. For example: “Sort photos into cats and dogs,” or “Group images into bicycles and cars.” Then decide what counts and what does not count. Are toy cars allowed? Are drawings allowed? Are partial objects allowed? These choices matter because they shape the examples the model sees. If the rules stay vague, the dataset becomes inconsistent.

Another practical choice is whether your classes should be balanced. For a first project, try to keep roughly similar numbers of images in each class. If one folder has 40 images and the other has 400, the model may lean too heavily toward the larger class. Balanced classes make evaluation easier and reduce misleading results.
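A quick balance check might look like the sketch below. The threshold `max_ratio=3.0` is an arbitrary assumption chosen for illustration, not a rule from this course, and the function name `class_balance` is hypothetical.

```python
def class_balance(counts, max_ratio=3.0):
    """Return (ratio, is_balanced) for a dict of class name -> image count.

    ratio is the largest class size divided by the smallest.
    max_ratio is an assumed threshold; pick one that suits your project.
    """
    largest = max(counts.values())
    smallest = min(counts.values())
    if smallest == 0:
        return float("inf"), False  # an empty class is never balanced
    ratio = largest / smallest
    return ratio, ratio <= max_ratio


print(class_balance({"cats": 40, "dogs": 400}))   # the lopsided case from the text
print(class_balance({"cats": 120, "dogs": 150}))  # roughly similar sizes
```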

Keep the first version small on purpose. A beginner dataset might have 50 to 200 images per class, depending on the problem. That is enough to practice proper preparation and basic training. Once you understand the process, you can expand. Starting smaller lets you review your images carefully, which is more valuable than collecting a huge set that you have not checked.

Common mistakes at this stage include choosing classes that overlap too much, changing the project goal halfway through, or collecting images before writing clear class definitions. A simple, stable problem gives the rest of the chapter a solid foundation. If you define the task well now, every later step becomes easier.

Once the problem is clear, the next step is to gather images that actually help the model learn. For a beginner project, it is often best to use photos you take yourself, carefully selected public datasets, or openly licensed image sources. The key idea is not just quantity. It is usefulness. Useful images match the categories, are visible enough to inspect, and represent the kind of input your project will later see.

If you create your own images, try to include natural variation. Do not photograph every object from exactly the same angle against the same background. For example, if you are collecting fruit photos, take some close-up images, some from farther away, some in brighter light, and some on different surfaces. This teaches the model to focus on the object instead of memorizing one setting. Variation is healthy when the label stays correct.

If you gather images from existing sources, review them one by one. Search results and downloaded folders often contain surprises such as illustrations, collages, screenshots, watermarked images, or unrelated objects. A folder named “dogs” may contain people holding dogs, multiple animals in one frame, or logo graphics. For a beginner dataset, it is worth taking time to inspect everything manually. You are not only collecting photos; you are deciding what kind of visual evidence the model will trust.

Beginner-friendly images usually have one main subject and a readable label. They do not need to be perfect studio photographs, but the target object should be visible enough that a human can confidently say what it is. When possible, include some normal real-world messiness, such as slightly varied lighting or cluttered backgrounds, because that reflects practical use. But avoid making the dataset chaotic. There is a difference between realistic variation and random confusion.

It also helps to think ahead. If your eventual goal is photo sorting, collect full images with clear classes. If later you want to explore object spotting, try keeping some images where the object appears in different positions within the frame. That habit builds intuition about how vision systems see scenes, not just isolated objects.

A common mistake is collecting many nearly identical photos. If you take ten pictures of the same object from almost the same position, the dataset may look larger than it really is. The model then learns too much from repeated examples and too little from true variety. Better to have fewer, more diverse images than many copies of the same scene. In short, choose images that are clear, varied, and honestly representative of the task.

Organization is one of the most practical skills in computer vision. Even a small project becomes difficult to manage when folders are inconsistent, file names are vague, or labels change midway through the work. Clear structure helps both you and the training system. It reduces mistakes, supports repeatable experiments, and makes it easier to return to the project later.

For a simple image classification task, the most common beginner structure is one folder per class. For example, you might have folders named cats and dogs, or mugs and bottles. Choose names that are short, plain, and stable. Avoid using multiple labels for the same idea, such as mixing dog, dogs, and puppy unless they are intentionally different classes. Inconsistent naming creates accidental category splits, which can quietly break a project.
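With one folder per class, the folder name can serve as the label. The sketch below assumes that layout and uses only Python's standard library. The function name `list_labeled_images`, the folder name `photos`, and the list of accepted file extensions are assumptions made for this example.

```python
from pathlib import Path

# Assumed set of image file extensions for this example.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def list_labeled_images(dataset_dir):
    """Return (file path, label) pairs, using each subfolder name as the label."""
    examples = []
    for class_dir in sorted(Path(dataset_dir).iterdir()):
        if not class_dir.is_dir():
            continue  # skip loose files such as a notes file
        for file_path in sorted(class_dir.iterdir()):
            if file_path.suffix.lower() in IMAGE_SUFFIXES:
                examples.append((file_path, class_dir.name))
    return examples


# Example usage with a hypothetical folder layout:
#   photos/cats/cat_001.jpg
#   photos/dogs/dog_001.jpg
# examples = list_labeled_images("photos")
# for path, label in examples:
#     print(label, path.name)
```

Because the label comes straight from the folder name, a stray folder called `dog` next to `dogs` would silently become its own class, which is why consistent naming matters.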

Good class names should match the real decision your model is making. If the model is sorting fruit, do not name a folder yellow_things if it actually contains bananas. Labels should describe the category, not a temporary visual clue. Otherwise the project becomes harder to explain and more likely to fail when images change.

It is also smart to keep file names readable and unique, even if the folders do most of the labeling. Names like cat_001.jpg or bottle_kitchen_12.jpg are more useful than IMG8392.jpg. Descriptive file names are not required for model training, but they help during troubleshooting. When you review mistakes later, clear names make it easier to trace where an image came from.

Another practical habit is writing down your class rules in a small note file. For example: “Only real photos, no drawings; main object must be visible; one label per image.” This turns folder structure into a documented decision system. Documentation is not only for large teams. Even beginners benefit from writing simple rules because memory becomes unreliable once the dataset grows.

Common mistakes include mixing uppercase and lowercase inconsistently, renaming classes after splitting the data, and creating vague extra folders like misc or other too early. If an image does not fit, review your class definitions instead of hiding confusion in a catch-all folder. Clear organization is not glamorous, but it saves hours of correction later and makes your training pipeline much more dependable.

Cleaning data means deciding which images teach the model well and which images send the wrong message. This is one of the most important parts of preparing a beginner dataset. A good example clearly supports the label. A bad example is not just low quality; it is any image that makes the task harder in an unhelpful way. That includes wrong labels, confusing content, duplicates, heavy blur, extreme cropping, unrelated subjects, or images where the category is impossible to judge.

Imagine a folder for bicycles. A good example might show a bicycle clearly, even if the background is busy. A bad example might show a distant street scene where the bicycle is tiny, half hidden, and easy to miss. Another bad example might be a motorcycle incorrectly placed in the bicycle folder. These mistakes matter because the model cannot ask for clarification. It simply learns from what it sees, even when the lesson is wrong.

Not every imperfect photo should be removed. Some variation helps the model become more robust. Slight shadows, different backgrounds, and moderate angle changes are often useful. The goal is not to create an unrealistically perfect dataset. The goal is to remove examples that are misleading. This is an important engineering judgment: keep realistic variety, remove damaging confusion.

One practical review method is to scan each folder and ask three questions for every image: Is the label correct? Is the main object visible enough? Does this image add useful variety or only noise? If the answer to either of the first two questions is no, remove the image. If the third answer is “only noise,” consider removing it as well. This review process may feel slow, but it usually improves the final model more than blindly adding more files.
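The three review questions can be written down as a tiny decision rule. This sketch reads the rule as "a no on either of the first two questions removes the image"; the function name and the three return values are assumptions chosen for illustration, and the answers themselves still come from a person looking at the photo.

```python
def review_decision(label_correct, object_visible, adds_variety):
    """Apply the three review questions to one image.

    Returns "remove", "consider removing", or "keep".
    """
    # A wrong label or a hidden main object is misleading, so the image goes.
    if not label_correct or not object_visible:
        return "remove"
    # The image is valid but may only add noise; the reviewer makes the final call.
    if not adds_variety:
        return "consider removing"
    return "keep"


print(review_decision(label_correct=True, object_visible=True, adds_variety=False))
```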

Also watch for duplicates or near-duplicates. Repeated images make a dataset look larger without increasing true information. They can also create unfair testing later if almost identical copies appear in both training and test folders. Another issue is hidden bias. If all cat photos are indoors and all dog photos are outdoors, the model may learn the background instead of the animal. During cleaning, look for these accidental patterns.
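Exact duplicates are the easiest kind to find automatically. The following sketch assumes your images sit in ordinary folders on disk and compares files by a SHA-256 hash of their bytes; the function name find_exact_duplicates is made up for this example. It will not catch near-duplicates such as resized or edited copies, which still need a visual check.

```python
import hashlib
from collections import defaultdict
from pathlib import Path


def find_exact_duplicates(folder):
    """Group files in `folder` (searched recursively) that have identical bytes."""
    by_hash = defaultdict(list)
    for path in sorted(Path(folder).rglob("*")):
        if path.is_file():
            digest = hashlib.sha256(path.read_bytes()).hexdigest()
            by_hash[digest].append(path)
    # Keep only the groups that contain more than one file.
    return [paths for paths in by_hash.values() if len(paths) > 1]
```

Calling the function on the top-level dataset folder also reveals copies that ended up in two different class folders, which usually points to a labeling mistake.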

Removing confusing examples is not about perfectionism. It is about helping the model focus on the right signal. A smaller clean dataset often beats a larger messy one, especially for a beginner project where you want understandable results and simpler debugging.

After collecting and cleaning your images, you need to separate them into groups for learning and evaluation. The training set is used to teach the model. The testing set is used to check how well the model performs on images it has not seen before. This split is essential because a model that performs well only on familiar images has not really learned the task. It may simply be memorizing patterns from the training data.

For a beginner project, a simple split such as 80% for training and 20% for testing works well. If you have 100 images in a class, place about 80 in training and 20 in testing. Try to keep the class balance similar in both groups. If one class has very few test examples, the results may be misleading. You want the testing set to be a fair small sample of the same problem.
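An 80/20 split of one class can be sketched in a few lines. The function name split_class, the seed value, and the example file names are assumptions for illustration. Running it once per class keeps the class balance similar in both groups.

```python
import random


def split_class(file_names, test_fraction=0.2, seed=42):
    """Shuffle one class's files and split them into (train, test) lists."""
    names = sorted(file_names)          # sort first so the result is repeatable
    random.Random(seed).shuffle(names)  # fixed seed: same split on every run
    test_count = round(len(names) * test_fraction)
    return names[test_count:], names[:test_count]


cats = [f"cat_{i:03d}.jpg" for i in range(1, 101)]
train, test = split_class(cats)
print(len(train), len(test))
```

Shuffling before splitting matters: if the files are in the order they were collected, taking the last 20 could put one photo session entirely in the test group.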

The most important rule is separation. Do not move images between training and testing after looking at results just to improve the score. And never allow duplicate or near-duplicate images to appear in both groups. If the model sees almost the same photo during training and testing, the evaluation becomes too easy and no longer reflects real performance. This is called data leakage, and it gives false confidence.

A practical workflow is to create a clear folder structure such as train/cats, train/dogs, test/cats, and test/dogs. This makes the split visible and easy to verify. Once you create the split, avoid changing it casually. Keeping the test set fixed helps you compare future model versions fairly. If you later improve the dataset, document what changed so your results remain meaningful.

You should also think about variety in both groups. If every bright image goes into training and every dark image goes into testing, the test may become artificially difficult. Mix conditions sensibly. The testing set should not be easier than training, but it also should not be weirdly different from the real task.

Preparing a small training set the right way means respecting the role of each group. Training teaches. Testing checks. That separation allows you to read model results honestly, notice incorrect predictions, and make useful improvements later instead of chasing a misleading high score.

Most beginner computer vision problems do not fail because the model is too simple. They fail because the data pipeline contains avoidable mistakes. Learning to spot these issues early is one of the most valuable practical skills in AI work. When results look strange, the dataset is often the first place to investigate.

One common mistake is inconsistent labeling. For example, some images of cups may be labeled mug while others are labeled cup even though the project only intended one class. Another frequent problem is off-topic data: screenshots, icons, drawings, or unrelated photos mixed into real photo folders. These examples create noise and reduce the model’s ability to learn the actual visual category.

A second major mistake is background bias. If all images in one class share the same setting, the model may learn the setting instead of the object. For instance, if all apple photos are taken on a kitchen table and all banana photos are taken outdoors, the model may partly classify based on scene context rather than fruit shape. To avoid this, collect each class in varied environments and inspect whether one background appears too often.

Another issue is poor train-test separation. Duplicate images, edited versions of the same photo, or burst shots from the same moment can accidentally land in both groups. This makes the test score look stronger than it really is. A careful manual review helps, especially in small datasets. If two images are nearly identical, keep them together in one split or remove one.
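One way to keep nearly identical images together is to split by group rather than by single file. The sketch below assumes you have already decided, by hand, which files belong together (for example, all burst shots of one moment); the group names and the function split_by_group are hypothetical.

```python
import random


def split_by_group(groups, test_fraction=0.2, seed=42):
    """Split whole groups of related images into (train, test) file lists.

    `groups` maps a group name to the list of files that belong together,
    for example all burst shots or edited copies of one photo.
    """
    group_names = sorted(groups)
    random.Random(seed).shuffle(group_names)
    test_count = max(1, round(len(group_names) * test_fraction))
    test_groups = set(group_names[:test_count])

    train, test = [], []
    for name in sorted(groups):
        # Every file in a group goes to the same side of the split.
        (test if name in test_groups else train).extend(groups[name])
    return train, test


groups = {
    "park_burst": ["dog_001.jpg", "dog_002.jpg", "dog_003.jpg"],
    "sofa": ["dog_004.jpg"],
    "beach": ["dog_005.jpg", "dog_006.jpg"],
    "garden": ["dog_007.jpg"],
    "street": ["dog_008.jpg"],
}
train, test = split_by_group(groups)
print(len(train), len(test))
```

Because groups differ in size, the resulting split is only roughly 80/20 by file count. For a small dataset that trade-off is usually acceptable in exchange for an honest test.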

File quality can also cause trouble. Corrupted files, unreadable formats, very tiny images, or extreme aspect ratios may break scripts or produce unexpected behavior during preprocessing. Before training, open a sample from each folder and verify that the files load correctly. A few minutes of checking can prevent wasted training runs.
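A quick first check is to confirm that each file at least starts like an image. The sketch below compares the first bytes of a file with the standard JPEG and PNG signatures; the function name looks_like_image is an assumption for this example. It is only a header check: a file that was cut off partway through can still pass, so opening a sample with an image library remains the stronger test.

```python
# Leading bytes ("signatures") of two common image formats.
JPEG_SIGNATURE = b"\xff\xd8\xff"
PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def looks_like_image(data):
    """Return "jpeg", "png", or None based on the file's first bytes."""
    if data.startswith(JPEG_SIGNATURE):
        return "jpeg"
    if data.startswith(PNG_SIGNATURE):
        return "png"
    return None


print(looks_like_image(b"\xff\xd8\xff\xe0 rest of file"))
print(looks_like_image(b"<html>not an image</html>"))
```

In practice you would read the first few bytes of each file in a folder and list every file for which the function returns None.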

Finally, avoid changing too many things at once. If you rename classes, add new images, and alter the split all together, it becomes hard to understand why results changed. Make small, traceable updates. Good improvement work in computer vision often comes from checking mistakes, identifying a pattern, and applying one targeted fix at a time. That habit supports every later course outcome, from reading incorrect predictions to improving object spotting systems with confidence.

1. What is the main goal of a beginner dataset in this chapter?

2. Why does messy image organization cause problems for an AI model?

3. Which workflow best matches the chapter's recommended order?

4. Which type of image should be removed during data cleaning?

5. Why should training and testing images be kept separate?

In this chapter, you will move from prepared image data to a working beginner-friendly photo sorting workflow. Earlier chapters covered what computer vision is and how images can be organized for learning. Now the goal is more practical: teach a simple model to look at a photo and place it into the correct group, such as cats versus dogs, ripe versus unripe fruit, or cars versus bikes. This kind of task is called image classification, and it is one of the most useful starting points in computer vision because it turns a messy folder of pictures into a system that can make repeatable decisions.

A photo sorter may sound small, but it contains many important ideas used in real AI systems. You need labeled examples, a clean training process, a way to test whether the model works, and a simple method for reviewing mistakes. You also need engineering judgment. For example, a model can appear accurate while secretly learning the wrong clue, such as background color instead of the object itself. A practical builder does not only ask, "Did the model train?" but also asks, "What did it really learn, and would it still work on new images?"

This chapter ties together four key lessons. First, you will train a basic image classifier from prepared photos. Second, you will test whether the sorter places images in the right group. Third, you will review predictions using simple visual examples so the output is understandable. Fourth, you will connect the model to a small working workflow that feels like a real tool rather than an isolated experiment. These steps matter because a useful AI project is not only a model file. It is a repeatable process: load photos, predict categories, inspect errors, and make small improvements.

Keep your expectations realistic. A beginner photo sorter does not need to be perfect to be valuable. If it saves time by sorting a large photo collection into rough groups, that is already useful. If it shows you which photos are uncertain and need human review, that is even better. In computer vision, many successful tools are not fully automatic; they help people work faster and more consistently. As you read the sections in this chapter, focus on understanding the workflow and the reasoning behind each step.

By the end of the chapter, you should be able to describe how a basic classifier is trained, how its predictions are checked, how confidence scores can guide review, and how to package the whole process into a practical sorting tool. This chapter also prepares you for later ideas such as object spotting, where the system does not only name the image category but also helps locate items inside the picture.

Practice note for each of the four lessons in this chapter (training a basic image classifier from prepared photos, testing whether the sorter places images in the right group, reviewing predictions with simple visual examples, and creating a small working photo sorting workflow): document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Image classification means giving an entire image one label from a set of categories. If you show a model a photo, it might answer "cat," "dog," "flower," or "car" depending on what it has learned. The key idea is that the model treats the whole image as one input and predicts the most likely group. This is different from object spotting, where the system tries to find where an item appears inside the image. In classification, the question is simpler: "What kind of image is this?"

For a beginner project, classification is an excellent starting point because it has a clear workflow. You collect photos, assign labels, train the model, and then test how well it sorts images. A phone gallery sorter is a useful mental example. If your categories are beach, food, pets, and receipts, the model is not trying to describe every detail in each picture. It is only trying to place each image into the best matching group. That narrow goal makes the problem manageable.

Good classification depends heavily on choosing sensible categories. The labels should be distinct enough that a human could usually agree on the correct answer. If your categories overlap too much, such as "outdoor" and "travel," the model may struggle because the classes are not truly separate. Clear category definitions improve both training quality and evaluation quality. Before you build, write down what each class means and what kinds of edge cases belong in each one.

Another important point is that classification is pattern learning, not human understanding. The model does not know what a dog is in the way a person does. It notices visual patterns across many examples and links them to labels. Because of that, it can be fooled by shortcuts in the data. If most dog photos are outdoors and most cat photos are indoors, the model might partly learn the background instead of the animal. This is why image classification is both a technical and a judgment-based task. You are teaching through examples, so the examples must represent the real problem well.

To teach a model, you need labeled examples: photos that are already sorted into the correct categories by a person. A folder structure is often enough for a first project. For example, one folder may contain images of apples and another folder may contain images of bananas. The model learns by comparing many examples and adjusting its internal parameters so that images from the same class produce similar patterns. The more representative the labeled set is, the better the model can generalize to new photos.

A strong beginner dataset does not need to be huge, but it does need to be clean. Remove broken files, duplicates, and mislabeled images where possible. If your banana folder contains several apples by mistake, training becomes less reliable. Also try to include variation: bright photos, dark photos, close-up shots, far-away shots, different backgrounds, and different camera angles. A model trained on only one style of image often fails when real usage looks slightly different.

You should also split the photos into separate groups for training and testing. The training set is what the model learns from. The test set is kept aside and used later to check whether the sorter places images in the right group on unseen examples. This separation matters. If you test on the same photos used during training, the results can look unrealistically good. A fair test simulates real use by asking the model to classify images it has never seen before.

There is also an engineering judgment step here: balance the classes when possible. If one category has 1,000 images and another has only 50, the model may become biased toward the larger class. If you cannot balance perfectly, at least be aware of the imbalance when reading results. Finally, review a handful of images from each category manually before training. This simple visual check often catches hidden problems early and saves a lot of time later.
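Checking class balance takes only a count per label. The sketch below uses the 1,000 versus 50 example from the paragraph above; the function name class_balance and the class names are assumptions for illustration, and the function expects at least one label.

```python
from collections import Counter


def class_balance(labels):
    """Count images per class and report the largest-to-smallest ratio."""
    counts = Counter(labels)
    ratio = max(counts.values()) / min(counts.values())
    return counts, ratio


labels = ["apple"] * 1000 + ["banana"] * 50
counts, ratio = class_balance(labels)
print(dict(counts), ratio)
```

A ratio close to 1 means the classes are roughly balanced. There is no universal cutoff for "too imbalanced", so treat the number as something to keep in mind when reading results rather than as a pass or fail test.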

Once the labeled data is prepared, you can run a first training session. In a beginner workflow, this usually means choosing a simple image classification model, resizing images to a consistent size, and training for a small number of rounds called epochs. During each epoch, the model sees batches of training images, makes predictions, compares them with the true labels, and updates itself to reduce mistakes. You do not need to understand every mathematical detail to begin. What matters is the process: show labeled examples, measure error, update the model, repeat.
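The loop "show labeled examples, measure error, update the model, repeat" can be seen in miniature below. This is a deliberately tiny stand-in, not an image model: each "photo" is reduced to two made-up numbers, the model is a simple perceptron, and it updates after every single example rather than after a batch. All names and values are assumptions chosen so the loop is easy to follow.

```python
# Toy data: each "image" is reduced to two made-up feature numbers.
# Label 0 and label 1 stand in for two photo categories.
EXAMPLES = [
    ([0.1, 0.2], 0),
    ([0.2, 0.1], 0),
    ([0.9, 0.8], 1),
    ([0.8, 0.9], 1),
]


def predict(weights, bias, features):
    """Return 1 if the weighted sum is positive, otherwise 0."""
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if score > 0 else 0


def train(examples, epochs=20, learning_rate=0.1):
    """Run the loop and return the model plus the mistake count per epoch."""
    weights, bias = [0.0, 0.0], 0.0
    mistakes_per_epoch = []
    for _ in range(epochs):
        mistakes = 0
        for features, label in examples:
            error = label - predict(weights, bias, features)  # 0 when correct
            if error != 0:
                mistakes += 1
                # Nudge the model toward the true label.
                weights = [w + learning_rate * error * x
                           for w, x in zip(weights, features)]
                bias += learning_rate * error
        mistakes_per_epoch.append(mistakes)
    return weights, bias, mistakes_per_epoch


weights, bias, history = train(EXAMPLES)
print(history)
```

The printed list shows how many mistakes were made in each epoch. On this easy toy data the count drops to zero after a few epochs; real image training follows the same rhythm with far more numbers per image and a far larger model.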

Keep the first run small and observable. Many beginners try to optimize too early. A better approach is to train a basic version quickly, just to confirm that the data pipeline works and the model can learn something. Watch the results on the training images and on held-out images as they change over time. If training accuracy rises but performance on held-out images stays poor, the model may be memorizing the training set instead of learning general patterns. If both stay very low, the labels, categories, or image quality may need review.

It helps to record a few details from each run: image size, number of classes, number of training examples, number of test examples, number of epochs, and final performance. This makes your work reproducible. Practical AI building is not only about getting one good result; it is about being able to repeat the workflow and understand what changed. Even a simple spreadsheet of experiments is useful.
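A spreadsheet-style log can be as simple as one CSV row per run. The sketch below uses the details listed above as column names; the function name append_run and the numbers in the example are made up. It writes to an in-memory buffer so it runs anywhere, and a real file opened in append mode should work the same way.

```python
import csv
import io

FIELDS = ["image_size", "num_classes", "train_examples",
          "test_examples", "epochs", "test_accuracy"]


def append_run(log_file, run):
    """Write one training run as a CSV row; add the header if the file is empty."""
    writer = csv.DictWriter(log_file, fieldnames=FIELDS)
    if log_file.tell() == 0:
        writer.writeheader()
    writer.writerow(run)


log = io.StringIO()  # stand-in for a file such as open("runs.csv", "a", newline="")
append_run(log, {"image_size": 128, "num_classes": 2, "train_examples": 160,
                 "test_examples": 40, "epochs": 5, "test_accuracy": 0.80})
append_run(log, {"image_size": 128, "num_classes": 2, "train_examples": 200,
                 "test_examples": 40, "epochs": 5, "test_accuracy": 0.85})
print(log.getvalue())
```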

Common mistakes in first training sessions include using mixed-up labels, forgetting to separate test data, choosing categories that are too similar, and training on images that do not match future use. Another mistake is treating a single run as final truth. Your first session is a baseline. Its real purpose is to give you something concrete to inspect, improve, and compare against later versions.

After training, the model produces predictions. For each image, it usually returns a class label and a confidence score for each possible category. If an image is predicted as "cat" with 0.92 confidence, that means the model believes cat is the strongest match among the available classes. Confidence is useful, but it should not be treated as certainty. A model can be highly confident and still wrong, especially if the new image looks different from the training examples.

One of the best beginner habits is to review predictions visually. Look at examples the model got right and examples it got wrong. This turns abstract output into something understandable. If several dog images are misclassified as wolves, perhaps the training set lacks certain breeds. If food photos with white plates are often grouped together incorrectly, the model may be overusing background clues. Reviewing images side by side teaches you much more than a single accuracy value.

Simple measures like correct and incorrect predictions are enough to begin. Accuracy tells you what fraction of test images were placed in the right group. You can go one step further by checking which classes are confused most often. A confusion table or even a handwritten list of common mix-ups is practical. The goal is not advanced statistics at this stage. The goal is to understand where the sorter helps and where it still fails.
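Both measures fit in a few lines. The sketch below assumes you have two lists of equal length, one with the true labels and one with the model's predictions for the same test images; the function names and the five example results are invented for illustration.

```python
from collections import Counter


def accuracy(true_labels, predicted_labels):
    """Fraction of images whose prediction matches the true label."""
    correct = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return correct / len(true_labels)


def confusion_counts(true_labels, predicted_labels):
    """Count each (true label, predicted label) pair."""
    return Counter(zip(true_labels, predicted_labels))


true_labels = ["cat", "cat", "dog", "dog", "dog"]
predicted = ["cat", "dog", "dog", "dog", "cat"]
print(accuracy(true_labels, predicted))
for (truth, guess), count in sorted(confusion_counts(true_labels, predicted).items()):
    print(f"true={truth} predicted={guess}: {count}")
```

Pairs where the true and predicted labels differ are the mix-ups. Sorting them by count gives the "handwritten list of common mix-ups" in automated form.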

Confidence scores are also useful for workflow design. You might decide that very high-confidence predictions can be auto-sorted, while low-confidence ones are sent to a review folder for a person to check. This is often better than forcing the model to make every decision alone. In real tools, confidence-based review is a simple but powerful quality control method.
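Confidence-based routing can be sketched as a single function. The 0.8 threshold, the folder name "review", and the function name route_prediction are all assumptions for this example; a sensible threshold depends on how costly a wrong auto-sort is in your project.

```python
def route_prediction(scores, threshold=0.8):
    """Pick a destination folder from per-class confidence scores.

    `scores` maps each class name to a confidence between 0 and 1.
    """
    label = max(scores, key=scores.get)  # class with the highest score
    confidence = scores[label]
    folder = label if confidence >= threshold else "review"
    return folder, label, confidence


print(route_prediction({"cat": 0.92, "dog": 0.08}))
print(route_prediction({"cat": 0.55, "dog": 0.45}))
```

Returning the predicted label even for reviewed images is useful: the person checking the review folder can see what the model guessed and confirm or correct it quickly.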

Testing on truly new photos is where the project becomes real. It is one thing for the model to perform well on a carefully prepared test set; it is another for it to handle random images from daily use. Gather a small batch of fresh photos that were not part of training or earlier testing. These should reflect the way the tool will actually be used. If the sorter is meant for phone pictures, use phone pictures. If it is meant for scanned documents, test on scans rather than polished sample images.

As you review new predictions, pay attention to patterns in mistakes. Are dark images often misclassified? Do close-up shots work better than wide scenes? Does the model struggle when multiple objects appear in the same photo? These observations help you judge whether the sorter is ready for practical use and what small fixes might improve it. Sometimes the answer is more training data. Sometimes it is clearer labels. Sometimes it is a workflow change, such as asking users to crop photos before sorting.

This stage also connects naturally to object spotting ideas. A classifier only gives one label for the whole image, so it can fail when the important object is small or off to the side. For example, a photo might contain both a bicycle and a car, but the sorter must choose only one category. That limitation is not a bug in your workflow; it is a reminder that classification and object spotting solve different problems. When whole-image labels are not enough, later computer vision methods can help locate items inside the image.

For now, your practical task is simpler: verify that the sorter places images in the right group often enough to be useful. Do not chase perfection. Instead, document which kinds of photos it handles reliably and which should be reviewed by a person.

A model becomes a tool when it fits into a repeatable workflow. For a photo sorter, the pipeline might look like this: load new images from an input folder, prepare them in the same way as during training, run the classifier, read the predicted label and confidence, move the image into a category folder, and send uncertain images to a review folder. This kind of small end-to-end system is often more valuable than a more complex model with no usable process around it.

Consistency matters here. The preprocessing used in the tool should match the preprocessing used in training. If the training images were resized and normalized in one way, the same steps should happen during prediction. Otherwise, the input distribution changes and performance may drop. It is also wise to save a simple log that records file name, predicted class, confidence score, and final destination folder. Logs make it easier to audit mistakes and improve the tool over time.
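The pipeline and log described above might be sketched as follows. The classify argument is a placeholder for whatever trained model you have: it is assumed to take a file path and return a label and a confidence. The function name sort_photos, the log file name sort_log.csv, and the 0.8 threshold are likewise assumptions made for illustration.

```python
import csv
import shutil
from pathlib import Path


def sort_photos(input_dir, output_dir, classify, threshold=0.8):
    """Move each file in input_dir into a class folder or a review folder.

    `classify` is a placeholder for a trained model: it takes a file path
    and returns (label, confidence). Any preprocessing belongs inside it
    and should match what was used during training.
    """
    input_dir, output_dir = Path(input_dir), Path(output_dir)
    rows = []
    for path in sorted(input_dir.iterdir()):
        if not path.is_file():
            continue
        label, confidence = classify(path)
        folder = label if confidence >= threshold else "review"
        destination = output_dir / folder
        destination.mkdir(parents=True, exist_ok=True)
        shutil.move(str(path), str(destination / path.name))
        rows.append([path.name, label, f"{confidence:.2f}", folder])

    # Save a log so mistakes can be audited later.
    output_dir.mkdir(parents=True, exist_ok=True)
    with open(output_dir / "sort_log.csv", "w", newline="") as log_file:
        writer = csv.writer(log_file)
        writer.writerow(["file_name", "predicted_class", "confidence", "destination"])
        writer.writerows(rows)
    return rows
```

Because the sketch moves files, it is worth trying it first on a copy of a small folder. Swapping shutil.move for shutil.copy2 is a cautious alternative while you are still testing.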

Good engineering judgment means designing for human collaboration. A practical sorter should not hide uncertainty. If the confidence is low, make that visible and route the image for review. If a class repeatedly causes confusion, consider merging categories, adding examples, or redefining the labels. Improvement often comes from small fixes rather than complete redesign. Add more varied photos, remove noisy labels, test again, and compare with the earlier baseline.

At this point, you have created more than a model. You have built a beginner computer vision workflow: train a basic image classifier from prepared photos, test whether the sorter places images correctly, review predictions visually, and use the result in a simple real-world pipeline. That is a strong practical milestone. It also lays the foundation for the next stage of vision work, where instead of only sorting whole photos, you begin thinking about locating specific items inside them.

1. What is the main goal of Chapter 3?

2. Why is it important to test a photo sorter on separate images after training?

3. What is one risk mentioned in the chapter when a model seems accurate?

4. According to the chapter, why should you check visual examples instead of trusting one number alone?

5. Which sequence best matches the practical workflow described in the chapter?

Training a beginner photo sorter can feel exciting because the model finally starts making predictions on its own. But the real learning begins after training, when you stop asking, “Did it run?” and start asking, “How well does it work, and why?” In this chapter, you will learn how to read model results in a practical way, notice common mistakes, and improve your project without guessing. This is an important step in computer vision because a model is never useful just because it produces output. It becomes useful when you can inspect its behavior, judge whether the results are trustworthy, and make targeted improvements.

For beginners, results are often introduced through simple counts: how many images were classified correctly and how many were classified incorrectly. That is a good starting point because it keeps the feedback concrete. If your photo sorter is deciding whether a picture contains a cat, dog, or bicycle, you can compare the model’s prediction to the true label and count matches and mismatches. This gives you a direct measure of performance. It also supports one of the most important course outcomes: reading model results using simple measures like correct and incorrect predictions.

However, strong engineering judgment means looking beyond a single score. A model can have a high overall accuracy and still fail badly on one category. It can also make errors that reveal problems in your dataset rather than problems in the algorithm. Maybe some photos are blurry, maybe labels were added inconsistently, or maybe the training examples do not represent the kinds of pictures you care about most. When you inspect mistakes carefully, you are not just criticizing the model. You are learning about the entire system: the data, the labels, the categories, and the intended real-world use.

This chapter also connects naturally to object spotting ideas. Even if your current project is a simple sorter that gives one label per image, the same mindset applies when locating items inside a picture. You still need to ask whether the AI found the right object, missed something important, or highlighted the wrong region. Whether you classify the whole image or spot objects inside it, the process of checking outputs and fixing errors follows the same pattern: measure, inspect, improve, and test again.

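A beginner-friendly workflow for understanding results follows that same pattern: measure how the model performs on test images, inspect the mistakes, make one targeted improvement, and test again.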

This process builds confidence. Instead of treating AI output like magic, you learn to check it calmly and systematically. You begin to see patterns in the mistakes. Maybe the model confuses dark-colored dogs with cats in low light. Maybe bicycles are often missed when only a small part of the wheel is visible. These observations point to practical fixes. A good beginner does not chase random changes. A good beginner uses evidence from the results to make small, smart improvements.

By the end of this chapter, you should feel comfortable measuring how well your photo sorter works, spotting common errors in predictions, improving results by refining labels and examples, and building confidence in checking AI output. Those skills turn a basic experiment into an actual computer vision workflow.

Practice note for measuring how well the photo sorter works and for spotting common errors in predictions: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Accuracy is one of the simplest ways to measure a photo sorter. It answers a basic question: out of all the images tested, how many did the model label correctly? If your model looked at 100 test photos and got 82 right, the accuracy is 82%. This is useful because it is easy to understand and easy to compare across different training runs. For a beginner, accuracy is a strong first checkpoint because it turns model behavior into a number you can track.

But accuracy only makes sense if you measure it on images the model did not train on. If you test on the same images used during training, the score can look unrealistically high. The model may have memorized patterns in those exact pictures instead of learning a general rule. That is why a separate validation or test set matters. It gives you a more honest view of how the model will behave on new photos.

It also helps to think about what “good” accuracy means in context. A model with 90% accuracy may sound impressive, but if one class is much easier than the others, that number may hide weak performance. Suppose your dataset has mostly dog photos and very few bicycle photos. The model might score well overall while still missing many bicycles. So accuracy is a helpful summary, but it is not the full story.
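A per-class breakdown makes this hidden weakness visible. The numbers below are invented to match the dog and bicycle scenario: 90 dog photos that are all classified correctly and 10 bicycle photos of which only 2 are. The function name per_class_accuracy is an assumption for this sketch.

```python
from collections import Counter


def per_class_accuracy(true_labels, predicted_labels):
    """Return {class: fraction of that class's images predicted correctly}."""
    totals = Counter(true_labels)
    correct = Counter(t for t, p in zip(true_labels, predicted_labels) if t == p)
    return {label: correct[label] / totals[label] for label in totals}


# Made-up results: 90 dog photos all correct, 10 bicycle photos with only 2 correct.
true_labels = ["dog"] * 90 + ["bicycle"] * 10
predicted = ["dog"] * 90 + ["bicycle"] * 2 + ["dog"] * 8

overall = sum(t == p for t, p in zip(true_labels, predicted)) / len(true_labels)
print(overall)
print(per_class_accuracy(true_labels, predicted))
```

Here the overall accuracy is 92 out of 100, yet only 2 of the 10 bicycles are found. Reporting both numbers side by side keeps a strong majority class from hiding a weak minority class.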

In practice, beginners should record accuracy after each training attempt and keep notes about the dataset used. If accuracy changes, ask why. Did you add cleaner examples? Did you fix mislabeled images? Did you simplify confusing categories? This turns the score into a tool for learning, not just a number to celebrate or fear.

Once you know the overall accuracy, the next step is to inspect individual predictions. Every result falls into one of three practical groups: clearly correct answers, clearly wrong answers, and edge cases. Clearly correct answers are the easiest to understand. The image is obvious, the label is clear, and the model gets it right. These examples tell you the model has learned at least some useful patterns.

Clearly wrong answers are also valuable. If a photo of a bicycle is predicted as a dog, something is off. Maybe the image is unusual, maybe the object is tiny, or maybe the training set did not include enough similar bicycles. Wrong answers are not just failures. They are clues. They show exactly where the model needs help.

Edge cases are especially important in computer vision. These are images where even a human might pause. A cat hidden in shadow, a toy dog that looks real, or a bicycle partly covered by another object may all create uncertainty. In object spotting, edge cases can appear when only part of an item is visible or when the background looks similar to the object itself. These examples matter because real-world images are often messy. If your model only works on clean, centered, bright photos, it may struggle in practical use.

A strong review habit is to create folders or notes for these three groups and inspect a sample from each after every training run. This builds confidence because you stop treating all errors the same way. Some are simple mistakes, some reveal label problems, and some are borderline examples where even the task definition may need improvement.

Models do not get confused for mysterious reasons. Usually, the confusion comes from patterns in the data, the labels, or the image itself. One common cause is visual similarity between classes. Cats and small dogs may share fur textures, face shapes, or indoor backgrounds. If the categories overlap visually, the model needs stronger and more varied examples to learn the difference.

Another common cause is poor image quality. Blurry, dark, low-resolution, or cropped images remove useful details. A model trained mostly on clear photos may struggle when a test image is noisy or badly lit. In object spotting, this problem gets worse when the object is small relative to the full image. The model may focus on the background instead of the item you care about.

Label quality is another major issue. If some bicycle images are labeled correctly and others are accidentally labeled as motorcycles or toys, the model receives mixed signals. It cannot learn a clean rule from inconsistent labels. Beginners often assume the model is weak when the real problem is that the data is teaching conflicting lessons.

Category design also matters. If your labels are too broad or too narrow, the task becomes harder than it needs to be. For example, mixing “pets” and “dogs” as separate categories can create confusion because one label includes the other. The model is then asked to separate classes that are not clearly defined. Good engineering judgment means checking whether the classes make sense before changing training settings.

When you review mistakes, try to name the likely reason in plain language: similar appearance, bad lighting, poor crop, inconsistent label, rare example, or confusing class definition. That habit helps you choose a fix based on evidence instead of frustration.
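Once each mistake has a plain-language reason attached, a simple tally shows which problem to tackle first. The review notes below are hypothetical, as is the function name rank_reasons; the reasons themselves come from a person inspecting each wrong prediction.

```python
from collections import Counter

# Hypothetical review notes: (file name, likely reason for the mistake).
review_notes = [
    ("dog_014.jpg", "bad lighting"),
    ("dog_031.jpg", "bad lighting"),
    ("bike_007.jpg", "poor crop"),
    ("cat_022.jpg", "inconsistent label"),
    ("dog_045.jpg", "bad lighting"),
]


def rank_reasons(notes):
    """Return (reason, count) pairs, most frequent first."""
    return Counter(reason for _, reason in notes).most_common()


for reason, count in rank_reasons(review_notes):
    print(f"{reason}: {count}")
```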

When results are disappointing, beginners often want to change everything at once: more training, different settings, new software, or a more advanced model. But one of the most practical lessons in computer vision is that better data usually helps more than random tuning. If the model is learning from weak examples, no amount of guessing will reliably solve the problem.

Start by checking labels carefully. Look for images placed in the wrong folder, duplicate files, unclear class boundaries, or examples that do not really belong to any category. Fixing labels may feel boring, but it is one of the highest-value improvements you can make. A clean dataset teaches the model more clearly.

Next, look for missing variety. If all your cat images are indoor close-ups and all your dog images are outdoor full-body shots, the model may rely on background clues instead of the animals themselves. Then it might fail on an outdoor cat or an indoor dog. To improve this, add examples with different lighting, positions, backgrounds, sizes, and camera angles. The goal is not just more images. The goal is better coverage of realistic variation.

You should also refine the examples that represent hard cases. If bicycles are often misclassified when partly hidden, include more partial-view bicycle photos. If object spotting misses small items, add more examples where the object appears at different scales and locations. Refining labels and examples in this way improves results more reliably than hoping the model somehow corrects itself.

A practical rule is to change the dataset in direct response to observed mistakes. If the model fails on shadows, add shadow examples. If it fails on side views, add side views. Data improvement works best when it is targeted, not random.

After you identify likely problems, make small changes and test again. This sounds simple, but it reflects an important engineering habit. If you change ten things at once, you will not know which change helped, which one hurt, and which one did nothing. Small, controlled edits make the improvement process understandable.

For example, you might first fix obvious label mistakes and retrain. Then compare the new results to the previous version. Did overall accuracy rise? Did the confusion between cats and dogs decrease? After that, you might add 20 more difficult bicycle images and test again. By moving step by step, you build a clear record of what actually improves performance.

Keep a simple experiment log. Write down the date, what changed, and the new results. Include notes like “added low-light dog images” or “removed duplicate cat photos.” This makes your project easier to manage and helps you avoid repeating unhelpful changes. Even small beginner projects benefit from this level of discipline.
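A notebook or spreadsheet is enough for this log. If you prefer a file you can update from a script, the sketch below shows one possible shape. The column names, the helper names `log_experiment` and `read_log`, and the accuracy numbers are all invented for illustration.

```python
import csv
import tempfile
from datetime import date
from pathlib import Path

FIELDS = ["date", "change", "accuracy"]


def log_experiment(log_path, change, accuracy, when=None):
    """Append one row to a CSV log, writing the header on first use."""
    log_path = Path(log_path)
    is_new = not log_path.exists()
    with log_path.open("a", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        if is_new:
            writer.writeheader()
        writer.writerow({
            "date": (when or date.today()).isoformat(),
            "change": change,
            "accuracy": accuracy,
        })


def read_log(log_path):
    """Read the log back as a list of dictionaries (all values are text)."""
    with Path(log_path).open(newline="") as f:
        return list(csv.DictReader(f))


# Demo in a temporary folder with made-up results.
with tempfile.TemporaryDirectory() as tmp:
    log_file = Path(tmp) / "experiments.csv"
    log_experiment(log_file, "removed duplicate cat photos", 0.81)
    log_experiment(log_file, "added low-light dog images", 0.84)
    rows = read_log(log_file)

for row in rows:
    print(row["date"], row["change"], row["accuracy"])
```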

Testing again should include more than the final score. Revisit the wrong predictions from the earlier run and see whether those same types of mistakes still happen. Sometimes accuracy barely changes, but the errors become more reasonable and useful. For example, a model may stop making wild mistakes and start only missing difficult edge cases. That is still progress.
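One simple way to revisit earlier mistakes is to compare the list of wrongly predicted images from two runs. The following is a minimal sketch, assuming you have written down the file names of the wrong predictions after each run. The function name `compare_errors` and the file names are hypothetical.

```python
def compare_errors(old_errors, new_errors):
    """Sort mistakes into fixed, still wrong, and newly introduced."""
    old_errors, new_errors = set(old_errors), set(new_errors)
    return {
        "fixed": sorted(old_errors - new_errors),
        "still_wrong": sorted(old_errors & new_errors),
        "new_mistakes": sorted(new_errors - old_errors),
    }


# Made-up file names of wrongly predicted images from two runs.
run_1_errors = ["cat_07.jpg", "dog_12.jpg", "bike_03.jpg"]
run_2_errors = ["bike_03.jpg", "dog_21.jpg"]

report = compare_errors(run_1_errors, run_2_errors)
for group, names in report.items():
    print(group, names)
```

The "new_mistakes" group matters as much as the "fixed" group: a change that repairs two errors but introduces a worse one is not a clear improvement.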

This cycle of inspect, adjust, retrain, and retest builds confidence in checking AI output. You are no longer hoping the system works. You are actively guiding it with evidence and learning from each round.

A beginner model does not need to be perfect to be useful. The question is not whether it makes any mistakes. The real question is whether it performs well enough for your goal. If your project is a simple personal photo sorter, a modest number of errors may be acceptable because you can quickly correct them by hand. If the system will support a more important task, you may need stronger reliability before using it.

To decide whether a model is good enough, consider three things. First, look at the overall accuracy and class-by-class behavior. Second, inspect the kinds of errors it makes. Third, think about the impact of those errors. Mixing up similar pets may be annoying but manageable. Missing an important object in a spotting task may be more serious depending on the use case.
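The first two checks can be computed from a plain list of correct labels and predicted labels. The sketch below uses a small invented set of eight test images. The function names are illustrative and do not come from any particular library.

```python
from collections import Counter


def per_class_accuracy(true_labels, predicted_labels):
    """Fraction of correct predictions for each true category."""
    totals = Counter(true_labels)
    correct = Counter(
        t for t, p in zip(true_labels, predicted_labels) if t == p
    )
    return {label: correct[label] / totals[label] for label in totals}


def confusion_pairs(true_labels, predicted_labels):
    """Count each (true label, wrong prediction) pair."""
    return Counter(
        (t, p) for t, p in zip(true_labels, predicted_labels) if t != p
    )


# Made-up results for eight test images.
true_labels = ["cat", "cat", "cat", "dog", "dog", "bicycle", "bicycle", "bicycle"]
predicted = ["cat", "dog", "cat", "dog", "dog", "bicycle", "bicycle", "cat"]

overall = sum(t == p for t, p in zip(true_labels, predicted)) / len(true_labels)
by_class = per_class_accuracy(true_labels, predicted)
mistakes = confusion_pairs(true_labels, predicted)

print("overall:", overall)
print("by class:", by_class)
print("mistakes:", dict(mistakes))
```

In this invented example the overall score looks acceptable, yet the class-by-class view shows that dogs are handled perfectly while cats and bicycles each lose a third of their images. The third check, the impact of each error, still needs your own judgment.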

It is also worth checking consistency. Does the model work well across bright and dark images, close-up and far-away objects, centered and off-center items? A model that performs only on ideal examples is less dependable than one that handles ordinary variation. Good enough usually means stable and understandable, not just occasionally impressive.

Another useful sign is whether further improvements are becoming small. If you have cleaned labels, added better examples, and fixed the biggest error patterns, later gains may slow down. At that point, the model may already be sufficient for a beginner project. You can still improve it later, but it may be better to move on and use what you have learned in a new experiment.

The key lesson is confidence, not perfection. You should be able to look at the model’s output, explain where it works, describe where it struggles, and justify whether it is ready for your current purpose. That is a practical computer vision skill and a strong foundation for more advanced work.

1. What is the best first step after training a beginner photo sorter?

2. Why is overall accuracy alone not enough to judge a model?

3. When inspecting mistakes, what are you learning about?

4. Which action best matches the chapter's beginner-friendly workflow?

5. What mindset does the chapter encourage when checking AI output?

In the earlier part of this course, you learned how to sort whole photos into categories. That kind of model looks at an entire image and answers a question such as, “Is this a cat or a dog?” or “Is this a kitchen or a bedroom?” Object spotting, often called object detection, is the next practical step. Instead of giving only one label for the whole image, the model tries to find individual items inside the picture and say where they are.

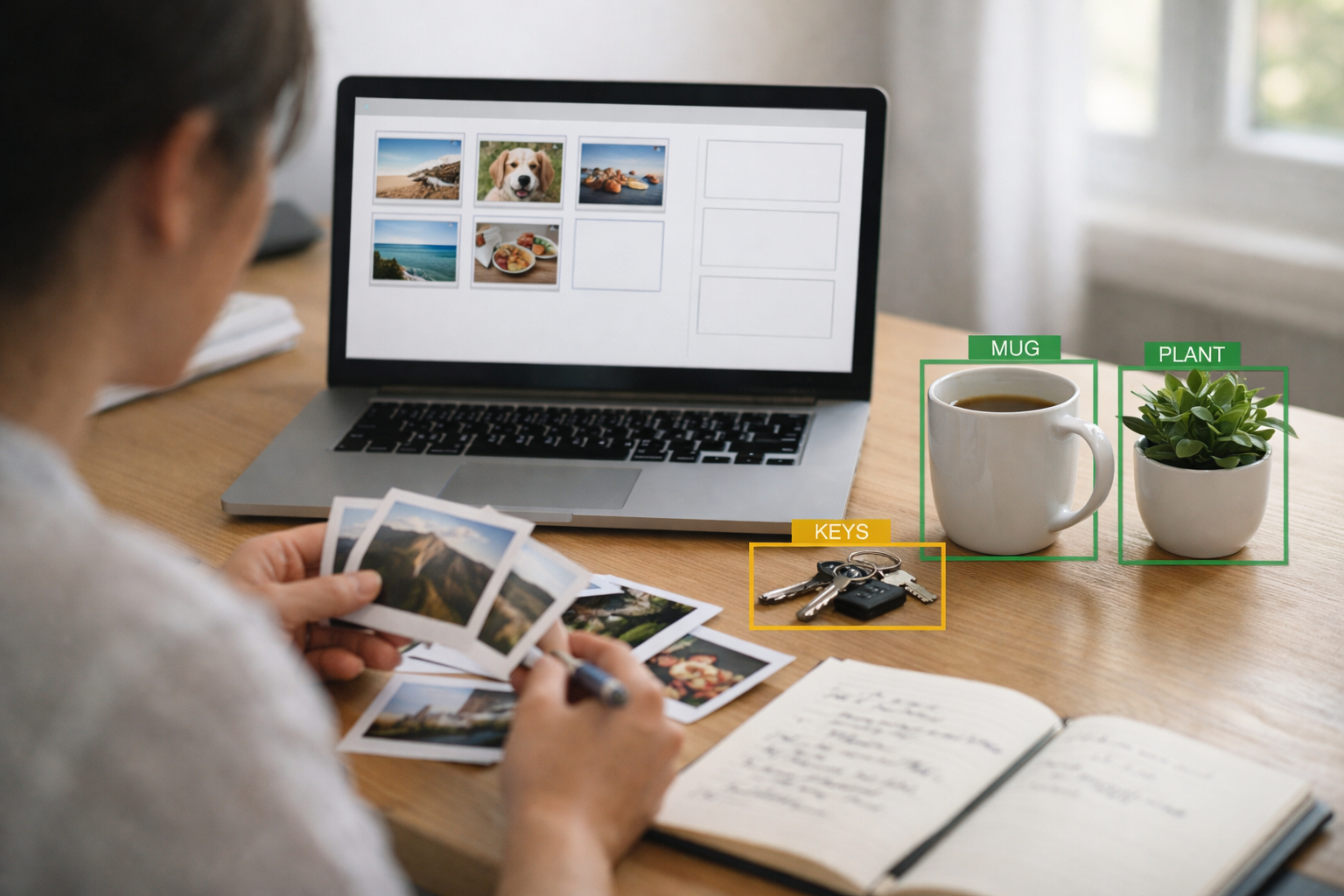

This is an important shift in computer vision thinking. A sorting model treats the image like one complete unit. An object spotting model breaks the scene into meaningful parts. If a desk photo contains a laptop, a mug, a pen, and a notebook, a sorting model might simply say “desk.” A spotting model tries to say, “There is a laptop here, a mug here, a pen here, and a notebook here.” That makes the result more useful for applications that need to locate or count items.

Why does this matter for beginners? Because many simple AI tools become much more practical once location is included. A photo organizer might find all images containing backpacks. A study assistant might count books on a shelf. A workspace tool might check whether safety gear appears on a table. In each case, the system needs more than a single label. It needs categories plus positions.

Object spotting also introduces a new kind of training data. For photo sorting, each image usually gets one label. For object spotting, each image may have many labels, and each label needs a marked area showing where the object appears. These marked areas are usually rectangles called bounding boxes. Preparing this data takes more effort, but it teaches an essential computer vision idea: AI systems often improve when we describe the task more precisely.

As you read this chapter, focus on workflow and engineering judgment, not just vocabulary. Good beginner object spotting depends on clear categories, consistent box marking, realistic expectations, and careful reading of model outputs. You do not need a large industrial system to understand the core ideas. A small demo with everyday objects can teach you nearly everything important about how object detection works in practice.

We will move from the basic meaning of object spotting, to categories and locations, to bounding boxes, to a simple desk-scene example, then to reading confidence scores, and finally to the limits of beginner systems. By the end of the chapter, you should be able to explain how object spotting differs from image sorting and sketch a practical demo idea of your own.

Practice note for each goal in this chapter (learn how object spotting differs from image sorting, identify objects and their positions inside a picture, work with boxes that mark where items appear, and create a simple object spotting demo idea): document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Object detection means teaching a model to answer two questions at once: what is in the image, and where it is. This is the key difference from image classification, or photo sorting. In classification, the model produces one result for the whole picture, such as “fruit bowl” or “street scene.” In object detection, the model may return several results from the same picture, such as “apple,” “banana,” and “bowl,” each with its own position.

A useful beginner way to think about detection is this: classification labels the entire photograph, while detection labels parts of the photograph. That simple distinction changes what the model has to learn. The model must learn not only visual appearance, but also how to separate one object from another in a busy scene. It needs to tell that a pen overlaps a notebook, or that two cups are separate even if they are close together.

In practical terms, object detection outputs a list. Each entry usually includes a category name, a confidence score, and a box location. For example, the result might say: notebook, 92%, box near the center; mug, 88%, box on the left; phone, 73%, box near the bottom edge. This is much richer than a single label and helps you build tools that can count, locate, or highlight items.
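The list described above could be written down as follows. This is a sketch only: the `Detection` class and the `keep_confident` helper are invented names, the pixel coordinates are made up for an assumed 640 by 480 image, and real detection tools each use their own output format.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str          # category name
    confidence: float   # 0.0 to 1.0
    box: tuple          # (left, top, right, bottom) in pixels


# The example results from the text, with invented box positions.
detections = [
    Detection("notebook", 0.92, (220, 150, 420, 330)),  # near the center
    Detection("mug", 0.88, (40, 180, 130, 290)),        # on the left
    Detection("phone", 0.73, (300, 400, 380, 470)),     # near the bottom edge
]


def keep_confident(detections, threshold):
    """Keep only detections at or above the confidence threshold."""
    return [d for d in detections if d.confidence >= threshold]


confident = keep_confident(detections, 0.80)
for d in confident:
    print(f"{d.label}: {d.confidence:.0%} at {d.box}")
```

With a threshold of 0.80, the phone at 73% is dropped. Where to set that threshold is a judgment call: a higher value hides uncertain results, but it can also hide real objects.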

Engineering judgment matters here. A beginner often wants a detector that recognizes everything. That is too broad. A better first project is narrow and well defined. For example, detect only three desk items: mug, notebook, and keyboard. Limiting the categories reduces confusion in the training data and gives you a much better chance of seeing useful results.

A common mistake is to assume object detection is just “harder classification.” It is related, but the data and outputs are different enough that you must plan differently. You need images where objects appear in different positions, sizes, and lighting conditions. You also need consistent labeling. If one image marks a full mug including the handle, while another marks only the cup body, the model learns mixed signals. Clear rules help more than extra complexity.

For a simple demo idea, imagine a study desk assistant that spots school items in a photo before class begins. The goal is not perfection. The goal is a working system that can show boxes around a few common items and give understandable results. That is exactly the right scale for learning.

Once you move from sorting photos to spotting objects, you start working with two pieces of information together: category and location. The category tells you what the item is. The location tells you where it appears in the image. These two parts are equally important. A model that knows there is a laptop somewhere is not enough if your application needs to draw a box around it or count how many are present.

One image can contain multiple objects from the same category and from different categories. For example, a desk photo might include two pens, one notebook, one mug, and one phone. A classification model, which returns one label for the whole image, cannot express all of that. A detection model is designed for exactly this case. It can return several separate findings from a single image, even when the objects overlap or appear at different scales.

When preparing data, think carefully about your category set. Categories should be visually meaningful and easy to distinguish. “Electronics” may be too broad, while “laptop,” “keyboard,” and “phone” are often better because they look different and serve different purposes in the scene. Avoid categories that are vague or inconsistent. If you sometimes label a closed notebook as “book” and sometimes as “notebook,” the model will be confused.

Location is usually represented using box coordinates. These coordinates define a rectangle that surrounds the object. Together with the category, they form one object annotation inside the image. If an image contains five objects, then that single image contributes five object annotations. This makes object spotting datasets richer, but also more sensitive to labeling quality.
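To make this concrete, here is one way a single labeled desk photo with five objects might be written down, together with a basic sanity check on the boxes. The dictionary layout, the file name, the image size, and the `box_problems` helper are assumptions made for illustration. Labeling tools store annotations in their own formats.

```python
from collections import Counter

# One labeled image. File name, image size, and box numbers are invented.
annotation = {
    "image": "desk_001.jpg",
    "width": 640,
    "height": 480,
    "objects": [
        {"label": "pen", "box": (100, 300, 220, 320)},
        {"label": "pen", "box": (110, 330, 230, 350)},
        {"label": "notebook", "box": (220, 150, 420, 330)},
        {"label": "mug", "box": (40, 180, 130, 290)},
        {"label": "phone", "box": (450, 200, 520, 340)},
    ],
}


def box_problems(annotation):
    """Return plain-language problems found in the boxes of one image."""
    problems = []
    for obj in annotation["objects"]:
        left, top, right, bottom = obj["box"]  # pixel edges of the rectangle
        if left >= right or top >= bottom:
            problems.append(f"{obj['label']}: box has no area")
        if (left < 0 or top < 0
                or right > annotation["width"]
                or bottom > annotation["height"]):
            problems.append(f"{obj['label']}: box extends outside the image")
    return problems


counts = Counter(obj["label"] for obj in annotation["objects"])
print(len(annotation["objects"]), "annotations:", dict(counts))
print("problems:", box_problems(annotation))
```

A check like this catches only mechanical mistakes. It cannot tell you whether a box is drawn around the right object, which is why looking at the boxes on the image remains important.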

In a practical workflow, you normally collect images, define your categories, label each object with a box, split data into training and validation sets, train the model, and inspect outputs visually. Visual inspection matters because a detector can seem numerically decent while making obvious mistakes on real images. Looking at predicted boxes often reveals issues faster than reading metrics alone.
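The splitting step in that workflow can be sketched in a few lines. This example assumes an 80/20 split and a fixed random seed so the split is repeatable. Both choices, along with the function name `split_dataset` and the file names, are illustrative rather than required.

```python
import random


def split_dataset(items, validation_fraction=0.2, seed=0):
    """Shuffle a copy of the items and split it into (training, validation)."""
    items = list(items)
    random.Random(seed).shuffle(items)  # fixed seed gives a repeatable split
    n_val = max(1, round(len(items) * validation_fraction))
    return items[n_val:], items[:n_val]


# Ten made-up image names.
image_names = [f"desk_{i:03d}.jpg" for i in range(10)]
train, val = split_dataset(image_names)

print(len(train), "training images")
print(len(val), "validation images")
```

The split is done per image, not per object, so every box drawn on one photo lands on the same side. Keeping the validation images out of training is what makes the later check honest.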

A common beginner mistake is to choose categories based on words rather than appearance. For example, “work item” and “study item” are meaningful to humans, but they may not have clear visual boundaries. Good detection categories should be things the camera can actually see. Thinking in visible shapes and surfaces is one of the most useful mindset shifts in computer vision.

Bounding boxes are the most common way to mark where an object appears in an image. A bounding box is a rectangle drawn around the visible object. It does not need to match the shape perfectly; it simply needs to contain the object clearly and consistently. For a beginner, boxes are a practical compromise. They are much easier to create than detailed outlines, and they are good enough for many useful tasks.

You can describe a box in different ways, but the usual idea is the same: store the left, top, right, and bottom edges, or store the center point plus width and height. The exact format depends on the tool or model you use. What matters most at this stage is understanding that the box is the model’s way of saying, “I believe the object is here.”
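The two descriptions carry the same information, so you can convert between them with simple arithmetic. The sketch below assumes pixel coordinates measured from the top-left corner of the image, which is a common convention but not a universal one. The function names and the example box are invented.

```python
def corners_to_center(left, top, right, bottom):
    """Convert edge coordinates to (center_x, center_y, width, height)."""
    width = right - left
    height = bottom - top
    return (left + width / 2, top + height / 2, width, height)


def center_to_corners(center_x, center_y, width, height):
    """Convert center, width, and height back to (left, top, right, bottom)."""
    return (
        center_x - width / 2,
        center_y - height / 2,
        center_x + width / 2,
        center_y + height / 2,
    )


mug_box = (40, 180, 130, 290)               # left, top, right, bottom
mug_center = corners_to_center(*mug_box)    # center_x, center_y, width, height
round_trip = center_to_corners(*mug_center)

print(mug_center)
print(round_trip)
```

Mixing up the two formats is an easy mistake to make when moving data between tools, so it helps to check which one a tool expects before feeding it boxes.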