AI Robotics & Autonomous Systems — Beginner

Learn how AI robots work and where beginners can start

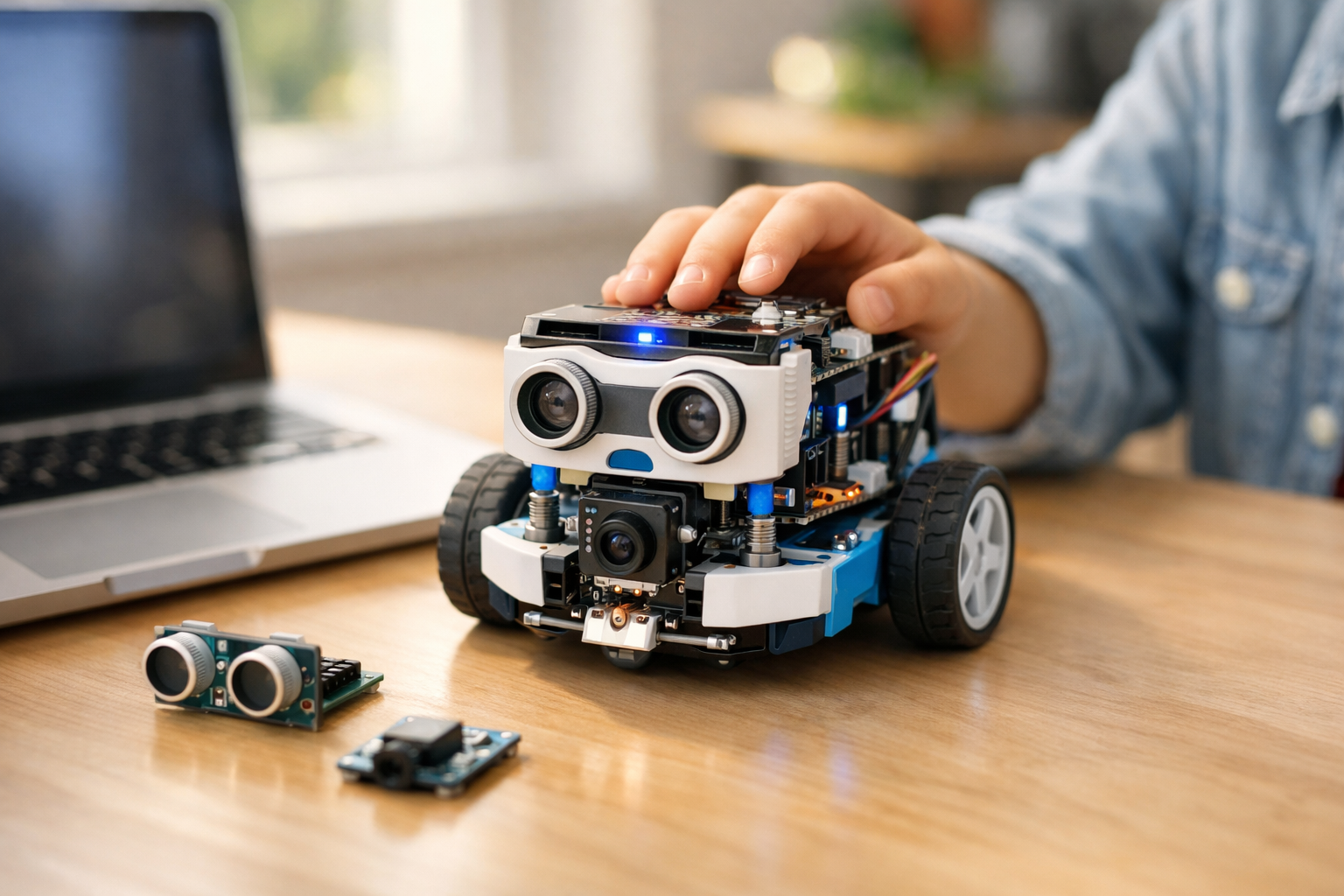

Getting Started with AI Robots for Complete Beginners is a short, book-style course designed for people who have never studied artificial intelligence, robotics, coding, or engineering before. If terms like sensors, computer vision, automation, and autonomous systems sound new or confusing, this course is built for you. It starts at the very beginning and explains each idea in clear, simple language.

Instead of throwing you into advanced math or programming, this course helps you understand the big picture first. You will learn what AI robots are, how they work, what parts they use, and how they make simple decisions. By the end, you will not only know the vocabulary, but also feel confident discussing real-world robotics systems and planning your own beginner-friendly next steps.

Many robotics courses assume you already know coding or electronics. This one does not. It is structured like a short technical book with six chapters, each building naturally on the one before it. You first learn what a robot is, then the physical parts, then how AI helps a robot process information, and finally how robots move, interact, and are used in the real world.

This course gives you a strong beginner foundation in AI robotics. You will understand the difference between a basic automated machine and an AI-powered robot. You will discover how sensors help robots notice the world, how actuators help them move, and how onboard controllers help connect inputs to actions. You will also explore how data, pattern recognition, and training help robots make better decisions.

As the chapters progress, you will learn how robots navigate spaces, avoid obstacles, and interact with people. You will see how AI robots are used in homes, warehouses, hospitals, farms, and public services. The final chapter introduces safety, ethics, and responsible design so you can think about robots not only as tools, but also as systems that affect people and society.

This course is ideal for curious beginners, students, career changers, business professionals, and anyone who wants to understand the growing world of AI robotics without feeling overwhelmed. If you have seen delivery robots, robotic vacuums, warehouse machines, or healthcare robots and wondered how they work, this course will give you the answers in an approachable format.

It is also a good starting point if you plan to explore more technical topics later. Once you understand the foundations, future learning in robotics, automation, computer vision, or machine learning becomes much easier. If you are ready to begin, register for free and take your first step.

AI robots are becoming more common in daily life and professional environments. They clean floors, move products, inspect equipment, help doctors, monitor crops, and support public safety. Understanding the basics of these systems is becoming a useful skill, even for people who do not want to become engineers. This course helps you build that awareness in a simple and practical way.

Because the course is short and focused, you can complete it quickly while still gaining a meaningful understanding of the field. It is a strong starting point for learners who want confidence first, before moving on to hands-on tools or technical projects. You can also browse all courses if you want to continue learning after this introduction.

By the end of this course, you will be able to explain how AI robots sense, think, and act. You will understand the role of sensors, actuators, controllers, data, and simple learning systems. You will also know the common uses, limits, and safety concerns of autonomous machines. Most importantly, you will leave with a clear beginner roadmap and the confidence to keep learning.

Robotics Educator and AI Systems Specialist

Sofia Chen teaches beginner-friendly robotics and artificial intelligence courses for new learners and career changers. She has helped students understand complex technical ideas using simple examples, hands-on thinking, and practical learning paths.

When people hear the word robot, they often imagine a human-shaped machine walking around and talking. In real life, most robots do not look like movie characters. Many are simple, practical machines built to sense what is happening around them, make some kind of decision, and then do a physical action. That action might be moving a wheel, lifting a box, vacuuming a floor, or delivering supplies in a hospital. A useful beginner definition is this: a robot is a machine that can interact with the physical world using sensors and actuators, and an AI robot is a robot that uses artificial intelligence to improve how it chooses what to do.

This chapter gives you a beginner-friendly mental model of how robots work. You will learn the difference between a robot and a regular machine, what makes a robot seem intelligent, and why AI matters. You will also see where AI robots appear in everyday life, from homes and warehouses to farms, hospitals, and public services. Most importantly, you will build a simple engineering view of robotics: robots sense, think, and act. If you remember that cycle, many later topics will feel much easier.

A regular machine usually does one job in a fixed way. A blender spins blades. A lamp produces light. A washing machine runs a program, but it does not usually move through space or adapt very much to a changing environment. A robot is different because it connects information from the world to physical action. It has parts that detect, parts that decide, and parts that move. Even a small robot vacuum shows this pattern clearly: sensors detect walls and dirt, software chooses a path, and motors drive the wheels and brushes.

One common beginner mistake is to think that every robot must be highly intelligent. That is not true. Some robots simply follow rules. Others use AI to recognize patterns or make better choices under uncertainty. Another common mistake is to think AI alone makes something a robot. It does not. A chatbot may use AI, but unless it can sense and act in the physical world through robotic hardware, it is not a robot. In the same way, a factory arm may be a robot even if it follows fixed instructions and does not use modern AI at all.

Engineering judgment begins with asking practical questions. What must the robot notice? What decisions must it make? What physical action must it take? How much uncertainty is in the environment? A robot folding laundry in a messy home needs more sensing and smarter decision-making than a robot arm placing identical parts on a factory line. This is why not all robots need advanced AI. Good design is not about adding intelligence everywhere. It is about matching the hardware and software to the real problem.

By the end of this chapter, you should be able to explain in simple language what an AI robot is, identify the main parts of a basic robot, describe how robots sense, decide, and act, and compare rule-based robots with AI-powered robots. Those ideas form the foundation for everything else in AI robotics.

Practice note for two of this chapter's objectives (recognizing the difference between a robot and a regular machine, and understanding what makes a robot "intelligent"): document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

A robot is a machine that can detect something about the world and then do something physical in response. That definition is simple, but it is powerful. It helps us separate robots from ordinary machines. A microwave heats food, but it does not usually move itself through the world or physically respond to many changing conditions. A robot vacuum, by contrast, senses obstacles, changes direction, and keeps cleaning. That ability to connect information to action is what makes it a robot.

In plain language, a robot usually has three basic ingredients: it can sense, it can decide, and it can act. Sensors are the robot's way of noticing what is around it. These might be cameras, touch sensors, distance sensors, microphones, GPS receivers, or simple switches. The decision part is usually software running on a computer chip. It interprets the sensor data and selects what should happen next. The action part is handled by actuators such as motors, wheels, robotic arms, pumps, or grippers.

Beginners often assume a robot must look human. In practice, robots come in many forms. A warehouse robot may look like a low platform with wheels. A farm robot may look like a small vehicle with cameras and spraying tools. A surgical robot may have highly precise arms controlled by specialists. Form follows function. Engineers care less about appearance and more about whether the machine can do the physical task reliably and safely.

A useful test is this: if the machine only follows a fixed internal process and does not really interact with the outside physical world, it may be a machine but not a robot. If it monitors the world and changes its physical behavior based on what it senses, it is likely a robot. This distinction is not always perfect, but it is a good beginner rule. It also introduces the practical mindset of robotics: the world is messy, so robots must be built to handle change, uncertainty, and unexpected situations.

Artificial intelligence, or AI, means using computer methods that help machines perform tasks that normally seem to require human judgment. For beginners, the easiest way to think about AI is pattern recognition and decision support. AI helps a robot notice meaningful patterns in sensor data and choose actions more flexibly than a rigid step-by-step script. For example, instead of following only one fixed route, a robot may learn to avoid crowded areas or identify objects it has not seen in exactly the same position before.

AI does not mean magic, self-awareness, or perfect understanding. In robotics, AI is usually practical and limited. A delivery robot may use AI to recognize sidewalks, detect pedestrians, and estimate safe paths. A robot arm may use AI vision to identify randomly placed parts in a bin. A home robot may use AI to recognize a table leg, pet bowl, or staircase. In each case, AI improves the robot's ability to interpret messy real-world data.

One of the most helpful beginner comparisons is this: traditional programming says, "If this happens, do that." AI often says, "Based on patterns from many examples, this is probably what I am seeing or what I should do." That is why AI is useful when the world is too variable to describe with simple rules alone. Faces, spoken language, road scenes, cluttered rooms, and human activity are all full of variation.
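To make that comparison concrete, here is a minimal Python sketch. It is only an illustration: the measurements, the labels, and the function names (`fixed_rule`, `train_centroids`, `classify`) are invented for this example, and real robots use far richer data and models. The first function is a hand-written rule. The other two compute an "average example" for each label and then pick the label whose average is closest, which is one very simple form of deciding from patterns in examples.

```python
import math

def fixed_rule(width_cm, height_cm):
    """Traditional programming: one hand-written if-then rule."""
    return "cup" if height_cm > width_cm else "bowl"

def train_centroids(examples):
    """Learn from examples: compute the average (width, height) for each label.

    examples is a list of ((width_cm, height_cm), label) pairs.
    """
    sums = {}
    for (width, height), label in examples:
        total_w, total_h, count = sums.get(label, (0.0, 0.0, 0))
        sums[label] = (total_w + width, total_h + height, count + 1)
    return {label: (tw / n, th / n) for label, (tw, th, n) in sums.items()}

def classify(centroids, point):
    """Pick the label whose average example is closest to the new point."""
    return min(centroids, key=lambda label: math.dist(centroids[label], point))

# Made-up training examples (width_cm, height_cm); not real measurements.
EXAMPLES = [
    ((8, 10), "cup"), ((7, 12), "cup"), ((9, 11), "cup"),
    ((15, 6), "bowl"), ((16, 7), "bowl"), ((14, 5), "bowl"),
]

centroids = train_centroids(EXAMPLES)
wide_mug = (11, 10)  # slightly wider than it is tall
print(fixed_rule(*wide_mug))          # the fixed rule's answer
print(classify(centroids, wide_mug))  # the answer based on examples
```

For the wide mug, the fixed rule answers "bowl" because the height is not greater than the width, while the example-based version answers "cup" because the mug sits closer to the cup examples. Neither approach is always right. The point is only that the second answer comes from examples rather than from a rule someone wrote by hand.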

Still, engineering judgment matters. AI is not automatically better than simple logic. If a robot only needs to press a part into place in the exact same location every time, fixed programming may be cheaper, safer, and easier to maintain. A common beginner mistake is to think that adding AI automatically makes a system better. In reality, good robotics design uses AI where uncertainty is high and uses ordinary control rules where the task is stable and predictable.

Automation means a machine performs a task with limited or no direct human control. Intelligence means the machine can interpret information and adapt its behavior in a useful way. These ideas overlap, but they are not the same. Many systems are automated without being intelligent. A timed sprinkler system is automated. It runs on schedule. But if it cannot detect rain, changing soil conditions, or plant health, it is not showing much intelligence.

The same idea appears in robotics. A rule-based robot follows predefined instructions such as "if sensor A detects an obstacle, turn left," "if the battery is low, return to the charger," and "if the object is in position, close the gripper." This can be very effective. In fact, many industrial robots are successful precisely because their environments are carefully controlled. But a rule-based robot can struggle when the world changes in ways the designer did not predict.
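The rules in this section can be written as a few lines of Python. This is a sketch, not how any particular robot is programmed: the function name, the action names, and especially the order in which the rules are checked are assumptions made for illustration.

```python
def choose_action(obstacle_detected: bool, battery_low: bool,
                  object_in_position: bool) -> str:
    """Pick one action using fixed if-then rules.

    The priority order (battery first, then obstacle, then gripper) is an
    assumption for this example; a real designer would choose it deliberately.
    """
    if battery_low:
        return "return_to_charger"
    if obstacle_detected:
        return "turn_left"
    if object_in_position:
        return "close_gripper"
    return "continue"

print(choose_action(obstacle_detected=True, battery_low=False,
                    object_in_position=False))
```

Notice what is missing: the function has no answer for a situation the designer never listed, such as a wet floor or a person asking for help. It simply returns "continue". That is the weakness of a purely rule-based robot described above.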

An AI-powered robot goes further by handling uncertainty better. It may classify objects from camera images, predict where a moving person will be, or improve navigation from past experience. It is still a machine with limits, but it can make choices based on learned patterns rather than only fixed if-then rules. This is what often makes a robot seem "intelligent" to people.

A practical way to compare the two is to ask how they respond to novelty. If every object, path, or condition is known in advance, automation may be enough. If the robot must work in changing homes, crowded streets, busy warehouses, or variable natural environments, intelligence becomes more valuable. The common mistake is treating automation and AI as opposites. In real systems, they often work together. A smart robot may use AI for perception, ordinary planning for workflow, and strict safety rules for emergency stops.

AI robots are already part of daily life, even if people do not always notice them. One familiar example is the robot vacuum. Basic models follow simple patterns, but more advanced ones map rooms, recognize furniture, avoid stairs, and detect obstacles such as shoes or pet waste. That combination of sensing, decision-making, and movement makes them a great beginner example of an AI robot.

In business settings, warehouse robots are common. Some move shelves, bring products to human workers, or transport bins between stations. Others use cameras and AI vision to identify packages, scan barcodes, and assist sorting. Here, AI helps with navigation, object recognition, and traffic management, especially in busy environments where paths and workloads change often.

Public services also use AI robotics. Hospitals may use mobile robots to deliver medicine, linens, or meals. Sidewalk delivery robots bring groceries or food across short distances. Some cities test inspection robots for infrastructure, such as sewer pipes, roads, or utility tunnels. Agriculture uses robots to monitor crops, detect weeds, and apply treatment more precisely. In each case, the robot is valuable because it connects sensing with physical action in an environment that is not perfectly controlled.

When looking at examples, ask practical questions. What is the robot sensing? What decisions is it making? What action is it taking? A drone inspecting a bridge may use cameras and depth sensors, AI to spot damage patterns, and motors to fly to the right position. A restaurant robot may use mapping sensors, route planning, and motors to deliver dishes. This way of thinking helps you move beyond the exciting surface appearance and understand the actual system underneath.

Robots matter because they solve practical problems. Businesses use robots to improve speed, consistency, safety, and cost control. If a task is repetitive, physically demanding, time-sensitive, or dangerous, a robot may help. In a warehouse, robots reduce walking time and speed up order handling. In manufacturing, robotic arms repeat precise movements with less variation. In farming, robots can work long hours collecting data or applying treatments exactly where needed.

Communities and public services use robots for similar reasons. Hospitals use delivery robots to free staff for more human-centered work. Inspection robots can enter spaces that are risky, dirty, or hard to reach. Disaster response robots may search unstable environments before rescue teams enter. In these cases, the value is not just efficiency. It is also safety, service quality, and better use of human skills.

That said, good engineering judgment requires balance. A robot is not always the best answer. Robots bring costs for maintenance, training, software updates, charging, repairs, and integration with existing workflows. A common mistake is focusing only on what the robot can do in a demonstration, not what it can do reliably every day. A successful robot must fit the real environment, handle edge cases, and fail safely when something unexpected happens.

For beginners, the key practical outcome is this: robots are adopted when they create enough value to justify their complexity. That value might be lower labor strain, improved accuracy, continuous operation, faster service, or safer working conditions. AI increases that value when the robot must deal with unpredictable people, objects, layouts, or timing. This is why AI robots matter economically and socially, not just technically.

The most useful beginner mental model in robotics is the sense-think-act cycle. First, the robot senses the world through cameras, microphones, touch sensors, wheel encoders, GPS, lidar, temperature probes, or other devices. Second, it thinks by processing that information in software. This may include simple rules, mapping, path planning, object detection, or AI models that recognize patterns. Third, it acts through motors, arms, wheels, grippers, lights, or speakers. Then the cycle repeats for as long as the robot is running.

Imagine a robot delivering supplies in a hospital. It senses the hallway and people nearby. It thinks about where it is, whether the path is clear, and which route is safest. Then it acts by moving forward, slowing down, stopping, or turning. If a person steps into its path, the next sensing step updates the information, and the robot chooses a new action. This continuous loop is how robots behave in dynamic environments.
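The hallway example can be sketched as a tiny simulation. Everything here is simplified and hypothetical: the robot moves along a straight line, the "sensor" is perfect, and the function names, distances, and step sizes are invented for illustration.

```python
def sense(position_m, obstacle_position_m):
    """Sense: report the distance to the obstacle ahead, in meters."""
    return obstacle_position_m - position_m

def think(distance_ahead_m, stop_distance_m=1.0, slow_distance_m=3.0):
    """Think: choose an action from the latest sensor reading."""
    if distance_ahead_m <= stop_distance_m:
        return "stop"
    if distance_ahead_m <= slow_distance_m:
        return "slow"
    return "forward"

def act(position_m, action):
    """Act: move by an amount that depends on the chosen action."""
    step_m = {"forward": 1.0, "slow": 0.5, "stop": 0.0}[action]
    return position_m + step_m

def run(cycles, obstacle_position_m):
    """Repeat the sense-think-act cycle and record what happened."""
    position_m = 0.0
    log = []
    for _ in range(cycles):
        distance = sense(position_m, obstacle_position_m)
        action = think(distance)
        position_m = act(position_m, action)
        log.append((action, position_m))
    return log

for action, position in run(cycles=8, obstacle_position_m=5.0):
    print(action, position)
```

With a person standing 5 meters down the hallway, the robot moves forward at full speed, slows down once the person is within 3 meters, and stops when the gap shrinks to 1 meter. Each decision uses only the most recent reading, which is why the loop has to keep repeating.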

Each part of the cycle can fail in different ways. If sensing is poor, the robot may not notice an obstacle. If thinking is weak, it may misclassify what it sees or choose a bad route. If acting is inaccurate, the motors may not move as intended. Beginners often focus only on the AI decision part, but real robot performance depends on all three. A brilliant AI model cannot fix a broken wheel or a badly placed camera.

From an engineering point of view, this model helps you diagnose problems and design better systems. Ask: what information does the robot need, how quickly must it decide, and what exact action mechanism will carry out the decision? Once you can answer those questions, you are already thinking like a robotics practitioner. This chapter's core lesson is simple but foundational: AI robots matter because they combine physical action with flexible decision-making in the real world.

1. Which choice best describes the difference between a robot and a regular machine?

2. According to the chapter, what makes a robot an AI robot?

3. Which example from the chapter is a robot even if it does not use modern AI?

4. What is the simple mental model of how robots work introduced in this chapter?

5. Why might a robot folding laundry in a messy home need more advanced AI than a robot arm on a factory line?

When beginners first hear the word robot, they often imagine a human-shaped machine walking and talking. In practice, most robots are much simpler. A robot is any machine that can sense something about its surroundings, make a decision based on that information or on pre-set instructions, and then do something in the physical world. That means a robot needs a body, a source of power, parts that detect the world, parts that create movement, and some kind of controller that coordinates everything. In this chapter, you will learn the main physical parts of a robot and see how those parts connect to behavior.

A useful way to think about robotics is as a loop: sense, decide, act. Sensors collect information. A controller processes that information. Motors or other actuators create movement or some physical change. Then the robot senses again and adjusts. Even a simple vacuum robot follows this loop many times every second. If it detects a wall, it changes direction. If the battery gets low, it looks for its charging dock. If dirt is detected, it may increase suction. The robot seems intelligent because its parts are working together in a coordinated way.

For complete beginners, it helps to compare a robot to a human body. The frame is like a skeleton. The battery is like stored energy from food. Sensors are like eyes, ears, and skin. Actuators are like muscles. The controller is like a very small brain that follows instructions and, in AI-powered robots, may also use learned patterns to make better choices. This comparison is not perfect, but it makes the engineering easier to understand in everyday language.

As you read, notice that each part of a robot has limits. A robot can only react to what its sensors can detect. It can only move in ways its actuators allow. It can only compute what its controller can handle with the available power. Good robot design is not about adding every possible part. It is about choosing the right parts for the job. Engineering judgment means asking practical questions: Does this robot need wheels or a robotic arm? Does it need a camera, or is a distance sensor enough? Does it need AI to recognize patterns, or will simple rules work reliably?

Beginners often make the mistake of thinking that AI alone makes a robot powerful. In reality, weak hardware limits even the smartest software. A robot with poor sensors cannot gather good information. A robot with underpowered motors cannot move effectively. A robot with a tiny battery may stop before it finishes its task. In robotics, physical design and intelligent behavior are tightly linked. By the end of this chapter, you should be able to name the main parts of a basic robot, explain what sensors and actuators do, and describe how hardware choices shape what a robot can actually do in the real world.

In the sections that follow, we will break the robot into its major building blocks. You will see not only what each part does, but also why that part matters in practice. This is the foundation for understanding more advanced topics later, including how AI helps robots adapt, recognize patterns, and make better decisions than a purely rule-based machine.

Practice note for two of this chapter's objectives (naming the main physical parts of a robot, and understanding what sensors do): document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Every robot starts with a physical body. This body is often called the frame or chassis. It holds the robot together and gives other parts a place to attach. Wheels, batteries, circuit boards, sensors, and motors all need stable mounting points. If the frame is weak, badly balanced, or too heavy, the robot may shake, tip over, move inefficiently, or break under stress. This is why the body of a robot is not just decoration. It is a practical engineering choice that affects performance.

Frames are made from materials such as plastic, aluminum, steel, wood, or composite materials. A classroom robot might use lightweight plastic because it is cheap and easy to build. An industrial robot arm may use metal because it needs strength and precision. The best material depends on the robot's job. A delivery robot that drives over sidewalks needs a different frame from a toy robot on a smooth floor. Beginners often focus on electronics first, but mechanical stability matters just as much.

Power is the next basic requirement. Most mobile robots use batteries. Some larger robots use wall power, replaceable battery packs, or even hybrid systems. The power source must match the robot's needs. Motors often require more energy than the controller or sensors. If the battery is too small, the robot may work for only a short time. If it is too heavy, the robot may become slow or unstable. That trade-off is an example of engineering judgment: more battery gives longer operation, but it also adds weight and cost.
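A rough way to see the battery trade-off is to divide battery capacity by the total current the robot draws. The sketch below does that arithmetic. The component names and numbers are hypothetical, and the estimate is idealized: real run time is usually shorter because of conversion losses, battery aging, and motors drawing more current under load.

```python
def estimate_runtime_hours(capacity_mah, loads_ma):
    """Idealized run time: battery capacity divided by total current draw.

    capacity_mah: battery capacity in milliamp-hours.
    loads_ma: dict of component name -> average current in milliamps.
    """
    total_ma = sum(loads_ma.values())
    if total_ma <= 0:
        raise ValueError("total current draw must be positive")
    return capacity_mah / total_ma

# Hypothetical small robot; the motors dominate the power budget.
loads = {"motors": 800, "controller": 150, "sensors": 50}

print(estimate_runtime_hours(2000, loads))  # smaller battery
print(estimate_runtime_hours(4000, loads))  # battery with twice the capacity
```

Doubling the capacity doubles the estimate only if nothing else changes. In practice the bigger battery is heavier, so the motors would draw more current, and the real gain would be smaller than the arithmetic suggests.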

Another practical concern is power distribution. Not all parts need the same voltage or current. A sensor may need a small, stable supply, while a motor may draw a sudden burst of current when starting. If power is poorly managed, the robot may reset unexpectedly or sensors may produce unreliable readings. A common beginner mistake is connecting everything to one supply without checking the requirements of each part. Good designs separate sensitive electronics from noisy motor power when needed.

When you look at a robot body, ask simple questions. Where is the weight concentrated? Is the center of gravity low enough to prevent tipping? Is there space for wires, cooling, and maintenance? Can the battery be replaced easily? Can the frame protect the electronics from bumps or dust? These details shape how reliable the robot will be in everyday use. A robot that looks simple from the outside may reflect many careful decisions about structure and power inside.
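The question about weight can be answered with simple arithmetic. The height of the center of gravity is the mass-weighted average of where each part sits. The sketch below uses hypothetical parts, masses, and heights, and it only looks at height. Whether a robot actually tips also depends on its wheelbase, speed, and the slope it drives on.

```python
def center_of_gravity_height_cm(parts):
    """Mass-weighted average height of the parts.

    parts: list of (mass_kg, height_cm) pairs, one per component.
    """
    total_mass = sum(mass for mass, _ in parts)
    if total_mass <= 0:
        raise ValueError("total mass must be positive")
    return sum(mass * height for mass, height in parts) / total_mass

# Hypothetical robot: (mass in kg, height of the part above the floor in cm)
battery_low = [(0.5, 2.0), (0.3, 5.0), (0.2, 20.0)]   # battery near the floor
battery_high = [(0.5, 15.0), (0.3, 5.0), (0.2, 20.0)]  # same battery mounted higher

print(center_of_gravity_height_cm(battery_low))
print(center_of_gravity_height_cm(battery_high))
```

Moving only the battery, the heaviest part in this example, raises the center of gravity noticeably. That is why designers often mount batteries as low as they can.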

Sensors are the parts that allow a robot to notice what is happening inside and outside its body. Without sensors, a robot is effectively blind and unaware. It may still move, but it cannot adjust to changing conditions. Sensors turn real-world information into signals the controller can use. This is one of the most important ideas in robotics: a robot can only react to information it is able to collect.

There are many kinds of sensors. Distance sensors help a robot estimate how close it is to a wall or object. Light sensors detect brightness or color. Temperature sensors measure heat. Gyroscopes and accelerometers help detect turning, tilt, or movement. Wheel encoders measure how far wheels have rotated. Battery sensors report remaining power. In many robots, internal sensing is just as important as external sensing. A robot needs to know both what is around it and what its own body is doing.

The job of a sensor is not to think. It only measures. The controller must interpret the measurement. For example, a distance sensor may report that an obstacle is 20 centimeters away. A rule-based robot might follow a simple instruction: if the obstacle is closer than 25 centimeters, stop and turn. An AI-powered robot could combine that reading with camera data, past experience, and a map to choose a better path. The sensor provides the raw information, but the behavior depends on what the robot does with it.

In practice, sensors are imperfect. Readings may be noisy, delayed, or affected by lighting, weather, dust, shiny surfaces, or vibrations. This is why beginners should not assume sensor values are always correct. A common mistake is trusting one sensor too much. Better robots often use multiple sensors together. For example, a mobile robot may use wheel encoders, a gyroscope, and a distance sensor so that one weak signal can be checked against another. This improves reliability.
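Two simple habits follow from this: smooth out noisy readings before acting on them, and compare one sensor against another. The sketch below shows one possible way to do each. The function names, the sample readings, and the 5 cm tolerance are assumptions for illustration, and a median is only one of many filtering choices.

```python
from statistics import median

def filtered_distance_cm(recent_readings_cm):
    """Use the median of recent readings so one bad spike does not dominate."""
    return median(recent_readings_cm)

def sensors_agree(encoder_travel_cm, range_change_cm, tolerance_cm=5.0):
    """Cross-check two sensors while driving straight toward a wall.

    encoder_travel_cm: how far the wheel encoders say the robot moved.
    range_change_cm: how much the distance sensor says the wall got closer.
    """
    return abs(encoder_travel_cm - range_change_cm) <= tolerance_cm

# Hypothetical readings: one value (95) is a glitch from a shiny surface.
readings = [20, 21, 95, 19, 20]
print(filtered_distance_cm(readings))

print(sensors_agree(encoder_travel_cm=10.0, range_change_cm=12.0))
print(sensors_agree(encoder_travel_cm=10.0, range_change_cm=30.0))
```

If the filtered value were fed into the "closer than 25 centimeters" rule described earlier, the glitch reading of 95 would not cause the robot to miss the obstacle. When the cross-check fails, the robot cannot tell which sensor is wrong from this test alone, but it does know that something needs attention, for example that the wheels may be slipping.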

Choosing sensors also depends on purpose. A line-following robot may only need basic light sensors. A warehouse robot may need laser range sensing, cameras, and load detection. More sensors are not always better. Each added sensor increases cost, wiring, data processing, and possible failure points. Good design means selecting the fewest sensors that still allow safe and effective behavior. When beginners understand what sensors do, they begin to see how robots gather the information needed to sense, decide, and act in a structured loop.

If sensors allow a robot to notice the world, actuators allow it to change the world. An actuator is any component that creates physical action. The most common examples are electric motors, but actuators can also include servos, linear actuators, pumps, grippers, valves, speakers, lights, and other output devices. In simple terms, actuators are how the robot does something after it has decided what to do.

Motors are especially important because they create movement. A wheeled robot may use DC motors to spin the wheels. A robotic arm may use servo motors to place joints at exact angles. A drone uses rapidly spinning motors and propellers to create lift and control direction. Different tasks need different kinds of motion. Speed, force, precision, and smoothness all matter. A small fast motor may be useful for a toy car, but not for lifting a heavy object.

Beginners often think movement is just about turning a motor on and off. Real robot movement is more controlled. The controller may vary speed, direction, angle, and timing. Sensors often help here too. For example, if a wheel motor is supposed to rotate a certain amount, an encoder can measure whether it actually did. This allows feedback control. Instead of simply hoping the wheel moved correctly, the robot can check and correct itself. That is how robots become more accurate and dependable.
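Here is a small simulation of that idea. It is a sketch under stated assumptions: the wheel is modeled as moving only 80% of what it is told (to stand in for slipping or friction), the encoder is assumed to be perfect, and the correction rule used is simple proportional control, where each command is a fixed fraction of the remaining error. The names and numbers are invented for illustration.

```python
def open_loop_move(target_ticks, slip=0.8):
    """No feedback: send one command and hope. The wheel falls short."""
    return slip * target_ticks

def closed_loop_move(target_ticks, gain=0.5, slip=0.8,
                     tolerance_ticks=1.0, max_updates=50):
    """Feedback control: measure, compare with the target, correct, repeat.

    Returns (final measured position in ticks, number of corrections made).
    """
    measured_ticks = 0.0
    for updates in range(max_updates):
        error = target_ticks - measured_ticks   # what the encoder reveals
        if abs(error) <= tolerance_ticks:
            return measured_ticks, updates
        command = gain * error                  # proportional correction
        measured_ticks += slip * command        # wheel moves less than asked
    return measured_ticks, max_updates

print(open_loop_move(100))
final_ticks, corrections = closed_loop_move(100)
print(round(final_ticks, 2), corrections)
```

Without feedback, the simulated wheel stops about 20 ticks short of the target and the robot never finds out. With feedback, it gets within one tick after a handful of corrections, even though the controller was never told how much the wheel slips.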

Actuators also affect safety and energy use. Stronger motors can move heavier loads, but they draw more power and can create more risk if something goes wrong. A robot arm in a factory must move with enough force to do its job, but also with protective systems to avoid harming people or damaging products. For home robots, quiet operation, smooth motion, and battery efficiency may be more important than raw strength.

One common beginner mistake is choosing actuators without thinking about the real environment. A motor that works on a desk may fail on carpet, slopes, or outdoor surfaces. Wheels may slip. Joints may stall. Parts may overheat. The practical lesson is simple: actuators connect robot decisions to real-world results, but real-world conditions always push back. Good robotics means matching the moving parts to the task, the terrain, the weight, and the required level of control.

The controller is the part of the robot that coordinates the whole system. It receives signals from sensors, follows programmed instructions, and sends commands to actuators. In a simple robot, the controller may be a microcontroller such as an Arduino-type board. In a more advanced robot, it may be a single-board computer or a combination of several processors. Some robots also connect to cloud systems, but a robot still needs onboard control for fast local decisions.

It is helpful to think of the controller as the robot's organizer rather than as magical intelligence. It does not automatically understand the world. It runs software. That software may be simple rules, like turning left when a bump sensor is pressed, or more advanced AI methods, like recognizing objects in camera images. This is where the difference between rule-based robots and AI-powered robots becomes clear. Rule-based systems follow fixed instructions. AI-powered systems can use learned patterns to classify, predict, or choose among options in more flexible ways.

Controllers have limits. They have only so much memory, processing speed, and electrical power available. A small chip may be excellent for reading a few sensors and controlling wheels, but too weak for real-time image recognition. That is why not every robot can run advanced AI directly onboard. Engineering judgment means deciding what level of computing is actually needed. If a robot's job is simple and repetitive, a basic controller may be more reliable than a complex AI setup.

The controller also manages timing. In robotics, timing matters. Sensors may need to be read many times per second. Motors may need updates at precise intervals. If the software is slow or poorly structured, the robot can react too late. A common beginner mistake is adding many features until the controller becomes overloaded. The result can be delays, jerky movement, or missed sensor events.
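One way to picture the timing problem is a loop that is supposed to run at a fixed rate and counts how often it finishes late. This is a simplified sketch that runs on an ordinary computer. The function name and the timings are hypothetical, and real robot controllers often rely on hardware timers or a real-time operating system instead.

```python
import time

def run_control_loop(step_fn, period_s, iterations):
    """Call step_fn once per period and count how often it overruns.

    step_fn stands in for "read sensors, decide, command motors".
    """
    overruns = 0
    next_deadline = time.monotonic() + period_s
    for _ in range(iterations):
        step_fn()
        now = time.monotonic()
        if now > next_deadline:
            overruns += 1                    # finished late: robot reacts late
        else:
            time.sleep(next_deadline - now)  # finished early: wait for next tick
        next_deadline += period_s
    return overruns

def overloaded_step():
    """Pretend the software has too many features and takes 20 ms per cycle."""
    time.sleep(0.02)

# The loop is asked to run every 5 ms, but each step needs about 20 ms.
print(run_control_loop(overloaded_step, period_s=0.005, iterations=3))
```

Because each step takes longer than the period allows, every cycle is counted as late. On a real robot, that is the kind of overload that shows up as delays, jerky movement, or missed sensor events.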

Practical robot design often separates responsibilities. One chip may handle low-level motor control, while another handles navigation or AI tasks. This makes the system easier to manage and more robust. The important point is that the controller links hardware to behavior. It is where sensing becomes decision-making and where decisions are turned into commands. Without a controller, the robot has parts but no coordination. With the right controller, the robot becomes an organized system capable of purposeful action.

Some sensors deserve special attention because they are common in AI robotics and closely connected to human-style interaction. Cameras, microphones, and touch inputs help robots work in spaces built for people. These sensors can make a robot appear much smarter because they collect rich information, but they also create more demanding engineering challenges.

Cameras allow a robot to capture visual information. A robot can use a camera to detect objects, read labels, follow lines, recognize faces in limited settings, or estimate where it is. With AI, camera data becomes especially powerful because machine learning models can find patterns in images that are difficult to express with simple rules. For example, instead of programming every possible shape of a cup, an AI model can learn what cups tend to look like from training examples. However, cameras depend heavily on lighting, angle, focus, and processing power. A camera alone does not guarantee understanding.

Microphones let robots respond to sound. A home assistant robot may listen for voice commands. A service robot may detect alarms, speech, or the direction a sound comes from. AI can help by turning speech into text or classifying sound events. But microphones also collect background noise, echoes, and overlapping voices. In a quiet demo, voice control may seem easy. In a busy real environment, it becomes much harder. This is a common lesson in robotics: real-world conditions are messier than controlled examples.

Touch inputs include buttons, bump sensors, pressure pads, and touch-sensitive surfaces. These are often simpler and more reliable than vision or audio. A vacuum robot may use bumper switches to detect contact with furniture. A robotic gripper may use pressure sensing to avoid crushing an object. In many cases, touch is the final confirmation that an action physically happened.

For beginners, the practical takeaway is that high-information sensors like cameras and microphones are useful, but they require stronger controllers, more careful software, and often AI support. Simpler touch inputs are easier to trust but provide less information. Good robot design often combines them. A delivery robot might use a camera to identify a doorway, a microphone for voice interaction, and touch sensors for safety at close range. Each input adds a different piece of understanding.

A robot becomes useful only when its parts operate as one system. The frame supports the hardware. The power source supplies energy. Sensors collect information. The controller interprets that information and chooses a response. Actuators create movement or another output. This full workflow is what connects hardware parts to robot behavior. When you understand this flow, robots stop seeming mysterious and start making practical sense.

Consider a simple home robot vacuum. Its body holds wheels, brushes, battery, sensors, and controller. Its power system keeps everything running. Distance sensors and bumper sensors detect walls and furniture. Wheel motors drive movement. Brush motors collect dirt. The controller follows a cleaning strategy. In a rule-based version, it may turn away whenever it hits an obstacle. In an AI-powered version, it may also recognize room layouts, learn common paths, and improve navigation over time. The robot's visible behavior comes from cooperation among all of these parts.

This systems view is also where troubleshooting becomes easier. If a robot does not avoid obstacles, the problem may be with the sensor, the wiring, the controller logic, or the motor response. Beginners often blame the software first, but robotics problems are often shared across mechanical, electrical, and software layers. Good engineers test each layer. Is power stable? Are sensor readings sensible? Are commands reaching the motors? Is the frame causing vibration that affects sensing? Real robot behavior is the result of the whole chain, not one part alone.

Another practical lesson is that robots are built for trade-offs. Faster movement may reduce battery life. Better sensors may increase cost. Stronger motors may require a heavier battery. More AI may require more computing and create delays if the hardware is too weak. There is rarely one perfect design. Instead, robot builders aim for a balanced system that works well enough for a specific job.

As you move forward in this course, keep the loop in mind: sense, decide, act. That loop is the heart of robotics. AI can improve the decide step by helping the robot recognize patterns and make better choices, but AI still depends on the body, power, sensors, and actuators around it. A robot is not just a machine with a computer inside. It is a complete physical system whose parts must work together in the real world.
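The sense, decide, act loop can be sketched in a few lines. This is a toy simulation under stated assumptions: the robot lives in a one-dimensional corridor written as a string, and the function names `sense`, `decide`, `act`, and `run` are invented for this example rather than taken from any robotics library.

```python
def sense(world, position):
    """Sense: is the next cell a wall ('#') or the end of the corridor?"""
    nxt = position + 1
    return nxt >= len(world) or world[nxt] == "#"


def decide(blocked):
    """Decide: a fixed rule here; an AI model could replace this step."""
    return "stop" if blocked else "forward"


def act(position, command):
    """Act: move one cell forward, or stay in place."""
    return position + 1 if command == "forward" else position


def run(world, position=0, max_steps=20):
    """Repeat sense -> decide -> act until the robot decides to stop."""
    for _ in range(max_steps):
        command = decide(sense(world, position))
        if command == "stop":
            break
        position = act(position, command)
    return position


corridor = "....#"   # '.' is free floor, '#' is a wall
print(run(corridor))  # final cell index where the robot stopped
```

Each function stands in for a whole subsystem. Swapping the body of `decide` for a learned model would change how the robot chooses, but the loop around it would stay the same.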

1. Which set of parts best matches the chapter's description of the main building blocks a robot needs?

2. What is the main job of sensors in a robot?

3. In the sense-decide-act loop, what usually happens right after a robot senses something?

4. Why does the chapter say weak hardware can limit even smart AI software?

5. According to the chapter, what makes a robot seem intelligent in practice?

In the last chapter, you learned that robots use sensors to gather information and actuators to do physical work. In this chapter, we focus on the part in the middle: decision making. This is where a robot turns incoming data into a choice, and then turns that choice into an action. For a complete beginner, the easiest way to picture this is as a simple flow: the robot senses something, interprets what it sensed, decides what to do next, and then acts. AI becomes useful in the interpretation and decision steps, especially when the world is messy, noisy, or changing.

A non-AI robot can still make decisions, but those decisions usually come from fixed rules written by a programmer. For example, a robot vacuum might be told: if the front bumper is pressed, stop and turn right. That works for a basic case. But what if the robot sees a pet bowl, a dark rug, a child’s toy, and a moving dog all in the same room? A fixed rule system can become hard to manage very quickly. AI helps by finding patterns in data and by making choices based on examples, probabilities, or learned behavior instead of only following a rigid list of instructions.

This does not mean AI is magic. A robot still depends on hardware, software, and engineering judgment. Good robot decisions come from good sensor placement, useful data, careful testing, and clear limits on what the robot should do. AI can improve flexibility, but it can also make mistakes if it was trained on poor examples or used in the wrong situation. A good beginner understanding is this: AI helps robots make better guesses in complex situations, while traditional rules help robots behave predictably in simple situations.

As you read this chapter, keep one practical decision flow in mind: sense, interpret, decide, and act.

This simple loop is the heart of robotics. The rest of the chapter explains how AI supports each stage, how it differs from fixed-rule logic, and what beginners should watch out for when thinking about robot learning and real-world choices.

Practice note for Understand how robots turn data into actions: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Compare fixed rules with AI-based decisions: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Learn simple ideas behind robot learning: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Follow a beginner decision flow from input to output: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Robots do not understand the world the way people do. They receive raw data from sensors, and that data must be turned into something useful before a decision can happen. A camera produces pixels, a distance sensor produces measurements, a microphone produces sound waves, and a touch sensor reports contact. By themselves, these are just numbers. The robot must process them into meaningful information such as “there is a wall ahead,” “the floor is dirty here,” or “a person is standing nearby.”

This step is important because bad interpretation leads to bad action. Imagine a delivery robot reading shiny glass as open space, or mistaking a shadow for a step. In engineering, this is why sensor data is often filtered, checked, and combined. A robot may use more than one sensor at the same time because each sensor has strengths and weaknesses. A camera can recognize objects, but poor lighting hurts performance. A distance sensor works in darkness, but may not identify what the object actually is. Together, they can give a fuller picture.
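As a rough illustration of filtering and combining, the sketch below smooths a distance sensor with a median and then merges it with a camera result. The function names, the 30 cm threshold, and the idea that the camera reports a simple true or false are assumptions made for this example.

```python
from statistics import median


def filtered_distance(readings_cm):
    """Median of recent readings: one wild spike does not move the result."""
    return median(readings_cm)


def obstacle_ahead(distance_readings_cm, camera_sees_object, near_cm=30.0):
    """Combine two sensors cautiously: either one may report the obstacle."""
    close = filtered_distance(distance_readings_cm) < near_cm
    return close or camera_sees_object


# A glass door: the camera sees nothing, but the distance sensor does.
# The single 200 cm reading is a glitch that the median ignores.
print(obstacle_ahead([25, 26, 24, 200, 25], camera_sees_object=False))
```

Treating either sensor as enough to report an obstacle is a deliberately cautious choice. It accepts some false alarms in exchange for fewer missed obstacles.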

For beginners, a helpful way to think about this is: data becomes information when it answers a practical question. The question might be “Is there an obstacle?” or “Is the object safe to pick up?” Once the robot has useful information, it can move to the next stage and decide what to do. In simple systems, this conversion may be straightforward. In AI systems, software may detect patterns in the sensor data and label what is happening. The practical outcome is better robot behavior, because useful information is easier to act on than raw numbers.

A common mistake is assuming more data always means better decisions. In reality, extra data can slow processing or add confusion if it is noisy or irrelevant. Good design focuses on collecting the right data for the robot’s task and turning it into clear signals that support action.

Rule-based decisions follow direct instructions written in advance. A programmer creates conditions and matching actions, such as: if battery is low, return to charger; if obstacle is near, stop; if room is dark, turn on light. This approach is easy to understand and often very reliable in controlled situations. It is common in factory robots, safety systems, and beginner robotics projects because the behavior is predictable.
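The conditions and actions listed above map almost directly onto code. The sketch below is one possible way to write them. The thresholds (20 cm, 15 percent), the priority order, and the state field names are assumptions for illustration.

```python
# Each rule is (condition, action). Rules are checked in order, so the
# order itself encodes priority. Order and thresholds are assumptions.
RULES = [
    (lambda s: s["obstacle_cm"] < 20, "stop"),
    (lambda s: s["battery_pct"] < 15, "return_to_charger"),
    (lambda s: s["room_is_dark"], "turn_on_light"),
]


def choose_action(state, default="continue"):
    """Return the action of the first rule whose condition is true."""
    for condition, action in RULES:
        if condition(state):
            return action
    return default


state = {"obstacle_cm": 120, "battery_pct": 10, "room_is_dark": True}
print(choose_action(state))  # battery rule fires before the light rule
```

Because every rule is visible and checked in a fixed order, it is easy to predict what the robot will do for any given state, which is the main appeal of this approach.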

AI decisions work differently. Instead of listing every possible situation, developers may train a system to recognize patterns and choose likely actions from examples. An AI-powered warehouse robot might learn to identify boxes of different sizes from camera images. A service robot might estimate whether a person wants help based on movement, position, and timing. The robot is not simply matching one hard-coded rule. It is using learned patterns to make a decision in a situation that may vary.

Neither method is automatically better in every case. Rule-based systems are excellent when the environment is stable and the desired behavior is clear. AI is more useful when the robot faces variation that would be difficult to describe with hundreds of rigid rules. In practice, many real robots use both. For example, a robot may use AI to recognize an object, but fixed rules to enforce safety, speed limits, and emergency stops.

Engineering judgment matters here. Beginners sometimes expect AI to replace all rules. That is rarely wise. Safety-critical behaviors should often remain explicit and testable. AI can help with uncertainty, but rules provide boundaries. A practical comparison is this: rules answer known situations clearly, while AI helps with messy situations where exact instructions are too long, too fragile, or impossible to write completely.

Pattern recognition is one of the simplest and most useful ideas in AI. It means finding regular shapes, signals, or relationships in data so the robot can classify what it senses. A person does this naturally. You recognize a chair even if it is a different color or style than other chairs you have seen. AI tries to give robots a basic version of that skill by learning what kinds of sensor data usually match a certain object, event, or condition.

For a robot, pattern recognition could mean identifying a doorway in camera images, recognizing the sound of a spoken command, detecting that a machine is vibrating in an unusual way, or deciding that a floor area is likely dirty based on repeated sensor readings. The robot does not “understand” these things like a human. It matches current input to patterns it has learned or been programmed to detect.

Why is this useful? Because the real world is not perfectly neat. A cup can be large or small, a room can be bright or dim, and people can move unpredictably. A purely fixed system may fail if something looks slightly different from what was expected. Pattern recognition gives the robot more flexibility. It can make a best estimate instead of waiting for a perfect match.

Still, pattern recognition has limits. It may confuse similar objects or make a confident guess from poor data. This is why robot decisions often include confidence levels, repeated checks, or confirmation from multiple sensors. A practical lesson for beginners is that pattern recognition is not about certainty. It is about improving the robot’s ability to choose a reasonable action when exact rules are not enough.
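One of the simplest forms of pattern recognition is to average the examples of each category and then label a new measurement by whichever average it sits closest to. The sketch below uses that nearest-average idea with a rough confidence score. The features (height and width in centimeters), the example values, and the confidence formula are assumptions for illustration, and real vision systems are far more involved.

```python
import math


def train(examples):
    """Average the feature vectors of each label into one 'typical' point."""
    sums, counts = {}, {}
    for features, label in examples:
        total = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            total[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in total]
            for label, total in sums.items()}


def classify(centroids, features):
    """Return (best_label, confidence). Assumes at least two labels.
    Confidence is near 1.0 for a clear match and near 0.5 for a toss-up."""
    distances = {label: math.dist(center, features)
                 for label, center in centroids.items()}
    best, second = sorted(distances, key=distances.get)[:2]
    total = distances[best] + distances[second]
    confidence = distances[second] / total if total > 0 else 0.5
    return best, confidence


# Hypothetical (height_cm, width_cm) measurements with human-given labels.
examples = [((10, 8), "cup"), ((12, 9), "cup"),
            ((25, 7), "bottle"), ((30, 8), "bottle")]
centroids = train(examples)
print(classify(centroids, (11, 8)))   # close to the typical cup
print(classify(centroids, (19, 8)))   # in between: a low-confidence guess
```

The second call still returns a label, but its confidence is barely above one half. That is the kind of result a robot should double-check with another sensor before acting on it.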

If an AI robot learns from examples, then the quality of those examples matters greatly. Training data is the set of images, sounds, measurements, or labeled cases used to teach the AI what to look for. If the data is limited, unbalanced, or unrealistic, the robot can learn the wrong lesson. For example, if a home robot is trained mostly on clean, bright rooms, it may struggle in cluttered spaces or low light. If a robot arm is shown only one style of package, it may fail when a slightly different box appears.

This is one of the most practical ideas in beginner AI robotics: the robot is shaped by the examples it sees. Good training data should represent the real world where the robot will operate. That includes different lighting, object shapes, positions, noise levels, and common disturbances. A robot used in a hospital needs different data than one used on a farm or in an office. Context matters.

Training data also affects fairness, safety, and reliability. If important situations are missing, the robot may perform well in testing but poorly in actual use. Engineers therefore spend a lot of time gathering varied data, labeling it correctly, and checking whether the trained model behaves sensibly. This is not glamorous work, but it is essential.

A common beginner mistake is focusing only on the algorithm and ignoring the data. In many projects, improving the training data produces more benefit than choosing a more advanced model. The practical outcome is clear: better examples usually lead to better robot decisions. Poor examples create hidden weaknesses that appear later in the field, where mistakes can be expensive or unsafe.

Robot learning can sound complicated, but the basic idea is simple: the robot improves how it responds by using experience, examples, or feedback. One common example is object recognition. A robot may be shown many pictures of cups, tools, or packages so it can get better at identifying them later. Another example is path selection. A robot moving through a building may learn which routes are usually faster or less crowded.

Some robots learn from labeled data, where humans provide the right answer during training. For instance, images may be labeled “chair,” “table,” or “person.” Other systems learn from feedback. A robot might try a gripping action, measure whether the object slipped, and then adjust its future grip strength. In both cases, the system is improving from information gathered over time.
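The gripping example can be written as a small try, observe, adjust loop. In this sketch the real object is replaced by a made-up `slip_threshold` value, and the step size and maximum force are illustrative assumptions. The fixed upper limit shows how learning can stay inside a safe boundary.

```python
def adjust_grip(force, slipped, step=0.5, max_force=10.0):
    """Raise grip force after a slip, but never beyond a fixed safe limit."""
    if slipped:
        return min(force + step, max_force)
    return force


def practice_grip(slip_threshold, start_force=2.0, attempts=10):
    """Try, observe, adjust. `slip_threshold` stands in for the real object."""
    force = start_force
    for _ in range(attempts):
        slipped = force < slip_threshold   # simulated sensor feedback
        force = adjust_grip(force, slipped)
    return force


print(practice_grip(slip_threshold=3.2))   # settles once slipping stops
```

The robot never needs to be told the right force. It finds a workable value from feedback, and the hard cap keeps it from squeezing harder than the designer allows.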

Consider a beginner-friendly decision flow. A robot vacuum senses dirt levels and obstacle distances. It processes the data and notices that certain readings often mean a high-dust area. It decides to slow down and clean more thoroughly. After acting, it checks whether the dirt level has dropped. If the result is good, the system can keep using that behavior. If not, it may adjust. This is a simple example of input, processing, decision, output, and feedback working as a loop.

Good engineering keeps learning within safe boundaries. A robot should not “experiment” in a way that risks damage or injury. That is why many learning systems operate in simulation first or learn only specific parts of behavior while fixed rules control safety. The practical outcome is a robot that can improve in narrow tasks without becoming unpredictable everywhere else.

AI helps robots make decisions, but it does not remove uncertainty. Robots can still misread sensor data, classify objects incorrectly, or choose actions that seem reasonable but are wrong for the situation. A delivery robot may mistake a reflection for open space. A service robot may fail to hear a voice command in a noisy room. A warehouse robot may place an item in the wrong location because two packages look similar. These are not unusual failures. They are part of working with imperfect data and changing environments.

There are several common causes. One is poor sensor quality or bad sensor placement. Another is weak training data that does not reflect real conditions. A third is overconfidence in AI outputs. Beginners sometimes assume that if the robot gives an answer, the answer must be correct. In reality, AI decisions are often probability-based. The robot may be selecting the most likely option, not a guaranteed truth.

A practical engineering habit is to build checks and backup plans. If confidence is low, the robot can slow down, ask for human input, or re-scan the area. If safety is involved, simple hard rules should override uncertain AI behavior. Logging errors is also important because mistakes reveal where the system needs improvement.
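These habits can be combined in one small decision function. The sketch below is only an outline under stated assumptions: the 0.8 confidence threshold, the 50 cm safety distance, the behavior names, and the in-memory `event_log` list are all invented for this example.

```python
event_log = []   # low-confidence cases are kept for later review


def choose_behavior(label, confidence, person_distance_cm,
                    min_confidence=0.8, safety_distance_cm=50.0):
    """Pick a behavior from an AI result, with rules as guard rails."""
    # Hard safety rule first: it overrides whatever the AI model reported.
    if person_distance_cm < safety_distance_cm:
        return "stop"
    # Low confidence: choose a cautious fallback instead of guessing.
    if confidence < min_confidence:
        event_log.append((label, confidence))
        return "slow_down_and_rescan"
    return "proceed"


print(choose_behavior("open_space", 0.95, person_distance_cm=300.0))
print(choose_behavior("open_space", 0.55, person_distance_cm=300.0))
print(choose_behavior("open_space", 0.99, person_distance_cm=30.0))
```

The order of the checks matters. Safety is tested before the AI output is even considered, so a confident but wrong classification cannot switch the safety rule off.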

The key lesson is not that AI is unreliable. It is that good robotics requires understanding limits. Strong robot design combines useful AI, sensible rules, careful testing, and realistic expectations. When beginners understand this, they can compare rule-based robots and AI-powered robots more clearly. Rule-based robots are often easier to predict. AI-powered robots are often more flexible. The best practical systems use each approach where it fits best.

1. What is the main idea of robot decision making in this chapter?

2. How does AI-based decision making differ from fixed-rule decision making?

3. In which kind of situation is AI especially useful for robots?

4. Which sequence correctly matches the beginner decision flow described in the chapter?

5. What is an important beginner takeaway about AI in robots?

In earlier chapters, you learned that a robot is more than a machine with moving parts. A useful robot combines sensing, decision-making, and action. This chapter brings those pieces together by showing how robots move through real spaces, avoid problems, notice people and objects, and interact in ways that feel helpful rather than random. For a complete beginner, this is the point where a robot starts to feel alive, not because it has emotions, but because it can respond to what is happening around it.

At the simplest level, robot movement begins with motors and control signals. But real movement is not just about turning wheels or lifting an arm. A robot must decide where to go, how fast to move, what to avoid, and when to stop. Even a small cleaning robot has to handle walls, furniture, table legs, and people walking by. A delivery robot in a hallway has an even harder job because it must keep moving toward a goal while adjusting to change. That combination of movement and adjustment is a core part of robotics engineering.

When engineers design motion for robots, they usually think in layers. One layer handles direct control, such as telling the left wheel to spin faster than the right wheel. Another layer handles navigation, such as following a path to the kitchen. A higher layer may handle interaction and priority, such as pausing for a person, listening for a command, or choosing a safer route. Breaking the problem into layers helps engineers build systems that are easier to test and improve.

Navigation adds another important idea: a robot often needs some kind of map, plan, or reference point. In a simple home robot, that map may be rough and temporary. In a warehouse robot, the map may be detailed and connected to shelves, work zones, and safety rules. The robot compares what its sensors detect with its planned route and updates its actions as conditions change. This is why navigation is not the same as movement. Movement is how the robot physically travels. Navigation is how it chooses where and when to travel.

Interaction matters just as much as movement. A robot that can drive perfectly but cannot notice a person standing nearby is not truly useful in shared spaces. Robots often use cameras, distance sensors, microphones, touch sensors, and sometimes screens or lights to create basic interaction. They may detect a face, recognize that a person is blocking a path, stop when touched, or respond to a spoken command. These abilities are not magic. They come from practical engineering choices about which signals matter most and how the robot should respond safely.

One common mistake beginners make is imagining that robots either fully understand the world or do not understand it at all. In practice, most robots work with partial information. They estimate. They classify. They predict. A robot may not know that an object is a chair in the human sense, but it may still know there is a solid obstacle ahead and choose to turn. A service robot may not fully understand speech like a person does, but it can still detect simple commands such as stop, follow, or return to base. Good robotic systems are built to work reliably even with incomplete knowledge.

As you read this chapter, keep one simple workflow in mind: sense, decide, act, and repeat. The robot senses the world, decides what to do next, acts through motors or other outputs, then senses again. This loop happens over and over, sometimes many times each second. The better this loop is designed, the more capable the robot feels in everyday use. By the end of this chapter, you should be able to connect movement and interaction to the bigger idea of autonomy and explain, in simple language, why some robots seem smart and adaptable while others only follow fixed rules.

Robot movement starts with actuators, usually motors, that create physical motion. In a wheeled robot, motors spin the wheels. In a robotic arm, motors rotate joints. But motors alone do not create useful movement. The robot also needs control logic that tells each motor what to do and when to do it. This is where simple commands like move forward, turn left, slow down, or stop become real action.

A beginner-friendly example is a two-wheeled mobile robot. If both wheels turn at the same speed, the robot goes straight. If one wheel turns faster, the robot curves. If the wheels turn in opposite directions, the robot can spin in place. This may sound basic, but it shows an important robotics principle: complex behavior can come from simple control rules. Many robots use this idea because it is reliable and easier to maintain.
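The three cases above follow from the standard relationship between wheel speeds and body motion for a two-wheeled (differential drive) robot. The sketch below assumes ideal wheels with no slip. The function name and the example numbers are chosen for illustration.

```python
def body_motion(left_speed, right_speed, wheel_base):
    """Return (forward_speed, turn_rate) for a two-wheeled robot.

    Wheel speeds are in m/s, wheel_base (distance between wheels) is in m,
    and turn_rate is in rad/s, positive when turning left.
    """
    forward = (left_speed + right_speed) / 2.0
    turn_rate = (right_speed - left_speed) / wheel_base
    return forward, turn_rate


print(body_motion(0.5, 0.5, wheel_base=0.25))     # equal speeds: straight
print(body_motion(0.25, 0.5, wheel_base=0.25))    # right faster: curves left
print(body_motion(-0.25, 0.25, wheel_base=0.25))  # opposite: spins in place
```

Spinning in place shows up as a forward speed of zero with a nonzero turn rate, which matches the description of wheels turning in opposite directions.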

Engineers often use feedback to improve movement. Feedback means the robot measures what actually happened and compares it with what it wanted to happen. For example, wheel sensors may report that one wheel slipped on a smooth floor. The robot can then correct its speed. Without feedback, a robot only assumes its commands worked. That is risky in the real world.
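A minimal sketch of this idea: each cycle, the robot compares the measured wheel speed with the target and nudges its motor command by a fraction of the difference. The slip model (the wheel delivers only 80 percent of the commanded speed), the gain of 0.5, and the function names are assumptions for illustration.

```python
def corrected_command(command, target_speed, measured_speed, gain=0.5):
    """Nudge the motor command by a fraction of the speed error."""
    error = target_speed - measured_speed
    return command + gain * error


def simulate(target_speed=1.0, slip_factor=0.8, cycles=30):
    """Run the feedback loop against a wheel that loses speed to slip."""
    command = target_speed
    measured = slip_factor * command          # what the wheel sensor reports
    for _ in range(cycles):
        command = corrected_command(command, target_speed, measured)
        measured = slip_factor * command
    return command, measured


command, measured = simulate()
print(round(command, 3), round(measured, 3))
```

Without feedback the robot would keep sending the original command and travel slower than intended. With feedback, the command rises until the measured speed reaches the target.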

Good engineering judgment also means choosing motion that matches the environment. Fast movement may look impressive, but in a narrow hallway or crowded room it creates safety problems. Smooth starts and stops are often better than sudden motion. A beginner mistake is focusing only on whether the robot can move, rather than whether it can move predictably and safely. In real applications, stable control matters more than dramatic motion.

Practical robot control is usually built in small steps. First, engineers test whether the robot can move forward accurately. Then they test turning. Then they test repeating those actions under different conditions, such as carpet, tile, or uneven surfaces. This step-by-step process is important because many navigation failures begin as simple movement errors. If the robot cannot control its own body reliably, higher-level intelligence will not save it.

Once a robot can move, the next question is where it should go. Navigation is the process of reaching a goal location through a space. Some robots use a fixed path, such as a line on the floor or a magnetic guide. Others build or use a map. A map gives the robot a reference for walls, doors, shelves, or work areas. Even a simple map helps the robot make better decisions than just wandering.

A path is the route from the robot’s current position to its target. In a home setting, the target might be a charging dock. In a warehouse, it might be shelf B12. The robot plans a path that is efficient and safe. That path may be a straight line if the area is clear, or it may involve several turns around blocked zones. Planning is useful because it saves time, battery power, and wear on the machine.
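Route planning around blocked zones can be illustrated on a small grid map. The sketch below uses breadth-first search, which is one simple planning technique among many and is swapped in here purely for illustration. The grid layout, the `.` and `#` symbols, and the function name are assumptions.

```python
from collections import deque


def plan_path(grid, start, goal):
    """Breadth-first search over free cells ('.').

    Returns a shortest list of (row, col) cells from start to goal,
    or None if the goal cannot be reached.
    """
    rows, cols = len(grid), len(grid[0])
    came_from = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:          # walk back to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == "." and nxt not in came_from:
                came_from[nxt] = cell
                queue.append(nxt)
    return None


grid = ["..#.",
        "..#.",
        "...."]          # '#' marks a blocked zone
path = plan_path(grid, start=(0, 0), goal=(0, 3))
print(path)
```

If a new obstacle appears, the grid can be updated and `plan_path` called again, which reflects the idea that a planned route is a starting point rather than a fixed commitment.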

Not all robots need detailed maps. Some only need local guidance, such as following a wall or moving toward a beacon signal. Others need precise positioning because mistakes are expensive. A delivery robot that stops at the wrong door causes inconvenience. A factory robot moving to the wrong station can disrupt an entire process. Engineering judgment means matching the navigation method to the cost of error and the complexity of the environment.

A common mistake is assuming that once a path is planned, the robot can simply follow it without change. Real spaces are dynamic. A person may stand in the hallway. A box may appear where none existed before. Good navigation systems treat the path as a plan, not a promise. The robot keeps checking whether the path still makes sense and adjusts if needed.

In practical terms, successful navigation combines three tasks: estimating where the robot is, deciding where it needs to go, and controlling motion along the route. Beginners should remember that reaching a goal is not one single skill. It depends on sensing, planning, and control all working together. When these parts align, the robot looks purposeful instead of random.

Obstacle detection and avoidance allow a robot to move safely through changing spaces. This is one of the clearest examples of the sense-decide-act loop. The robot senses an object, decides whether it is a problem, and changes its motion. Without this ability, even a well-planned robot would fail as soon as the environment changed.

Robots detect obstacles using sensors such as ultrasonic sensors, infrared sensors, bump switches, lidar, or depth cameras. Each option has strengths and weaknesses. A bump sensor is simple and cheap, but it only detects an obstacle after contact. A distance sensor can detect obstacles earlier, giving the robot time to slow down or turn. More advanced systems can estimate object size and direction, which helps the robot choose a safer path.

Avoidance does not always mean taking a large detour. Sometimes the best choice is to stop and wait, especially if a person is crossing nearby. In human spaces, smooth and polite behavior matters. A robot that swerves suddenly can startle people even if it avoids collision. This is why practical robotics includes social behavior as well as physical safety.

Beginners often think obstacle avoidance is just a single rule like “if object ahead, turn right.” That may work in a simple demo, but it breaks down quickly. What if there is also an obstacle on the right? What if the robot is in a narrow corridor? What if the obstacle is temporary, like a moving person? Better systems compare several options, reduce speed near uncertainty, and keep checking sensor data as they move.
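Comparing several options instead of always turning right might look like the sketch below. It assumes the robot can measure free distance in three directions. The 40 cm minimum clearance, the speed scaling, and the heading names are illustrative choices.

```python
def pick_heading(clearance_cm, min_clearance_cm=40.0, cruise_speed=1.0):
    """Choose the direction with the most free space.

    clearance_cm maps heading names to measured free distance in cm.
    Returns (heading, speed), or ("stop", 0.0) if no option is safe.
    """
    heading, best = max(clearance_cm.items(), key=lambda item: item[1])
    if best < min_clearance_cm:
        return "stop", 0.0
    # Slow down when even the best option is fairly tight.
    speed = cruise_speed * min(1.0, best / (4 * min_clearance_cm))
    return heading, speed


print(pick_heading({"left": 80, "ahead": 20, "right": 35}))   # go left, slowly
print(pick_heading({"left": 30, "ahead": 20, "right": 25}))   # boxed in: stop
```

Calling this function on every pass of the control loop, with fresh sensor readings each time, lets the robot handle an obstacle that moves out of the way.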

Good engineering judgment also includes handling sensor mistakes. Shiny surfaces, bright sunlight, dark materials, or clutter can confuse sensors. For that reason, robots often combine more than one sensor type. Practical outcomes improve when the robot uses layered safety: detect early, slow down, choose a new direction, and stop completely if confidence is low. That is how robots become dependable in the real world.

Computer vision gives robots a way to use cameras to detect and interpret parts of the world. For beginners, the key idea is simple: a camera captures images, and software looks for useful patterns. Those patterns may represent people, doors, floor markings, packages, or obstacles. Vision does not need to be perfect to be useful. Even basic visual detection can make a robot far more capable.

For example, a robot may use vision to detect a person standing in front of it. It may use visual markers on the floor to follow a route. A warehouse robot may identify a labeled bin. A home robot may look for its charging station. These are practical uses of pattern recognition. The robot is not “seeing” exactly as a human does. Instead, it is extracting signals that help with decisions.

AI often improves vision by helping the robot classify images or detect objects more flexibly than a simple rule-based system. A rule-based robot might only detect a dark line against a light floor. An AI-powered robot may learn to recognize common objects from many examples. This supports one of the major course outcomes: understanding the difference between fixed rules and systems that can handle variation. AI does not remove the need for engineering discipline, but it helps robots cope with messier environments.

Common mistakes include trusting camera results too much or ignoring lighting conditions. Vision can fail in darkness, glare, shadows, or busy scenes. A practical robot may combine vision with distance sensing so that if the camera is confused, the robot still avoids hitting something. Engineers also simplify the task whenever possible. Rather than asking the robot to understand an entire room, they may ask it to recognize only a doorway or a marked object.

The practical outcome of basic computer vision is better awareness. A robot that can detect people and objects can move with more confidence, interact more naturally, and make decisions that feel intelligent. Vision becomes especially powerful when linked with movement, because the robot can look, adjust, and continue toward a goal instead of relying only on fixed instructions.

Robots often work around people, so interaction matters. Human-robot interaction includes any method the robot uses to receive input from people or communicate back. Common examples are speech commands, touch sensors, buttons, lights, screens, and sounds. These channels help the robot behave in a way that people can understand and influence.

Speech is useful because it feels natural. A person can say “stop,” “come here,” or “start cleaning.” For beginners, it is important to know that many robots do not understand language deeply. Instead, they detect a limited set of commands or patterns. That is still valuable. In practical use, a robot only needs to understand the small set of instructions relevant to its job. Simpler command systems are often more reliable than trying to process every possible sentence.
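A limited command system can be sketched in a few lines. The example below assumes that some speech-to-text step has already produced a text transcript; that step is not shown. The command table and the action names are hypothetical.

```python
# Hypothetical command table: spoken phrase -> internal action name.
COMMANDS = {
    "stop": "STOP",
    "come here": "APPROACH_USER",
    "start cleaning": "START_CLEANING",
}


def parse_command(transcript):
    """Return the action for a known phrase, or None if nothing matches."""
    text = transcript.lower().strip()
    for phrase, action in COMMANDS.items():
        if phrase in text:
            return action
    return None


print(parse_command("Please STOP now"))  # STOP
print(parse_command("Tell me a joke"))   # None
```

The robot ignores everything outside its small vocabulary instead of guessing. This simple substring match is only a sketch; it would also match "stop" inside a longer word, so a real system would need stricter matching.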

Touch is another important interaction method. A robot may stop when bumped, respond to a tap on a sensor panel, or detect that someone is guiding its arm. Touch creates direct and immediate communication. In safety design, it is especially useful because it gives a clear signal that a person is very close.

Good interaction also means the robot gives feedback. A beep, spoken message, colored light, or screen icon can tell the user what is happening. If the robot is confused, blocked, or returning to charge, the user should not have to guess. A common beginner mistake is designing robots that accept commands but provide little explanation of their status. That makes them frustrating to use.
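Status feedback can be as simple as a lookup table from the robot's internal state to a light color and a short message. The states, colors, and wording below are hypothetical examples, not taken from any specific product.

```python
# Hypothetical mapping: internal status -> (light color, spoken or shown message).
STATUS_FEEDBACK = {
    "working": ("green", "Cleaning in progress."),
    "blocked": ("red", "I am blocked. Please clear my path."),
    "confused": ("yellow", "I am not sure where I am. Please help."),
    "returning_to_charge": ("blue", "Battery low. Returning to charger."),
}

# Fallback so the user always gets some explanation.
DEFAULT_FEEDBACK = ("yellow", "Unknown status. Please check the robot.")


def feedback_for(status):
    """Return (light_color, message) for a status, with a safe fallback."""
    return STATUS_FEEDBACK.get(status, DEFAULT_FEEDBACK)


color, message = feedback_for("blocked")
print(color, "-", message)  # red - I am blocked. Please clear my path.
```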

The practical goal is not human-like conversation. It is smooth cooperation. A successful robot listens in a limited but dependable way, responds clearly, and adjusts its movement around people. This connection between interaction and mobility is important. A robot in a shared space should not only move efficiently; it should move in a way that feels safe, readable, and helpful to the people around it.

Autonomy means a robot can do useful work with limited human control. It does not mean the robot is fully independent in every situation. Instead, autonomy is a practical level of self-management. The robot senses its environment, makes decisions, acts on those decisions, and adjusts when conditions change. This chapter’s ideas (movement, path following, obstacle avoidance, object detection, and interaction) come together here.

A rule-based robot can be somewhat autonomous if the environment is simple and predictable. For example, a robot vacuum can move around a room, avoid stairs, and return to charge using mostly programmed behaviors. An AI-powered robot may go further by learning patterns, improving object recognition, or choosing better routes based on past experience. This comparison is central to understanding modern robotics. Autonomy is not only about moving without a joystick. It is about adapting to real conditions.

Engineering judgment matters because more autonomy is not always better. In some settings, a robot should ask for help when confidence is low. In others, it should stop rather than guess. A common beginner mistake is assuming that a robot should always continue acting. In reality, safe autonomy includes knowing when not to act. A robot that pauses and requests assistance may be more useful than one that pushes ahead and makes errors.

In practical systems, autonomy often depends on a repeating loop: gather sensor data, estimate the situation, choose an action, execute that action, and monitor the result. This loop may happen many times per second. The faster and more reliably the loop runs, the more natural the robot’s behavior appears. The robot is not magically intelligent. It is continuously updating its understanding and actions.
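The five steps of this loop can be sketched with a simulated robot that moves along a straight line toward a goal. Everything here is a simplifying assumption: the sensor is perfect, the world is one-dimensional, and the function name and step size are hypothetical.

```python
def run_control_loop(start_m, goal_m, step_m=0.5, max_cycles=20):
    """Drive a simulated robot along a line until it reaches the goal.

    Returns the list of positions visited, starting with start_m.
    """
    position = start_m
    history = [position]
    for _ in range(max_cycles):
        reading = position                            # 1. gather sensor data
        remaining = goal_m - reading                  # 2. estimate the situation
        move = max(-step_m, min(step_m, remaining))   # 3. choose an action
        position += move                              # 4. execute the action
        history.append(position)
        if abs(goal_m - position) < 1e-9:             # 5. monitor the result
            break
    return history


print(run_control_loop(0.0, 2.0))  # [0.0, 0.5, 1.0, 1.5, 2.0]
```

Each pass through the loop re-reads the position before deciding, so the robot never relies on an old plan. A real robot repeats the same pattern with noisy sensors and far more complex decisions.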

The practical outcome is simple to state: a robot becomes autonomous when movement, sensing, decision-making, and interaction work together toward a goal. That is why autonomy is best understood as a combination of abilities rather than a single feature. When those abilities are designed well, the robot can operate safely, helpfully, and with less human supervision in homes, businesses, and public services.

1. According to the chapter, what is the difference between movement and navigation in a robot?

2. Why do engineers often design robot motion in layers?

3. What does obstacle avoidance mainly help a robot do?

4. What is the chapter's main point about how most robots understand the world?

5. When does autonomy appear in a robot, according to the chapter?

AI robots are not just science fiction machines or expensive lab projects. They already work in homes, hospitals, warehouses, farms, factories, airports, and city services. For a beginner, this matters because robotics becomes easier to understand when you connect each machine to a real job. A robot is built to do something practical: move items, inspect equipment, guide a person, clean a floor, monitor crops, or help a nurse. When we look at real use cases, we also begin to see an important pattern: the best robot is not necessarily the most advanced one. It is the one that fits the task, the environment, the budget, and the safety rules.

In earlier chapters, you learned that robots sense, decide, and act. In the real world, this cycle happens under many constraints. A warehouse robot may need fast navigation and obstacle detection. A healthcare robot may need safe movement and clear communication. A farm robot may need rugged hardware that survives dust, rain, and uneven ground. AI adds value when conditions change and the robot must handle variation instead of following one rigid script every time. That is why some jobs can be done with rule-based robots, while others benefit from AI-powered robots that recognize patterns, estimate risk, or adapt to changing situations.

This chapter explores major industries that use AI robots and helps you match robot types to practical jobs. You will also see the benefits and trade-offs. Robots can improve speed, consistency, and safety, but they also bring costs, maintenance needs, and ethical questions. Good engineering judgment means asking simple but powerful questions: What problem are we solving? Does the robot really help? What could go wrong? Who supervises it? How much training is needed? These questions matter more than flashy demonstrations.

As you read, notice that most successful robots are part of a larger workflow. A robot usually does not replace an entire business process. Instead, it handles one part well, while people manage exceptions, make final decisions, and maintain the system. This practical view is especially helpful for beginners because it shows where entry-level learning starts. You do not need to build a humanoid robot to begin. You can study sensing, navigation, computer vision, simple automation, safety design, or data labeling, and each of these connects to real jobs in robotics.

By the end of this chapter, you should be able to recognize common uses of AI robots at home, in business, and in public services, compare AI-powered systems with simpler rule-based machines, and identify beginner-friendly paths into this field. Real robotics is practical, messy, and collaborative. That is exactly what makes it valuable.

Practice note for this chapter's four goals (explore major industries that use AI robots, match robot types to practical jobs, understand benefits and trade-offs in real settings, and see where beginner opportunities exist): for each one, document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Home robots are often the first robots that beginners encounter. The most familiar examples are robot vacuum cleaners, lawn-mowing robots, smart home assistants on wheels, and educational companion robots. These systems show a clear difference between simple automation and AI-enhanced robotics. A basic robot vacuum may follow rule-based patterns such as bump, turn, and continue. A more advanced one uses sensors, mapping, and AI to recognize room layouts, avoid cables, detect stairs, and learn efficient cleaning routes over time.
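The "bump, turn, and continue" rule mentioned above is simple enough to write as one function. This is a hypothetical sketch of the rule-based style only, with an assumed fixed turn of 90 degrees. It does no mapping and no learning.

```python
def bump_turn_continue(bumped, heading_deg, turn_deg=90):
    """Return the robot's next heading under a fixed rule.

    bumped: True if the bumper sensor was pressed this step.
    heading_deg: current heading in degrees, 0 to 359.
    turn_deg: hypothetical fixed turn applied after every bump.
    """
    if bumped:
        return (heading_deg + turn_deg) % 360  # bump: turn
    return heading_deg                         # no bump: continue straight


print(bump_turn_continue(False, 0))   # 0
print(bump_turn_continue(True, 0))    # 90
print(bump_turn_continue(True, 270))  # 0
```

The rule never changes, no matter how many times the robot hits the same chair leg. A mapping robot would instead remember where the obstacle is and plan around it.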

The practical job of a home robot is usually narrow. It cleans floors, patrols a room, reminds a user about medication, or provides simple interaction. This is a good reminder that successful robots do not need to do everything. In engineering, narrowing the task often improves reliability. A robot that only needs to clean flat indoor floors is easier to design than one that must climb stairs, sort laundry, and wash dishes. Beginners sometimes imagine a single general-purpose household robot, but real products succeed by solving one useful problem well.

AI helps home robots handle variation. Furniture moves. Pets appear unexpectedly. Lighting changes during the day. Children leave toys on the floor. In these situations, a rigid rule set may fail, while AI-based perception can classify obstacles, recognize areas, and update paths. Still, there are trade-offs. More AI often means more sensors, more processing, more cost, and more privacy concerns if cameras or cloud services are involved. A practical designer must decide whether a simple sensor and fixed behavior are enough or whether adaptive behavior is worth the added complexity.

Common mistakes in this area include overtrusting the robot, ignoring maintenance, and misunderstanding the environment. A user may expect perfect cleaning even when the floor is cluttered. Dust can block sensors. Wheels can get tangled in cords. Maps can become outdated when furniture is rearranged. The lesson is simple: robots work best when the environment supports them. In many homes, people still prepare the space so the robot can succeed. That is not failure; it is part of real human-robot workflow.

For beginners, home robots are a useful learning model because they combine sensors, actuators, navigation, battery management, and user interaction in one visible system. By studying them, you can begin matching robot types to practical jobs: a mobile cleaning robot for floor care, a companion robot for reminders and simple conversation, and a telepresence robot for remote family support. Each choice reflects a real use, a technical design, and a limit.

Warehouses are one of the strongest real-world examples of robotics success. In these spaces, robots move shelves, carry bins, scan inventory, sort packages, and sometimes assist with picking items for orders. The environment is more controlled than a public street, which makes automation easier to deploy at scale. This is why many businesses adopt warehouse robots before attempting fully autonomous city delivery.

Different robot types match different jobs. Autonomous mobile robots, often called AMRs, navigate through warehouse aisles and transport goods from one station to another. Automated guided vehicles, or AGVs, usually follow fixed paths or markers and are more rule-based. Robotic arms may pick, place, palletize, or sort items. Drones can sometimes be used for inventory checking in large storage facilities. Matching the robot to the job requires engineering judgment. If the route never changes, a simpler AGV may be enough. If the layout changes often and human workers share the space, an AI-enabled AMR may be a better fit.
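The AGV versus AMR guideline above can be written down as a small decision helper. This is only a sketch of the two cases the paragraph describes. The function name is hypothetical, and a real selection would also weigh cost, payload, safety rules, and more.

```python
def suggest_warehouse_robot(layout_changes_often, shares_space_with_people):
    """Suggest a robot type from two yes/no questions about the site."""
    if not layout_changes_often:
        # Fixed routes: a simpler, more rule-based vehicle may be enough.
        return "AGV"
    if shares_space_with_people:
        # Changing layout plus human coworkers: adaptive navigation helps.
        return "AMR"
    # The text gives no guidance for this case, so the sketch does not guess.
    return "needs closer review"


print(suggest_warehouse_robot(False, False))  # AGV
print(suggest_warehouse_robot(True, True))    # AMR
```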

The workflow matters more than the robot by itself. A warehouse robot usually connects to barcode systems, order software, battery charging stations, and human workers. The robot senses location, decides a path, and acts by moving or lifting. AI may help with path planning, object recognition, demand prediction, or pick optimization. But even strong AI does not remove the need for clear process design. If package labels are unreadable, storage is inconsistent, or aisles are blocked, performance drops quickly.

In delivery, the challenge becomes harder. Sidewalk robots and self-driving delivery vehicles must deal with weather, pedestrians, curbs, road rules, and unusual obstacles. Here the trade-offs become obvious. The benefits are reduced labor demand and possibly faster local delivery. The costs are technical complexity, safety concerns, legal restrictions, and difficult edge cases. A robot may handle, say, 95 percent of situations well, but the remaining 5 percent can include the hardest and most dangerous ones.

Beginners should notice an important lesson from this industry: robots thrive in structured environments first. Warehouses work because they are designed around repeatable movement and measurable tasks. Public delivery is attractive, but much less predictable. That is why many entry-level robotics projects focus on indoor navigation, shelf scanning, or package sorting before moving into open-world autonomy. These are excellent practical pathways into robotics because they teach sensing, mapping, motion planning, and human safety in a business setting.

Healthcare robotics is one of the most sensitive and important application areas. In hospitals and care facilities, robots can transport supplies, disinfect rooms, support rehabilitation exercises, assist with telepresence, and help staff monitor patients. In elder support, robots may provide reminders, fall detection, communication support, or mobility assistance. The practical goal is usually not to replace caregivers, but to reduce repetitive workload and improve safety and consistency.