Reinforcement Learning — Beginner

Learn RL from zero with simple game and robot projects

This beginner course is designed like a short technical book, but taught as a guided learning journey. If you have heard the term reinforcement learning and assumed it was too advanced, too mathematical, or too technical, this course is for you. You do not need prior experience in artificial intelligence, programming, machine learning, or data science. Everything starts from first principles and uses plain language, simple examples, and a steady step-by-step structure.

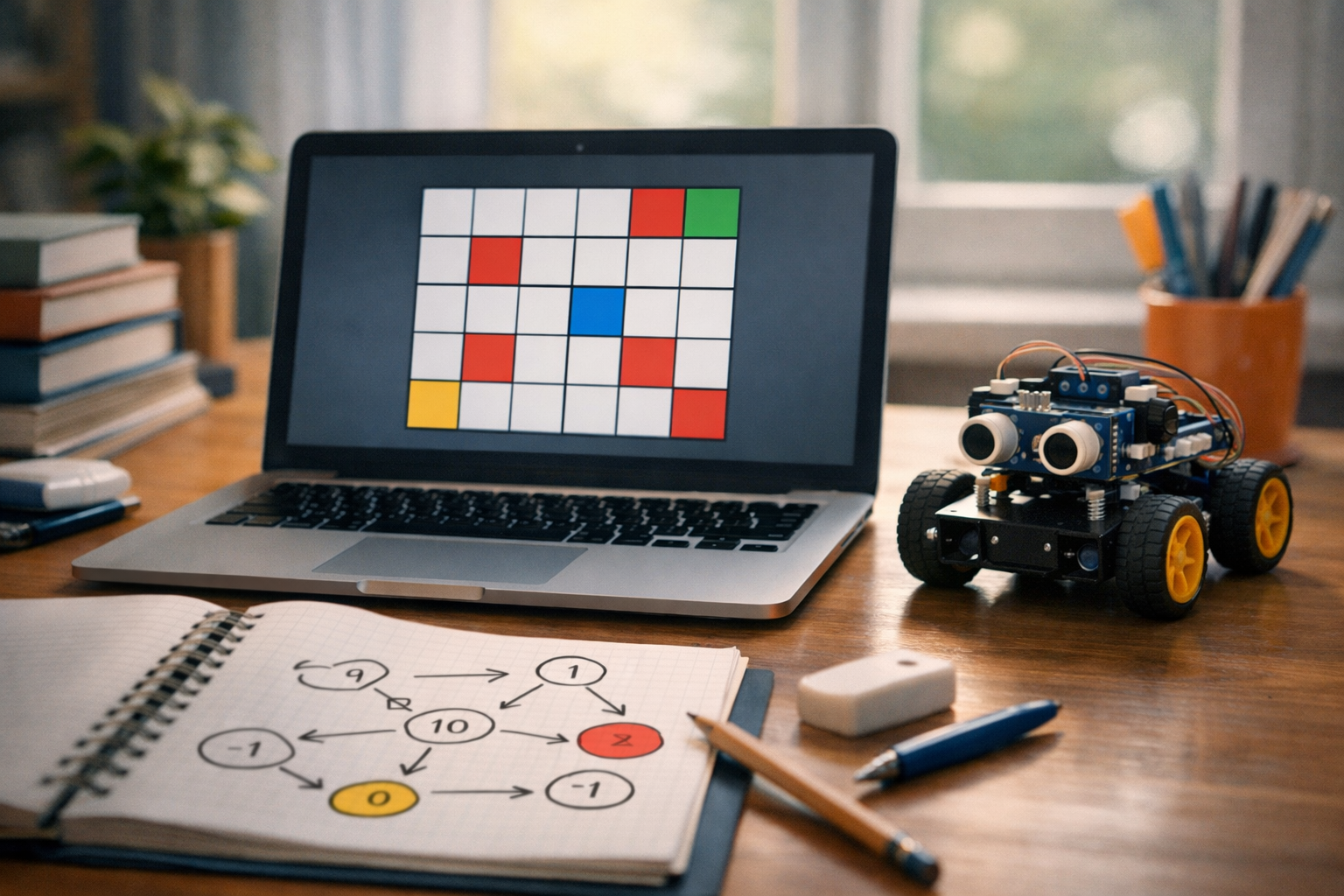

Reinforcement learning is a way for a computer agent to learn by trying actions and receiving rewards or penalties. That idea may sound abstract at first, so this course makes it concrete through game and robot ideas. You will see how an agent learns to move through a small game world, make better decisions over time, and improve through repeated experience. You will also see how the same logic can be used to think about robot tasks such as moving, avoiding obstacles, or reaching a target.

Many introductions to reinforcement learning begin with formulas, advanced code, or technical vocabulary. This course does the opposite. First, you learn what the main parts of reinforcement learning mean: agent, environment, state, action, reward, and goal. Then you learn how choices are made, why reward design matters, and how an agent balances trying new things with using what it already knows. Only after that do you move into value, Q-values, and the basic logic behind Q-learning.

The course follows a clear sequence across six chapters. Each chapter builds on the previous one so you never feel lost. The result is not just memorizing terms, but actually understanding how learning by trial and error works.

By the end of the course, you will be able to explain reinforcement learning in simple words, understand the role of states, actions, and rewards, and describe how an agent improves over time. You will understand the difference between immediate and future reward, know why exploration matters, and see how a Q-table stores useful experience. You will also build beginner intuition for game-based learning tasks and simple robot-learning scenarios.

This course is not about making you an expert overnight. Instead, it gives you a strong foundation that many beginners never receive. Once you understand the basic loop of reinforcement learning, later topics become much easier to approach.

The structure is intentionally compact and coherent. Think of it as a short book you can study chapter by chapter. Chapter 1 introduces the core idea of reinforcement learning. Chapter 2 focuses on decision-making and reward design. Chapter 3 explains value and Q-learning in a beginner-safe way. Chapter 4 applies the ideas to simple games. Chapter 5 extends that thinking into beginner robot ideas. Chapter 6 ties everything together, explains limits and next steps, and helps you move forward with confidence.


This course is ideal for curious beginners, students, career changers, hobbyists, and professionals who want a gentle entry point into AI. It is especially helpful if you want to understand how learning agents work in games, simulations, and simple robotic systems. If you have ever wanted an RL course that explains the basics slowly and clearly, this is a strong place to start.

By the end, you will not just know the words used in reinforcement learning. You will understand the logic behind them, recognize where RL fits in the AI landscape, and feel prepared to take your next step with much more confidence.

Machine Learning Engineer and AI Educator

Sofia Chen designs beginner-friendly AI learning programs that turn difficult ideas into practical steps. She has helped students and working professionals understand machine learning through clear examples, hands-on projects, and plain-language teaching.

Reinforcement learning, often shortened to RL, is a way of teaching a system to make decisions by interacting with a situation and learning from the results. Instead of giving a machine a full list of rules for every possible case, we give it a goal and a way to notice whether its behavior is helping or hurting. Over time, it improves through trial and error. This makes RL feel different from normal programming. In ordinary programming, a developer writes explicit instructions: if this happens, do that. In reinforcement learning, we define the setting, the choices available, and the feedback signal, then let the agent discover useful behavior by experience.

This idea sounds abstract at first, but it is easier to understand when you think about how people learn many real skills. A child does not memorize a giant rulebook before learning to balance on a bicycle. They try, wobble, adjust, and slowly connect actions to outcomes. A game-playing system does something similar. A simple robot trying to move across a room also does something similar. It takes an action, sees what changed, and learns whether that change was good, bad, or neutral. That repeated loop is the heart of reinforcement learning.

Throughout this chapter, we will keep the language practical. You do not need advanced math to understand the key ideas. The core pieces are simple: an agent is the learner or decision-maker, the environment is everything it interacts with, an action is a choice it can make, and a reward is the feedback it receives. The agent wants to collect good rewards over time, not just in one moment. That last detail matters because the best immediate move is not always the best long-term move. Much of RL is about learning to think ahead without being manually told how.

As you work through this course, these ideas will lead directly into practical tools. You will build intuition for policies, which are decision rules; value, which estimates how useful situations and actions are; and exploration versus exploitation, which is the balance between trying something new and using what already seems to work. Later, when you study Q-learning, these ideas will snap into place because you will already understand the learning loop in plain language. For now, focus on the picture: reinforcement learning is learning by acting, seeing consequences, and gradually improving behavior toward a goal.

This chapter is designed to give you a solid mental model before any heavy technical detail appears. If you can clearly explain why rewards are different from hand-written instructions, how an agent and environment interact, and why goals shape behavior, then you are already thinking like a reinforcement learning practitioner. That practical intuition is the foundation for everything that follows, from tiny grid games to movement tasks for simulated robots.

Practice note for this chapter's four objectives (seeing how learning by rewards differs from normal programming; understanding agents, environments, actions, and rewards; connecting RL ideas to games, robots, and daily life; and building a simple mental model of trial-and-error learning): document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

The most important shift in reinforcement learning is this: we stop trying to program every correct move directly, and instead create a system that can learn from consequences. In normal programming, we often know the exact logic we want. For example, if a login password is wrong, reject it. If a file does not exist, show an error. Those tasks are predictable and rule-based. Reinforcement learning is useful when writing all the right rules by hand is too hard, too brittle, or too dependent on experience.

Imagine a small game where a character must reach a goal square while avoiding traps. You could try to write a massive list of rules for every board layout, but that quickly becomes messy. An RL approach works differently. The character tries moving up, down, left, or right. If it reaches the goal, it gets a positive reward. If it falls into a trap, it gets a negative reward. If it wastes time, maybe it gets a small penalty. Over many attempts, it starts to notice which actions tend to lead to better outcomes.

This is why people describe RL as learning by trial and error. The phrase sounds simple, but there is engineering judgment hidden inside it. You must design the task so that good behavior can actually be discovered. If rewards are too rare, the agent may wander without learning much. If rewards are poorly chosen, the agent may learn strange shortcuts that technically earn points but miss the spirit of the task. Beginners often assume the agent will “understand” the real intention automatically. It will not. It only learns from the feedback you define.

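A practical way to think about RL is as a loop:

1. The agent observes its current situation.
2. It chooses an action.
3. The environment responds, and the situation changes.
4. The agent receives a reward or penalty.
5. It adjusts its future choices based on that feedback.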

That loop repeats again and again. The system improves not because it was told the perfect answer, but because it keeps connecting actions to results. This is the central idea you should carry into every later chapter.
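To make the loop concrete, here is a minimal sketch in Python. The world is a hypothetical five-position line with the goal at the far end, and the names `step` and `run_episode`, along with the reward numbers, are assumptions chosen for illustration. The agent picks actions at random, so the sketch shows only the interaction loop, not learning.

```python
import random

# Hypothetical toy world: positions 0..4 on a line, with the goal at position 4.
GOAL = 4
ACTIONS = ["left", "right"]

def step(position, action):
    """Environment: apply the action and return (new_position, reward, done)."""
    if action == "right":
        position = min(position + 1, GOAL)
    else:
        position = max(position - 1, 0)
    if position == GOAL:
        return position, 10, True   # assumed reward for reaching the goal
    return position, -1, False      # assumed small penalty for every other step

def run_episode(rng, max_steps=50):
    """Agent: chooses actions at random here, so nothing is learned yet."""
    position, total_reward = 0, 0
    for _ in range(max_steps):
        action = rng.choice(ACTIONS)                      # choose an action
        position, reward, done = step(position, action)   # the world responds
        total_reward += reward                            # receive feedback
        if done:
            break
    return total_reward

rng = random.Random(0)
print(run_episode(rng))
```

A learning agent would keep this same loop and add one more step: using each reward to adjust how it chooses actions next time.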

Every reinforcement learning problem can be described as an interaction between two parts: the agent and the environment. The agent is the learner, the decision-maker, or the controller. The environment is the world the agent acts in. In a video game, the agent could be the player character controlled by the learning system, and the environment would include the game map, enemies, walls, score rules, and timing. In a robot problem, the agent is the robot controller, while the environment includes the floor, obstacles, battery state, and physical responses to movement.

This separation is useful because it gives structure to the problem. When you build an RL project, you ask clear questions. What information does the agent get to observe? What actions is it allowed to take? What reward signal will it receive? When does one attempt end? These questions are practical, not just theoretical. They shape whether the system can learn well or struggle for no clear reason.

For beginners, one common mistake is to make the environment too vague. If you say, “I want the agent to move well,” that is not enough. Move where? Avoid what? How does the world respond? Another common mistake is to give the agent unrealistic powers, such as seeing hidden future information that would not be available in real use. A well-designed environment should match the problem you actually care about solving.

It also helps to remember that the environment does not need to be a physical place. It can be a board game, a recommendation system simulation, a warehouse route planner, or a toy grid where an object moves between cells. The world can be tiny and simple. In fact, it should be simple when you are learning. Small environments are easier to debug, easier to visualize, and much better for building intuition.

Good engineering practice in reinforcement learning begins with a clear agent-environment boundary. If you define that boundary well, the rest of the workflow becomes much easier to reason about. If you define it poorly, even a correct algorithm can appear broken.

Now let us unpack the three most common RL words: state, action, and reward. A state is the current situation the agent is in, described in whatever form matters for decision-making. In a grid game, the state might be the agent’s location, where the goal is, and where the obstacles are. In a robot movement task, the state might include position, direction, and distance from a target. You can think of the state as “what the agent knows right now that helps it choose what to do next.”

An action is a choice the agent can make. In simple tasks, actions are easy to name: move left, move right, pick up, drop, stop, accelerate. Actions do not need to be smart by themselves. They are just options. What matters is learning which action is useful in which state. A move that is good in one place may be terrible in another. That is why RL is not about finding one universally good action. It is about matching actions to situations.

A reward is the feedback signal. It tells the agent whether the immediate result of its action was desirable. Rewards can be positive, negative, or zero. Reaching a goal may give +10. Hitting a wall may give -5. Taking a step may give -1 to encourage shorter paths. Notice that reward is not the same as human praise or explanation. It is a simple numeric signal that pushes learning.
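As a small illustration, the example numbers above can be written as a lookup table. The event names and the sample episode are assumptions made up for this sketch.

```python
# Reward numbers taken from the example in the text; event names are hypothetical.
REWARDS = {"goal": 10, "wall": -5, "step": -1}

def reward_for(event):
    """Return the numeric feedback for one event."""
    return REWARDS[event]

# A made-up episode: two steps, one bump into a wall, one more step, then the goal.
episode = ["step", "step", "wall", "step", "goal"]
total = sum(reward_for(event) for event in episode)
print(total)  # -1 - 1 - 5 - 1 + 10 = 2
```

The agent never sees an explanation of why the wall was bad. It only sees the numbers.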

Beginners sometimes confuse rewards with goals. They are related, but not identical. The goal is the overall behavior you want. The reward is the mechanism used to guide learning toward that behavior. If the reward is poorly designed, the agent may optimize the wrong thing. For example, if a cleaning robot gets reward only for moving, it may spin in circles forever. If it gets reward for covering new floor area efficiently, it has a better reason to clean properly.

When you later study value and Q-learning, these simple ideas become very powerful. Value asks how good a state or action seems over time, not just right now. But the base vocabulary never changes: state, action, reward. If you understand those in plain language, you are ready to move forward.

Goals are the compass of reinforcement learning. Without a clear goal, the agent has no meaningful direction. It may still collect whatever rewards the environment happens to offer, but the behavior it learns could be random, wasteful, or misleading. In practice, one of the biggest differences between a toy RL demo and a useful RL system is how well the goal has been translated into rewards, success conditions, and episode structure.

Consider two versions of a movement task. In the first, a robot gets a small positive reward for every second it remains powered on. It might learn to stand still forever. In the second, it gets a larger reward for reaching a target quickly and a small penalty for each time step. Now the learned behavior is more likely to involve purposeful movement. The goal changed the reward design, and the reward design changed the behavior.
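The two designs can be compared with a few lines of arithmetic. This is only a sketch: the specific numbers (+1 per time step, +10 for the target, -1 per time step, a 20-step limit) and the assumption that an episode ends when the target is reached are illustrative choices, not values from a real robot.

```python
def design_one(steps_taken, reached_target):
    """First version: +1 for every time step the robot stays powered on."""
    return steps_taken * 1

def design_two(steps_taken, reached_target):
    """Second version: +10 for reaching the target, -1 for each time step."""
    return (10 if reached_target else 0) - steps_taken

# Two hypothetical behaviors, described as (steps_taken, reached_target).
behaviors = {
    "stand_still": (20, False),   # waits until the assumed 20-step limit
    "go_to_target": (5, True),    # reaches the target in 5 steps, episode ends
}

for name, (steps, reached) in behaviors.items():
    print(name, design_one(steps, reached), design_two(steps, reached))
```

Under these assumed numbers, the first design scores standing still higher (20 versus 5), while the second design scores reaching the target higher (5 versus -20).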

This is where engineering judgment matters. A good RL setup does not just ask, “Can the agent get points?” It asks, “Are these points aligned with the behavior I actually want?” If you reward only the final success and nothing else, learning may be slow because the agent rarely stumbles onto the right sequence by chance. If you reward too many small shortcuts, the agent may exploit those shortcuts instead of solving the intended task. Designing goals and rewards is often as important as choosing the algorithm.

Another practical point is that goals often involve long-term thinking. In many tasks, the best immediate reward is not the best total reward. A game agent may need to step away from a small coin to avoid a trap and reach a much larger prize later. A delivery robot may take a slightly longer path now to avoid a blocked route that would waste more time later. RL is powerful because it can learn behavior that values future outcomes, not just instant gains.

If you remember one lesson here, let it be this: the agent learns what your setup measures, not what you secretly mean. Clear goals create clear learning. Fuzzy goals create surprising mistakes.

Before touching code, it helps to connect reinforcement learning to everyday situations. Think about learning to park a bicycle in a crowded rack. Early attempts may be awkward. You try an angle, the wheel slips, you adjust, and eventually you learn what placement works. The “reward” is not a number in real life, but the idea is the same: stable parking is a good outcome, bumping into other bikes is a bad one, and repeated practice improves your choices.

Games are one of the clearest RL examples. In a maze game, the player learns which directions lead to treasure and which lead to dead ends or enemies. In a racing game, turning too sharply may crash the car, while smooth steering and speed control help finish the track faster. The agent does not need a written essay explaining every move. It needs experience and feedback.

Robots provide another strong example. A small warehouse robot may need to navigate around shelves to reach a package. It senses where it is, chooses movement actions, and receives feedback based on progress, collisions, delay, or successful delivery. Even a very simple movement task can teach the core RL idea: good control emerges from repeated interaction with the environment.

RL ideas also appear in daily habits. Suppose you are trying different routes to commute to work. You test one road, hit traffic, and remember it was slow. Another route is longer in distance but faster in time. Over repeated days, you build a policy for which route to choose under different conditions. That word policy simply means the strategy the agent uses to decide actions in states.
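In its simplest form, a policy can be pictured as a lookup from situation to choice. The conditions and route names below are hypothetical, and a learned policy would fill in this table from experience rather than by hand.

```python
# A hand-written policy for the commute example: situation -> chosen route.
policy = {
    "clear_roads": "short_route",
    "heavy_traffic": "long_route",
    "rain": "long_route",
}

def choose_route(condition):
    """Look up the action the policy recommends for this state."""
    return policy[condition]

print(choose_route("heavy_traffic"))
```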

These examples also hint at exploration versus exploitation. Exploration means trying something new to gather information. Exploitation means using what already seems to work. If you always take the same commute route, you may miss a better one. If you always experiment, you never benefit from what you have learned. Reinforcement learning lives in that balance. Good learners do both: they explore enough to discover useful options, then exploit enough to make progress.

Let us end with a tiny story that previews how reinforcement learning works in practice. Imagine a 4-by-4 grid. A small agent starts in the top-left corner. The goal is in the bottom-right corner. One cell contains a trap. The agent can move up, down, left, or right. Reaching the goal gives +10 reward. Falling into the trap gives -10. Every normal step gives -1, so shorter paths are better.
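The grid described above can be sketched in a few lines of Python. The trap position and the rule that bumping into a wall leaves the agent in place are assumptions, since the story does not specify them.

```python
SIZE = 4
START, GOAL = (0, 0), (3, 3)   # top-left and bottom-right corners as (row, col)
TRAP = (1, 2)                  # assumption: the text does not say which cell
MOVES = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}

def step(state, action):
    """Return (next_state, reward, done) for one move on the grid."""
    d_row, d_col = MOVES[action]
    # Assumption: moving into a wall leaves the agent where it is.
    row = min(max(state[0] + d_row, 0), SIZE - 1)
    col = min(max(state[1] + d_col, 0), SIZE - 1)
    next_state = (row, col)
    if next_state == GOAL:
        return next_state, 10, True    # reaching the goal
    if next_state == TRAP:
        return next_state, -10, True   # falling into the trap
    return next_state, -1, False       # every normal step

# Walk one hand-picked path: three moves down, then three moves right.
state, total = START, 0
for action in ["down", "down", "down", "right", "right", "right"]:
    state, reward, done = step(state, action)
    total += reward
print(state, total, done)  # five steps at -1 each, then +10 at the goal
```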

At the beginning, the agent knows nothing. It may move randomly and often make poor choices. Some attempts end quickly in the trap. Some wander in circles. Occasionally it reaches the goal by luck. But each run produces experience: “When I was in this place and moved right, the result tended to be bad,” or “From this cell, moving down often led closer to success.” This experience starts shaping future decisions.

Now we can gently introduce the logic behind Q-learning without formal math. Q-learning keeps a running estimate of how useful each action is in each state. If taking an action from a particular cell often leads to better future rewards, that action’s score goes up. If it often leads to traps or wasted steps, the score goes down. Over time, the agent begins choosing actions with better learned scores more often.
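For readers who like to see the idea as code, here is a sketch of the standard tabular Q-learning update. The learning rate `alpha` and the discount factor `gamma` are parameters the text has not introduced yet, and the values used here are arbitrary assumptions.

```python
from collections import defaultdict

ACTIONS = ["up", "down", "left", "right"]

def q_update(q, state, action, reward, next_state, done, alpha=0.5, gamma=0.9):
    """Nudge the score for (state, action) toward reward plus future value."""
    # If the episode ended, there is no future value to add.
    best_next = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
    target = reward + gamma * best_next
    q[(state, action)] += alpha * (target - q[(state, action)])

q = defaultdict(float)  # every score starts at 0.0

# Moving right from (3, 2) reached the goal: the score goes up.
q_update(q, (3, 2), "right", 10, (3, 3), done=True)
print(q[((3, 2), "right")])   # 0 + 0.5 * (10 - 0) = 5.0

# Moving right from (3, 1) cost a step but led to a cell with a good option.
q_update(q, (3, 1), "right", -1, (3, 2), done=False)
print(q[((3, 1), "right")])   # 0 + 0.5 * (-1 + 0.9 * 5.0 - 0) = 1.75
```

The second update shows how good news travels backward: a cell next to the goal becomes attractive, and then the cell before it does too.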

Notice the workflow. First, define the world. Second, define allowed actions. Third, define rewards that reflect the goal. Fourth, let the agent interact many times. Fifth, update the action estimates from the results. This is exactly the practical pattern you will use later for both grid games and movement tasks.
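The five steps can be put together into one small program. This is a sketch under stated assumptions rather than a reference implementation: the trap position, the wall behavior, the 100-step cap per episode, and the settings `alpha`, `gamma`, and `epsilon` are all illustrative choices.

```python
import random
from collections import defaultdict

# Step 1: define the world (trap position is an assumption for this sketch).
SIZE, START, GOAL, TRAP = 4, (0, 0), (3, 3), (1, 2)
# Step 2: define the allowed actions.
MOVES = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}
ACTIONS = list(MOVES)

def step(state, action):
    # Step 3: rewards that reflect the goal (+10 goal, -10 trap, -1 per step).
    row = min(max(state[0] + MOVES[action][0], 0), SIZE - 1)
    col = min(max(state[1] + MOVES[action][1], 0), SIZE - 1)
    nxt = (row, col)
    if nxt == GOAL:
        return nxt, 10, True
    if nxt == TRAP:
        return nxt, -10, True
    return nxt, -1, False

def greedy(q, state):
    """Pick the action with the best learned score in this state."""
    return max(ACTIONS, key=lambda a: q[(state, a)])

def train(episodes=2000, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = defaultdict(float)
    for _ in range(episodes):
        state, done, steps = START, False, 0
        # Step 4: let the agent interact many times.
        while not done and steps < 100:
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)   # occasionally try something new
            else:
                action = greedy(q, state)      # otherwise use what it knows
            nxt, reward, done = step(state, action)
            # Step 5: update the action estimate from the result.
            best_next = 0.0 if done else max(q[(nxt, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state, steps = nxt, steps + 1
    return q

def greedy_path(q, max_steps=20):
    """Follow the best learned action from the start and record the cells."""
    state, path, done = START, [START], False
    while not done and len(path) <= max_steps:
        state, _, done = step(state, greedy(q, state))
        path.append(state)
    return path

q = train()
print(greedy_path(q))
```

After training, following the best-scoring action from the start should trace a route to the goal that avoids the trap. Printing the path after only a handful of episodes instead is a good way to see how rough early behavior looks.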

A common beginner mistake is expecting perfect behavior after only a few attempts. Reinforcement learning usually needs many episodes because the agent is discovering patterns through experience. Another mistake is making the tiny world too complicated too early. Start small. A simple grid teaches the core ideas clearly: trial and error, delayed outcomes, better and worse actions, and gradual improvement. Once that picture is solid, larger examples become much easier to understand.

That is the real spirit of reinforcement learning: not magic, not memorization, but repeated action, feedback, and improvement toward a goal.

1. What makes reinforcement learning different from normal programming?

2. In reinforcement learning, what is the agent?

3. Which example best matches the chapter’s mental model of reinforcement learning?

4. Why does the chapter say rewards matter over time, not just in one moment?

5. Which description best captures the core loop of reinforcement learning?

In reinforcement learning, the heart of the problem is choice. An agent is placed in a situation, notices what is happening, picks an action, and then experiences a result. That cycle repeats again and again. If you keep this simple loop in mind, most beginner ideas in reinforcement learning become easier to follow. The agent does not begin with deep understanding. It learns from experience, one decision at a time. In practical terms, this means the agent is always asking a small question: given what I can observe right now, what should I do next?

This chapter builds intuition for that decision process without heavy math. We will look at how states describe the current situation, how actions are the available moves, and how rewards push the agent toward better behavior. We will also connect those ideas to everyday engineering judgment. In real projects, the hardest part is often not the algorithm itself. It is defining the task clearly enough that the agent can learn something useful. A small mistake in reward design or action choices can create strange behavior that looks clever at first but fails the real goal.

Another key idea is that learning happens over time, not just in one step. A move that looks bad right now may lead to a better outcome later. A robot may need to back up before moving forward. A game agent may step away from a small coin so it can later reach a larger reward. This is why reinforcement learning feels different from simple rule-based programming. We are not only asking what seems best immediately. We are asking what helps over the whole journey.

As you read, keep a concrete image in mind: a small character in a grid world. It sees its current square, can move up, down, left, or right, and gets rewards based on where it ends up. That toy example is powerful because it mirrors larger real systems. A warehouse robot, a game bot, or a scheduling agent all operate through the same loop of state, action, reward, and repeated adjustment. By the end of this chapter, you should be able to describe that loop clearly, understand why exploration matters, and explain a policy as a practical decision rule rather than an abstract technical object.

We will also prepare the ground for Q-learning. You do not need formulas yet to follow its logic. Q-learning asks a practical question: for each state and action, how good does that choice seem based on experience so far? If the agent keeps trying actions, collecting rewards, and updating its estimates, it gradually learns which choices are promising. That is the big picture. The rest of this chapter focuses on the pieces that make those updates meaningful.

Practice note for this chapter's four objectives (understanding decisions one step at a time, learning why good rewards shape good behavior, exploring exploration versus exploitation, and describing a policy without technical overload): document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

A state is the information the agent uses to decide what to do. An action is one of the moves available from that situation. If a game character is standing in the top-left corner of a grid, that position is part of the state. If a robot senses that a wall is close on the left side, that reading may also be part of the state. The action might be move right, move forward, stop, or turn. Reinforcement learning begins by connecting these two ideas: when the state looks like this, which action should I take?

For beginners, it helps to think of the state as a snapshot. It does not need to include everything in the universe. It needs to include enough useful information for a good next decision. This is an engineering judgment call. If the state leaves out something important, the agent may appear confused because different situations look identical to it. If the state includes too much irrelevant detail, learning may become slow because the agent must handle many unnecessary variations.
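A short sketch can show what it means for different situations to look identical. The `has_key` detail is a hypothetical example of information that matters for the task, and both observation functions are made up for illustration.

```python
from typing import NamedTuple

class Situation(NamedTuple):
    row: int
    col: int
    has_key: bool   # hypothetical detail that matters for the task

def position_only(situation):
    """A state that leaves out the key."""
    return (situation.row, situation.col)

def position_and_key(situation):
    """A state that keeps the key."""
    return (situation.row, situation.col, situation.has_key)

with_key = Situation(2, 3, has_key=True)
without_key = Situation(2, 3, has_key=False)

# With position only, the agent cannot tell these two situations apart.
print(position_only(with_key) == position_only(without_key))
# With the key included, the two situations become different states.
print(position_and_key(with_key) == position_and_key(without_key))
```

If the right action depends on holding the key, an agent using the first state will look confused no matter how long it trains.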

A common mistake is to define actions that are either too weak or too broad. If actions are too weak, the agent may need far too many steps to do anything meaningful. If they are too broad, one action may change too much at once, making outcomes hard to learn from. In practice, good action design means each move should be understandable, repeatable, and clearly tied to the task. In a grid, the four movement actions are a good beginner choice because their effects are easy to observe.

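When we say the agent chooses an action from a state, we are describing the most basic workflow in reinforcement learning:

1. Observe the current state.
2. Choose one of the available actions.
3. See the result: a reward and a new state.
4. Use that result to adjust future choices.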

That loop is simple, but it carries a deep idea. The agent does not learn by being told the perfect move directly. It learns by trying moves and seeing the consequences. In early training, choices may look random. Over time, useful patterns appear. If moving right from one square often leads closer to a goal, that action starts to look better in that state. This is the basic intuition behind value estimates and later Q-learning tables.

The practical outcome is that every reinforcement learning system depends on clear state and action definitions. Before worrying about algorithms, ask: what does the agent know right now, and what exactly can it do next? Good answers make learning possible.

One of the most important mindset shifts in reinforcement learning is learning to look beyond the next step. Some actions produce a reward immediately. Others seem unhelpful now but create better opportunities later. An agent has to learn the difference. If it only chases what pays right away, it may miss larger gains that require patience.

Imagine a maze with a small coin nearby and a treasure chest farther away. If the agent grabs the coin and gets stuck in a dead end, the immediate reward looked good but the total outcome was poor. Another path may involve taking several empty moves with no reward at all, then reaching the treasure. A beginner might wonder why the agent would ever choose the empty path. The answer is that reinforcement learning tries to judge actions by what they lead to, not only by what they give in the moment.
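A few lines of arithmetic make the comparison visible. The reward numbers and path lengths here are assumptions invented for this sketch.

```python
# Rewards received at each step along two hypothetical paths.
coin_path = [1, 0, 0, 0, 0, 0]        # small coin first, then a dead end
treasure_path = [0, 0, 0, 0, 0, 10]   # empty moves first, then the treasure

def reward_so_far(rewards):
    """Running total of reward after each step."""
    totals, running = [], 0
    for reward in rewards:
        running += reward
        totals.append(running)
    return totals

print(reward_so_far(coin_path))       # ahead after the first step
print(reward_so_far(treasure_path))   # ahead by the end
```

Judged after one step, the coin path looks better. Judged over the whole sequence, the treasure path wins by a wide margin.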

This idea is central to building intuition for value. Value is not just about the present state looking pleasant. It is about how promising that state is for future rewards. In practical language, a good state is one from which the agent is likely to collect good rewards from that point on. A good action is one that tends to move the agent into such states. Q-learning builds exactly this kind of memory. It updates its estimate for a state-action pair based partly on the reward now and partly on what seems possible next.

Engineering judgment matters here because tasks differ. In some environments, short-term rewards are enough. In others, a focus on future reward is essential. A balancing robot might need immediate feedback to avoid falling, while a path-planning agent might accept small step costs as it heads toward a distant goal. This is why reward timing affects behavior so strongly.

A common mistake is to assume the agent is failing when it temporarily moves away from reward. Sometimes that behavior is actually smart. Another mistake is giving rewards only at the final goal and nowhere else. That can make learning painfully slow because the agent receives too little guidance. A practical compromise is often to keep the main goal reward large, while also using small signals that encourage progress without changing the real objective.

The practical outcome is clear: when you evaluate an agent's choice, do not ask only, “What happened right after the move?” Also ask, “What path did this move open up?” Reinforcement learning becomes much easier to understand when you think in sequences rather than isolated steps.

Rewards shape behavior. This sounds obvious, but it is one of the most important practical truths in reinforcement learning. The agent does not understand your intentions. It only experiences the reward signal you provide. If that signal points in the wrong direction, the agent may learn behavior that technically earns reward while failing the real task.

Good reward design starts by asking what success actually looks like. In a navigation task, success might mean reaching the target quickly and safely. In a game, it might mean collecting points while avoiding hazards. Once the goal is clear, the reward should make useful behavior easier to discover. For example, a positive reward for reaching the goal, a small negative reward per step, and a larger negative reward for hitting a trap is a simple and often effective design. The step penalty encourages efficiency, the goal reward encourages completion, and the trap penalty discourages risky mistakes.

Bad reward design often creates loopholes. Suppose a robot gets reward every time it moves its wheels, regardless of whether it gets closer to the destination. It may learn to spin in place forever because wheel movement keeps paying. Suppose a game agent gets small rewards for touching coins but no penalty for wasting time. It may circle around easy coins instead of finishing the level. These are not signs that the agent is broken. They are signs that the reward taught the wrong lesson.
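The wheel-movement loophole can be shown with a short comparison. Both reward functions and the step data are assumptions for illustration. The progress-based reward is one possible alternative, not the only fix.

```python
def wheel_reward(distance_before, distance_after, wheels_moved):
    """Loophole-prone design: pays for any wheel movement."""
    return 1 if wheels_moved else 0

def progress_reward(distance_before, distance_after, wheels_moved):
    """Alternative design: pays only for getting closer to the destination."""
    return distance_before - distance_after

# Each step is (distance_before, distance_after, wheels_moved).
spinning = [(5, 5, True)] * 10   # ten steps of spinning in place
driving = [(5, 4, True), (4, 3, True), (3, 2, True), (2, 1, True), (1, 0, True)]

def score(reward_fn, steps):
    """Total reward a behavior earns under a given reward function."""
    return sum(reward_fn(*one_step) for one_step in steps)

print(score(wheel_reward, spinning), score(wheel_reward, driving))
print(score(progress_reward, spinning), score(progress_reward, driving))
```

Under the first design, spinning in place earns 10 and driving to the destination earns only 5. Under the second, spinning earns 0 and driving earns 5, so the useful behavior is the one that pays.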

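There are several practical checks you can apply when designing rewards:

1. Does the reward match what success actually looks like for the task?
2. Can the agent collect reward without making real progress toward the goal?
3. Is there any cost for wasting time?
4. Is the same behavior rewarded consistently?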

A common beginner mistake is to pile on many reward rules at once. That can make behavior hard to interpret. Start simple. Observe what the agent learns. Then adjust one part at a time. Another mistake is making rewards too noisy or inconsistent, so the same behavior is sometimes praised and sometimes punished without a clear reason.

The practical outcome is that reward design is not decoration around the algorithm. It is part of the algorithm's teaching method. Good rewards produce good habits. Poor rewards produce surprising, often amusing, but usually unhelpful behavior. In real reinforcement learning work, a thoughtful reward function is often the difference between a trainable agent and a frustrating experiment.

An agent faces a constant tradeoff between exploration and exploitation. Exploration means trying actions that may be uncertain, simply to learn more. Exploitation means choosing the action that currently seems best based on past experience. Both are necessary. If the agent only exploits, it may get stuck doing something that is good enough but not truly best. If it only explores, it may never settle into strong performance.

Think about a child choosing between two paths in a park. One path has led to a small reward before, so it feels safe. The other path is unknown and might lead to something better. Reinforcement learning systems face this same situation all the time. In a grid world, the agent may find a nearby coin early and keep returning to it. Without exploration, it may never discover a larger treasure in another corner.

This tradeoff is especially important in the early stages of learning. At first, the agent knows very little, so exploration should be common. Later, after enough experience, it should spend more time exploiting what it has learned. In practical systems, this often means starting with more randomness and gradually reducing it. That approach lets the agent gather information first and then use it more confidently.
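One way to picture "more randomness first, less later" is a schedule that shrinks the exploration rate a little after every episode. The sketch below is only an illustration: the function name `epsilon_for_episode`, the exponential shape, and the numbers 1.0, 0.05, and 0.99 are assumptions chosen for the example, not settings prescribed by this course.

```python
def epsilon_for_episode(episode, epsilon_start=1.0, epsilon_min=0.05, decay=0.99):
    """Return the exploration rate for a given episode number (0-based).

    All default values are illustrative assumptions.
    """
    # Shrink the rate by a constant factor per episode, but keep a small
    # floor so the agent never stops exploring completely.
    return max(epsilon_min, epsilon_start * decay ** episode)


# Exploration is common early and rare later.
for episode in (0, 50, 200, 500):
    print(episode, round(epsilon_for_episode(episode), 3))
```

A slower decay (a value closer to 1) keeps the agent exploring for longer, which guards against the "reducing exploration too fast" mistake at the cost of slower settling.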

A common beginner mistake is to judge a learning agent too early. During exploration, the agent may look clumsy or irrational because it is intentionally trying actions that are not yet proven. Another mistake is reducing exploration too fast. The agent then locks in mediocre behavior because it stops searching before it has enough evidence.

Engineering judgment is about balance. In a small grid game, modest exploration may be enough because the space is simple. In a larger robot task, broader exploration may be needed to discover workable strategies. Safety also matters. For a real robot, pure random exploration can be dangerous, so action limits or safe training environments are important.

The practical outcome is that exploration is not wasted time. It is how the agent builds the knowledge needed for better choices later. Exploitation uses that knowledge. A good reinforcement learning workflow respects both phases instead of expecting perfect behavior from the start.

A policy is simply the agent's rule for choosing actions. That is the beginner-friendly way to think about it. If the agent is in this state, the policy says what to do next. Sometimes the policy always picks one action. Sometimes it assigns chances to different actions. Either way, it is the practical map from situation to behavior.

This idea sounds formal, but it is very natural. A human driver has a policy too, even if they never use that word. When the traffic light is red, stop. When the lane is clear, move forward. In reinforcement learning, the policy may begin as random or weak, then improve through experience. Over time, as the agent learns which actions lead to stronger outcomes, the policy becomes more useful.

It helps to separate a policy from rewards and values. Rewards are signals from the environment. Value is the agent's estimate of how promising states or actions are. The policy is the behavior that results from using those estimates. In Q-learning, for example, the agent stores a score-like estimate for each state-action pair. A simple policy can then say: choose the action with the highest estimated score most of the time, but occasionally explore. This is a practical bridge between learning and acting.

A common mistake is thinking there must be one perfect policy from the beginning. In reality, policies evolve. Early policies may be poor but still useful because they generate experience. Another mistake is describing a policy too vaguely. In engineering work, you should be able to explain what the agent does in typical states and why. If you cannot describe the decision rule, debugging becomes hard.

Useful questions to ask about a policy include:

The practical outcome is that a policy is not an abstract mystery. It is the agent's current way of making choices. As learning improves, the policy improves. If you can describe the policy in plain language, you are already thinking like a reinforcement learning practitioner.

Small examples are the best way to build intuition before moving to bigger reinforcement learning problems. Consider a 4 by 4 grid with a coin at one square, a trap at another, and the agent starting in the corner. The state is the current square. The actions are up, down, left, and right. The rewards might be plus 10 for the coin, minus 10 for the trap, and minus 1 for each step. This simple setup teaches several ideas at once. The step penalty encourages shorter routes. The trap penalty teaches avoidance. The coin reward defines the main goal.

Now imagine a maze where the shortest-looking route leads near danger, but a slightly longer route is safer. If the agent learns only from immediate reward, it may make poor choices. If it learns to value future outcomes, it starts to prefer the safer path. This is exactly the kind of pattern Q-learning can capture over repeated episodes. After enough attempts, the agent's action estimates improve, and its policy becomes more reliable.

A movement task can make the same ideas feel physical. Picture a tiny robot on a line of five positions. It starts in the center. Moving right eventually reaches a charging station worth plus 5. Moving left reaches a slippery zone worth minus 5. There may also be a small penalty for every move. At first, the robot tries both sides. Over time, it discovers that some choices reliably lead to better total return. Even this toy problem reflects a real robotics idea: actions are judged by where they tend to lead, not just by the immediate sensation after moving.

When building your own beginner examples, keep them small enough to inspect manually. You should be able to trace one episode by hand and explain why the reward changed. Good starter setups include:

A common mistake is adding too many features too soon, such as multiple reward types, random teleports, moving enemies, and large maps all at once. That makes it hard to tell what the agent is learning. Start with one clean behavior objective. Then expand carefully.

The practical outcome is that these tiny worlds give you a safe place to understand states, actions, rewards, policies, and exploration. If you can explain why the agent chooses certain moves in a coin grid or a small maze, you are ready to follow the step-by-step logic of Q-learning in the next stage of the course.

1. What is the basic decision loop described in this chapter?

2. Why does reward design matter so much in reinforcement learning?

3. What does the chapter suggest about judging an action?

4. In this chapter, how should a beginner think about a policy?

5. How does this chapter introduce the idea behind Q-learning?

In the previous parts of this course, you saw the basic shape of reinforcement learning: an agent observes a situation, takes an action, receives a reward, and tries again. That loop is simple to describe, but the real question is this: how does the agent decide what is worth doing? This chapter answers that question by building intuition for value, Q-values, and the Q-table. These ideas are the working memory of many beginner-friendly reinforcement learning systems.

Think of value as a practical estimate of future usefulness. A reward is immediate: maybe the robot reaches the target, maybe the game character hits a wall, maybe a move earns a point. Value is broader. It asks whether a state or action is likely to lead to good outcomes over the steps that follow, not just on the very next one. In everyday life, this is like choosing a road not because it is pleasant for one second, but because it tends to get you home faster overall. Reinforcement learning agents need this longer view, because many good decisions do not pay off immediately.

This chapter focuses on first principles rather than advanced math. We will treat learning as a repeated correction process. The agent starts with rough guesses. After trying actions and seeing results, it adjusts those guesses. Over time, some actions begin to look promising, others start to look costly, and a simple table can hold that growing experience. That table is called a Q-table. It is one of the clearest ways to see how trial-and-error learning works in a small environment such as a grid world, a maze, or a simple movement task.

As you read, keep an engineering mindset. Reinforcement learning is not only about theory. It also involves judgment: how fast should the agent update its beliefs, how much should it care about future rewards, and when should it keep exploring instead of repeating its current best action? Even with a tiny example, these design choices matter. A learner that updates too aggressively can become unstable. A learner that ignores the future can miss better routes. A learner that never explores may get stuck doing something merely adequate.

By the end of this chapter, you should be able to explain what value and Q-values mean in plain language, understand how a Q-table stores experience, and follow the Q-learning update idea step by step. Most importantly, you should be able to read a tiny example and understand why the numbers in the table change the way they do.

The six sections that follow build this picture carefully. We begin with the meaning of value, then separate state value from action value, then show the table structure that stores learned estimates. After that, we introduce two core controls of the learning process, walk through the update idea, and finish with a tiny Q-learning example that makes the whole workflow concrete.

Practice note for this chapter's three goals (see how an agent estimates what is worth doing, understand value and Q-values from first principles, and learn how a Q-table stores experience): document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

In reinforcement learning, value is an estimate of how good something is for the future. That “something” might be a state, such as standing in the middle of a grid, or it might be an action taken from that state, such as moving right. The key point is that value is not the same as immediate reward. A reward is what happens now. Value is a prediction about what tends to happen next if the agent continues from here in a sensible way.

This distinction matters because many tasks delay success. Imagine a robot in a hallway. Taking one step toward the charging station may not produce any reward at that exact moment, but it is still a useful move because it leads closer to a later positive outcome. In the same way, moving away from a trap may not instantly look impressive, but it increases the chance of future success. Value captures that delayed usefulness. Without it, an agent would chase only immediate gains and often fail in tasks that require planning over several steps.

A helpful everyday analogy is choosing between two paths in a building. One corridor may look shorter at first glance, but it leads to a locked door. Another path may take one extra turn, yet it reliably reaches the exit. If you had enough experience, you would begin to assign higher value to the second path. Reinforcement learning agents do something similar. They estimate which situations are promising based on the outcomes that usually follow them.

From an engineering point of view, value is useful because it compresses experience into a practical signal for decision-making. Instead of remembering every episode in detail, the agent builds a summary: “being here is usually good,” or “taking this move here usually leads to trouble.” That summary supports faster decisions later. In small environments, these value estimates can be stored directly. In larger environments, the same idea remains, even if the storage method changes.

A common beginner mistake is to confuse a high immediate reward with a good long-term choice. Another is to assume value must be exact. It does not. Early in training, value is often just a rough guess. What matters is that the estimates become more useful over time. Reinforcement learning works because the agent keeps correcting itself after each new experience, gradually improving its sense of what is worth doing.

There are two closely related ideas that beginners should separate clearly: state value and action value. State value asks, “How good is it to be in this state?” Action value asks, “How good is it to take this action in this state?” Both are useful, but action value is especially practical because an agent must eventually choose an action, not just admire a state.

Suppose a character in a grid game stands one square away from a goal but also near a hazard. The state might be fairly good overall because the goal is close. But the possible actions from that state are not equally good. Moving toward the goal may be excellent, standing still may waste time, and moving into the hazard may be terrible. This is why action value, often called a Q-value, is so powerful. It gives a separate estimate for each state-action pair.

State value is still conceptually important because it helps build intuition. If a state has high value, that usually means there exists at least one promising way forward from there. Action value goes one step further and says which move is likely to produce that good future. In a practical system, this difference affects how decisions are made. If you store only state values, you still need some extra method to turn them into actions. If you store Q-values, you can often choose the action with the highest number directly.
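The link between the two ideas can be shown with a few lines of Python. This is a small sketch with made-up numbers: the Q-values below are hypothetical, and the state value is computed under the assumption that the agent always takes its best-looking action (a greedy policy).

```python
# Hypothetical Q-values for one state: one estimate per available action.
q_values = {"up": 1.5, "down": -2.0, "left": 0.0, "right": 4.0}


def greedy_action(q_row):
    """Return the action with the highest estimated value."""
    return max(q_row, key=q_row.get)


def state_value(q_row):
    """Value of the state, assuming the agent acts greedily from here."""
    return max(q_row.values())


print(greedy_action(q_values))  # the move the table currently recommends
print(state_value(q_values))    # how good the state looks if that move is taken
```

With Q-values stored, both the decision and the state's value fall out of the same row. With only a single state value stored, the agent would still need some other way to decide which move earns that value.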

For a beginner-friendly workflow, Q-values are often easier to use because they connect learning and acting in one structure. Each number answers a very concrete question: “If I am here and I do this, how good does that seem?” This makes debugging easier as well. When behavior looks wrong, you can inspect the row for one state and compare the estimated values of all available actions.

A common mistake is to assume Q-values represent certainty. They do not. They are estimates based on experience so far. Another mistake is to forget that the same action can have very different value in different states. Moving right might be useful in one place and disastrous in another. Reinforcement learning depends on this context. Good actions are not universally good; they are good relative to the current situation.

A Q-table is a simple way to store learned action values. You can think of it as a memory sheet with one row for each state and one column for each action. The entry at row state S and column action A is the current Q-value for taking action A in state S. If the environment is small and has a limited number of states and actions, this table is one of the clearest teaching tools in reinforcement learning.

For example, in a tiny grid world, states might be cells on the grid, and actions might be up, down, left, and right. At the beginning, the table often starts with zeros or small random numbers. That means the agent has no useful experience yet. As it moves around and receives rewards, the entries begin to change. Some numbers rise because those actions tend to lead toward the goal. Other numbers fall because they tend to hit walls, waste time, or enter bad states.
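In code, such a table can be as simple as a dictionary of dictionaries. The sketch below assumes a 4 by 4 grid and zero initial values; the names `q_table`, `ROWS`, and `COLS` are chosen for illustration only.

```python
ACTIONS = ["up", "down", "left", "right"]
ROWS, COLS = 4, 4  # assumed grid size for this example

# One row per state (grid cell), one column per action, all starting at zero.
q_table = {
    (row, col): {action: 0.0 for action in ACTIONS}
    for row in range(ROWS)
    for col in range(COLS)
}

# After some hypothetical experience, a single entry changes.
q_table[(0, 0)]["right"] = 0.5

# Inspecting one row shows what the agent currently believes about that cell.
print(q_table[(0, 0)])
```

Because the whole table is an ordinary data structure, printing a row is enough to see why the agent prefers one move over another in that cell.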

The engineering advantage of a Q-table is transparency. You can inspect it directly. If a policy seems odd, the table often explains why. Maybe the agent keeps moving left because the left-action value in a certain state became too high due to limited exploration. Maybe several actions still have the same value because the agent has not visited that area enough. This visibility makes the Q-table ideal for learning the logic of reinforcement learning before moving to more complex function approximators.

It is also useful to view the table as compressed experience rather than a logbook. The agent does not need to store every journey separately. Instead, it keeps updating summary estimates. That is efficient and practical for small tasks. However, this same simplicity creates a limitation: if there are too many states, the table becomes large and sparse. In real systems with huge state spaces, plain tables no longer scale well. But for beginner tasks, they are exactly the right tool.

One common mistake is indexing the table incorrectly, especially when states are represented as grid coordinates or encoded numbers. Another is forgetting to handle invalid actions consistently, such as walking through a wall. In practical implementations, define state labels clearly, define action ordering clearly, and document how blocked moves are treated. Good reinforcement learning engineering begins with clean representations, not only with clever update rules.
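A small pair of helper functions can remove most indexing mistakes. The sketch below assumes a 4 by 4 grid stored row by row and a fixed action order; the helper names `state_index` and `state_coords` are hypothetical, invented for this example.

```python
N_ROWS, N_COLS = 4, 4                      # assumed grid size
ACTIONS = ("up", "down", "left", "right")  # column order, decided once


def state_index(row, col):
    """Encode a (row, col) cell as a single table row number."""
    return row * N_COLS + col


def state_coords(index):
    """Decode a table row number back into (row, col)."""
    return divmod(index, N_COLS)


# The table as a list of rows: q_table[state_number][action_column].
q_table = [[0.0] * len(ACTIONS) for _ in range(N_ROWS * N_COLS)]

# Read the value of moving left from the cell at row 2, column 3.
value = q_table[state_index(2, 3)][ACTIONS.index("left")]
print(state_index(2, 3), state_coords(11), value)
```

Writing the encoding in one place, and checking that decoding reverses it, is a cheap way to make sure every part of the program agrees on what "state 11" means.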

Two settings strongly affect how Q-learning behaves: the learning rate and the discount factor. These names can sound technical, but the ideas are simple. The learning rate controls how much the agent changes its current estimate after new experience. The discount factor controls how much the agent cares about future rewards compared with immediate ones.

Start with the learning rate. Imagine the agent currently believes an action is worth 5, then a new experience suggests it might really be closer to 7. Should the agent jump all the way to 7 immediately, or should it move only part of the way? The learning rate answers that. A high learning rate means “change your mind quickly.” A low learning rate means “update more cautiously.” In noisy environments, cautious updates can be more stable. In simple environments, faster updates can help learning progress more quickly.
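The "5 versus 7" example can be worked out directly. In this sketch, the function name `move_toward` and the three learning rates are illustrative choices, not recommended settings.

```python
def move_toward(old_estimate, target, learning_rate):
    """Shift the old estimate part of the way toward the target."""
    return old_estimate + learning_rate * (target - old_estimate)


# The agent believes 5, new experience suggests 7.
for learning_rate in (0.1, 0.5, 1.0):
    print(learning_rate, move_toward(5.0, 7.0, learning_rate))
```

A learning rate of 1.0 jumps all the way to 7, a rate of 0.5 stops halfway at 6, and a rate of 0.1 moves only a small step. The same line of arithmetic sits at the center of the Q-learning update.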

Now consider the discount factor. This setting reflects patience. If the agent cares only about immediate reward, it behaves myopically. It may ignore actions that look neutral now but lead to success later. A higher discount factor encourages longer-term thinking. In a maze, for example, the goal might be several steps away, so the agent should give some credit to moves that bring it closer even before the final reward appears. If the discount factor is too low, the agent may fail to value those useful setup moves.
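To see the effect of patience in numbers, the sketch below adds up the rewards of one hypothetical maze episode, shrinking each later reward by the discount factor once per step of delay. The reward list and the discount values are made up for illustration.

```python
def discounted_return(rewards, discount):
    """Sum rewards, weighting a reward k steps away by discount ** k."""
    total = 0.0
    for step, reward in enumerate(rewards):
        total += (discount ** step) * reward
    return total


# Hypothetical episode: three unrewarded moves, then +10 at the goal.
rewards = [0, 0, 0, 10]

for discount in (0.0, 0.5, 0.9, 1.0):
    print(discount, round(discounted_return(rewards, discount), 3))
```

With a discount factor of 0 the distant goal is worth nothing from the starting square, so the setup moves look pointless. With 0.9 the same goal is still worth about 7.29 three steps earlier, which is enough to make those moves attractive.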

In practice, these two settings interact with the task. If rewards are delayed, a higher discount factor is usually helpful. If values change too wildly from one episode to another, the learning rate may be too high. If learning feels painfully slow, the learning rate may be too low. There is no magical universal choice; good reinforcement learning work often involves trying reasonable values, observing behavior, and adjusting carefully.

Beginners often make two mistakes here. First, they treat these settings as abstract formulas instead of behavior controls. It is better to ask: does the agent adapt too fast or too slowly, and does it think too short-term or appropriately far ahead? Second, they change many settings at once and then cannot tell what caused improvement or failure. A practical habit is to tune one thing at a time and watch how the policy changes.

The core learning event in Q-learning happens after each step. The agent is in some state, takes an action, receives a reward, lands in a new state, and then updates one entry in the Q-table. The goal of the update is simple: make the old estimate a little closer to what the new experience suggests. This is not magic. It is repeated correction.

Here is the workflow in plain language. First, read the current Q-value for the state-action pair just used. Second, look at the reward that arrived immediately after the action. Third, inspect the next state and ask which action in that next state currently looks best according to the table. Fourth, combine the immediate reward with the expected future usefulness of that next state. This combination becomes the new target. Finally, move the old Q-value partway toward that target using the learning rate.

The important idea is that the agent learns not only from the reward it just got, but also from what it currently believes about the future. If the next state contains a strong action according to the table, that future promise raises the target. If the next state looks poor, the target remains low. In this way, useful information gradually spreads backward through the environment. An action several steps away from the goal can become more valuable because it leads into states that already look promising.

This step-by-step update process is the heart of trial-and-error learning. At first, the table is nearly empty of meaning. Then the goal is discovered once, creating a strong signal near the end of a path. After many updates, earlier states begin to inherit some of that usefulness. Over time, the agent acts randomly less often because the table starts to reveal a sensible route.

A common mistake is updating the wrong state or action, especially in code where the current state and next state can be mixed up. Another is forgetting that the future estimate should come from the next state, not from the current one. Also be careful with terminal states. If the episode ends, there may be no future action to consider, so the update should rely only on the final reward. These details seem small, but they strongly affect whether learning succeeds.
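The update described in this section can be sketched as one short function. This is a minimal illustration rather than a finished implementation: the table is assumed to be a dictionary of dictionaries, the function name `q_update` and the `done` flag are choices made for this example, and the default learning rate and discount factor are arbitrary.

```python
def q_update(q_table, state, action, reward, next_state, done,
             learning_rate=0.5, discount=0.9):
    """Apply one Q-learning update and return the new estimate."""
    old_value = q_table[state][action]          # estimate for the pair just used
    if done:
        # Terminal step: there is no future action to consider.
        target = reward
    else:
        # Best-looking action in the NEXT state, not the current one.
        best_next = max(q_table[next_state].values())
        target = reward + discount * best_next
    # Move the old estimate partway toward the target.
    q_table[state][action] = old_value + learning_rate * (target - old_value)
    return q_table[state][action]


# Hypothetical two-state table used only to exercise the function.
q = {"A": {"left": 0.0, "right": 0.0}, "B": {"left": 0.0, "right": 8.0}}
print(q_update(q, "A", "right", reward=-1, next_state="B", done=False))   # about 3.1
print(q_update(q, "B", "right", reward=10, next_state=None, done=True))   # 9.0
```

Keeping `state` and `next_state` as separate, clearly named arguments makes the most common bug, reading the future estimate from the wrong state, much harder to write by accident.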

Let us make the ideas concrete with a tiny movement task. Imagine a line of three positions: Left, Middle, and Goal. The agent starts in Middle. It has two actions: move left or move right. Reaching Goal gives a reward of +10 and ends the episode. Moving left gives no reward and leads to Left. From Left, moving right returns to Middle with no reward. This tiny world is enough to show how Q-learning fills a table with meaning.

At the start, suppose all Q-values are zero. In Middle, the agent tries move right and reaches Goal, receiving +10. The current value for Q(Middle, Right) was 0, but now the target is high because the immediate reward is +10 and the episode is over. After the update, Q(Middle, Right) becomes positive. It may not jump all the way to 10 if the learning rate is less than 1, but it moves upward. Already, the table has learned something useful: from Middle, moving right looks promising.

Now imagine another episode where the agent starts in Middle and, due to exploration, tries move left. It goes to Left and gets 0 reward. At first this does not seem informative. But from Left, if later experience shows that moving right tends to lead back to Middle, and from Middle moving right leads to Goal, then Q(Left, Right) can also become positive over time. Why? Because the future value of the next state, Middle, is no longer zero. The update begins to pass useful credit backward.
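The two episodes above can be traced with actual numbers. The sketch below assumes a learning rate of 0.5 and a discount factor of 0.9, which the example in the text does not specify. It also gives Left a "left" action that is never used, simply so every state has the same two columns.

```python
ALPHA, GAMMA = 0.5, 0.9  # assumed learning rate and discount factor

q = {
    "Middle": {"left": 0.0, "right": 0.0},
    "Left": {"left": 0.0, "right": 0.0},
}


def update(state, action, reward, next_state):
    """One Q-learning update on the tiny three-position world."""
    # Goal ends the episode, so it contributes no future value.
    future = 0.0 if next_state == "Goal" else max(q[next_state].values())
    target = reward + GAMMA * future
    q[state][action] += ALPHA * (target - q[state][action])


# Episode 1: Middle -> right -> Goal (+10).
update("Middle", "right", 10, "Goal")    # Q(Middle, right): 0 -> 5.0

# Episode 2: exploration sends the agent left first.
update("Middle", "left", 0, "Left")      # Left still looks worthless: stays 0.0
update("Left", "right", 0, "Middle")     # Middle is now worth 5.0: 0 -> 2.25
update("Middle", "right", 10, "Goal")    # 5.0 -> 7.5

print(q)
```

Notice that Q(Left, right) became positive even though that move never earned a reward itself. All of its value was passed back from Middle, which is the credit chain the paragraph describes.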

This example demonstrates a practical reading skill: when you inspect a Q-table, do not look only for immediate rewards. Look for chains of usefulness. A state-action pair can earn a decent Q-value simply because it leads into another state with strong options. That is how reinforcement learning handles delayed success without explicitly programming a full plan.

From an engineering perspective, this tiny example also shows why exploration matters. If the agent never tries move left, it may never learn the values around Left at all. If it explores sometimes, the table becomes more complete. The practical outcome is a better policy: the agent can act well not only in the most common state, but also after mistakes or unusual starting positions. Reading a small example carefully is one of the best ways to build intuition before writing code for larger grid worlds or robot movement tasks.

1. What is the main difference between a reward and value in this chapter?

2. What does a Q-value represent?

3. Why is a Q-table useful in beginner reinforcement learning systems?

4. How does learning happen in the chapter's first-principles view?

5. Which design choice could cause an agent to miss better routes?

This chapter is where reinforcement learning starts to feel real. Up to this point, ideas like state, action, reward, and policy may have sounded simple in theory, but now you will see how they work together inside small game worlds. A beginner-friendly game is the perfect training ground because the environment is clear, the actions are limited, and the results are easy to observe. Instead of trying to solve a huge robot problem or a complex video game, you can build intuition by watching an agent learn how to move through a tiny world one step at a time.

The central tool in this chapter is Q-learning. You do not need advanced math to use it well at this stage. Think of Q-learning as keeping a table of experience. For each situation the agent can be in, and for each action it can take, the agent keeps a score. That score estimates how useful that action is in that situation. At first, those scores are mostly guesses. Then the agent plays again and again, wins sometimes, fails often, and slowly updates the table. Over repeated attempts, good actions gain higher values and bad actions lose appeal.

We will focus on simple game worlds such as a gridworld. In a gridworld, an agent moves on a board made of squares. One square may be the goal. Some squares may be dangerous. Some moves may give a small penalty to encourage faster solutions. This setup is powerful because it exposes the core learning loop very clearly: observe the current state, choose an action, receive a reward, move to a new state, and update the Q-value. That is the practical heart of trial-and-error learning.

As you build your first projects, it is important to think like both a learner and an engineer. A learner asks, “What is the agent discovering?” An engineer asks, “Is the environment designed clearly enough for learning to happen?” Small design choices matter. If rewards are confusing, the agent may learn strange habits. If exploration is too low, it may never find the goal. If you only look at one successful episode, you may miss the fact that learning is unstable. Good reinforcement learning practice means observing patterns over many episodes and checking whether the behavior matches the goal you intended.

Another important theme in this chapter is measurement. Beginners often say, “My agent seems better,” but in reinforcement learning you should aim for something more concrete. Measure wins, total reward, and number of steps to reach the goal. These simple metrics show whether learning is actually improving. An agent that wins more often is usually improving. An agent that gets the same reward but takes fewer steps is also improving. An agent that collects reward in a strange way while ignoring the real objective may be exploiting a flaw in your setup rather than learning what you wanted.
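These three metrics take only a few lines to compute once each episode is logged. The sketch below uses a made-up log of four episodes; the tuple layout `(reached_goal, total_reward, steps)` and the function name `summarize` are assumptions for this example.

```python
# Hypothetical per-episode log: (reached_goal, total_reward, steps).
episodes = [
    (False, -20, 20),
    (False, -14, 4),
    (True, 3, 8),
    (True, 5, 6),
]


def summarize(log):
    """Return win rate, average total reward, and average steps per episode."""
    count = len(log)
    return {
        "win_rate": sum(1 for won, _, _ in log if won) / count,
        "avg_reward": sum(reward for _, reward, _ in log) / count,
        "avg_steps": sum(steps for _, _, steps in log) / count,
    }


print(summarize(episodes))        # all episodes together
print(summarize(episodes[:2]))    # early episodes
print(summarize(episodes[2:]))    # later episodes
```

Comparing an early slice of the log with a later slice is a simple way to turn "my agent seems better" into a statement you can check.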

You will also see that weird behavior is normal. Reinforcement learning agents often get stuck against walls, loop around safe cells, chase immediate rewards, or avoid the goal if the penalty design is poor. These are not signs that the whole idea is broken. They are signs that the environment, reward design, exploration rate, or learning settings need adjustment. A large part of practical reinforcement learning is troubleshooting these beginner mistakes with patience and observation.

By the end of this chapter, you should be able to create a small grid-based learning project, train it over many episodes, inspect whether it is improving, and make sensible changes when it is not. More importantly, you will build intuition. You will start to see a policy not as an abstract term, but as a map from situations to useful actions. You will see value as an estimate built from experience. And you will understand exploration versus exploitation not just as vocabulary, but as a real tradeoff that shapes whether your first game agent succeeds or stalls.

Keep the projects small. A tiny world is not a toy in a negative sense. It is a laboratory. When the world is simple, you can clearly see what the agent is learning, why it succeeds, and where it fails. That clarity is exactly what beginners need before moving to larger environments.

A gridworld is one of the best first reinforcement learning projects because it strips the problem down to essentials. Imagine a small board such as 4 by 4 or 5 by 5. The agent starts in one square and can move up, down, left, or right. One square is the goal. A few squares may be blocked or dangerous. That is enough to build a complete learning problem. The state is the agent’s current position. The actions are the movement choices. The reward tells the agent whether a move was helpful. The goal is to reach the target efficiently.

When designing your first gridworld, simplicity is a strength. Use a board that is small enough to inspect by eye. If the world is too large at the start, debugging becomes harder because you cannot easily track whether the agent is making sensible decisions. A small board also means a small Q-table, which helps you understand how Q-learning stores experience. Each cell-action pair has its own value estimate. Over time, the table becomes a rough map of which moves are promising from each location.

Engineering judgment matters even in tiny projects. Decide whether the agent should bump into walls and stay in place, or whether illegal moves should be disallowed entirely. Decide whether obstacles are fixed or random. Fixed layouts are better for first experiments because they make behavior easier to compare across episodes. Also decide what counts as the end of an episode. Usually the episode ends when the agent reaches the goal, falls into a trap, or exceeds a maximum number of steps.
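Those decisions can be written down as a single movement function. The sketch below makes one set of choices for illustration: blocked moves leave the agent in place, the layout is fixed, and an episode ends at the goal, at the trap, or after 50 steps. The positions of the goal, trap, and wall are invented for the example.

```python
ROWS, COLS = 4, 4
GOAL, TRAP = (3, 3), (1, 2)   # assumed positions
WALLS = {(1, 1)}              # assumed blocked cell
MAX_STEPS = 50                # assumed step limit per episode
MOVES = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}


def step(position, action, steps_taken):
    """Return (next_position, done) after one move.

    steps_taken is the number of moves made before this one.
    """
    d_row, d_col = MOVES[action]
    row, col = position[0] + d_row, position[1] + d_col
    off_board = not (0 <= row < ROWS and 0 <= col < COLS)
    hits_wall = (row, col) in WALLS
    # Design choice: a blocked move leaves the agent where it was.
    next_position = position if (off_board or hits_wall) else (row, col)
    done = next_position in (GOAL, TRAP) or steps_taken + 1 >= MAX_STEPS
    return next_position, done


print(step((0, 0), "up", 0))      # bumps the edge and stays put
print(step((3, 2), "right", 0))   # reaches the goal, episode ends
```

Rewards are left out on purpose here, so that the movement rules can be checked by hand before any learning is added.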

A practical workflow is to sketch the world first, then write down the state and action definitions before touching code. This prevents confusion later. If you cannot describe the environment clearly in plain language, the agent will not learn clearly either. For a first project, avoid fancy features like moving enemies or hidden information. Build one clean game loop that the agent can repeat many times.

The practical outcome of this section is a game world that is simple enough to train quickly but rich enough to show real learning. That balance makes gridworld the perfect first laboratory for Q-learning.

Once the world exists, the next important job is reward design. Beginners often underestimate how much the start location, goal location, and penalty structure affect behavior. A reinforcement learning agent does not understand your intention. It only responds to the signals you provide. If the reward system is unclear, the behavior will also be unclear.

Start points should usually be consistent at first. A fixed starting square helps you see whether the agent is learning a repeatable path. Later, you can randomize the start to make the policy more flexible. The goal should be easy to identify in the environment and should provide a strong positive reward. For example, reaching the goal might give +10. Dangerous cells might give -10 and end the episode. A small step penalty such as -1 per move often helps because it encourages shorter paths instead of endless wandering.
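The reward scheme just described fits in a very small function. In this sketch the goal and trap positions are invented, and one detail is an assumption the text leaves open: the step that enters the goal or a trap earns only the +10 or -10, without an extra -1.

```python
GOAL_REWARD, TRAP_REWARD, STEP_REWARD = 10, -10, -1


def reward_for(cell, goal=(3, 3), traps=frozenset({(1, 2)})):
    """Reward for arriving in a cell (positions are assumed for the example)."""
    if cell == goal:
        return GOAL_REWARD
    if cell in traps:
        return TRAP_REWARD
    return STEP_REWARD


# A six-move safe path from (0, 0) to the goal, listed as the cells entered.
path = [(0, 1), (0, 2), (0, 3), (1, 3), (2, 3), (3, 3)]
print(sum(reward_for(cell) for cell in path))  # five step penalties plus the goal
```

Adding up the rewards along a path you expect to be best, and along a path you expect to be bad, is a quick check that the numbers favor the behavior you intended.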

The key idea is balance. If the goal reward is too small, the agent may not care enough about reaching it. If the step penalty is too harsh, the agent may learn to avoid moving at all, where your environment allows that, or to end the episode as quickly as possible, even by entering a trap. If trap penalties are weak, the agent may repeatedly risk them. In small game worlds, reward design is often the difference between clear learning and strange shortcuts.

A useful practical habit is to predict behavior before training. Ask yourself what the best strategy should be under your current reward design. If the shortest safe path to the goal should clearly win, your rewards are probably aligned. If there are odd reward loopholes, the agent may find them. For example, if sitting in a safe square gives zero reward and moving gives negative reward, the agent may prefer doing nothing if that action exists.
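One way to make this prediction concrete is to add up the rewards of a few hand-written strategies before training anything. The sketch below is illustrative only: the helper names are hypothetical, discounting is ignored for simplicity, and it assumes the final move into the goal earns the goal reward instead of the step penalty.

```python
def total_return(rewards):
    """Undiscounted sum of the rewards collected in one episode."""
    return sum(rewards)


def path_to_goal(num_moves, step=-1.0, goal=10.0):
    """Rewards for a path that reaches the goal on its last move (assumed convention)."""
    return [step] * (num_moves - 1) + [goal]


def stay_put(num_steps, stay=0.0):
    """Rewards for an agent that uses a free 'do nothing' action every step."""
    return [stay] * num_steps


short_path = total_return(path_to_goal(6))   # five step penalties, then the goal
long_path = total_return(path_to_goal(12))   # eleven step penalties, then the goal
idle = total_return(stay_put(50))            # never moves

print(short_path, long_path, idle)  # 5.0 -1.0 0.0
```

With a goal six moves away, reaching it beats idling, so the rewards are aligned. With a goal twelve moves away, idling scores higher than the best path, which is exactly the kind of loophole worth catching before training.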

Another good beginner practice is to avoid too many reward rules. Start with just three signals: positive for goal, negative for danger, and small negative per step. This makes it much easier to reason about the learning process and troubleshoot later.
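The three signals fit in one small function. This is a sketch under stated assumptions: the cell symbols `G` and `T`, the constant names, and the function name `outcome` are hypothetical, and the numeric values simply reuse the example figures of +10, -10, and -1.

```python
GOAL_REWARD = 10.0   # positive signal for reaching the goal
TRAP_REWARD = -10.0  # negative signal for entering a dangerous cell
STEP_REWARD = -1.0   # small cost for every ordinary move


def outcome(cell):
    """Return (reward, episode_over) for the cell the agent has just entered."""
    if cell == "G":
        return GOAL_REWARD, True
    if cell == "T":
        return TRAP_REWARD, True
    return STEP_REWARD, False
```

Keeping all reward rules in one place like this makes it easy to change a single value and see its effect on behavior.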

Thoughtful reward design gives the agent a clean learning objective. In practical projects, this clarity leads to faster training, easier debugging, and more meaningful behavior.

Reinforcement learning rarely looks impressive in a single run. The real story appears across many episodes. An episode is one full attempt, from start to finish. The agent starts, makes decisions, receives rewards, updates the Q-table, and eventually reaches a terminal condition. Then it starts again. This repetition is how learning happens.

In Q-learning, each step updates the estimated usefulness of a state-action pair. Early in training, the agent knows very little, so exploration is essential. Exploration means trying actions that are not currently believed to be best. Without exploration, the agent may settle too quickly on mediocre behavior. A common beginner strategy is epsilon-greedy action selection. Most of the time the agent chooses the best-known action so far, but some of the time it picks a random action. This helps it discover better paths that it would otherwise miss.
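The two ideas in this paragraph, epsilon-greedy selection and the per-step update, can be sketched as follows. The update is the standard tabular Q-learning rule; the learning rate `alpha=0.1`, the discount `gamma=0.9`, the action names, and the helper names are assumptions for illustration rather than values prescribed by the course.

```python
import random
from collections import defaultdict

ACTIONS = ["up", "down", "left", "right"]


def make_q_table():
    """Q-table that starts every unseen state with a value of 0.0 for each action."""
    return defaultdict(lambda: {a: 0.0 for a in ACTIONS})


def choose_action(q_table, state, epsilon, rng):
    """Epsilon-greedy: random action with probability epsilon, otherwise best-known."""
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    values = q_table[state]
    best = max(values.values())
    # Break ties randomly so the agent does not always favor the first action
    return rng.choice([a for a in ACTIONS if values[a] == best])


def q_update(q_table, state, action, reward, next_state, done, alpha=0.1, gamma=0.9):
    """Move Q(state, action) a fraction alpha toward reward + gamma * best future value."""
    future = 0.0 if done else max(q_table[next_state].values())
    target = reward + gamma * future
    q_table[state][action] += alpha * (target - q_table[state][action])


rng = random.Random(0)
q = make_q_table()
q_update(q, (0, 0), "right", 10.0, (0, 1), done=True)
print(q[(0, 0)]["right"])                    # 1.0, one tenth of the way to 10
print(choose_action(q, (0, 0), 0.0, rng))    # right
```

Note that the future value is set to zero when the episode has ended, which is one of the terminal-handling details that is easy to get wrong.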

As training continues, you often reduce exploration gradually. Early on, high exploration helps discovery. Later, lower exploration helps the agent use what it has learned more consistently. This is a practical example of exploration versus exploitation. Exploration gathers information. Exploitation uses current knowledge to earn reward. Good training usually needs both, in the right proportions over time.
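A simple way to reduce exploration gradually is to shrink epsilon by a constant factor each episode and stop at a floor. This is one common schedule among several, shown as a sketch; the function name and the values for `start`, `end`, and `decay` are assumptions.

```python
def decayed_epsilon(episode, start=1.0, end=0.05, decay=0.99):
    """Exponentially decay epsilon per episode, never dropping below `end`."""
    return max(end, start * decay ** episode)


for episode in (0, 100, 300, 1000):
    print(episode, round(decayed_epsilon(episode), 3))
```

The floor keeps a little exploration alive late in training, so the agent can still correct an early mistake.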

Do not expect steady improvement every episode. Some episodes will be worse than earlier ones because exploration causes mistakes. That is normal. What matters is the trend over dozens or hundreds of episodes. In a small gridworld, you might see the agent wander randomly at first, then occasionally reach the goal, then do so more often, and eventually follow a short path reliably.

A practical workflow is to train in batches and inspect behavior at checkpoints. For example, after every 100 episodes, test the current policy with little or no exploration. This shows what the agent has actually learned, separate from random training behavior. Keep a maximum step limit in each episode so the agent cannot wander forever.
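The checkpoint idea can be sketched on a deliberately tiny task. Everything here is an assumption made for illustration: a five-cell corridor with the goal at the right end, a constant training epsilon of 0.2, `alpha=0.5`, `gamma=0.9`, and the function names. The point is the structure: train with exploration, then pause every 100 episodes for one greedy run that does not update the table.

```python
import random

N_CELLS = 5          # corridor cells 0..4; the goal is the last cell (toy task)
ACTIONS = [-1, +1]   # move left or move right
MAX_STEPS = 50       # step limit so an episode cannot run forever


def step(state, action):
    """Move along the corridor; the ends act as walls that keep the agent in place."""
    next_state = min(max(state + action, 0), N_CELLS - 1)
    done = next_state == N_CELLS - 1
    reward = 10.0 if done else -1.0
    return next_state, reward, done


def pick(q, state, epsilon, rng):
    """Epsilon-greedy action choice with random tie-breaking."""
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    best = max(q[state].values())
    return rng.choice([a for a in ACTIONS if q[state][a] == best])


def run_episode(q, epsilon, rng, learn, alpha=0.5, gamma=0.9):
    """Run one episode; update the Q-table only when `learn` is True."""
    state, steps, done = 0, 0, False
    while not done and steps < MAX_STEPS:
        action = pick(q, state, epsilon, rng)
        next_state, reward, done = step(state, action)
        if learn:
            future = 0.0 if done else max(q[next_state].values())
            q[state][action] += alpha * (reward + gamma * future - q[state][action])
        state, steps = next_state, steps + 1
    return steps, done


def train_with_checkpoints(episodes=300, every=100, seed=0):
    """Train with exploration and record one greedy evaluation run per checkpoint."""
    rng = random.Random(seed)
    q = {s: {a: 0.0 for a in ACTIONS} for s in range(N_CELLS)}
    checkpoints = []
    for episode in range(1, episodes + 1):
        run_episode(q, epsilon=0.2, rng=rng, learn=True)
        if episode % every == 0:
            steps, reached = run_episode(q, epsilon=0.0, rng=rng, learn=False)
            checkpoints.append((episode, reached, steps))
    return checkpoints


checkpoints = train_with_checkpoints()
for episode, reached, steps in checkpoints:
    print(f"episode {episode}: reached goal={reached}, steps={steps}")
```

Because the evaluation run uses `epsilon=0.0` and `learn=False`, its result reflects only what the table currently encodes, not the randomness of training.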

Another engineering tip is reproducibility. Use a fixed random seed during early debugging so you can compare changes fairly. Once the setup works, test with different seeds to make sure the result is not a lucky accident.
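In Python, one way to get this reproducibility is to give the training code its own seeded random generator instead of relying on global randomness. The sketch below only demonstrates the principle; `sample_actions` is a hypothetical stand-in for whatever random choices your agent makes.

```python
import random


def sample_actions(seed, n=10):
    """Draw n random actions from a generator created with the given seed."""
    rng = random.Random(seed)
    return [rng.choice(["up", "down", "left", "right"]) for _ in range(n)]


first_run = sample_actions(seed=0)
second_run = sample_actions(seed=0)
print(first_run == second_run)  # True: same seed, same sequence of choices
```

Passing the generator into your functions, rather than calling the module-level `random` functions, also makes it easy to switch seeds later when checking that a result is not a lucky accident.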

The practical outcome of training over many episodes is not just a better agent. It is a clearer understanding of how trial-and-error learning accumulates knowledge gradually through repeated interaction with the environment.

Training without measurement is guesswork. A beginner can easily watch one good episode and assume the agent has learned, or watch one bad episode and assume training has failed. To avoid this, track simple metrics over time. In small game projects, the most useful ones are win rate, average total reward, and average number of steps per episode.

Win rate answers a basic question: how often does the agent reach the goal? Average reward gives a broader view because it includes both success and the cost of wandering or hitting penalties. Average steps help show efficiency. An agent that reaches the goal in 8 steps is usually better than one that reaches it in 25, assuming rewards are designed sensibly. Together, these measures reveal whether the policy is becoming both effective and efficient.
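These three measures are simple averages over a list of episode records. The sketch below assumes each episode is stored as a dictionary with the keys `won`, `reward`, and `steps`; that record format, the function name, and the sample numbers are made up for illustration.

```python
def summarize(episodes):
    """Compute win rate, average total reward, and average steps over episode records."""
    n = len(episodes)
    return {
        "win_rate": sum(1 for e in episodes if e["won"]) / n,
        "avg_reward": sum(e["reward"] for e in episodes) / n,
        "avg_steps": sum(e["steps"] for e in episodes) / n,
    }


# Hypothetical records: one win after 6 steps, one early fall into a trap
records = [
    {"won": True, "reward": 5.0, "steps": 6},
    {"won": False, "reward": -11.0, "steps": 2},
]
print(summarize(records))
```

The second record shows why average steps should be read alongside win rate: a short episode is only good news when it ended at the goal.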

Learning curves make these changes visible. If you plot reward or win rate against episode number, you often see a noisy but rising pattern. Noise is normal because exploration adds randomness. To make trends clearer, compute a moving average over recent episodes. This smooths out short-term variation and highlights long-term improvement.
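A moving average needs only a running sum. The following sketch uses a trailing window and, as an assumption about how to treat the start of the series, averages over however many values are available until the window fills. The function name and the sample rewards are illustrative.

```python
def moving_average(values, window):
    """Trailing moving average; early entries average over the values seen so far."""
    averages = []
    running = 0.0
    for i, value in enumerate(values):
        running += value
        if i >= window:
            running -= values[i - window]  # drop the value that left the window
        averages.append(running / min(i + 1, window))
    return averages


print(moving_average([1, 2, 3, 4], window=2))  # [1.0, 1.5, 2.5, 3.5]
```

A larger window gives a smoother curve but reacts more slowly to real changes, so it is worth trying a couple of window sizes.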

Practical engineering judgment matters here too. Use evaluation runs with low exploration when measuring learned performance. If you measure only training episodes, your metrics include random exploratory actions and may underestimate the quality of the current policy. Also compare multiple runs, not just one. Reinforcement learning can vary from seed to seed, especially in small projects with limited training.

Keep your metrics close to the task goal. If the task is to reach a target quickly and safely, then wins, rewards, and steps are meaningful. Avoid measuring too many things at once. A short, focused dashboard is better than a confusing pile of numbers.

The practical benefit of tracking progress is confidence. You stop relying on impressions and start using evidence. That habit becomes essential as environments become more complex later in the course.

Odd behavior is one of the most valuable learning opportunities in beginner reinforcement learning. If your agent loops between two cells, hugs a wall, ignores the goal, or repeatedly walks into danger, do not panic. These behaviors usually point to a specific issue in setup or training. The task is to diagnose the cause calmly.

One common reason is poor reward design. If the rewards accidentally favor a strange pattern, the agent will exploit it. For example, if staying alive without reaching the goal avoids penalties, the agent may wander safely forever. Another common cause is too little exploration. If the agent never tries the right path early on, it may never discover that the goal is valuable. On the other hand, too much exploration late in training can make the behavior look worse than the learned policy actually is.

State representation can also be a hidden problem. In a simple gridworld, the state is usually just the current position, which is enough. But if the environment has special conditions and the state does not include them, the agent may treat different situations as if they were the same. That leads to inconsistent learning. Update logic is another place to check. A small implementation error in the Q-learning update, terminal reward handling, or action selection can produce behavior that looks mysterious but is really just a bug.

Beginner troubleshooting should follow a short checklist. First, test the environment manually. Can a human reach the goal under the current rules? Second, print a few episode traces step by step. Third, inspect sample Q-values for important states. Fourth, reduce the problem size if needed. A tiny world often reveals the issue faster than a larger one.
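The second and third checklist items are easier with two small helpers. This is a sketch with hypothetical names and formats: it assumes a trace is a list of `(state, action, reward, next_state)` tuples and that the Q-values for one state are a dictionary from action name to value.

```python
def format_trace(trace):
    """Turn a list of (state, action, reward, next_state) tuples into readable lines."""
    lines = []
    for t, (state, action, reward, next_state) in enumerate(trace):
        lines.append(f"step {t}: {state} --{action}--> {next_state} (reward {reward:+.1f})")
    return lines


def rank_actions(q_values):
    """Return (action, value) pairs for one state, best first."""
    return sorted(q_values.items(), key=lambda item: item[1], reverse=True)


# Made-up example data for illustration
trace = [((0, 0), "right", -1.0, (0, 1)), ((0, 1), "down", -1.0, (0, 1))]
for line in format_trace(trace):
    print(line)
print(rank_actions({"up": 0.1, "right": 2.0, "left": -1.0}))
```

In the made-up trace, the second step leaves the agent in the same cell, which is the kind of detail (a wall bump, here) that is hard to notice without printing each step.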

The practical lesson is that strange behavior is often informative. It tells you where the design or implementation is misaligned with the goal. Learning to read these signals is part of becoming effective at reinforcement learning.

The best way to improve a beginner agent is through small, controlled changes. Do not rewrite everything after one disappointing result. Instead, adjust one factor at a time and observe the effect. This is how practical reinforcement learning work is done. A gridworld is ideal for this because training is fast and results are easy to inspect.

Start by making sure the baseline setup is correct: a clear goal reward, a modest step penalty, a sensible learning rate, a discount factor that values future success, and an exploration rate that begins high enough to discover the world. Once that baseline trains at least somewhat, improve it gradually. You might add epsilon decay so the agent explores more at the start and less later. You might tune the step penalty to encourage shorter routes. You might randomize the start state after the agent masters a fixed start.
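One way to keep changes small and controlled is to store the baseline settings in a single object and derive each experiment from it. The sketch below is an assumption-laden illustration: the field names and default values are placeholders, not recommended settings, and `changed_fields` is a hypothetical helper.

```python
from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class Config:
    """Baseline settings for one training run (placeholder values)."""
    goal_reward: float = 10.0
    step_penalty: float = -1.0
    alpha: float = 0.1          # learning rate
    gamma: float = 0.9          # discount factor
    epsilon_start: float = 1.0  # initial exploration rate
    epsilon_decay: float = 0.99


def changed_fields(base, variant):
    """Return {field: (old, new)} for every setting that differs between two configs."""
    return {
        f.name: (getattr(base, f.name), getattr(variant, f.name))
        for f in fields(base)
        if getattr(base, f.name) != getattr(variant, f.name)
    }


baseline = Config()
variant = replace(baseline, step_penalty=-0.5)  # change exactly one factor
print(changed_fields(baseline, variant))        # {'step_penalty': (-1.0, -0.5)}
```

Making the configuration frozen means an experiment cannot silently modify the baseline, so every difference between two runs is visible in one place.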

It is useful to keep notes for each experiment. Write down what changed, why you changed it, and what happened to wins, rewards, and steps. This simple habit turns random tinkering into systematic learning. Over time, you will notice patterns. For example, too little exploration may lead to fast but brittle learning, while moderate exploration may take longer but produce a stronger policy.

Another practical improvement is separating training from evaluation. Train with exploration, but evaluate the agent with mostly greedy actions. This shows whether the policy itself has improved. You can also visualize the best action in each cell after training. Seeing arrows across the grid is a simple and powerful way to inspect the learned policy.
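The arrow view can be produced with plain text. In this sketch, the Q-table is assumed to be a dictionary from `(row, col)` to a dictionary of action values, `special` is a hypothetical way to label cells such as the goal, and cells with no entry are shown as `?`. The example table is made up.

```python
ARROWS = {"up": "^", "down": "v", "left": "<", "right": ">"}


def policy_grid(q_table, rows, cols, special=None):
    """Return one text line per row, showing the best-known action in each cell."""
    special = special or {}
    lines = []
    for r in range(rows):
        cells = []
        for c in range(cols):
            if (r, c) in special:
                cells.append(special[(r, c)])
            elif (r, c) in q_table:
                values = q_table[(r, c)]
                cells.append(ARROWS[max(values, key=values.get)])
            else:
                cells.append("?")
        lines.append(" ".join(cells))
    return lines


# Made-up Q-values for a 2x2 world with the goal in the bottom-right cell
example_q = {
    (0, 0): {"up": 0.0, "down": 0.5, "left": 0.0, "right": 1.0},
    (0, 1): {"up": 0.0, "down": 2.0, "left": 0.0, "right": 0.0},
    (1, 0): {"up": 0.0, "down": 0.0, "left": 0.0, "right": 2.0},
}
for line in policy_grid(example_q, rows=2, cols=2, special={(1, 1): "G"}):
    print(line)
```

Arrows that point away from the goal, or at each other, are usually the quickest visual clue that rewards or updates need another look.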

Most importantly, think in iterations. A small game agent rarely becomes good all at once. It improves because you define a simple task, train over many episodes, measure performance, identify mistakes, and refine the setup. That loop mirrors the larger workflow used in real reinforcement learning projects.

By improving a small agent step by step, you develop intuition that will transfer to more advanced tasks. You learn not only how Q-learning works, but how to shape an environment, interpret results, and make thoughtful engineering decisions when the agent does not behave the way you hoped.

1. What does Q-learning represent in this chapter?

2. Why are simple game worlds like gridworld useful for beginners?

3. Which set of measurements does the chapter recommend for checking improvement?

4. If an agent keeps looping around safe cells or avoids the goal, what does the chapter suggest?

5. Why is it important to look at many episodes instead of one successful run?

In earlier chapters, reinforcement learning may have felt most natural in games: a character moves on a grid, chooses left or right, collects rewards, and improves over time. This chapter shifts that same way of thinking into robot tasks. The important idea is that a robot learning problem is still an agent learning from experience. The difference is that the agent now acts through motors, receives information from sensors, and lives in a physical world that is slower, messier, and less forgiving than a game board.

For beginners, this is a very useful transition point. Many people understand reinforcement learning in a simple maze but feel intimidated when the word robot appears. In practice, the basic structure remains the same. The robot observes a state, chooses an action, receives feedback, and tries to improve its future decisions. A mobile robot that learns to move toward a charging station is not conceptually far from a game character that learns to move toward a goal square. What changes is the engineering judgment needed to model movement, sensor readings, delay, safety, and uncertainty.

This chapter introduces robot learning ideas without heavy math. We will focus on how to describe movement, define simple goals, think about reward design, and avoid common beginner mistakes. You will also see why simulation is usually the first step before testing on real machines. Real robots can collide with walls, drain batteries, misread sensors, and behave differently from one run to the next. That is why practical reinforcement learning for robots begins with tiny experiments and careful limits.

A good beginner mindset is to design tasks that are small enough to understand completely. Instead of saying, “I want a robot to do everything,” start with one clear behavior: move forward without hitting anything, turn toward a target, or reach a marked spot on the floor. When the task is small, the state description is clearer, the action choices are manageable, and the rewards are easier to reason about. This helps you build intuition that transfers from toy examples to more realistic robot systems.

By the end of this chapter, you should be able to translate reinforcement learning ideas from simple games into beginner-friendly robot tasks, describe states and actions in a practical way, explain why safety matters, and sketch a small robot learning experiment that is realistic enough to teach you something valuable.

Practice note for Transfer RL thinking from games to robot tasks: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Model movement, sensors, and simple goals: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Understand safety and simulation for beginners: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Design tiny robot learning experiments: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.
