AI Robotics & Autonomous Systems — Beginner

Understand how robots sense, decide, learn, and move

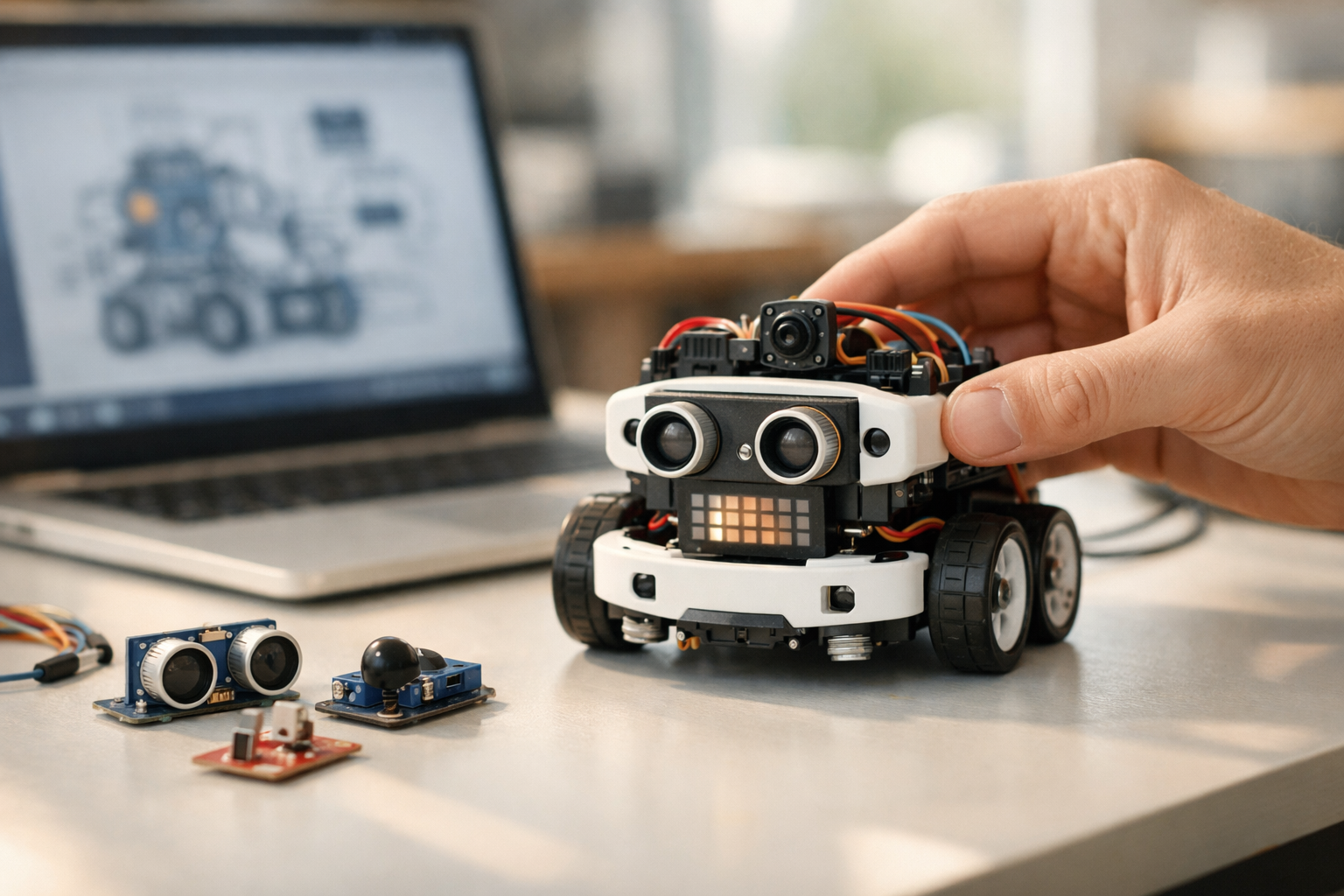

Robots can look mysterious to beginners. They move, react, avoid obstacles, and sometimes even improve over time. This course breaks that mystery down into simple ideas anyone can understand. You do not need any background in artificial intelligence, coding, engineering, or data science. If you have ever wondered how a robot knows where to go, how it notices the world around it, or how it gets better through experience, this course gives you a clear starting point.

This book-style course is designed as a short, structured journey through the core logic of robot learning. Instead of jumping into advanced math or software tools, it starts with the basics: what a robot is, what makes it different from ordinary software, and why sensing, deciding, and acting form the heart of all autonomous systems. Every chapter builds on the previous one so you can develop real understanding step by step.

You will begin by learning the simple loop used by most robots: sense the world, make a decision, and take action. From there, you will explore the kinds of sensors robots use, such as cameras, distance sensors, touch sensors, and motion sensors. You will also learn why sensor data is often messy and uncertain, and how robots still manage to use it.

Next, the course explains robot decision making in plain language. You will see how a robot can use rules, goals, and simple planning to choose what to do next. Then you will move into learning itself: how robots improve through examples, patterns, rewards, and feedback. Finally, you will connect those ideas to movement and control, so you can understand how decisions become real actions in the physical world.

Many robotics resources are written for engineers or programmers. This course is not. It is built for complete beginners who want a strong foundation without being overwhelmed. The language stays simple, the concepts are explained from the ground up, and each chapter acts like part of a short technical book. By the end, you will not just know isolated facts. You will understand the full story of how robots learn from sensors, make decisions, and act in the world.

The course also focuses on practical mental models. That means you will learn ideas you can use right away when reading robotics news, watching demos, evaluating products, or deciding whether to study robotics more deeply. You will gain confidence with terms and concepts that often seem difficult at first.

This course is ideal for curious learners, students, career switchers, and professionals who want a clear introduction to robot learning. It is especially useful if you want to understand autonomous systems without first learning to code. If you are exploring AI topics on the Edu AI platform, you can browse all courses after this one to continue building your knowledge.

If you are ready to start from zero and build a solid understanding of robotics in a friendly way, this course is a great entry point. You can register for free and begin learning how robots turn sensors into decisions and decisions into action.

Rather than treating robot learning as magic, this course shows the logic behind it. It explains both what robots can do and what they still struggle with. It also introduces safety, reliability, and human oversight so beginners can see the bigger picture of autonomous systems in the real world. The result is a balanced, approachable introduction that helps you think clearly about robotics today and where it may go next.

Robotics Learning Specialist

Sofia Chen designs beginner-friendly robotics education for learners entering AI and automation for the first time. She has worked on robot perception, navigation, and human-centered training materials that turn complex systems into clear, practical lessons.

When people hear the word robot, they often imagine a humanoid machine that talks, walks, and makes intelligent decisions. In engineering, the idea is both simpler and more useful. A robot is a physical system that can sense its surroundings, make some form of decision, and act on the world through motors or other mechanisms. That definition matters because it separates robots from ordinary software. A spreadsheet can process data. A robot must process data while dealing with friction, noise, delays, uncertainty, battery limits, and the stubborn reality of the physical world.

This chapter builds the first mental model you need for the rest of the course. We will look at the basic parts every robot needs, explain the sense-think-act loop, and distinguish plain automation from true learning. Along the way, we will separate data, information, decisions, and actions, because confusion between those layers causes many beginner mistakes. A distance sensor reading is data. Interpreting that reading as “there is an obstacle ahead” is information. Choosing to slow down is a decision. Sending a motor command is an action.
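The four layers can be kept separate in code as well as in your head. The sketch below runs a single cycle for a robot with one forward distance sensor. The 0.5 m obstacle threshold, the simulated reading, and the motor command format are all illustrative assumptions, not values from any real robot.

```python
OBSTACLE_THRESHOLD_M = 0.5  # hypothetical: closer than this counts as "obstacle ahead"

def read_distance_sensor() -> float:
    """Data: a raw measurement in meters (simulated here as a fixed value)."""
    return 0.35

def interpret(distance_m: float) -> str:
    """Information: what the raw number means for this task."""
    return "obstacle_ahead" if distance_m < OBSTACLE_THRESHOLD_M else "path_clear"

def decide(info: str) -> str:
    """Decision: choose a response based on the information."""
    return "slow_down" if info == "obstacle_ahead" else "keep_speed"

def act(decision: str) -> dict:
    """Action: turn the decision into a (hypothetical) motor command in m/s."""
    speed = 0.1 if decision == "slow_down" else 0.5
    return {"left_motor": speed, "right_motor": speed}

data = read_distance_sensor()
info = interpret(data)
decision = decide(info)
command = act(decision)
print(data, info, decision, command)
```

Because each layer is its own function, a failure can be traced to a specific step: a bad reading, a wrong threshold, a poor rule, or a motor command that was never carried out.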

A useful way to picture robot behavior is as a repeated cycle. First, the robot gathers measurements from sensors such as cameras, bump switches, encoders, microphones, GPS units, or laser scanners. Next, software interprets those measurements and selects a response. Finally, actuators carry out the response by moving wheels, joints, grippers, or other parts. After acting, the robot senses again. This loop repeats many times per second in some systems and more slowly in others. The speed of that loop is not just a technical detail; it often determines whether a robot appears smooth and competent or clumsy and unsafe.

Learning enters the story when the robot improves how it maps sensor input to action based on feedback. A rule-based robot might always turn right when it sees an obstacle. A learning robot might discover that turning direction should depend on context, past outcomes, or patterns in sensor data. Feedback is the bridge between behavior and improvement. If the robot can observe whether its choice helped or hurt, it has a basis for changing future choices.

In practice, engineers rarely begin with learning alone. They build a stable control loop, choose the right sensors, define safe actions, and only then add adaptation where it creates value. This chapter introduces that mindset. A learning robot is not magic. It is a machine with sensors, computation, action, and feedback, designed to behave better over time in the real world.

Practice note for See the basic parts every robot needs: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Understand the sense-think-act loop: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Separate automation from true learning: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Build a first mental model of robot behavior: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.


Not every machine is a robot. A washing machine follows programmed steps, but it has very limited awareness and very little freedom in how it responds. A robot, by contrast, has three essential ingredients: sensing, computation, and action. It can measure something about the world, process those measurements, and then change the world through movement or control. If one of those ingredients is missing, the system is usually better described as a device, tool, or software service rather than a robot.

The physical body of the robot matters. Wheels, arms, legs, propellers, grippers, and chassis parts determine what actions are possible. Sensors matter just as much. Common examples include cameras for visual scenes, ultrasonic or infrared sensors for distance, LiDAR for detailed range measurement, GPS for outdoor position, wheel encoders for movement tracking, force sensors for contact, and inertial measurement units for orientation and acceleration. Each sensor offers only a partial view. Cameras are rich but can be sensitive to lighting. Ultrasonic sensors are simple but may struggle with soft surfaces or awkward angles. Good engineering means knowing what a sensor is good at, what it misses, and how much trust to place in it.

New learners often think the “intelligence” of a robot is only in its software. In reality, intelligence starts with system design. A cheap sensor placed well may outperform an expensive sensor placed badly. A stable robot with simple controls often works better than a complicated robot with advanced algorithms. The first mental model to build is this: a robot is a complete loop between body, sensors, software, and environment. You cannot understand one part in isolation.

That is why robotics is interdisciplinary. Mechanical design affects what data sensors can collect. Electrical design affects reliability and timing. Software affects decisions. Control design affects how smoothly the robot moves. A machine becomes a robot when these parts work together to produce purposeful behavior in the world.

Every robot, from a line-following toy to a warehouse vehicle, performs three jobs again and again: sense, decide, and act. This is the core loop of robot behavior. Understanding it clearly helps you separate raw measurements from meaning and from motion.

First comes sensing. Sensors produce data, not understanding. A camera creates pixels. A sonar unit returns a distance estimate. A temperature probe outputs a number. These signals may be noisy, delayed, incomplete, or wrong. The robot must convert them into useful information. For example, 1,200 raw depth points from a range sensor may become the statement, “there is a free path on the left.” That interpretation step is where simple processing, filtering, and modeling begin.
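As a rough illustration of that interpretation step, the sketch below splits a scan of range readings into left, center, and right thirds and reports which thirds have no reading closer than a clearance distance. It assumes the readings are ordered from left to right and uses a hypothetical 0.8 m clearance; real range sensors differ in layout and units.

```python
def describe_free_space(ranges_m, min_clearance_m=0.8):
    """Turn raw range readings (ordered left to right) into a short statement
    about which thirds of the field of view are free of nearby obstacles."""
    if len(ranges_m) < 3:
        raise ValueError("need at least three readings to form three sectors")
    third = len(ranges_m) // 3
    sectors = {
        "left": ranges_m[:third],
        "center": ranges_m[third:2 * third],
        "right": ranges_m[2 * third:],
    }
    # A sector counts as free only if even its closest reading is beyond the clearance.
    free = [name for name, values in sectors.items() if min(values) >= min_clearance_m]
    if not free:
        return "no free path"
    return "free path on the " + " and ".join(free)

scan = [2.0] * 400 + [0.4] * 400 + [0.6] * 400  # 1,200 simulated depth points
print(describe_free_space(scan))
```

Twelve hundred numbers collapse into one statement the decision layer can use. Taking the minimum per sector is a deliberately cautious choice: one close reading is enough to mark a sector as blocked.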

Second comes deciding. A decision is a choice among possible actions based on current information, goals, and constraints. The robot may decide to stop, speed up, turn, grab an object, or ask for more information. Decisions can come from fixed rules, a planner, a learned model, or a mixture of methods. Good decisions are not just about achieving a task; they must also respect safety, energy use, timing, and uncertainty.

Third comes acting. Decisions only matter when translated into physical commands. A motor controller may receive a target speed. A robotic arm may receive a joint angle. A gripper may receive a close command. In real systems, action is rarely perfect. Wheels slip. Motors lag. Loads vary. That is why the loop must repeat: the robot acts, senses the result, and corrects itself.

A common beginner mistake is to skip the distinction between these layers. If you do not know whether your problem is bad data, poor interpretation, weak decision logic, or unreliable action, debugging becomes difficult. Experienced robot engineers trace failure through the full chain: what was sensed, how it was interpreted, why that led to the chosen action, and what the robot actually did.

Robotics is often described as software meeting hardware, but that phrase is too gentle. Robotics is really software negotiating with reality. In pure software systems, the environment is often structured and repeatable. In robots, the environment is messy. Floors are uneven, lighting changes, objects move, batteries drain, and sensors drift over time. The same code can behave very differently when the robot is bumped, a wheel slips, or a camera lens gets dusty.

This is why robotics engineers think in terms of uncertainty and feedback. You do not simply tell a mobile robot, “go forward one meter,” and assume success. You measure wheel rotation with encoders, perhaps compare with inertial sensors, maybe use vision or landmarks, and constantly check whether the robot is still on track. A robot that ignores feedback is usually brittle. A robot that uses feedback can correct small errors before they become failures.
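The sketch below contrasts an open-loop "go forward one meter" with a version that keeps checking the encoder. The toy wheel's motor delivers only a fraction of each commanded step (for example because of load or a weak battery), and the encoder counts the rotation that really happened. The 1,000 ticks-per-meter resolution and the 80% efficiency are assumptions. Encoders catch this kind of under-rotation, but not wheel slip, which is one reason engineers also compare against inertial sensors, vision, or landmarks.

```python
TICKS_PER_METER = 1000  # hypothetical: 1 encoder tick per millimeter of wheel travel

class SimulatedWheel:
    """Toy wheel whose motor delivers only `efficiency` of each commanded step.
    The encoder counts the rotation that actually happened."""
    def __init__(self, efficiency):
        self.efficiency = efficiency
        self.ticks = 0.0

    def command_step(self, commanded_ticks=1.0):
        self.ticks += commanded_ticks * self.efficiency

def drive_open_loop(wheel, target_m):
    # Send exactly the steps that *should* cover target_m and assume success.
    for _ in range(int(target_m * TICKS_PER_METER)):
        wheel.command_step()
    return wheel.ticks / TICKS_PER_METER

def drive_closed_loop(wheel, target_m, max_steps=10_000):
    # Keep stepping until the encoder confirms the distance, or give up safely.
    steps = 0
    while wheel.ticks / TICKS_PER_METER < target_m and steps < max_steps:
        wheel.command_step()
        steps += 1
    return wheel.ticks / TICKS_PER_METER

open_result = drive_open_loop(SimulatedWheel(0.8), 1.0)      # falls short, about 0.8 m
closed_result = drive_closed_loop(SimulatedWheel(0.8), 1.0)  # about 1.0 m
print(round(open_result, 3), round(closed_result, 3))
```

The `max_steps` limit matters: a closed loop that never reaches its target (a wheel jammed against a wall) should stop and report a problem rather than spin forever.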

Physical systems also introduce timing concerns. If sensor updates are too slow, the robot reacts late. If computation is too heavy, decisions arrive after the world has changed. If actuator commands are jerky, the robot may wobble or overshoot. Engineering judgment means matching complexity to the job. A simple obstacle-avoidance robot may need only lightweight logic running quickly and reliably. A self-driving platform may justify more complex perception, but only if the system can still respond within safe time limits.
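A small calculation shows why timing matters. During the total delay from sensing through computation to actuation, the robot keeps moving at full speed. Only then does it start braking. Assuming constant deceleration, the distance needed to stop is

reaction distance + braking distance = v × latency + v² / (2a).

The sketch below uses assumed values: 1.0 m/s speed and 2.0 m/s² deceleration.

```python
def stopping_distance(speed_mps, latency_s, decel_mps2):
    """Distance covered from the moment an obstacle appears until the robot stops.
    Assumes full speed during the delay, then constant-deceleration braking."""
    reaction = speed_mps * latency_s              # still moving while the loop catches up
    braking = speed_mps ** 2 / (2 * decel_mps2)   # standard constant-deceleration result
    return reaction + braking

for latency in (0.05, 0.2, 0.5):
    print(latency, round(stopping_distance(1.0, latency, 2.0), 3))
```

With these numbers, increasing the delay from 0.05 s to 0.5 s raises the stopping distance from 0.3 m to 0.75 m. That is why a slower but "smarter" perception step can make a robot less safe unless its speed is reduced to match.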

Another practical lesson is that the environment often supplies the hardest test cases. A robot that works in a clean lab may fail in a hallway with echoes, sunlight, clutter, or people crossing unexpectedly. Beginners sometimes blame the algorithm first. In many cases, the deeper issue is mismatch between assumptions and reality. Strong robot design begins by asking what the world will do to the system, not just what the system intends to do to the world.

It is important to separate automation from learning, because many systems are useful robots without being learning robots. Automation means following predefined logic. If sensor A detects a wall, stop. If battery is low, return to the charging dock. If line sensor sees black, steer right. These behaviors can be effective, reliable, and easy to verify. In fact, many industrial robots depend heavily on well-tested rule-based control because predictability matters.
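The three example rules translate directly into fixed logic. In this sketch, the 20% low-battery threshold and the priority order (wall check first) are assumptions chosen for illustration.

```python
def rule_based_controller(wall_detected, battery_pct, line_sensor_sees_black,
                          low_battery_pct=20):
    """Fixed rules, checked in priority order. The same inputs always give the same output."""
    if wall_detected:                    # safety rule takes priority
        return "stop"
    if battery_pct < low_battery_pct:    # hypothetical low-battery threshold
        return "return_to_dock"
    if line_sensor_sees_black:
        return "steer_right"
    return "go_straight"

print(rule_based_controller(False, 80, True))
```

Nothing in this function changes with experience. That is exactly what makes it easy to test exhaustively and to verify, and also what separates it from the adaptive behavior described next.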

Adaptation is different. An adaptive or learning robot changes its behavior based on experience, data, or feedback. It does not just execute a fixed map from input to action; it updates that map. For instance, a delivery robot may learn that one corridor is often crowded at certain times and choose an alternative route. A robotic arm may improve its grasping strategy after repeated failures on slippery objects. The key idea is improvement over time, not just repeated execution.

Rule-based control and learning-based behavior are not enemies. They are usually partners. Rule-based control provides safety rails, minimum performance, and understandable fallback behavior. Learning-based components can add flexibility where fixed rules become too rigid. Good engineering often asks: which parts of the problem are stable enough for explicit rules, and which parts vary enough that adaptation is worth the risk and complexity?

A common mistake is to label any robot that uses sensors as “AI.” A thermostat senses temperature and changes output, but that does not make it a learning system. Likewise, a robot vacuum that follows fixed obstacle rules may be automated but not adaptive. To call a robot learning-based, you should be able to point to what changes with experience, what feedback drives that change, and how improvement is measured. Without those elements, there is sensing and action, but not learning in the meaningful robotics sense.

Learning matters because the world does not stay still long enough for fixed rules to cover every case. A robot deployed in a real environment faces variation in lighting, surface texture, human movement, object placement, weather, wear, and sensor quality. If the environment changes faster than engineers can manually rewrite rules, learning becomes valuable. It allows the robot to adjust from feedback rather than waiting for a human programmer to anticipate every possibility.

Feedback is the engine of this improvement. A robot needs some signal that tells it whether a result was good or bad. That feedback may be explicit, such as “the object was picked up successfully,” or indirect, such as reduced collision rate or faster completion time. Over time, the robot can connect patterns in sensor input to outcomes and refine its choices. This does not mean the robot understands the world like a human. It means it improves a practical mapping from observations to actions.

Consider a robot navigating a building. A rule-based system might say, “if obstacle ahead, turn right.” That can work in simple spaces but fail in narrow corridors, crowded areas, or unusual layouts. A learning system may discover that certain visual patterns predict dead ends, that slowing down near people avoids future delays, or that one route is consistently more reliable during busy periods. Learning helps when relationships are too complex, variable, or subtle for hand-coded logic alone.

Still, learning is not automatically better. It requires data, careful evaluation, and protection against unsafe behavior. The practical outcome is balance. Use rules for hard constraints and safety-critical responses. Use learning where adaptation to changing environments genuinely improves robustness, efficiency, or success rate. That combination is the hallmark of many effective modern robots.

Imagine a small wheeled robot whose job is to drive down a hallway without hitting obstacles. This example is simple, but it captures the full sense-think-act loop and shows where learning can enter. The robot has three front-facing distance sensors, wheel motors, wheel encoders, and a battery monitor. Its goal is to move forward efficiently while avoiding collisions.

The process begins with sensing. Every fraction of a second, the robot reads the left, center, and right distance sensors. Those raw numbers are data. The software then interprets them into information such as “obstacle close in front” or “more free space on the left.” It also checks encoders to estimate whether the robot is moving as expected. If motor commands were sent but encoder readings show little movement, the robot may be stuck or slipping.
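The "commanded to move but barely moving" check can be written as a comparison between expected and measured travel over one loop interval. In this sketch, the 1 mm per tick resolution and the 20% ratio are hypothetical tuning values.

```python
def is_stuck(commanded_speed_mps, encoder_ticks_delta, dt_s,
             meters_per_tick=0.001, min_ratio=0.2):
    """Flag a likely stuck robot when the wheels were told to move but the
    encoders report far less motion than expected over the last interval."""
    expected_m = abs(commanded_speed_mps) * dt_s
    if expected_m == 0:
        return False  # no motion was requested, so no motion is expected
    measured_m = abs(encoder_ticks_delta) * meters_per_tick
    return measured_m < min_ratio * expected_m

print(is_stuck(0.3, encoder_ticks_delta=2, dt_s=0.1))
```

Here the robot expected about 30 mm of travel but the encoder saw 2 mm, so the check fires. A real system would usually require this for several consecutive cycles before reacting, to avoid false alarms during startup.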

Next comes decision-making. A rule-based version might use simple logic: if the center sensor is clear, go forward; if blocked, turn toward the side with more open space; if both sides are blocked, stop and rotate. That is automation. Now add a learning element. Suppose the robot records which turning choices lead to smooth progress and which often result in repeated corrections. Over time, it can prefer actions that worked better in similar sensor situations. Feedback may come from travel speed, number of near-collisions, or how often it becomes trapped.
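One simple way to "prefer actions that worked better in similar sensor situations" is to keep a running average score for each (situation, action) pair. The robot picks the best-scoring action, with occasional random exploration. This is a minimal bandit-style sketch, not a full reinforcement learning method. The situation labels, the exploration rate, and the weights in the hypothetical `feedback_score` function are assumptions.

```python
import random
from collections import defaultdict

class TurnPreferenceLearner:
    """Running average score per (situation, action); picks the best so far,
    and with probability epsilon tries a random action instead."""

    def __init__(self, actions, epsilon=0.1, seed=0):
        self.actions = list(actions)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.totals = defaultdict(float)
        self.counts = defaultdict(int)

    def average(self, situation, action):
        n = self.counts[(situation, action)]
        return self.totals[(situation, action)] / n if n else 0.0

    def choose(self, situation):
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.actions)  # explore
        return max(self.actions, key=lambda a: self.average(situation, a))  # exploit

    def record(self, situation, action, score):
        self.totals[(situation, action)] += score
        self.counts[(situation, action)] += 1

def feedback_score(progress_m, near_collisions, got_trapped):
    """Hypothetical feedback: reward progress, penalize near-collisions and getting trapped."""
    return progress_m - 0.5 * near_collisions - (2.0 if got_trapped else 0.0)

learner = TurnPreferenceLearner(["turn_left", "turn_right"], epsilon=0.0)
learner.record("center_blocked", "turn_right", feedback_score(0.2, near_collisions=2, got_trapped=False))
learner.record("center_blocked", "turn_left", feedback_score(1.0, near_collisions=0, got_trapped=False))
print(learner.choose("center_blocked"))
```

The rule-based controller could still sit around this learner. For example, a "both sides blocked, stop and rotate" rule could override whatever the learner prefers.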

Finally comes action. The robot sends speed commands to the left and right motors. As it moves, new sensor readings arrive, and the cycle repeats. If the hallway is bright in one area and dim in another, or if boxes appear in new positions, the robot keeps updating its behavior from fresh input. The practical lesson is that robot behavior is not one big decision. It is many small loops of sensing, interpreting, choosing, and correcting.

This example also highlights common mistakes. If the robot crashes, the problem might be bad sensors, poor thresholds, slow decisions, weak motor control, or flawed feedback. Effective engineers do not immediately say, “the AI failed.” They inspect the whole chain. That habit of tracing behavior from sensor input to physical action is the foundation for understanding all learning robots that follow in this course.

1. Which description best matches the chapter's definition of a robot?

2. In the chapter's example, what is the difference between information and a decision?

3. What is the correct order of the sense-think-act loop described in the chapter?

4. According to the chapter, what makes a robot a learning robot rather than just an automated one?

5. Why do engineers usually build a stable control loop before adding learning?

A robot cannot make useful decisions unless it has some connection to the outside world. That connection comes through sensors. Sensors are the robot's way of noticing light, distance, touch, motion, temperature, position, and many other conditions. In everyday language, we can think of sensors as the robot's eyes, ears, skin, and inner sense of balance. But unlike humans, robots do not automatically understand what those signals mean. They receive measurements first, then software turns those measurements into something useful.

This chapter builds a practical view of robot sensing. We will look at what sensor data is, why it matters, and how raw electrical signals become usable input for decisions and actions. We will also separate four ideas that beginners often mix together: data, information, decisions, and actions. Data is a raw reading such as a camera pixel value or a distance measurement. Information is a more useful interpretation, such as "there is an obstacle 40 centimeters ahead." A decision is a choice, such as "slow down and turn left." An action is what the robot actually does through motors, wheels, arms, or brakes.

Good robot behavior depends on this chain working well. If the sensor data is poor, the information will be weak. If the information is weak, the decision may be wrong. If the decision is wrong, the action can fail. This is why engineers spend so much time choosing sensors, checking their errors, filtering noisy signals, and combining measurements from more than one source. A robot that senses poorly often behaves unpredictably, even if the code looks correct.

Another key idea in this chapter is feedback. Robots do not simply sense once and act once. They usually work in a loop: sense, interpret, decide, act, and sense again. That repeated checking allows the robot to improve its behavior. For example, a line-following robot reads reflectance sensors, steers toward the line, checks whether it moved too far, and corrects itself on the next cycle. Feedback turns sensing into control.
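A line follower's feedback loop can be very small. This sketch assumes two reflectance sensors whose readings are already normalized so that 1.0 means "over the dark line" and 0.0 means "over the light floor". The base speed and gain `kp` are hypothetical. The steering correction is proportional to how unevenly the line sits under the two sensors.

```python
def line_follow_step(left_reflect, right_reflect, base_speed=0.4, kp=0.5):
    """One cycle of a proportional line follower for a two-wheeled robot."""
    error = left_reflect - right_reflect  # > 0: the line is more under the left sensor
    turn = kp * error
    # Slow the left wheel and speed up the right one to turn left (and vice versa),
    # clipping both to the 0..1 motor power range.
    left_speed = min(max(base_speed - turn, 0.0), 1.0)
    right_speed = min(max(base_speed + turn, 0.0), 1.0)
    return left_speed, right_speed

print(line_follow_step(0.9, 0.1))
```

Each call is one pass of the loop. On the next cycle, fresh readings show whether the correction was too small or overshot the line. If the gain is too high, the robot wobbles. If it is too low, the robot drifts off on curves.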

We will also compare simple rule-based behavior with learning-based behavior. A rule-based robot might use a fixed instruction such as "if the front distance is less than 20 cm, stop." A learning-based robot may use examples or training data to recognize patterns such as walls, doors, or pedestrians. Both approaches still depend on sensors. The difference is in how the robot converts sensor input into choices. In practice, many useful robots combine both methods: learned perception with rule-based safety checks.
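The combination of learned perception with a rule-based safety check might look like the sketch below. The 20 cm stop rule comes from the text. The label and confidence are assumed to come from some trained classifier (hypothetical here), and the labels and the 0.5 confidence cutoff are examples only.

```python
STOP_DISTANCE_M = 0.20  # fixed rule: stop if the front distance is under 20 cm

def choose_action(front_distance_m, perceived_label, confidence):
    """Rule-based safety check first, then behavior shaped by a learned model's output.
    perceived_label and confidence are assumed outputs of a hypothetical classifier."""
    # 1. The safety rule does not depend on the learned model at all.
    if front_distance_m < STOP_DISTANCE_M:
        return "stop"
    # 2. Otherwise, let the learned perception influence the choice.
    if perceived_label == "pedestrian" and confidence >= 0.5:
        return "slow_down"
    if perceived_label == "door" and confidence >= 0.5:
        return "approach_door"
    return "continue"

print(choose_action(0.15, "door", 0.9))
```

Ordering the checks this way means a confident but wrong classifier cannot talk the robot out of stopping when the distance sensor reports something very close.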

As you read, keep an engineering mindset. Ask practical questions: What exactly is being measured? How often is it measured? How accurate is it? What can cause bad readings? What happens if the sensor briefly fails? Those questions matter more than fancy terminology. Robots succeed when sensing is reliable enough for the task, not when the sensor list looks impressive on paper.

By the end of this chapter, you should be able to explain in simple terms how robots sense the world, identify common sensors and their uses, understand why readings are imperfect, and describe how raw signals become useful input for basic robot choices.

Practice note for Learn what sensor data is and why it matters: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Identify common sensors used in robots: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Understand noise, errors, and uncertainty: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

A robot lives in a physical world, not in a perfect digital one. Motors slip, floors change, lighting varies, and objects appear unexpectedly. Sensors give the robot access to that changing reality. Without sensors, a robot can only follow a prewritten sequence and hope the world matches its assumptions. That can work in a tightly controlled factory process, but it fails quickly in homes, streets, warehouses, farms, and hospitals.

It helps to think of sensing as the first stage in a workflow. First, the robot collects data. Next, software transforms that data into information. Then a controller or decision system chooses what to do. Finally, the robot acts. A simple example is a robot vacuum. A bumper switch produces raw data when pressed. The software interprets that as contact with an obstacle. The controller decides to reverse and rotate. The motors carry out the action. This is the full chain from sensing to behavior.

Beginners often make the mistake of assuming sensors directly tell the robot what to do. They do not. Sensors only measure something. Meaning comes later. A camera does not output "chair" or "door" by itself. It outputs pixel values. An encoder does not output "the robot reached the goal." It outputs counts of wheel rotation. Engineers must decide how to convert those measurements into useful information for the task.

Engineering judgment matters here. The right sensor depends on the job. If the robot only needs to know whether it touched a wall, a simple switch may be enough. If it must estimate distance before collision, a distance sensor is better. If it must understand a cluttered scene, it may need a camera or lidar. More sensing is not always better. Extra sensors increase cost, wiring, processing load, calibration effort, and failure points.

Practical teams begin with a question: what must the robot know to behave safely and effectively? Then they choose the minimum sensing needed to answer that question well enough. That phrase, well enough, is important. Robotics is often about adequacy, not perfection. A warehouse robot does not need to understand the world like a human. It needs measurements reliable enough to navigate aisles, avoid collisions, and reach shelves repeatedly.

Feedback closes the loop between sensing and action. A robot drives forward, senses whether it stayed on course, and adjusts. This repeated loop is the basis of control. In rule-based systems, the loop may be simple and explicit. In learning-based systems, the interpretation stage may use a trained model. But either way, the robot still depends on sensors as its connection to reality.

Robots use many sensor types, but a few appear again and again because they solve common problems. Cameras capture images and are useful when the robot needs rich visual information: object recognition, lane following, reading markers, checking product quality, or identifying where an arm should place a tool. Cameras provide a lot of data, but they also require significant processing. Lighting changes, shadows, glare, and motion blur can reduce reliability, so engineers should not assume a camera alone is enough for all situations.

Distance sensors answer a more focused question: how far away is something? Common examples include ultrasonic sensors, infrared range sensors, and lidar. Ultrasonic sensors send sound waves and measure the echo time. They are inexpensive and useful for obstacle detection, but may struggle with soft surfaces or angled objects. Infrared sensors can work well at short range, though some are sensitive to material properties or ambient light. Lidar often provides more precise spatial information and can scan a wide area, but usually costs more.

Touch sensors are among the simplest and most robust sensors in robotics. A bumper switch, force sensor, or pressure pad tells the robot it has made contact or that pressure has exceeded a threshold. Touch sensing is valuable as a safety layer because it does not require estimating from a distance. If the robot physically contacts an object, the signal is clear. A common mistake is to use touch as the primary way to detect obstacles in tasks where collision should be avoided. Touch is often best as a backup or confirmation sensor.

Motion sensors help the robot understand how it is moving. An accelerometer measures acceleration. A gyroscope measures the rate of rotation (angular velocity). Together, often inside an IMU, they help estimate tilt, turning, vibration, and short-term movement. These sensors are especially useful in drones, self-balancing robots, and mobile platforms that must maintain orientation.

In practical design, sensor choice should follow the task, environment, and risk level. A line follower may only need reflectance sensors. A delivery robot may need cameras plus distance sensing plus wheel encoders. A warehouse arm may rely on vision for locating items and force sensing for safe gripping. Sensors are tools, and good robotic design means matching the tool to the decision the robot must make.

Many robots must know not only what surrounds them, but also where they are and how they are moving. Position, speed, and direction are basic quantities for navigation and control. One of the most common sensors for this job is the wheel encoder. Encoders measure wheel rotation by producing pulses or counts. From those counts, the robot can estimate how far it has traveled. By comparing the left and right wheels, it can estimate whether it moved straight or turned.
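For a two-wheeled (differential-drive) robot, turning encoder counts into a position estimate is a short calculation. This is commonly called dead reckoning or odometry. The encoder resolution, wheel radius, and wheel spacing below are hypothetical values for a small robot.

```python
import math

TICKS_PER_REV = 360     # hypothetical encoder counts per wheel revolution
WHEEL_RADIUS_M = 0.03   # hypothetical wheel radius
WHEEL_BASE_M = 0.15     # hypothetical distance between the two wheels

def ticks_to_meters(ticks):
    return ticks / TICKS_PER_REV * 2 * math.pi * WHEEL_RADIUS_M

def update_pose(x, y, theta, left_ticks, right_ticks):
    """Update (x, y, heading in radians) from the encoder counts since the last update."""
    d_left = ticks_to_meters(left_ticks)
    d_right = ticks_to_meters(right_ticks)
    d_center = (d_left + d_right) / 2             # distance moved by the robot's center
    d_theta = (d_right - d_left) / WHEEL_BASE_M   # unequal wheel travel means a turn
    # Use the mid-step heading for a slightly better estimate on curved steps.
    x += d_center * math.cos(theta + d_theta / 2)
    y += d_center * math.sin(theta + d_theta / 2)
    theta += d_theta
    return x, y, theta

print(update_pose(0.0, 0.0, 0.0, left_ticks=360, right_ticks=360))  # one full revolution, straight
print(update_pose(0.0, 0.0, 0.0, left_ticks=300, right_ticks=360))  # right wheel farther: turns left
```

Every number here depends on the assumption that wheel rotation equals ground motion, which is the weakness discussed next.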

This sounds simple, but there is an important lesson: the sensor usually measures something indirect. The encoder measures wheel rotation, not actual ground movement. If the wheel slips on dust, carpet, or a wet surface, the encoder may report movement that did not really happen. That is why engineers say every sensor has assumptions. Encoders assume the wheel motion reflects robot motion, and that assumption is only partly true.

Speed can also be estimated from encoder counts over time. If the counts rise quickly, the wheel is rotating faster. Controllers use this information to regulate motor output. For example, if one wheel turns slower than expected, the robot can increase power to that side. This is feedback in action. The robot senses a difference between target speed and actual speed, then corrects itself.
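The sketch below estimates wheel speed from encoder ticks counted over one interval. It then adjusts motor power with a feedforward guess plus a proportional correction. The tick size, interval, feedforward value, and gain are assumptions. Practical speed controllers often add integral and derivative terms (PID), but the proportional idea is the core of the feedback described above.

```python
def wheel_speed_mps(tick_delta, dt_s, meters_per_tick):
    """Estimate wheel speed from how many encoder ticks arrived in the last interval."""
    return tick_delta * meters_per_tick / dt_s

def motor_power(target_mps, measured_mps, feedforward=0.5, kp=2.0):
    """Feedforward guess plus a proportional correction, clipped to the 0..1 power range.
    A wheel slower than the target gives a positive error, so power goes up."""
    error = target_mps - measured_mps
    return min(max(feedforward + kp * error, 0.0), 1.0)

measured = wheel_speed_mps(tick_delta=40, dt_s=0.1, meters_per_tick=0.0005)
print(round(measured, 3), round(motor_power(0.25, measured), 3))
```

Here the wheel runs at about 0.2 m/s against a 0.25 m/s target, so the controller raises power from 0.5 to about 0.6 for the next cycle.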

Direction and orientation are often estimated with gyroscopes and compasses, and larger outdoor systems often add GPS for global position. A gyroscope is useful for short-term turning measurement, but it can drift over time. A compass can help estimate heading, though nearby metal or electrical equipment may disturb it. GPS can estimate global position outdoors, but standard GPS is often too coarse for precise indoor navigation or close maneuvering.

In practice, no single sensor gives a perfect answer. Engineers combine relative measures, like encoder counts, with absolute references, like GPS or landmarks, when available. For indoor robots, visual markers, maps, or lidar-based localization may help correct drift. For small educational robots, even a simple combination of encoders and a gyroscope can produce much better motion estimates than either sensor alone.
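A complementary filter is one common, simple way to blend a relative measurement with an absolute one. In this sketch, a gyroscope (good short-term, drifts long-term) is combined with an absolute heading reference such as a compass or landmark fix. The 0.5 deg/s gyro bias, the 0.1 s time step, the blend factor `alpha`, and the noise-free absolute reading are all assumptions. Angle wrap-around near ±180° is ignored for clarity.

```python
def fuse_heading(prev_heading_deg, gyro_rate_dps, dt_s, absolute_heading_deg, alpha=0.98):
    """Complementary filter: follow the gyro for short-term changes, and let an
    absolute reference slowly pull the estimate back to limit long-term drift."""
    gyro_estimate = prev_heading_deg + gyro_rate_dps * dt_s
    return alpha * gyro_estimate + (1 - alpha) * absolute_heading_deg

def simulate(steps=1000, dt_s=0.1, gyro_bias_dps=0.5):
    """Robot stands still facing 0 degrees; the gyro reports only its bias."""
    gyro_only = 0.0
    fused = 0.0
    for _ in range(steps):
        measured_rate = gyro_bias_dps
        gyro_only += measured_rate * dt_s   # pure integration: error keeps growing
        fused = fuse_heading(fused, measured_rate, dt_s, absolute_heading_deg=0.0)
    return gyro_only, fused

gyro_only, fused = simulate()
print(round(gyro_only, 2), round(fused, 2))
```

After 100 simulated seconds, the gyro-only estimate has drifted about 50 degrees. The fused estimate settles near 2.45 degrees and stays bounded. A larger `alpha` trusts the gyro more, so it smooths out a noisy compass but corrects drift more slowly.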

A common beginner mistake is to trust position estimates too far into the future without correction. Small errors accumulate. A robot whose heading is off by one degree after each turn carries every one of those errors into each meter it drives afterward, so after several turns and several meters it can miss its destination by a wide margin. The practical lesson is clear: movement sensing is essential, but it must be checked and corrected regularly if the robot is to behave reliably.
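To put a number on that accumulation, this sketch compares an intended route with the same route driven when every turn is off by one degree and nothing corrects it. The route, five 4 m segments joined by alternating 90° left and right turns, is a made-up example.

```python
import math

def final_position_error(segment_lengths_m, turn_angles_deg, heading_error_per_turn_deg):
    """Distance between the intended end point and the actual end point when
    every turn is off by the same small amount and no correction is applied."""
    def end_point(extra_deg):
        x = y = heading = 0.0
        for i, length in enumerate(segment_lengths_m):
            if i > 0:
                heading += math.radians(turn_angles_deg[i - 1] + extra_deg)
            x += length * math.cos(heading)
            y += length * math.sin(heading)
        return x, y

    ix, iy = end_point(0.0)                          # where the robot should end up
    rx, ry = end_point(heading_error_per_turn_deg)   # where it actually ends up
    return math.hypot(rx - ix, ry - iy)

route = [4.0] * 5
turns = [90, -90, 90, -90]
print(round(final_position_error(route, turns, 1.0), 3))
```

On this 20 m route, the robot ends roughly half a meter from where it believes it is, enough to miss a doorway. A landmark or map-based correction partway through would reset the growing heading error.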

Real sensor readings are never perfectly clean. They contain noise, bias, delays, drift, missed detections, and occasional strange values. Understanding this is one of the most important steps in robotics. A beginner may look at a specification sheet and assume a sensor gives exact truth. In reality, a sensor gives an estimate influenced by hardware limits and environmental conditions.

Noise is random variation in readings. A distance sensor pointed at a fixed object may report slightly different values from one moment to the next. Bias is a more consistent error, such as a scale that always reads 2 units high. Drift is a slow change in the error over time, common in gyroscopes and some other motion sensors. Latency means the reading arrives slightly late, which matters when the robot moves quickly. Resolution limits how small a change the sensor can detect.

The environment causes many problems. Cameras can fail in dim light, intense glare, or smoke. Ultrasonic sensors may struggle with soft materials that absorb sound. Infrared sensors may be affected by sunlight. Encoders can mislead when wheels slip. Even touch sensors can bounce mechanically, producing multiple rapid signals from a single contact.

Uncertainty does not mean sensors are useless. It means robot software must treat readings carefully. Good engineers ask: how wrong could this measurement be, and what would happen if I trusted it too much? This question guides safe design. For example, a robot may use a conservative stopping distance if a front sensor is noisy. Or it may require several consistent readings before deciding an obstacle is truly present.
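The idea of requiring several consistent readings can be sketched in a few lines. The 30 cm threshold, the count of three readings, and the class name `ObstacleConfirmer` are illustrative assumptions.

```python
class ObstacleConfirmer:
    """Report an obstacle only after `required` consecutive close readings."""

    def __init__(self, threshold_cm=30.0, required=3):
        self.threshold_cm = threshold_cm
        self.required = required
        self.count = 0  # length of the current streak of close readings

    def update(self, distance_cm):
        if distance_cm < self.threshold_cm:
            self.count += 1
        else:
            self.count = 0  # a single far reading resets the streak
        return self.count >= self.required


confirmer = ObstacleConfirmer(threshold_cm=30.0, required=3)
print([confirmer.update(r) for r in [25, 80, 25, 24, 26]])
# [False, False, False, False, True]
```

The tradeoff is delay: confirming over three readings means reacting a few cycles later, so a design like this is usually paired with a conservative speed or stopping distance.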

Another common mistake is ignoring calibration. Sensors often need setup and checking. A line sensor may need to learn what black and white look like under local lighting. A gyro may need a still moment at startup to estimate its baseline. If calibration is skipped, the robot may behave erratically even though the hardware is not broken.
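A minimal sketch of both calibration steps follows, assuming the gyro reports degrees per second and the line sensor reports a single brightness number. The sample values and function names are hypothetical.

```python
def estimate_gyro_bias(still_samples_deg_s):
    """Average gyro readings taken while the robot is held still.
    A perfect gyro would read 0 deg/s, so the average is the bias."""
    if not still_samples_deg_s:
        raise ValueError("need at least one sample from the still period")
    return sum(still_samples_deg_s) / len(still_samples_deg_s)


def corrected_rate(raw_deg_s, bias_deg_s):
    """Remove the estimated bias from a live gyro reading."""
    return raw_deg_s - bias_deg_s


def line_threshold(black_reading, white_reading):
    """Midpoint between line-sensor readings taken over black and white
    surfaces under the local lighting."""
    return (black_reading + white_reading) / 2


bias = estimate_gyro_bias([0.5, 0.7, 0.6, 0.6])   # hypothetical still-period samples
print(round(corrected_rate(10.6, bias), 2))       # 10.0
print(line_threshold(black_reading=200, white_reading=800))  # 500.0
```

Skipping the still period would leave the 0.6 deg/s bias in every reading, and once integrated into a heading estimate, that bias becomes steady drift.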

Practical robotics does not aim to eliminate uncertainty completely. Instead, it manages uncertainty. That means choosing sensors with suitable limits, placing them carefully, testing them in realistic environments, and writing control logic that fails safely. Reliable robots are not the ones with perfect measurements. They are the ones designed to handle imperfect measurements well.

Because sensor readings are imperfect, robots often clean the data before using it. This process is called filtering. At a basic level, filtering means reducing random noise, rejecting impossible values, and smoothing fast fluctuations so the robot can make steadier decisions. The goal is not to hide reality. The goal is to make the signal more useful for the task.

One simple method is averaging. If a distance sensor gives slightly different values every moment, the robot can average the last few readings. This often reduces noise and makes the estimate more stable. But there is a tradeoff: averaging introduces delay. If the robot is moving fast, too much smoothing can make it react too slowly. Engineering judgment means choosing enough filtering to reduce noise, but not so much that the robot becomes sluggish.
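A moving average over the last few readings can be sketched with a fixed-length queue. The window size of 3 and the sample distances are assumptions chosen to make the delay visible.

```python
from collections import deque


class MovingAverage:
    """Average of the last `window` readings. Larger windows are smoother but lag more."""

    def __init__(self, window=5):
        self.readings = deque(maxlen=window)  # old readings fall off automatically

    def update(self, value):
        self.readings.append(value)
        return sum(self.readings) / len(self.readings)


ma = MovingAverage(window=3)
print([ma.update(d) for d in [50, 52, 48, 20]])
# [50.0, 51.0, 50.0, 40.0]
```

The last reading says an object is at 20 cm, but the filtered value is still 40 cm. That gap is the delay the paragraph above warns about.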

Another common method is a median filter. This is especially helpful when a sensor occasionally produces one extreme bad value. Instead of averaging, the robot takes several recent readings and selects the middle one, which rejects sudden spikes. Thresholding is also common, for example discarding any reading outside the sensor's valid range. Touch sensors need a time-based fix: if a contact bounces rapidly, software may ignore repeated changes for a short time after the first accepted change. This is called debouncing.
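Both techniques fit in a short sketch. The window size, the 50 ms lockout time, and the class names are illustrative assumptions; real debounce times depend on the switch hardware.

```python
import statistics
from collections import deque


class MedianFilter:
    """Return the median of the last `window` readings, which ignores single spikes."""

    def __init__(self, window=5):
        self.readings = deque(maxlen=window)

    def update(self, value):
        self.readings.append(value)
        return statistics.median(self.readings)


class Debouncer:
    """Accept a change in a touch signal only if at least `lockout_s` seconds
    have passed since the last accepted change."""

    def __init__(self, lockout_s=0.05):
        self.lockout_s = lockout_s
        self.state = False
        self.last_change_s = None

    def update(self, raw_pressed, time_s):
        if raw_pressed != self.state:
            if self.last_change_s is None or time_s - self.last_change_s >= self.lockout_s:
                self.state = raw_pressed
                self.last_change_s = time_s
        return self.state


mf = MedianFilter(window=3)
print([mf.update(d) for d in [50, 51, 400, 49]])   # [50, 50.5, 51, 51]

db = Debouncer(lockout_s=0.05)
events = [(0.000, True), (0.005, False), (0.010, True), (0.100, False)]
print([db.update(p, t) for t, p in events])        # [True, True, True, False]
```

The 400 cm spike never reaches the output of the median filter, and the brief bounce at 5 ms is ignored while the real release at 100 ms is accepted.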

Cleaning data also includes unit conversion and normalization. Raw signals may start as voltages, pulse counts, or pixel values. Software converts these into more meaningful forms such as centimeters, degrees per second, or a brightness score. This is where raw data becomes useful input. The robot is still not making a full decision yet, but it now has information in a form that control logic or learning algorithms can use.
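The conversions below are a sketch under stated assumptions: a 10-bit analog-to-digital converter with a 5 V reference, a gyro whose datasheet gives 131 raw units per degree per second, and 8-bit camera pixels. Check the actual datasheet values for any real hardware.

```python
ADC_MAX = 1023              # assumed 10-bit analog-to-digital converter
V_REF = 5.0                 # assumed reference voltage in volts
GYRO_LSB_PER_DEG_S = 131.0  # assumed gyro scale factor (raw units per deg/s)


def adc_to_volts(raw):
    """Convert a raw ADC count into volts."""
    return raw * V_REF / ADC_MAX


def gyro_raw_to_deg_s(raw):
    """Convert a raw gyro value into degrees per second."""
    return raw / GYRO_LSB_PER_DEG_S


def brightness_score(pixel_values):
    """Normalize 8-bit pixel values (0 to 255) into one score between 0 and 1."""
    return sum(pixel_values) / (255 * len(pixel_values))


print(adc_to_volts(1023))          # 5.0
print(gyro_raw_to_deg_s(262))      # 2.0
print(brightness_score([0, 255]))  # 0.5
```

After these steps, the rest of the software can work in volts, degrees per second, and a 0 to 1 brightness scale instead of hardware-specific raw numbers.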

Beginners often apply filters without checking whether the result still matches the real task. If a line-following robot smooths too heavily, it may miss sharp turns. If a balancing robot delays its IMU signal, it may fall. Always test with the robot moving in realistic conditions, not just with sensors sitting still on a table.

In practice, simple filters solve many real problems. A moving average, median filter, calibration step, and basic range check can dramatically improve behavior. Clean enough data leads to more stable decisions, and more stable decisions lead to better actions.

No single sensor sees the whole world clearly. That is why many robots combine multiple sensors, a practice often called sensor fusion. The main idea is simple: one sensor can cover another sensor's weaknesses. A camera may recognize objects but struggle with exact distance. A lidar may measure distance well but not identify object type. Encoders may track short-term motion while a camera or map helps correct long-term drift.

Consider a mobile robot navigating a hallway. Wheel encoders estimate movement. A gyroscope estimates turning. A front distance sensor checks for obstacles. A camera may detect doors or markers. Each measurement alone is limited, but together they provide a more reliable picture. If the encoder suggests forward motion but the distance sensor still shows the wall at the same range, the robot may infer wheel slip or a blocked path.
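The cross-check in the hallway example can be sketched as a comparison between how far the encoders say the robot moved and how much closer the wall actually got. This assumes the robot drives straight toward a flat wall; the 3 cm tolerance and the function name `check_slip` are illustrative.

```python
def check_slip(encoder_travel_cm, front_before_cm, front_after_cm, tolerance_cm=3.0):
    """Return True if the encoders claim noticeably more forward motion than the
    change in front distance supports (possible wheel slip or a blocked path)."""
    observed_travel_cm = front_before_cm - front_after_cm
    return encoder_travel_cm - observed_travel_cm > tolerance_cm


print(check_slip(20.0, 100.0, 99.0))  # True: wheels turned, wall barely moved
print(check_slip(20.0, 100.0, 81.0))  # False: the two sensors agree
```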

Sensor fusion also helps explain the difference between rule-based and learning-based behavior. In a rule-based system, the combined sensors may feed clear conditions such as "if front distance is below 30 cm and left side is open, turn left." In a learning-based system, a model may take camera and range data together to classify a scene or predict a steering command. Even then, practical robots often keep hard safety rules outside the learned model, such as emergency stop conditions from bumper or proximity sensors.
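The sketch below puts the example rule into code and shows a safety check applied after any policy, rule-based or learned. The `learned_policy_stub` function is a hypothetical placeholder for a trained model, and the extra "stop" branch and the 10 cm emergency distance are assumptions not stated in the text.

```python
def rule_based_command(front_cm, left_open):
    """The example rule from the text, plus an assumed fallback when left is blocked."""
    if front_cm < 30 and left_open:
        return "turn_left"
    if front_cm < 30:
        return "stop"
    return "forward"


def learned_policy_stub(camera_features, range_cm):
    """Hypothetical stand-in for a trained model; here it always suggests forward."""
    return "forward"


def safe_command(proposed, bumper_pressed, proximity_cm, stop_distance_cm=10):
    """Hard safety rule that sits outside any policy and can override it."""
    if bumper_pressed or proximity_cm < stop_distance_cm:
        return "emergency_stop"
    return proposed


print(rule_based_command(25, left_open=True))                  # turn_left
proposal = learned_policy_stub(camera_features=None, range_cm=5)
print(safe_command(proposal, bumper_pressed=False, proximity_cm=5))  # emergency_stop
```

Keeping `safe_command` separate means the safety behavior stays the same even if the learned model is retrained or replaced.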

Combining sensors requires care. Their update rates may differ. Their measurements may refer to slightly different positions on the robot. Their clocks may not align. Engineers must also decide which sensor to trust more in each situation. For example, indoors a robot may rely less on GPS and more on encoders, lidar, or vision. Outdoors in open space, GPS may become much more useful.
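One common way to decide how much to trust each sensor is inverse-variance weighting: each estimate is weighted by one over its variance, so noisier sensors count for less. This is the core of a one-dimensional Kalman filter update, though a full Kalman filter also predicts motion between measurements. The variances below are made-up numbers for illustration.

```python
def fuse(estimate_a, variance_a, estimate_b, variance_b):
    """Inverse-variance weighted average of two estimates of the same quantity.
    Returns the fused estimate and its (smaller) variance."""
    weight_a = 1.0 / variance_a
    weight_b = 1.0 / variance_b
    fused = (weight_a * estimate_a + weight_b * estimate_b) / (weight_a + weight_b)
    fused_variance = 1.0 / (weight_a + weight_b)
    return fused, fused_variance


# Hypothetical: odometry says 10.0 m (variance 0.04), a coarse GPS fix says 12.0 m (variance 4.0).
position, variance = fuse(10.0, 0.04, 12.0, 4.0)
print(round(position, 2), round(variance, 4))  # 10.02 0.0396
```

Because the GPS variance is a hundred times larger, the fused position barely moves toward it. Outdoors, with a better GPS fix and drifting odometry, the weights would shift the other way.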

Feedback becomes stronger when multiple sensors are available. The robot can compare expectation with reality across several channels and adjust more intelligently. This leads to more robust behavior, especially in changing environments. The practical outcome is not perfection, but resilience. A robot that combines sensors thoughtfully is less likely to fail because one measurement became unreliable for a moment.

In real engineering, the best sensing system is usually not the most complex one. It is the one that provides enough trustworthy information for the robot to make safe, effective choices repeatedly. That is the core of robot sensing: turning imperfect measurements into useful understanding, then using feedback to guide better action.

1. What is the best description of sensor data in a robot?

2. Which example is information rather than raw data?

3. Why do engineers spend time filtering noisy signals and checking sensor errors?

4. What does feedback mean in robot behavior?

5. How does a learning-based robot differ from a rule-based robot, according to the chapter?

Robots do not merely sense the world and react at random. A useful robot must decide what to do next, when to do it, and when to stop. This chapter connects sensing, information, decisions, and action into one practical story. A robot starts with raw data from sensors such as cameras, distance sensors, wheel encoders, microphones, touch switches, or GPS. That data becomes information after it is interpreted: a wall is near, the battery is low, the path is blocked, or the target object is on the left. A decision is the selected response to that information, such as slow down, turn right, ask for help, or continue forward. An action is the physical result, created by motors, wheels, arms, grippers, or speakers.

Good robot decision making is not magic. It is a workflow. First, the robot observes. Next, it interprets what those observations mean. Then it compares choices using goals and constraints. Finally, it acts and uses feedback to check whether the action helped. This pattern appears in simple line-following robots, warehouse robots, delivery drones, and home assistants. The details differ, but the core idea is the same: robots continuously convert sensor inputs into basic choices.

Engineers often describe robot decisions using three broad approaches. In a rule-based system, the designer writes clear instructions such as “if obstacle is close, stop.” In a model-based system, the robot uses an internal representation of the world, such as a map or motion model, to predict what may happen next. In a learning-based system, the robot improves behavior from data and experience instead of relying only on hand-written rules. None of these approaches is automatically best. Practical robotics often combines them. A cleaning robot may use learned perception to identify furniture, a map-based planner to choose a route, and simple safety rules to avoid collisions.

Engineering judgment matters because the “best” decision is rarely just the fastest one. Robots must balance speed, safety, energy use, accuracy, comfort, and task success. A factory arm should move quickly, but not so quickly that it shakes a part loose. A mobile robot should reach its destination, but not by clipping a corner or running its battery flat. Designers also need to plan for mistakes. Sensors can be noisy. Models can be wrong. Learned systems can fail on unfamiliar situations. Good decision systems include checks, fallback behaviors, and feedback loops that help the robot recover.

As you read this chapter, focus on four ideas. First, robots choose actions from observations, not from perfect knowledge. Second, rules, models, and learned behavior each have strengths and limits. Third, goals tell the robot what success looks like, while constraints define what must never be violated. Fourth, even a simple decision process becomes powerful when repeated many times per second. Those repeated choices are what make a robot appear purposeful, adaptable, and intelligent.

Common beginner mistakes include treating sensor data as if it were perfect, writing too many brittle rules, ignoring battery and safety constraints, and forgetting to measure whether the chosen action actually improved the situation. Strong robot systems keep the loop closed: sense, decide, act, and check. In the sections that follow, we will build that loop piece by piece, from simple if-then logic to planning under uncertainty, and then walk through a complete beginner example step by step.

Practice note for Understand how robots choose what to do next: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Compare rules, models, and learned behavior: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

A robot never starts with a decision. It starts with observations. These observations come from sensors, and they are usually incomplete, noisy, and local. A front distance sensor may report that an object is 40 centimeters away, but it does not automatically tell the robot whether the object is a wall, a chair leg, or a person. A camera image contains many pixels, but the robot still has to interpret those pixels into meaningful information. This is why robot decision making begins with a transformation: raw data becomes useful information.

Consider a simple wheeled robot moving down a hallway. Its sensors might say: front distance is short, left side is open, right side is close, battery is medium, wheels are turning slower than commanded. From these signals, the robot can derive information such as “obstacle ahead,” “space available on left,” and “possible carpet drag reducing speed.” Only after interpretation can the robot compare options like stop, turn left, or push forward. This distinction matters because poor interpretation leads to poor choices. If the robot mistakes glare for open space, it may drive into a shiny wall.

A practical decision pipeline often has four stages: observe, interpret, evaluate, act. Observe means collecting current sensor readings. Interpret means estimating what is happening in the world. Evaluate means comparing possible actions against goals and constraints. Act means sending commands to motors or other actuators. Then the cycle repeats. Feedback from the latest action becomes the next observation. In many robots, this loop runs many times per second.

Beginners often try to jump directly from sensor reading to action with no middle step. That can work for very simple tasks, but it quickly becomes fragile. A stronger design creates a small set of meaningful internal facts, such as “path blocked,” “target visible,” or “wheel slip detected.” These facts are easier to reason about than hundreds of raw measurements. They also make debugging easier. If a robot makes a poor choice, an engineer can ask: was the sensor wrong, was the interpretation wrong, or was the action selection wrong?
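The observe, interpret, evaluate, act structure can be sketched with a small set of named facts sitting between raw readings and the chosen action. The thresholds (30 cm, 50 cm, 70 percent of commanded speed) and all names here are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Readings:
    front_cm: float
    left_cm: float
    right_cm: float
    commanded_speed: float  # cm/s sent to the motors
    measured_speed: float   # cm/s estimated from the encoders


def interpret(r: Readings) -> dict:
    """Turn raw measurements into a few meaningful facts."""
    return {
        "path_blocked": r.front_cm < 30,
        "left_open": r.left_cm > 50,
        "right_open": r.right_cm > 50,
        "wheel_slip_suspected": r.measured_speed < 0.7 * r.commanded_speed,
    }


def decide(facts: dict) -> str:
    """Choose an action from interpreted facts, not raw numbers."""
    if facts["path_blocked"]:
        if facts["left_open"]:
            return "turn_left"
        if facts["right_open"]:
            return "turn_right"
        return "stop"
    if facts["wheel_slip_suspected"]:
        return "slow_down"
    return "forward"


# The hallway case: front short, left open, right close, wheels slower than commanded.
facts = interpret(Readings(front_cm=20, left_cm=120, right_cm=15,
                           commanded_speed=20, measured_speed=12))
print(facts)
print(decide(facts))  # turn_left
```

When the robot misbehaves, printing `facts` shows whether the fault lies in the readings, in `interpret`, or in `decide`.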

The practical outcome is clear: good robot choices depend on clear information flow. When engineers separate observations, interpreted information, decisions, and actions, they can improve each part independently. That structure makes behavior safer, more explainable, and easier to extend as the robot gains new sensors or new tasks.

The easiest way to understand robot decision making is with rules. A rule-based robot follows explicit instructions written by a human designer. These instructions often use if-then logic: if the obstacle is too close, then stop; if the line is drifting left, then steer left; if the battery is low, then return to the charger. Rule-based control is popular because it is simple, transparent, and predictable. You can read the rule and understand why the robot behaved as it did.

Rules are excellent for safety and for tasks with clear conditions. For example, emergency stop behavior should usually be rule-based, not learned. If a touch bumper is triggered, stop the motors immediately. That response should be fast, understandable, and reliable. Rules are also useful for getting a first prototype working. A beginner can build a wall-following robot with a handful of thresholds and actions.

However, rules have limits. Real environments are messy. Sensors fluctuate. Thresholds are hard to tune. Two rules may conflict, such as “go to target” and “avoid obstacle,” leaving the robot to hesitate or oscillate. A robot with many special-case rules can become difficult to maintain. Engineers sometimes call this “spaghetti logic,” where every new exception makes behavior harder to predict.

Good engineering judgment means using rules where they fit and not expecting them to solve everything. Keep rules small, purposeful, and ordered by importance. Safety rules should override convenience rules. It also helps to avoid using raw sensor values directly in too many places. Instead, build rules around interpreted conditions like “safe to move” or “target lost.” That reduces brittleness.
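A priority-ordered rule list is one simple way to keep rules small and let safety override convenience. The specific rules, fact names, and action names below are hypothetical.

```python
# Each rule is (name, condition, action). Earlier rules have higher priority.
RULES = [
    ("bumper",  lambda f: f["bumper_pressed"],    "stop"),
    ("unsafe",  lambda f: not f["safe_to_move"],  "stop"),
    ("battery", lambda f: f["battery_low"],       "return_to_charger"),
    ("lost",    lambda f: f["target_lost"],       "search"),
]
DEFAULT_ACTION = "go_to_target"


def choose_action(facts):
    """Return the action of the first rule whose condition holds, plus the rule name."""
    for name, condition, action in RULES:
        if condition(facts):
            return action, name
    return DEFAULT_ACTION, "default"


facts = {"bumper_pressed": True, "safe_to_move": True,
         "battery_low": True, "target_lost": False}
print(choose_action(facts))  # ('stop', 'bumper')
```

Returning the rule name alongside the action makes it easy to log why the robot behaved as it did, which helps keep a growing rule set from turning into spaghetti logic.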

Rules are only one approach. Compared with rules, model-based methods predict future outcomes using maps, motion estimates, or object relationships. Learned behavior uses data to improve decisions when hand-written rules would be too limited. In practice, robots often mix these methods. A delivery robot may use learned vision to detect pedestrians, a model to estimate stopping distance, and if-then rules to enforce a safe gap. The key lesson is not that rules are old-fashioned. It is that rules are best when the condition is clear, the response is important, and the consequences of error are high.

To make a decision, a robot needs more than sensor readings. It needs a notion of state. A state is the robot’s current situation as far as decision making is concerned. It may include position, speed, battery level, whether an object is held, whether a target is visible, or whether a task is complete. The state does not need to include every detail in the world. It only needs the information required to choose a sensible next action.

Goals define what the robot is trying to achieve. Constraints define the limits it must respect while trying to achieve that goal. For example, a warehouse robot may have the goal “deliver this bin to shelf B12” and constraints such as “do not collide,” “do not exceed speed limit,” and “keep enough battery to return safely.” Goals help the robot prefer one action over another. Constraints block actions that may be dangerous, impossible, or wasteful.

Possible actions are the options available from the current state. A mobile robot might choose from stop, move forward slowly, turn left, turn right, or back up. A robotic arm might choose to reach, grasp, lift, place, or wait. In more advanced systems, actions can include communication, such as asking a human for confirmation when confidence is low.

A useful way to think about decision making is this: the robot is in a state, it has a goal, it faces constraints, and it must select one action from several possibilities. That framing is simple but powerful. It explains why the same sensor reading may lead to different actions in different contexts. If an obstacle appears ahead while the robot is carrying a fragile object, the best action may be to stop gently. If it is exploring an empty room, it may turn and continue.

Common mistakes happen when goals are vague or constraints are ignored. If a robot is told only to “be fast,” it may take unsafe shortcuts. If it is told only to “be safe,” it may refuse to move in uncertain situations. Engineers therefore define priorities. Safety constraints usually come first, task completion second, efficiency third. That ordering helps produce behavior that is practical in the real world. A robot that understands state, goal, and action choices is already much closer to appearing intelligent, even when its control logic is still simple.

Some robot decisions are immediate reactions, but many require planning. Planning means looking ahead and choosing actions not just for the next second, but for what those actions will cause later. A robot in a maze should not simply turn away from the nearest wall forever. It should choose a path that makes progress toward an exit. A robot arm should not just move toward an object; it should choose a motion that avoids knocking over nearby items.

At a beginner level, planning can be understood as comparing possible next moves before acting. If the robot can go left, right, or forward, which option best supports the goal? The robot may use a map, a list of waypoints, or a simple score for each option. For example, forward may score well for speed but badly for safety if an obstacle is near. Left may score well for clearance but poorly for distance. The chosen action is the one with the best overall value after considering goals and constraints.
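The scoring idea can be sketched as follows: a hard constraint removes unsafe options, and the remaining options are ranked by a weighted sum of progress and clearance. The progress values, the 30 cm limit, and the weights are assumptions for illustration.

```python
# Assumed progress values: how much each move helps reach the goal (0..1).
PROGRESS = {"forward": 1.0, "left": 0.6, "right": 0.6}
MIN_CLEARANCE_CM = 30  # hard constraint: never move toward something this close


def score_action(action, distances_cm, weights):
    """Return a score for one action, or None if the constraint forbids it."""
    clearance_cm = distances_cm[action]
    if clearance_cm < MIN_CLEARANCE_CM:
        return None                            # constraint blocks the action outright
    clearance = min(clearance_cm, 100) / 100   # cap at 1 m and scale to 0..1
    return weights["progress"] * PROGRESS[action] + weights["clearance"] * clearance


def choose(distances_cm, weights):
    """Pick the highest-scoring allowed action; stop if nothing is allowed."""
    scores = {}
    for action in PROGRESS:
        s = score_action(action, distances_cm, weights)
        if s is not None:
            scores[action] = s
    if not scores:
        return "stop", scores
    return max(scores, key=scores.get), scores


distances = {"forward": 40, "left": 100, "right": 20}
action, scores = choose(distances, weights={"progress": 1.0, "clearance": 1.0})
print(action)  # left
```

Forward makes the most progress, but its low clearance drags its score below left, and right is ruled out entirely. Changing the weights changes how the robot balances speed against safety margin.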

Model-based decision making is especially helpful here. A model can be a floor map, a rule about how quickly the robot can stop, or an estimate of how wheel commands change position. With a model, the robot can predict consequences. “If I turn left now, I will likely have a clear path.” “If I accelerate on this slope, I may slip.” These predictions make planning more than guessing.
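A "rule about how quickly the robot can stop" can be written as a simple physical model: distance traveled during the reaction time, plus braking distance at constant deceleration, $d = v\,t_r + \frac{v^2}{2a}$. The reaction time, deceleration, and safety margin below are assumed values.

```python
def stopping_distance_m(speed_m_s, reaction_time_s, max_decel_m_s2):
    """Distance covered while reacting plus distance covered while braking
    at a constant deceleration."""
    return speed_m_s * reaction_time_s + speed_m_s ** 2 / (2 * max_decel_m_s2)


def safe_to_continue(speed_m_s, obstacle_m, reaction_time_s=0.2,
                     max_decel_m_s2=1.0, margin_m=0.1):
    """Predict whether the robot could still stop before the obstacle."""
    needed = stopping_distance_m(speed_m_s, reaction_time_s, max_decel_m_s2)
    return needed + margin_m < obstacle_m


print(round(stopping_distance_m(1.0, 0.2, 1.0), 2))  # 0.7
print(safe_to_continue(1.0, obstacle_m=1.0))         # True
print(safe_to_continue(1.0, obstacle_m=0.7))         # False
```

Because braking distance grows with the square of speed, halving the speed from 1.0 m/s to 0.5 m/s cuts the stopping distance from 0.7 m to about 0.23 m under these assumptions.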

Engineering judgment is important because planning must fit the robot’s resources. A tiny toy robot cannot run a massive search algorithm every millisecond. A practical design uses just enough planning for the task. Short-horizon planning is often enough for local movement, while larger route planning can happen less often. Designers also keep a fast reactive safety layer underneath the planner. If a child suddenly steps into the path, the robot should stop first and re-plan second.

The practical outcome of planning is smoother, more purposeful behavior. Instead of acting like it is surprised by every event, the robot seems to anticipate. It chooses turns that lead somewhere, manages energy better, and avoids getting trapped. Planning is simply structured foresight, and even a small amount of it makes robot decisions much more effective.

Real robots almost never know the world perfectly. Sensors are noisy, environments change, and some important facts are hidden. A camera may be blocked by shadows. A distance sensor may reflect badly from glass. Wheel encoders may suggest motion even when the wheels are slipping on gravel. Because of this, robots must choose under uncertainty. Rather than asking, “What is certainly true?”, they usually ask, “What is most likely true, and what is the safest useful action?”

This is where feedback becomes essential. After acting, the robot checks whether the world responded as expected. If it commanded a turn and the hallway did not move to the side in the camera view, perhaps the wheels slipped or the estimate was wrong. Feedback allows correction. Rather than pretending the first decision was perfect, the robot updates its understanding and tries again. This is one of the central reasons robots can improve their behavior over time, even though they never have perfect knowledge of the world.

Under uncertainty, engineers often favor conservative decisions. If the robot is unsure whether the path is clear, it may slow down. If object recognition confidence is low, it may request another camera angle. If battery estimation is uncertain, it may head to the charger earlier. These choices may seem cautious, but they improve reliability and safety.
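Two of these conservative choices are sketched below, assuming some other part of the system already provides confidence values between 0 and 1. The maximum speed, the confidence thresholds, and the function names are hypothetical.

```python
def choose_speed(path_clear_confidence, max_speed_m_s=0.5, min_confidence=0.3):
    """Slow down as confidence that the path is clear drops; stop below min_confidence."""
    if path_clear_confidence < min_confidence:
        return 0.0
    return max_speed_m_s * path_clear_confidence


def next_step(recognition_confidence, threshold=0.8):
    """Act on a recognized object only when confident; otherwise look again."""
    if recognition_confidence >= threshold:
        return "act_on_object"
    return "request_another_view"


print(choose_speed(1.0))   # 0.5
print(choose_speed(0.5))   # 0.25
print(choose_speed(0.2))   # 0.0
print(next_step(0.6))      # request_another_view
```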

Learned behavior is particularly relevant here. A learning-based system can discover patterns that are hard to capture with fixed rules, such as recognizing walkable floor in varied lighting or estimating grasp success from past attempts. But learning is not a free pass. Learned systems can be confidently wrong. They need testing, guardrails, and fallback rules. In many applications, the best design is hybrid: learning for perception or scoring options, rules for safety, and models for prediction.

A common beginner mistake is to demand certainty before acting. That can freeze the robot. Another mistake is to ignore uncertainty and act too aggressively. Strong robot design sits between those extremes. It estimates, chooses, measures the outcome, and adapts. That loop is what turns imperfect sensors into useful behavior in the real world.

Let us follow a simple robot step by step. Imagine a small indoor delivery robot whose job is to carry a snack box from a table to a marked station across a room. It has a front distance sensor, left and right distance sensors, wheel encoders, a basic camera that can detect the station marker, and a battery sensor. Its goal is to reach the station. Its constraints are: do not collide, do not tip the box, and do not run out of battery.

Step 1: observe. The robot reads its sensors. It sees open space ahead, a chair leg on the right, the station marker slightly to the left, and battery at 35%. Step 2: interpret. From the readings, it forms information: path mostly clear, right side crowded, target visible left of center, battery sufficient for short trip. Step 3: list possible actions. It can move forward, turn left slightly, turn right slightly, stop, or back up. Step 4: evaluate using goals and constraints. Turning right would move away from the target and toward the chair leg, so it scores poorly. Moving straight is acceptable, but a slight left turn both aligns with the marker and maintains clearance. Step 5: act. The robot turns left slightly and moves forward slowly.

Now feedback arrives. The marker grows larger, but the front sensor suddenly reports a close obstacle: a backpack has been left on the floor. The robot repeats its loop. New information says path blocked. The safety rule takes priority: do not collide. The robot stops. Then it compares alternatives. The left side is open, the right side is narrow, and the marker is still visible beyond the obstacle. It chooses to steer left around the backpack at reduced speed. After passing it, the robot straightens and heads toward the station.

Suppose the camera briefly loses the station marker because of glare. A fragile design might fail here. A stronger design uses state and planning. The robot remembers the marker was ahead-left and keeps moving cautiously while scanning. If the marker remains lost for too long, it slows further or stops to search rather than wandering blindly. If the battery estimate drops below a threshold, a higher-priority rule may interrupt the delivery and send the robot to charge.
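The "remember, keep moving cautiously, then stop and search" behavior, with the battery rule taking priority, can be sketched as a small class that keeps state between cycles. The 2 second search timeout, the 20 percent battery threshold, the bearing sign convention, and the action names are all assumptions.

```python
class MarkerTracker:
    """Remember where the station marker was last seen and react when it goes missing."""

    def __init__(self, search_after_s=2.0, battery_threshold_pct=20):
        self.search_after_s = search_after_s
        self.battery_threshold_pct = battery_threshold_pct
        self.last_bearing_deg = None  # assumed: negative = left of center
        self.last_seen_s = None

    def step(self, time_s, marker_bearing_deg, battery_pct):
        if battery_pct < self.battery_threshold_pct:
            return "go_charge"  # higher-priority rule interrupts the delivery
        if marker_bearing_deg is not None:
            self.last_bearing_deg = marker_bearing_deg
            self.last_seen_s = time_s
            return "steer_toward_marker"
        if self.last_seen_s is None:
            return "stop_and_search"  # never seen it yet
        if time_s - self.last_seen_s <= self.search_after_s:
            return "creep_toward_last_bearing"  # brief loss: keep going slowly
        return "stop_and_search"  # lost too long: do not wander blindly


tracker = MarkerTracker()
print(tracker.step(0.0, -10, 35))    # steer_toward_marker
print(tracker.step(1.0, None, 35))   # creep_toward_last_bearing (glare)
print(tracker.step(3.5, None, 35))   # stop_and_search
print(tracker.step(4.0, -5, 15))     # go_charge
```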

This example shows the full decision process in action: sensing, interpreting, comparing options, applying goals and constraints, acting, and using feedback to adjust. It also shows why practical robotics mixes methods. Rules handled safety. A simple model of direction helped with alignment. Repeated feedback kept the behavior stable. That is robot decision making in its most useful beginner form: not mystical intelligence, but a disciplined loop that turns sensor inputs into sensible choices.

1. Which sequence best describes the robot decision workflow in this chapter?

2. What is the difference between data and information in robot decision making?

3. Which example best matches a model-based decision system?

4. According to the chapter, what is the role of goals and constraints?

5. Which choice reflects strong robot decision design rather than a beginner mistake?

So far, we have seen that robots sense the world, turn sensor readings into useful information, make decisions, and then act. This chapter adds an important idea: a robot does not always need to follow exactly the same fixed rules forever. In many systems, the robot can improve its behavior by using experience. That improvement is what we mean by learning.

For a robot, learning is not magic and it is not human-like understanding. It is a practical engineering process that changes future behavior based on past data. A robot may learn to recognize objects better from camera images, drive more smoothly after many attempts, or adjust its grasping force after repeated successes and failures. In each case, the robot collects signals from the world, compares outcomes with what was expected, and updates some internal model or policy. That update helps it make better decisions later.

Learning matters because real environments are messy. Sensors are noisy, lighting changes, floors are slippery, and people move unpredictably. A rule-based controller can be precise and reliable when conditions are known in advance, but it often becomes brittle when reality differs from the designer’s assumptions. Learning-based methods help the robot adapt. They do not replace sensing, decision-making, or action. Instead, they improve how those stages connect.

In practice, robot learning usually depends on three ingredients: examples, rewards, and feedback. Examples show the robot what correct outputs look like. Rewards tell the robot whether an outcome was good or bad. Feedback is the broader loop that measures performance and guides improvement over time. Engineers choose among these methods depending on the task, the available data, the safety limits, and the cost of making mistakes.

This chapter compares three major learning ideas used in robotics: supervised learning, unsupervised learning, and reinforcement learning. These are not just academic labels. They reflect different ways a robot can use experience. Supervised learning uses examples with correct answers. Unsupervised learning searches for structure in data without labels. Reinforcement learning improves behavior through trial and error using rewards. Across all three, the goal is the same: turn experience into better decisions and more useful actions.

Good engineering judgment is essential here. A learning system can perform impressively in a lab and fail badly in a warehouse, hospital, or farm if it was trained on narrow data, measured with the wrong success metric, or deployed without safety checks. Learning is powerful, but it works best when combined with careful sensor design, clear objectives, testing, and fallback rules. A smart robot is not merely one that learns. It is one that learns in a controlled, measurable, and dependable way.

As you read the sections in this chapter, keep one practical question in mind: what exactly is changing inside the robot after experience? Sometimes it is a classifier that interprets sensor inputs. Sometimes it is a map of likely patterns in the environment. Sometimes it is a policy that connects situations to actions. Whatever the mechanism, the central idea is simple. Experience changes future behavior.

Practice note for Understand what learning means for a robot: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for See the role of examples, rewards, and feedback: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Compare supervised, unsupervised, and reinforcement ideas: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Connect learning to better robot decisions: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

When engineers say that a robot learns, they mean that its behavior changes because of experience rather than only because of hand-written rules. This definition is useful because it is concrete. We can observe what the robot did before training, what happened during operation, and what it does after training. If its future choices improve in a measurable way, learning has occurred.

Consider a mobile robot that keeps drifting too close to walls. A rule-based system might use a fixed threshold from a distance sensor: if the robot is within 30 centimeters of a wall, turn away. That rule may work on one floor and fail on another because of wheel slip, sensor placement, or speed changes. A learning system can use many examples of sensor readings and outcomes to adjust how strongly it reacts in different situations. Over time, the robot may begin turning earlier at higher speeds or behaving differently in narrow hallways than in open areas.
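One very simple form of this kind of learning is fitting a line to logged experience, so the turning distance depends on speed instead of being a fixed 30 cm. The logged numbers below are invented for illustration, not measured data, and real systems may use far richer models than a straight-line fit.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = a + b * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx
    a = mean_y - b * mean_x
    return a, b


# Hypothetical log: robot speed (cm/s) and the wall distance (cm) at which a turn
# had to start to keep a safe gap. Made-up numbers for illustration only.
speeds = [10, 20, 30, 40]
turn_start_cm = [25, 32, 38, 45]

a, b = fit_line(speeds, turn_start_cm)


def turn_threshold_cm(speed_cm_s):
    """Learned replacement for a fixed threshold: turn earlier when moving faster."""
    return a + b * speed_cm_s


print(round(a, 2), round(b, 2))         # 18.5 0.66
print(round(turn_threshold_cm(50), 1))  # 51.5
```

The sense, decide, act cycle is unchanged; only the mapping from speed to turning distance was updated from experience, and collecting new logs on a different floor would update it again.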

This shows an important point: learning does not remove the sense-decide-act cycle. The robot still uses sensors, still forms information from data, still chooses an action, and still acts through motors. What changes is the mapping between the sensed situation and the chosen response. Learning refines that mapping.

In robotics, the thing being updated might be a model, a controller parameter set, a decision policy, or a prediction system. For example, a robot arm may update a model that predicts how much force is needed to lift different objects. A warehouse robot may update a classifier that recognizes pallets from camera images. A drone may tune a controller to better handle wind disturbances. These are different technical systems, but they all fit the same definition: behavior changes using experience.

A common mistake is to imagine that all changes count as learning. Simple recalibration, such as resetting a sensor bias each morning, may improve performance, but it is usually not called learning because it does not use accumulated experience to generalize to future situations. Another mistake is to assume that learning guarantees improvement in every condition. A robot can learn the wrong lesson if the data is biased, incomplete, or collected in a narrow environment. That is why engineers define success carefully and measure performance with test cases, not just training runs.

Practical robot learning starts with a clear target. What should improve: speed, safety, accuracy, energy use, object recognition, path quality, or grasp success? Without a target, learning becomes vague and hard to evaluate. In real projects, teams choose a measurable objective, collect data tied to that objective, then decide what aspect of behavior should adapt. Learning is useful when it leads to better decisions, not merely more computation.

Supervised learning is the approach most closely tied to examples. In this method, the robot is given input data along with correct answers, often called labels. The system studies many input-output pairs and learns a rule for predicting the right output when it sees new inputs later. In robotics, these inputs often come from sensors such as cameras, lidars, microphones, force sensors, or joint encoders.

Imagine a sorting robot on a conveyor belt. A camera captures images of items, and each image has a label such as metal, plastic, damaged, or safe to pack. The learning system trains on many labeled examples. Later, when a new item appears, the robot predicts its class and chooses an action, such as placing it in the correct bin. The value of supervised learning is that it can handle rich sensor data that would be hard to process with simple if-then rules alone.
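To make this concrete, here is a minimal supervised-learning sketch using a k-nearest-neighbor classifier, one of the simplest techniques that learns from labeled examples. It assumes each item has already been reduced to two hypothetical numeric features (called `shininess` and `color_uniformity`, both scaled 0 to 1). A real sorting robot would more likely use a trained image model. The labels, feature values, and bin names are invented for illustration.

```python
import math
from collections import Counter

# Hypothetical labeled examples: (shininess, color_uniformity) -> label.
TRAINING_EXAMPLES = [
    ((0.90, 0.80), "metal"),
    ((0.85, 0.70), "metal"),
    ((0.80, 0.75), "metal"),
    ((0.20, 0.10), "plastic"),
    ((0.25, 0.15), "plastic"),
    ((0.30, 0.12), "plastic"),
]

# Decision step: which bin each predicted class goes to (assumed layout).
BIN_FOR_CLASS = {"metal": "bin A", "plastic": "bin B"}


def predict(features, k=3):
    """Label a new item by majority vote among its k closest training examples."""
    nearest = sorted(TRAINING_EXAMPLES, key=lambda ex: math.dist(features, ex[0]))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


def choose_bin(features):
    """Turn the predicted class into an action: where to place the item."""
    return BIN_FOR_CLASS[predict(features)]


print(predict((0.88, 0.76)), choose_bin((0.22, 0.14)))
```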

Another example is a delivery robot that learns to detect pedestrians, curbs, doors, or floor markings from camera images. The labels teach the system what features matter. During training, the model adjusts its internal parameters to reduce prediction errors. During operation, those predictions support decisions like slowing down, rerouting, or stopping.
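The idea of a model adjusting its internal parameters to reduce prediction errors can be shown with a tiny logistic-regression model trained by gradient descent. This is a deliberately small stand-in. It assumes a single hypothetical input feature (for example, the normalized height of a detected shape) and a yes/no "pedestrian" label. Real pedestrian detectors are far larger, but the training idea of repeatedly nudging parameters in the direction that lowers the error is similar in spirit.

```python
import math

# Hypothetical training data: one normalized feature per detection and a
# label: 1 = pedestrian, 0 = not a pedestrian.
XS = [0.1, 0.2, 0.3, 0.7, 0.8, 0.9]
YS = [0, 0, 0, 1, 1, 1]


def sigmoid(z):
    """Squash any number into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def loss(w, b):
    """Average cross-entropy: large when confident predictions are wrong."""
    total = 0.0
    for x, y in zip(XS, YS):
        p = sigmoid(w * x + b)
        total -= y * math.log(p) + (1 - y) * math.log(1 - p)
    return total / len(XS)


def train(steps=2000, learning_rate=1.0):
    """Gradient descent: repeatedly nudge w and b to reduce the loss."""
    w, b = 0.0, 0.0
    for _ in range(steps):
        grad_w = grad_b = 0.0
        for x, y in zip(XS, YS):
            error = sigmoid(w * x + b) - y  # prediction error on this example
            grad_w += error * x
            grad_b += error
        w -= learning_rate * grad_w / len(XS)
        b -= learning_rate * grad_b / len(XS)
    return w, b


w, b = train()
print(f"loss before: {loss(0.0, 0.0):.3f}, after: {loss(w, b):.3f}")
print("pedestrian probability at 0.85:", round(sigmoid(w * 0.85 + b), 2))
```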

Engineering judgment matters a great deal here. The quality of the labels often matters as much as the quantity of the data. If images are mislabeled, the robot learns incorrect associations. If the data only includes bright indoor scenes, the robot may fail outdoors or at night. If the examples mostly contain one type of object, the model may perform poorly on rare but important cases. These are not minor details. They directly affect safety and usefulness.

Supervised learning also creates a useful connection between data, information, decisions, and actions. Raw sensor data becomes labeled training data. The model turns future sensor readings into information such as object identity or estimated position. That information supports a decision, and the decision leads to an action. The robot does not learn everything at once. Often it learns one piece of the pipeline, such as recognizing objects, while more traditional software handles planning and control.
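A minimal sketch of that division of labor follows. A learned perception component feeds hand-written decision rules and a simple action mapping. `learned_perception` is a hypothetical placeholder for a trained model, and the labels, the 0.6 confidence cutoff, and the speeds are illustrative assumptions.

```python
def learned_perception(sensor_reading):
    """Placeholder for a trained model: returns (label, confidence).

    A real system would run a trained classifier here; this stub just
    reads precomputed values so the rest of the pipeline can be shown.
    """
    return sensor_reading["label"], sensor_reading["confidence"]


def decide(label, confidence):
    """Traditional, hand-written decision rules on top of learned information."""
    if label == "pedestrian":
        return "stop"
    if confidence < 0.6:
        return "slow_down"  # unsure what is ahead, so be cautious
    return "continue"


# Action step: map each decision to a (hypothetical) forward speed in m/s.
SPEED_FOR_DECISION = {"stop": 0.0, "slow_down": 0.3, "continue": 1.0}


def run_pipeline(sensor_reading):
    label, confidence = learned_perception(sensor_reading)  # data -> information
    decision = decide(label, confidence)                    # information -> decision
    return SPEED_FOR_DECISION[decision]                     # decision -> action


print(run_pipeline({"label": "pedestrian", "confidence": 0.55}))     # 0.0
print(run_pipeline({"label": "floor_marking", "confidence": 0.95}))  # 1.0
```

Keeping the decision rules outside the learned model makes them easy to read and test on their own.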

Common mistakes include training on data that is too clean, too limited, or too different from real operation. Another mistake is measuring only average accuracy. In robotics, a single rare failure can outweigh many routine successes: missing a pedestrian once matters more than correctly classifying boxes all afternoon. Good practice includes collecting diverse examples, reviewing edge cases, and testing how prediction errors affect real robot actions.
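The gap between average accuracy and safety-relevant performance is easy to see with made-up numbers. In this sketch (hypothetical predictions, not real results), a detector gets 97 out of 100 examples right yet finds only 2 of 5 pedestrians. Per-class recall, the fraction of true cases of a class that the model actually detected, exposes the problem that overall accuracy hides.

```python
def accuracy(truth, predicted):
    """Fraction of all predictions that match the true label."""
    return sum(t == p for t, p in zip(truth, predicted)) / len(truth)


def recall(truth, predicted, cls):
    """Fraction of true `cls` examples that were predicted as `cls`."""
    hits = [p == cls for t, p in zip(truth, predicted) if t == cls]
    return sum(hits) / len(hits)


# Hypothetical evaluation: 95 boxes (all correct), 5 pedestrians (3 missed).
truth = ["box"] * 95 + ["pedestrian"] * 5
predicted = ["box"] * 95 + ["pedestrian"] * 2 + ["box"] * 3

print(f"accuracy: {accuracy(truth, predicted):.2f}")
print(f"pedestrian recall: {recall(truth, predicted, 'pedestrian'):.2f}")
```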

Supervised learning is powerful because it lets robots benefit from human knowledge embedded in examples. But it depends on labeled data, and labeling can be expensive. That is one reason engineers also use methods that do not require explicit correct answers.

Unsupervised learning looks for structure in data when no labels are provided. Instead of being told the correct answer for each input, the robot searches for regularities, groups, or compact descriptions. This is useful when data is abundant but labeling is difficult or impossible. Robots produce large amounts of sensor data, so methods that discover patterns automatically can be very practical.

Suppose a service robot moves through an office building every day. It records depth readings, camera images, and motion data. No one manually labels every hallway, desk, chair, or doorway. Even so, the robot may find recurring patterns. It may cluster similar places, detect common movement routes, or identify unusual sensor readings that do not match normal conditions. This can support tasks such as mapping, anomaly detection, or environment understanding.

For example, a robot may group objects by shape and size before a human ever names those groups. A warehouse robot might notice that certain visual features often appear together and correspond to shelves, pallets, or empty floor regions. An agricultural robot may detect patterns that separate healthy plants from unusual ones even before a specialist provides exact categories. In each case, the robot is not learning labeled answers. It is organizing experience into a useful internal structure.
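One common way to find groups without labels is k-means clustering. The sketch below is a minimal version under simplifying assumptions. Objects are described by two hypothetical measurements (width and height in meters), the number of groups `k` is chosen by the engineer, and the starting centers are simply the first `k` points. Production code would normally use a library implementation with better initialization. The algorithm only returns groups; a person still decides whether to call them "small parts" and "large boxes".

```python
import math

# Hypothetical unlabeled measurements: (width_m, height_m) of objects seen.
POINTS = [
    (0.10, 0.12), (0.50, 0.60), (0.12, 0.10),
    (0.11, 0.09), (0.52, 0.58), (0.48, 0.62),
]


def kmeans(points, k, iterations=10):
    """Group points into k clusters by repeatedly moving each center to its members' mean."""
    centers = list(points[:k])  # naive initialization: the first k points
    clusters = []
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        # Assignment step: each point joins its nearest center.
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centers[i]))
            clusters[nearest].append(p)
        # Update step: move each center to the average of its members.
        for i, members in enumerate(clusters):
            if members:
                centers[i] = tuple(sum(coord) / len(members) for coord in zip(*members))
    return centers, clusters


centers, clusters = kmeans(POINTS, k=2)
for center, members in zip(centers, clusters):
    print("group center", tuple(round(c, 2) for c in center), "size", len(members))
```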

This kind of learning helps decision-making indirectly. The output may not be a final command like turn left or grasp now. Instead, it produces better internal information. If a robot can separate normal sensor patterns from abnormal ones, it can trigger inspection or caution. If it can compress high-dimensional sensor inputs into simpler features, later decision systems can work more efficiently. If it can cluster locations into meaningful regions, navigation becomes more organized.
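A simple way to separate normal from abnormal readings is to learn the typical mean and spread of a sensor from unlabeled everyday data, then flag readings that fall unusually far from it. The sketch assumes hypothetical motor-current readings in amperes and a threshold of three standard deviations. Both are illustrative choices, and real anomaly detectors are often more elaborate.

```python
import statistics

# Unlabeled readings collected during normal operation (hypothetical, amperes).
NORMAL_READINGS = [1.02, 0.98, 1.01, 0.99, 1.00, 1.03, 0.97]

# "Learning" here means summarizing what normal looks like.
MEAN = statistics.mean(NORMAL_READINGS)
STDEV = statistics.stdev(NORMAL_READINGS)


def is_anomalous(reading, threshold=3.0):
    """Flag a reading more than `threshold` standard deviations from normal."""
    return abs(reading - MEAN) / STDEV > threshold


for reading in (1.01, 1.50):
    action = "trigger inspection" if is_anomalous(reading) else "carry on"
    print(reading, action)
```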

A common mistake is expecting unsupervised learning to automatically produce human-friendly categories. The discovered patterns may be mathematically consistent but not operationally useful. Engineers must check whether the learned structure actually helps the robot do something better. Another mistake is forgetting that sensor noise can create false patterns. If a camera has a persistent artifact, the robot may cluster images based on the artifact rather than the environment. Careful preprocessing and validation matter.

In practical robotics, unsupervised learning is often a supporting tool rather than a complete decision system. It can help summarize data, reduce complexity, discover hidden regularities, and flag novelty. Those gains can improve later planning and control. Even without labels, experience still changes how the robot interprets the world, and that can lead to better choices.

Reinforcement learning takes a different approach. Instead of learning from labeled examples, the robot learns by trying actions and receiving rewards or penalties based on outcomes. The main question is not “What is the correct label?” but “Was that action good or bad in this situation?” Over many attempts, the robot aims to choose actions that produce higher long-term reward.
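"Long-term reward" is often made precise as a discounted sum of future rewards. The discount factor `gamma` (between 0 and 1) is a standard reinforcement-learning assumption that this text does not itself introduce. It makes rewards that arrive sooner count a little more than rewards that arrive later. A minimal sketch, with made-up reward sequences:

```python
def discounted_return(rewards, gamma=0.9):
    """Sum of rewards, where a reward t steps in the future is scaled by gamma**t."""
    return sum((gamma ** t) * r for t, r in enumerate(rewards))


# Two hypothetical action sequences that both end at the target (+10),
# with a -1 penalty for every step spent getting there.
direct_route = [-1, -1, 10]
wandering_route = [-1, -1, -1, -1, -1, 10]

print(discounted_return(direct_route))     # about 6.2
print(discounted_return(wandering_route))  # about 1.81
```

The direct route scores higher, so an agent maximizing this quantity is pushed toward reaching the goal without wasting steps.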

Picture a robot learning to navigate a room. It receives a positive reward for reaching a target and a negative reward for bumping into obstacles or wasting time. At first, its behavior may be poor. It tries many actions, some useful and some not. But as it gathers experience, it learns which action sequences tend to work. Eventually, it may develop a policy: a strategy for choosing actions from the current sensed state.
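One classic way to learn such a policy by trial and error is tabular Q-learning; the text does not prescribe a specific algorithm, so treat this as one illustrative choice. The sketch assumes a toy "room" reduced to a corridor of five cells. The target is in the last cell, every move costs -1 for wasted time, bumping into the left wall costs -5, and reaching the target earns +10. The learning rate `alpha`, discount `gamma`, and exploration rate `epsilon` are illustrative values.

```python
import random

N_STATES = 5          # corridor cells 0..4
GOAL = 4              # target cell
ACTIONS = ("left", "right")


def step(state, action):
    """Toy environment: returns (next_state, reward)."""
    if action == "left":
        if state == 0:
            return 0, -5.0  # bumped into the wall
        return state - 1, -1.0
    nxt = state + 1
    return nxt, (10.0 if nxt == GOAL else -1.0)


def train(episodes=300, alpha=0.5, gamma=0.9, epsilon=0.3, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap episode length
            # Explore sometimes; otherwise pick the action that looks best so far.
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])
            nxt, reward = step(state, action)
            best_next = 0.0 if nxt == GOAL else max(q[(nxt, a)] for a in ACTIONS)
            # Move the estimate toward reward + discounted future value.
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = nxt
            if state == GOAL:
                break
    return q


def policy(q):
    """The learned strategy: best action in each non-goal state."""
    return {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES) if s != GOAL}


q = train()
print(policy(q))
```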

This method is especially attractive in robotics because many tasks involve sequences of decisions. A robot arm does not succeed at grasping because of one motor command alone. It must approach, align, close the gripper, sense contact, and lift. A walking robot must continuously adjust balance. A drone must react to changing wind while still heading toward a goal. Reinforcement learning can optimize this chain of choices when the final outcome matters more than any single step.

However, trial and error in the real world is expensive. Real robots can break hardware, waste time, drain batteries, or create unsafe situations. For that reason, engineers often begin in simulation, where the robot can practice many times without physical damage. Even then, simulation has limits. A policy that works in a simplified virtual world may fail on a real floor with imperfect friction, delays, or sensor noise. Transferring learning from simulation to reality requires care.

Reward design is also critical. If the reward is too simple, the robot may find a shortcut that technically increases reward but misses the true goal. For example, a cleaning robot rewarded only for speed may rush and miss dirt. A robot rewarded only for staying upright may learn to stand still instead of walking. Engineers must define rewards that reflect the actual objective, including safety, efficiency, and task completion.
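The cleaning-robot example can be sketched by comparing two reward functions on two hypothetical runs. The weights in `balanced_reward` are invented; choosing them is itself an engineering judgment that should be checked against the real objective.

```python
def speed_only_reward(run):
    """Naive reward: faster is always better."""
    return -run["seconds"]


def balanced_reward(run, w_dirt=1.0, w_time=0.01, w_collision=5.0):
    """Reward cleaning, lightly penalize time, strongly penalize collisions."""
    return (
        w_dirt * run["dirt_grams"]
        - w_time * run["seconds"]
        - w_collision * run["collisions"]
    )


# Hypothetical runs: one rushed, one careful.
rushed = {"seconds": 60, "dirt_grams": 5, "collisions": 2}
careful = {"seconds": 180, "dirt_grams": 40, "collisions": 0}

for reward in (speed_only_reward, balanced_reward):
    best = max([("rushed", rushed), ("careful", careful)], key=lambda pair: reward(pair[1]))
    print(reward.__name__, "prefers", best[0])
```

The speed-only reward prefers the rushed run that misses most of the dirt, which is exactly the shortcut described above.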

Reinforcement learning highlights the role of feedback very clearly. The robot acts, observes the result, receives reward information, updates its strategy, and tries again. This loop connects sensing, decisions, and action in a continuous cycle of improvement. It can produce impressive adaptive behavior, but only when exploration, safety constraints, and evaluation are managed carefully.

Learning is not only about choosing an algorithm. It is a workflow. A practical robot learning project usually includes data collection, training, testing, deployment, monitoring, and revision. Skipping any of these steps often leads to systems that look good in demonstrations and perform poorly in practice.

Training is the phase where the robot or its model updates internal parameters from experience. In supervised learning, this means fitting the model to labeled examples. In unsupervised learning, it means discovering structure in raw data. In reinforcement learning, it means improving a policy from reward feedback. But training alone does not prove usefulness. A robot can appear excellent on the data it already saw and still fail in new conditions. That is why testing matters.

Testing asks whether the learned behavior works on unseen data or new situations. For a robot, that may include different lighting, floor surfaces, object types, speeds, or sensor noise levels. Engineers often separate data into training and test sets so they can estimate whether the model generalizes. In physical robotics, they also run real-world trials because software metrics alone do not capture every failure mode. A perception model with high image accuracy may still lead to poor navigation if its errors occur in dangerous moments.
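The danger of judging a model only on data it already saw can be shown with two toy models and a held-out test set. The data are hypothetical distance readings labeled "near" or "far". The split rule (every fourth example goes to the test set) is an illustrative assumption; real projects usually shuffle before splitting.

```python
# Hypothetical distance readings (m). Note the clean gap between 0.40 and
# 0.60: real data is rarely this tidy.
NEAR = [0.10, 0.15, 0.20, 0.25, 0.30, 0.35, 0.40]
FAR = [0.60, 0.65, 0.70, 0.75, 0.80, 0.85, 0.90]
DATA = [(d, "near") for d in NEAR] + [(d, "far") for d in FAR]

# Hold out every 4th example for testing (fixed rule keeps this reproducible).
TEST = [ex for i, ex in enumerate(DATA) if i % 4 == 0]
TRAIN = [ex for i, ex in enumerate(DATA) if i % 4 != 0]


def accuracy(model, examples):
    return sum(model(d) == label for d, label in examples) / len(examples)


# Model 1: memorize the training set exactly (returns None for unseen inputs).
MEMORY = dict(TRAIN)


def memorizer(distance):
    return MEMORY.get(distance)


# Model 2: learn one threshold halfway between the two class averages.
near_train = [d for d, label in TRAIN if label == "near"]
far_train = [d for d, label in TRAIN if label == "far"]
THRESHOLD = (sum(near_train) / len(near_train) + sum(far_train) / len(far_train)) / 2


def threshold_model(distance):
    return "near" if distance < THRESHOLD else "far"


for name, model in [("memorizer", memorizer), ("threshold", threshold_model)]:
    print(name, "train:", accuracy(model, TRAIN), "test:", accuracy(model, TEST))
```

Both models score perfectly on the training data, but only the threshold model, which captured a general pattern, still works on the held-out readings.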

Feedback supports ongoing improvement after the first deployment. If a robot repeatedly struggles on glossy surfaces, at crowded intersections, or with unusual object shapes, engineers can collect those difficult cases and use them to retrain or refine the system. This is one of the most practical ideas in robot learning: failures are valuable information when they are measured and fed back into development.

Good engineering practice includes choosing meaningful metrics. Depending on the task, useful measures might include collision rate, grasp success, path efficiency, battery use, false alarm rate, or recovery time after error. A common mistake is optimizing the easiest metric rather than the most important one. Another mistake is changing many parts of the system at once, making it hard to know what actually improved performance.
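Turning trial logs into metrics such as collision rate and success rate, and into a list of hard cases worth feeding back into training, can be as simple as the sketch below. The log format and field names are hypothetical; a real team would read them from its own logging system.

```python
from collections import Counter

# Hypothetical log of real-world navigation trials.
TRIALS = [
    {"surface": "matte", "collided": False, "reached_goal": True, "seconds": 40.0},
    {"surface": "matte", "collided": False, "reached_goal": True, "seconds": 44.0},
    {"surface": "glossy", "collided": True, "reached_goal": False, "seconds": 60.0},
    {"surface": "glossy", "collided": False, "reached_goal": True, "seconds": 50.0},
    {"surface": "matte", "collided": False, "reached_goal": True, "seconds": 41.0},
]


def failed(trial):
    return trial["collided"] or not trial["reached_goal"]


def rate(trials, key):
    """Fraction of trials where the boolean field `key` is True."""
    return sum(t[key] for t in trials) / len(trials)


def hard_cases(trials):
    """Failed trials, set aside as candidates for new training data."""
    return [t for t in trials if failed(t)]


def failures_by_condition(trials, condition="surface"):
    """Count failures per operating condition to see where the robot struggles."""
    return Counter(t[condition] for t in hard_cases(trials))


print("collision rate:", rate(TRIALS, "collided"))
print("success rate:", rate(TRIALS, "reached_goal"))
print("failures by surface:", failures_by_condition(TRIALS))
```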

Improvement in robotics is usually incremental. Teams train a model, test it, inspect failures, adjust sensors or data, retrain, and repeat. Sometimes the right decision is not to use more learning at all, but to add a simple rule or safety constraint around the learned component. In real systems, dependable performance often comes from combining learned behavior with traditional engineering checks. Learning adds flexibility, while testing and feedback keep that flexibility under control.
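A minimal sketch of wrapping a learned component in simple, hand-written safety checks follows. It assumes a hypothetical learned controller that outputs a forward speed command. The 0.5 m/s limit and the 0.3 m stop distance are illustrative; real limits come from the robot's safety requirements.

```python
MAX_SPEED_MPS = 0.5      # assumed hard speed limit
STOP_DISTANCE_M = 0.3    # assumed minimum clearance before forcing a stop


def safe_speed(learned_speed_mps, nearest_obstacle_m):
    """Pass the learned speed through, but never exceed the rule-based limits."""
    if nearest_obstacle_m < STOP_DISTANCE_M:
        return 0.0  # safety rule overrides whatever the model suggested
    return max(-MAX_SPEED_MPS, min(MAX_SPEED_MPS, learned_speed_mps))


print(safe_speed(2.0, nearest_obstacle_m=5.0))   # clipped to 0.5
print(safe_speed(0.4, nearest_obstacle_m=0.1))   # forced stop: 0.0
```

The learned part still chooses the speed in normal conditions; the wrapper only intervenes at the edges, which keeps its behavior easy to verify.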

Learning can improve robot behavior, but it has limits. Understanding those limits is part of responsible robotics engineering. A robot does not learn in a vacuum. It depends on sensors, data quality, hardware reliability, operating conditions, and the way success is defined. If any of these are weak, learning can amplify the weakness rather than solve it.

One major limit is data coverage. A robot trained in one environment may not perform well in another. A delivery robot that learned on dry sidewalks may struggle in rain. A grasping system trained on boxes may fail on soft bags or transparent containers. This gap between training conditions and deployment conditions is one of the most common causes of poor real-world performance. Learning systems are often strongest in environments similar to what they experienced before.
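A crude but useful guard against this gap is to record the range of each input seen during training and flag live readings that fall outside it. The input names and ranges below are hypothetical. Real systems often use more sophisticated out-of-distribution detection, but even a range check catches obvious cases like a robot trained on dry, well-lit sidewalks meeting a rainy night.

```python
# Ranges observed in the (hypothetical) training data for each input.
TRAINING_RANGES = {
    "light_lux": (200.0, 800.0),
    "wheel_slip": (0.0, 0.05),
}


def outside_coverage(reading):
    """Return the inputs whose values fall outside what training data covered."""
    return [
        name
        for name, (low, high) in TRAINING_RANGES.items()
        if not low <= reading[name] <= high
    ]


rainy_night = {"light_lux": 20.0, "wheel_slip": 0.12}
print(outside_coverage(rainy_night))  # ['light_lux', 'wheel_slip']
```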

Another limit is safety during exploration and adaptation. Trial-and-error methods can be powerful, but physical robots cannot explore as freely as software can. A mistaken action may damage equipment or hurt people. For this reason, learning in robotics is often constrained by fallback controllers, emergency stop systems, speed limits, and protected training spaces. These constraints are not signs of weakness. They are signs of good engineering.

Interpretability is also a practical issue. Some learned models are hard to inspect. If a robot makes a poor decision, engineers may not easily know whether the cause was bad data, poor reward design, hidden bias, sensor failure, or simple randomness. Rule-based systems are often easier to debug, while learning-based systems may be more adaptable. This trade-off explains why many real robots use a hybrid design: explicit rules for safety-critical parts and learning for perception or optimization.

There is also the problem of maintenance. A learned model is never truly finished. Sensors age, environments change, and tasks evolve. A robot in a factory may face new packaging designs. A farm robot may see different crops by season. A hospital robot may encounter changed layouts and procedures. If the learning system is never updated, performance can decline. Continuous monitoring and periodic retraining are often necessary.
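Continuous monitoring can start with something as small as a rolling success rate. The sketch below is a minimal example under assumed settings: the class name `PerformanceMonitor`, the 20-task window, and the 0.8 alert level are all hypothetical choices, and a flag only means a human should look into retraining, not that retraining happens automatically.

```python
from collections import deque


class PerformanceMonitor:
    """Tracks recent task outcomes and flags when the success rate drops."""

    def __init__(self, window=20, alert_below=0.8):
        self.results = deque(maxlen=window)  # keeps only the most recent outcomes
        self.alert_below = alert_below

    def record(self, success):
        self.results.append(bool(success))

    def success_rate(self):
        return sum(self.results) / len(self.results) if self.results else None

    def needs_retraining_review(self):
        """True once a full window of results falls below the alert level."""
        full = len(self.results) == self.results.maxlen
        return full and self.success_rate() < self.alert_below


monitor = PerformanceMonitor()
for _ in range(20):
    monitor.record(True)       # robot performing well
print(monitor.needs_retraining_review())  # False
for _ in range(10):
    monitor.record(False)      # e.g. new packaging designs start causing failures
print(monitor.needs_retraining_review())  # True
```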