Career Transitions Into AI — Intermediate

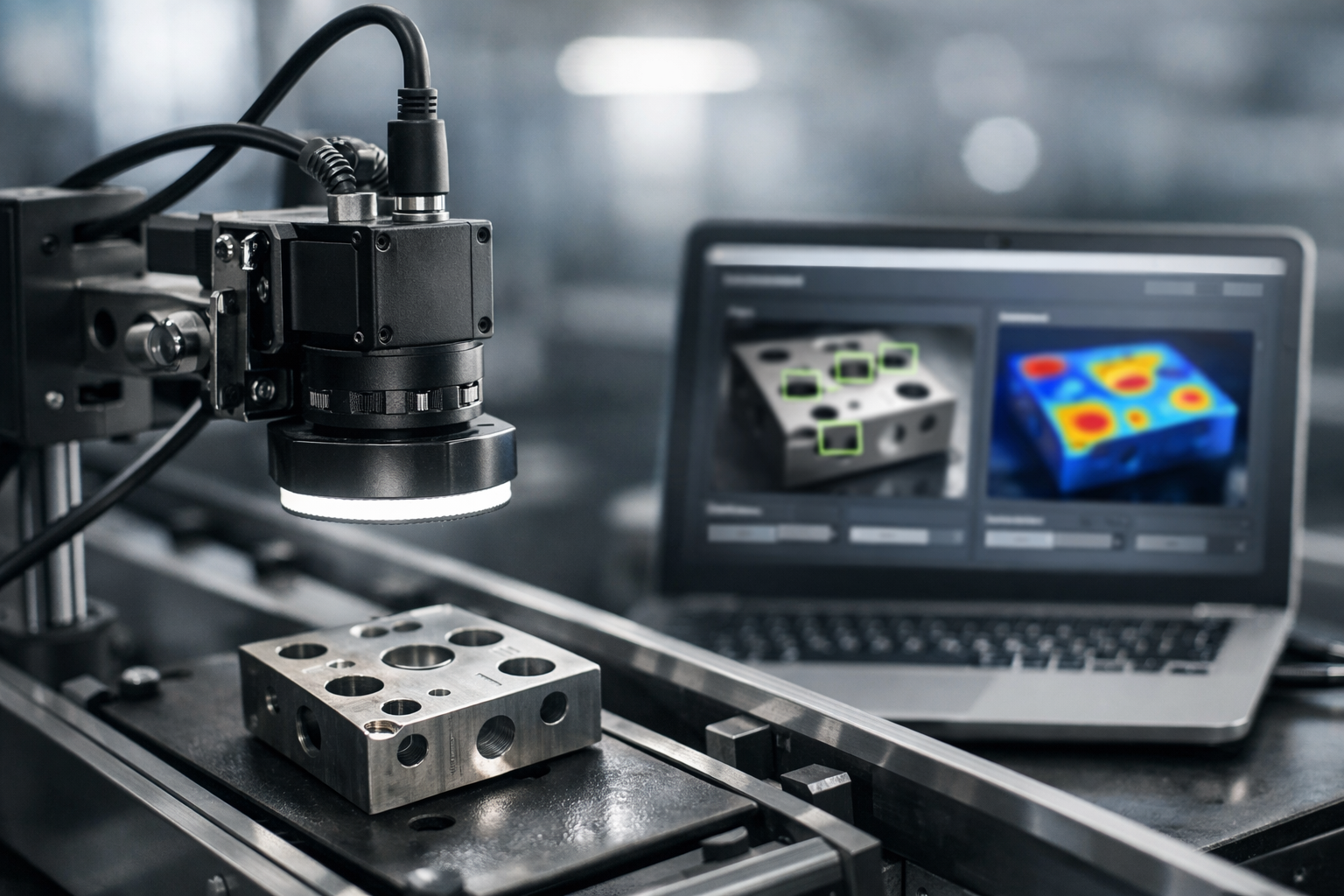

Go from shop-floor QA to deploying defect-detection vision AI.

This course is a short, book-style path for mechanical engineers (and adjacent disciplines) who want to pivot into applied AI by building something real: a camera-based defect detection system you can explain, measure, and deploy. Instead of starting with abstract neural network theory, you’ll start where manufacturing reality starts—what counts as a defect, what a “miss” costs, what your cycle time allows, and what your line can physically support.

You’ll learn to translate shop-floor inspection needs into computer vision requirements, design a capture setup that reveals defects consistently, create a dataset that matches production variation, train a modern detection/segmentation model, and then harden it for speed and reliability. By the end, you’ll have a portfolio-ready project blueprint and the vocabulary to interview for computer vision, automation AI, or industrial ML roles.

It starts with optics and process constraints, not just model code. If your lighting is wrong, the model will fail—so you’ll learn how to make defects visible and repeatable.

It teaches production-aligned evaluation. You’ll connect precision/recall trade-offs to false escapes, false rejects, and real cost.

It treats deployment as part of the system. Inference speed, drift, and monitoring are covered as first-class requirements.

Chapter 1 reframes your mechanical QA intuition into an AI problem statement with defect taxonomy, KPIs, and a one-page spec you can reuse at work or in interviews.

Chapter 2 covers camera, lens, lighting, mounting, and calibration—how to stop fighting reflections, motion blur, and unstable setups.

Chapter 3 builds your data pipeline: collection strategy, labeling rules, split design, and imbalance handling so your metrics mean something.

Chapter 4 trains and evaluates defect models (detection/segmentation) with reproducibility, error analysis, and decision policies that match real production risk.

Chapter 5 focuses on speed and robustness: profiling, optimization (e.g., ONNX/TensorRT paths), stress testing, and acceptance validation planning.

Chapter 6 ties it together: deployment patterns, integration concepts, monitoring, retraining loops, and how to turn the whole system into a career-transition portfolio.

This is designed for mechanical engineers, manufacturing engineers, QA/quality professionals, and automation-minded builders who want practical computer vision skills without losing the engineering discipline they already have. You don’t need a PhD in ML—just comfort with basic Python and a willingness to think in systems.

If you’re ready to build a defect-detection pipeline you can explain from photons to production, take the next step: Register free to access the course on Edu AI. Or, if you’re comparing learning paths, browse all courses to see related tracks in career transitions and applied AI.

Senior Computer Vision Engineer, Industrial Inspection Systems

Sofia Chen builds vision systems for high-throughput manufacturing lines, specializing in defect detection, lighting design, and edge deployment. She has shipped models to GPUs and embedded devices and mentors engineers transitioning from traditional disciplines into applied AI.

Mechanical QA already has a powerful structure: define what “good” looks like, sample parts, measure, decide, and close the loop with process changes. Vision AI does not replace that structure—it operationalizes it at speed, at scale, and with traceability. The transition challenge is rarely “train a model”; it is translating a shop-floor inspection intent into an unambiguous vision requirement with measurable performance, clear constraints, and a spec that production, quality, and automation can sign off on.

This chapter teaches you to frame a defect detection project the way a senior manufacturing engineer would: by starting from the inspection objective and acceptance criteria, choosing the right task type (classification vs detection vs segmentation), setting KPIs that match production risk (false escapes, false rejects, takt time, and cost of error), mapping line constraints (motion, vibration, cycle time, integration points), and producing a one-page vision inspection spec that becomes your portfolio artifact and your project’s “single source of truth.”

Throughout, you will practice engineering judgement: deciding what must be solved by optics and fixturing versus what can be solved by algorithms, and avoiding common mistakes like ambiguous defect definitions, mismatched metrics, and under-scoped integration constraints. By the end, you should be able to walk into a cross-functional meeting and lead the problem framing conversation confidently.

Practice note for Define the inspection objective, defect taxonomy, and acceptance criteria: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Choose the right task type: classification vs detection vs segmentation: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Set production KPIs: false escapes, false rejects, takt time, and cost of error: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Map the line constraints: motion, vibration, cycle time, and integration points: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Write a one-page vision inspection spec (portfolio artifact): document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Define the inspection objective, defect taxonomy, and acceptance criteria: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Choose the right task type: classification vs detection vs segmentation: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Set production KPIs: false escapes, false rejects, takt time, and cost of error: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Map the line constraints: motion, vibration, cycle time, and integration points: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Manufacturing inspection is a loop: (1) define the product and CTQs (critical-to-quality features), (2) observe/measure, (3) decide pass/fail or grade, (4) act—containment, rework, scrap, feedback to process, and (5) document and improve. Vision systems live in steps (2) and (3), but they must respect steps (1), (4), and (5) or they become a “black box camera” that no one trusts.

Start by stating the inspection objective in operational terms, not technical terms. “Detect surface defects” is vague; “prevent cosmetic scratches longer than 3 mm on the visible face from shipping” is actionable. From that objective, list the decision points: Is the system a gate (hard reject), a sorter (grade A/B/C), or a notifier (flag for human review)? Each role changes the acceptable tradeoffs for false rejects versus false escapes.

Next, map the process location. Inline inspection after machining has different constraints than end-of-line inspection after packaging. Inline may require immediate feedback to upstream tooling; end-of-line may prioritize traceability and customer risk. Clarify integration points early: PLC trigger availability, encoder signals, part-present sensors, reject mechanism timing, and where images and decisions must be logged (MES, SCADA, or a local database).

As a mechanical engineer, you already think in constraints. Apply that mindset: identify what is fixed (takt time, footprint, existing lighting, safety guards) and what can change (mounting bracket design, enclosure, controlled lighting). Vision works best when the physical world is made repeatable.

A defect taxonomy is your dictionary. Without it, you will label inconsistently, train inconsistent models, and argue endlessly about “what the AI missed.” Build the taxonomy like a PFMEA table: defect type, location, size/extent, appearance modes, severity, and disposition. Tie severity levels to acceptance criteria. For example: “Scratch on cosmetic face: Severity 1 if <1 mm, Severity 2 if 1–3 mm, Severity 3 if >3 mm or crosses logo.”

Ground-truth rules are the labeling laws that convert human judgement into consistent annotations. Define them before large-scale labeling. Rules should answer: What is the minimum visible evidence to call a defect? How do you label borderline cases? Do you label process artifacts (oil smear) as defects or as “ignore”? If multiple defects overlap, do you label one combined region or separate instances? If the part is partially occluded, do you label “unknown” rather than “good”?

Include “non-defect” confounders explicitly (dust, water spots, reflections, machining marks that are acceptable). These are the sources of false rejects. In production, false rejects cost time and money; in data, confounders cost model capacity. A good taxonomy reduces both.

Finally, decide how you will handle “severity as output.” Sometimes you need separate classes (Scratch_S1, Scratch_S2, Scratch_S3). Other times, a continuous measure (defect length estimated from segmentation) is more robust, with the acceptance threshold applied downstream. This is a mechanical QA mindset: measure first, then decide.

Choosing classification vs detection vs segmentation is not an ML preference; it is a measurement decision driven by acceptance criteria. If the only requirement is “is there any defect of type X anywhere on the part,” classification can work—provided the camera view reliably covers the full relevant surface. If you must locate the defect to enable rework, root cause analysis, or to avoid rejecting for defects outside the critical zone, you need detection. If acceptance depends on shape, area, edge raggedness, or precise boundary (e.g., coating void area %, flash thickness along an edge), segmentation is often required.

Each task implies a different labeling workload and dataset design. Classification labels are fast but hide failure modes; you may not know where the model looked. Detection requires bounding boxes and decisions about tightness (do boxes include surrounding context?). Segmentation requires pixel-level masks, which are expensive but unlock measurement-driven decisions: “reject if exposed substrate area > 2 mm².”

Data collection planning should mirror line variation. Capture shifts, operators, tool wear states, material lots, and environmental changes. Include both “known bad” defects (seeded samples, controlled defect injection) and naturally occurring defects (true production distribution). Avoid the trap of building a dataset from only the most obvious rejects; the hardest cases are near the acceptance boundary.

Also decide early how images map to parts. Will each part have one image, multiple views, or a short video sequence? Multi-view systems increase coverage but add synchronization and labeling complexity. Write these assumptions down; they will shape your spec and your KPI definitions.

In manufacturing, the most important metrics are not “accuracy” but the consequences of wrong decisions. Translate that into vision KPIs: false escapes (defects that ship), false rejects (good parts rejected), and takt time impact (latency and throughput). Add cost of error: scrap cost, rework cost, line stoppage cost, warranty cost, and reputational risk. This is how you turn model tuning into business decisions.

Precision and recall map directly: recall relates to catching defects (reducing false escapes), precision relates to avoiding false alarms (reducing false rejects). In many inspection gates, recall is prioritized because escapes are expensive. But pushing recall too high can flood production with false rejects, creating bottlenecks and operator workarounds. The correct balance is a business decision, documented and signed off.

Use a precision–recall (PR) curve instead of a single metric. It shows the tradeoff as you change the decision threshold. Then choose an operating point with an F-beta score, where beta > 1 weights recall more than precision (escape-averse) and beta < 1 weights precision more (reject-averse). For example, a safety-critical defect might use F2; a cosmetic defect on a high-volume line might use F0.5 to prevent throughput collapse.

Finally, connect metrics to takt time. If the line runs at 1 part/sec, your end-to-end decision (trigger → capture → inference → PLC output) must reliably fit inside that window with margin. Measure p50/p95 latency, not just averages. Reliability is a KPI too: dropped frames, camera reconnects, and disk-full conditions can cause silent escapes if not designed and monitored.

Before committing to deep learning, establish baselines. Classical vision (thresholding, edge detection, template matching, blob analysis) can be excellent when the imaging is controlled and the defect signature is simple: high-contrast presence/absence, consistent geometry, or metrology-like measurements. These methods are explainable, fast on low-cost hardware, and easier to validate for regulated environments.

Deep learning becomes attractive when variation is high: changing textures, subtle cosmetic defects, complex backgrounds, multiple defect modes, or when the part-to-part appearance drift is significant. It can also reduce brittle hand-tuning, but it shifts effort into data, labeling governance, and monitoring.

The key engineering decision is not “AI vs non-AI,” but “what do I solve physically, what do I solve algorithmically?” Often the best system uses both: lighting and optics to make defects visible; classical preprocessing to normalize illumination; a neural network to classify or segment; and rule-based post-processing to enforce acceptance thresholds and reject zones.

Document the decision points: expected defect contrast, allowable setup control (enclosure, fixed lighting), number of defect classes, and how often the appearance changes. These factors belong in the spec and will guide budget and timeline.

A one-page vision inspection spec is your scoping tool and your stakeholder contract. It prevents rework by forcing clarity on objective, constraints, and success criteria. Keep it short enough that production and quality will actually read it, but precise enough that it can be tested.

Include these elements: (1) inspection objective and station role (gate/sorter/notifier), (2) defect taxonomy and acceptance criteria including severity thresholds, (3) task type (classification/detection/segmentation) and output format (pass/fail, defect location, measurements), (4) production KPIs: maximum false escape rate, maximum false reject rate, and takt-time/latency requirements, (5) line constraints: part speed, motion/vibration, working distance tolerance, environmental constraints (oil mist, temperature), and integration points (trigger source, PLC I/O, reject timing), and (6) data and traceability requirements: image retention policy, part ID linkage, and audit logs.

End the spec with a validation plan: what sample size will be used for acceptance, what constitutes a “golden set,” and how you will test edge cases (dirty lens, lighting drift, part misload). Get sign-off from Quality, Production, Automation/Controls, and (if applicable) Customer/Regulatory. This sign-off is not bureaucracy—it is how you align the model’s metrics with production risk and lock the problem framing before you build.

1. What is described as the main challenge when moving from mechanical QA to Vision AI on the shop floor?

2. Which sequence best matches the chapter’s recommended framing approach for a defect detection project?

3. A team must decide between classification, detection, and segmentation. What is the chapter’s guidance for making this choice?

4. Which set of KPIs is explicitly called out as important for aligning Vision AI performance with production risk?

5. Why does the chapter recommend producing a one-page vision inspection spec?

Most defect-detection projects fail for a mechanical reason masquerading as an AI problem: the defect was never made reliably visible to the sensor. Before you think about model architectures, treat the imaging station like a measurement instrument. Your job is to convert “sometimes you can see it” into “it is consistently measurable” across production variation—surface finish drift, part orientation, temperature, dust, and operator handling.

In mechanical QA, you already think in tolerances, stack-ups, and gauge R&R. Vision systems require the same discipline. Start by writing down what must be detected (scratch, burr, dent, missing feature), the smallest relevant defect size, and the acceptable miss/false-call risk. Then design the camera, lens, lighting, and fixturing so the defect produces repeatable contrast and occupies enough pixels to be separable from noise. Only then does data collection and model training become predictable.

This chapter gives a practical workflow to select sensor type, resolution, frame rate, and shutter strategy; match lens and working distance to defect size and field of view; design lighting to maximize defect contrast and reduce glare; calibrate geometry and create repeatable fixturing and triggering; and establish a capture protocol for stable data collection. If you do these well, your dataset becomes easier to label, your models generalize better, and deployment metrics like false reject rate and escape rate become controllable.

The sections below walk from visibility fundamentals to hardware choices, then into mounting/triggering and finally calibration/standardization—because consistent images are what make consistent decisions.

Practice note for Select sensor type, resolution, frame rate, and shutter strategy: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Match lens and working distance to defect size and field of view: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Design lighting to maximize defect contrast and reduce glare: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Calibrate geometry and establish repeatable fixturing and triggering: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Create a capture protocol for stable data collection: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Select sensor type, resolution, frame rate, and shutter strategy: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Match lens and working distance to defect size and field of view: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Defects are detected when they create a stable difference from their surroundings. In images, that difference usually appears as contrast (brightness change), texture (local spatial variation), shape (edge/geometry), or color. Mechanical engineers often describe defects in physical terms (“a burr,” “a dent”), but the vision requirement must be expressed in image terms: “the defect must change pixel intensity by X relative to background under expected variation.”

Start by classifying the surface as diffuse (matte) or specular (shiny). Diffuse surfaces scatter light and are forgiving; specular surfaces act like mirrors and create glare that can completely hide defects. For specular parts (polished metal, glossy plastics), the main challenge is not resolution—it is controlling reflections. You typically want to make the environment that the surface reflects predictable, using dark-field or dome illumination, and then let defects break that predictability.

A common mistake is to judge visibility by looking at a few “hero images” on a monitor. Instead, run a quick study across representative parts and conditions: rotate parts through plausible angles, vary standoff slightly, and include borderline finish. Your goal is to learn what imaging configuration makes the defect visible across the whole tolerance envelope.

Practical outcome: by the end of this step, you should have a written statement like, “Scratches ≥0.2 mm must appear as a continuous high-frequency feature with at least 3–5 pixels width and >20 gray-level contrast under controlled lighting.” That becomes the specification that drives camera, lens, and lighting choices.

Selecting a camera is about matching the sensor behavior to your motion, defect type, and throughput. The highest-resolution sensor is not automatically the best; you need the right combination of resolution, frame rate, shutter strategy, and signal-to-noise.

Global vs rolling shutter: if the part moves during exposure (conveyor, rotating stage), rolling shutter can shear geometry because different rows are captured at different times. That distortion can look like a defect or hide one. Choose global shutter for moving parts and when you need consistent geometry. Rolling shutter can work for static stations or when you tightly control motion and use very short exposure, but it is risky in production where speed and vibration drift.

Resolution and pixel size: translate defect size into pixels. As a practical target, many defect tasks need the smallest defect dimension to span ~3–5 pixels for detection, and more for segmentation. If you need to resolve 0.1 mm on a 100 mm field of view, you want about 1000 pixels across that dimension for 0.1 mm/pixel, then add margin. But do not ignore pixel size: tiny pixels reduce full-well capacity and can increase noise unless you add light or increase exposure.

Frame rate and exposure: frame rate must cover line speed and buffering. Exposure time must avoid motion blur; if blur exceeds ~1 pixel, edge-based defects smear. The typical fix is more light (to allow shorter exposure) or strobe lighting synchronized to the camera.

Mono vs color: use mono whenever defects are defined by texture, shape, or subtle intensity changes; mono sensors avoid Bayer interpolation and give higher effective resolution and sensitivity. Use color when the defect is chromatic (discoloration, wrong component, contamination) or when color provides a stable separation that intensity cannot. A frequent mistake is choosing color “just in case,” then struggling with lower sharpness and inconsistent color balance; if you need color, plan for controlled illumination spectrum and white balance.

Interfaces and triggering: USB3 is convenient but can be fragile under cable length and EMI. GigE/PoE is robust for longer runs. CoaXPress and Camera Link offer high bandwidth and deterministic triggering for demanding lines. Whatever you choose, confirm hardware trigger support and the ability to timestamp frames—these become critical during synchronization and root-cause analysis.

Lens selection is where mechanical intuition about geometry pays off. You are building a mapping between the real world and pixels, and every compromise shows up as blur, distortion, or insufficient depth of field. Start with the required field of view (FOV) and working distance (WD), then compute magnification and focal length using vendor calculators. Your goal is to meet pixel coverage targets without pushing the lens into a corner of its performance envelope.

Focal length and working distance: longer focal length (with more WD) generally reduces perspective distortion and makes measurement more stable, but may require more space and can reduce light collection depending on aperture. Very short focal lengths increase distortion and can create edge stretching that complicates labeling and model learning. If the station allows it, choose a comfortable WD and a lens designed for your sensor size.

Distortion: for pure defect detection, mild distortion may be acceptable, but for geometric measurement, OCR alignment, or when defects near edges matter, distortion can shift features and hurt repeatability. Prefer low-distortion machine vision lenses, and plan for calibration if you need consistent geometry across the full FOV.

Depth of field (DoF): DoF is often the hidden failure mode. Parts are not perfectly flat; fixtures wear; operators tilt parts. If the defect plane moves out of focus, the “defect” turns into low-contrast mush. Increase DoF by stopping down (higher f-number), increasing WD, or reducing magnification—each has tradeoffs. Stopping down reduces light, which may force longer exposures and introduce blur unless you add illumination. Mechanical engineers should treat DoF like a tolerance: estimate expected Z variation and design optical DoF to exceed it with margin.

MTF basics: Modulation Transfer Function describes how well the lens preserves contrast at different spatial frequencies. Small defects are high-frequency details; if your lens MTF is poor at those frequencies, the camera will “see” a softened version of the defect. Practically: avoid cheap lenses, match lens resolution to sensor pixel size, and test with a sharpness target or fine pattern. If you see that edges never get crisp even in best focus, the lens is the bottleneck.

Common mistakes include using a consumer lens with unknown distortion and weak MTF, or running a lens wide open to “get more light” and then discovering shallow DoF and vignetting. Practical outcome: you should be able to state your achieved scale (mm/pixel), expected blur spot size, and DoF relative to fixture variation.

Lighting is the primary control knob for defect contrast. The same camera and lens can succeed or fail depending on how you illuminate. Design lighting by asking: do you want to emphasize surface (scratches, dents), edges (chips, cracks), or silhouette (missing holes, dimensional outline)? Then choose a pattern that makes the “normal” surface predictable and the defect anomalous.

Glare control is a workflow, not a single component. Use polarization (polarizer on light + analyzer on lens) to reduce specular reflection from some materials, but test—polarization can also reduce the useful signal. Control ambient light with shrouds and enclosures; “mystery reflections” from overhead fixtures are a common cause of day-to-day drift.

Strobing is a production-friendly technique: drive an LED light with a high-current pulse synchronized to exposure, yielding very short effective exposure and freezing motion without cranking sensor gain. Make sure the light and driver are rated for strobe currents and duty cycle.

Practical outcome: you should be able to produce at least two lighting setups—one optimized for defect visibility and one “stress test” setup that reveals whether your solution is robust to reflectance changes. Capture both during early trials to avoid overfitting your dataset to a single lighting quirk.

Even perfect optics and lighting will fail if the pose of the part is not repeatable. In practice, many “AI misses” are motion blur, micro-vibration, or inconsistent standoff that shifts focus and scale. Treat the station like precision equipment: stiff mounting, controlled cable routing, and predictable triggering.

Mounting and vibration control: mount the camera and light on a rigid frame with short cantilevers. Avoid mounting to vibrating guards or conveyors unless you isolate. If the environment has vibration, consider adding damping, increasing structural stiffness, and using shorter exposure via strobe. Also watch for thermal drift: LEDs and metal brackets expand; allow warm-up or design for stable operating temperature.

Fixturing: decide whether the part is constrained (nest/locators) or unconstrained (free on belt). Constrained fixturing improves repeatability and reduces model burden. If you cannot fixture tightly, plan for variability: larger DoF, wider FOV with software ROI alignment, or multiple cameras. Mechanical datum strategy matters: choose locating surfaces that are stable and not the defect-prone region.

Triggering: prefer hardware triggers (photeye, encoder, PLC output) over software timing. Hardware triggers reduce latency jitter and make frame-to-frame timing deterministic. If the part moves continuously, use an encoder to trigger at consistent spatial intervals (e.g., every 2 mm). This turns motion into a controlled sampling problem and stabilizes your dataset.

Synchronization: when using strobes or multiple cameras, synchronize with a shared trigger and, if needed, a strobe controller. Ensure the light pulse overlaps the camera exposure window. For multi-camera stations, document which camera corresponds to which view and embed that metadata into filenames or database records—future model debugging depends on it.

Common mistakes include using long, flexible mounts that resonate, relying on “best effort” software triggers, or letting cables tug the camera slightly during maintenance. Practical outcome: you can repeatably capture the same ROI location within a few pixels over long runs, which improves both labeling efficiency and model stability.

Calibration is how you turn a good-looking setup into a repeatable measurement system. You are not only calibrating geometry; you are standardizing the image so that the model learns defects, not illumination drift. This is the step that reduces “it worked on Tuesday” surprises.

Geometric calibration: if you need consistent dimensions, undistortion, or multi-camera alignment, use a calibration target (checkerboard, dot grid) matched to your FOV and resolution. Calibrate at the actual working distance and lock focus. Save the calibration parameters with versioning; if a camera is bumped, you can detect it by monitoring reprojection error or a simple fiducial check.

Flat-field correction: lenses and lights produce vignetting and non-uniform illumination. Flat-fielding compensates by capturing an image of a uniform target (diffuser or integrating surface) and using it to normalize pixel gain. This is especially valuable for segmentation tasks where brightness gradients can be mistaken for defects. Flat-field should be re-captured when lighting is changed or after significant LED aging.

White balance and color consistency: if you use color, fix the illumination spectrum (avoid mixed color temperatures) and lock white balance and exposure. Auto white balance is convenient for phones, but in inspection it creates frame-to-frame variation that the model can latch onto. Use a color chart during setup to validate that the same part looks the same across shifts and stations.

Exposure, gain, and gamma: lock them. Auto-exposure can hide real process drift by “correcting” it away, and it changes the pixel distribution your model expects. Use histogram checks to ensure you are not clipping highlights or crushing shadows. Keep gamma linear (or document it) so that intensity differences remain meaningful.

Image standardization for data collection: define a capture protocol: warm-up time, cleaning schedule for lenses and diffusers, verification images at shift start, and acceptance thresholds (e.g., mean intensity within a range, sharpness metric above a limit). Store metadata (exposure, strobe current, trigger source, calibration version) with each image. Practical outcome: your dataset becomes traceable, and when production changes, you can distinguish “process change” from “imaging change” quickly.

1. According to the chapter, what is the most common root cause of defect-detection projects failing?

2. Before selecting camera, lens, and lighting, what should you write down to drive the imaging design?

3. What does the chapter mean by treating the imaging station like a measurement instrument?

4. Which design principle best matches the chapter’s rule of thumb about optics/lighting and the model?

5. Why does the chapter emphasize calibration, repeatable fixturing/triggering, and a capture protocol?

In mechanical quality assurance, you already think in terms of “process capability,” “special causes,” and “risk to the customer.” A vision defect-detection system needs the same discipline, but expressed as data: what variation exists, what evidence the model will see, and what labels define “bad” consistently. This chapter focuses on the practical pipeline—collecting images across real production variation, structuring and versioning datasets, labeling with quality controls, and preparing splits that predict how the system will behave after deployment.

Most defect detection failures are not “model problems.” They are pipeline problems: images overrepresent one shift, labels drift between annotators, rare defects are missing until go-live, or the train/test split leaks near-duplicates from the same batch. Your goal is to build a dataset that matches production risk. That means you deliberately capture variation (materials, lots, shifts, wear), you write annotation guidelines that make disagreements resolvable, you manage class imbalance without fooling yourself, and you validate with splits that mimic process drift.

By the end of this chapter, you should be able to take a mechanical inspection requirement (e.g., “no burrs over 0.2 mm on sealing surfaces”) and turn it into a data and labeling plan that a team can execute repeatably—with audit trails, measurable coverage, and confidence in metrics.

Practice note for Plan data collection to cover variation (materials, lots, shifts, wear): document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Build a dataset structure, versioning, and annotation guidelines: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Label efficiently with QA checks and inter-annotator agreement: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Handle class imbalance and rare defects using sampling strategies: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Prepare train/val/test splits that reflect real production drift: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Plan data collection to cover variation (materials, lots, shifts, wear): document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Build a dataset structure, versioning, and annotation guidelines: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Label efficiently with QA checks and inter-annotator agreement: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Handle class imbalance and rare defects using sampling strategies: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Start data collection with a variation matrix: a table that lists the factors that change the appearance of both good parts and defects. Mechanical engineers are used to thinking about sources of variation—material lot, machine, tool wear, coolant condition, operator technique, shift lighting, and part orientation. For vision, those factors become “domains” the model must generalize across. If you do not plan for them, your dataset will accidentally overfit to one domain (often day shift, newest tooling, and clean fixtures).

Build the matrix with two categories: (1) controllable production factors (machine ID, cavity, tool change interval, line speed, camera exposure settings) and (2) environmental/appearance factors (surface finish, oil film, dust, glare patterns, packaging scratches). Add defect factors separately: defect type, typical size range, location on part, and severity threshold that matters to downstream performance. This matrix becomes your checklist for coverage.

Common mistake: collecting “a lot of images” without domain accounting. Ten thousand images from one machine and one shift do not equal a robust dataset. Practical outcome: your variation matrix lets you justify data collection time and ensures you can later explain model behavior when a new lot or tool-wear state enters production.

A defect-detection dataset is not just images plus labels; it is images plus labels plus context. The context (metadata) is what lets you debug and measure performance against the variation matrix: which line, which camera, which time window, what part revision, what exposure settings, what operator intervention. If you store images in ad-hoc folders and lose these fields, you lose the ability to explain failure patterns and you make retraining chaotic.

Use a consistent dataset structure that is friendly to both humans and pipelines. A practical pattern is: immutable raw images, derived “clean” images (cropped/normalized), and an annotations folder tied to a dataset version. Keep a single source of truth for metadata, ideally a CSV/Parquet file keyed by image ID.

Use a dataset versioning tool or workflow (DVC, Git-LFS, or object storage with manifest files) so you can reproduce a model later. Common mistake: “silent relabeling,” where labels are updated but the model artifact is not tied to the label revision. Practical outcome: you can answer, months later, “What data trained Model_2026-03-01?” and rerun evaluation exactly.

Choose annotation type based on what decisions the system must make on the line. In mechanical QA terms, ask: do you only need to reject a part (classification), do you need to localize the defect for operator review (detection), or do you need to measure area/length to enforce a spec (segmentation)? Annotation cost increases as you move from image-level labels to boxes to pixel-accurate masks.

Bounding boxes are fast and sufficient when the main requirement is “defect present and roughly where.” They work well for chips, missing features, and obvious cracks. However, boxes are weak for thin defects (hairline cracks) and for pass/fail rules based on size because the box includes background and can inflate measurements.

Polygons and masks (segmentation) support measurement-driven KPIs: defect area, percent coverage, length along a sealing surface, or distance to a critical edge. They are also better for complex boundaries like corrosion or coating voids. The tradeoff is labeling time and the need for clear rules about what to include (e.g., stain halo versus core defect).

Common mistake: starting with segmentation because it feels “more accurate,” then burning budget and still having inconsistent masks. Practical outcome: you align annotation type to inspection decisions and avoid collecting labels you cannot maintain.

Labeling is measurement. If the measurement system is unstable, your model metrics are meaningless. Treat annotation like a gauge R&R problem: you need repeatability (same annotator is consistent) and reproducibility (different annotators agree). Build quality controls into the workflow from day one, not after you see suspicious model behavior.

Create a golden set: a small, carefully reviewed subset of images (often 200–500) that represent key domains and defect severities. These are labeled by your most experienced reviewers (or a panel), frozen under a version tag, and used for onboarding, periodic checks, and tool calibration. Your golden set should include hard negatives (things that look like defects but are acceptable) because those are where production false rejects come from.

Common mistake: allowing “tribal knowledge” to define defects. If acceptance criteria are not encoded into guidelines with examples, the dataset drifts as teams change. Practical outcome: stable labels improve model learning and, just as importantly, make failures explainable to QA and operations.

In manufacturing, true defects are often rare, which creates class imbalance. A naive dataset might be 99.5% good parts, and a naive model can achieve 99.5% accuracy by always predicting “good.” You must design for rare-event detection while respecting real production rates, because your final KPI is usually tied to risk: false accepts (escapes) versus false rejects (scrap/rework).

Use sampling strategies deliberately. During training, you often oversample defect images or use loss functions that emphasize rare classes. During evaluation, you must keep a realistic mix so metrics reflect real operations. Separate “training balance” from “evaluation realism.”

Common mistake: relying on synthetic generation to “solve” rare defects, then discovering in production that real defects have different texture or specular behavior. Practical outcome: you reduce false rejects with hard negatives and improve recall for rare defects by aligning data capture with process knowledge.

How you split train/validation/test sets determines whether your metrics predict production performance. In vision inspection, “leakage” is common because images are highly correlated: consecutive parts look nearly identical; the same batch shares surface finish; the same machine shares vibration patterns; and the same lighting recipe produces repeatable reflections. If you randomly split images, near-duplicates can appear in both train and test, inflating metrics and hiding brittleness.

Split by the unit of correlation. If your risk is drift across time and tooling, split by time blocks (e.g., train on weeks 1–3, validate on week 4, test on week 5). If your risk is machine-to-machine variation, hold out one machine (or one cavity) for testing. If supplier lots dominate appearance, split by batch/lot so the model must generalize to unseen lots.

Common mistake: optimizing model selection on a validation set that is not independent (same day, same lot). Practical outcome: your test set becomes a credible proxy for go-live performance, and you can justify acceptance thresholds to stakeholders using evidence rather than hope.

1. A defect-detection model performs well in testing but fails after deployment. According to the chapter, what is the most likely root cause to investigate first?

2. When planning data collection for defect detection, what approach best matches the chapter’s guidance on covering real production conditions?

3. What is the main purpose of having clear annotation guidelines in a defect-labeling workflow?

4. Which practice best addresses labeling quality control as described in the chapter?

5. How should train/validation/test splits be prepared to best predict post-deployment behavior in a production environment?

In manufacturing inspection, “training a model” is not a single step—it is the controlled engineering process of turning labeled examples into a repeatable decision system with known failure modes. Mechanical QA tends to speak in terms of tolerances, capability, and risk; computer vision adds probabilistic outputs, dataset bias, and domain shift. Your job is to connect them: pick the right model type (detection vs segmentation), train with discipline, evaluate with metrics tied to scrap and escapes, then convert model scores into an accept/reject policy that operators can trust.

This chapter focuses on building a baseline model first (so you learn where the real bottlenecks are), then improving it through augmentation and tuning. You’ll learn how to evaluate using production-aligned metrics, not just leaderboard numbers, and how to calibrate thresholds so the same “0.7 confidence” means the same thing across days, cameras, and lots. Finally, you’ll package the model with explicit preprocessing and coordinate-system contracts so deployment doesn’t silently break performance.

Keep a consistent mindset throughout: every training choice must map to something physical—lighting changes, motion blur, part pose variation, surface finish, or label ambiguity. When training becomes an engineering discipline, you stop chasing random gains and start building reliable inspection capability.

Practice note for Train a baseline model and establish reproducible experiments: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Improve performance with augmentation, architecture choice, and tuning: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Evaluate with production-aligned metrics and error breakdowns: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Calibrate thresholds and build a reject/accept decision policy: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Package the model with preprocessing and postprocessing contracts: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Train a baseline model and establish reproducible experiments: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Improve performance with augmentation, architecture choice, and tuning: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Evaluate with production-aligned metrics and error breakdowns: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Calibrate thresholds and build a reject/accept decision policy: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Package the model with preprocessing and postprocessing contracts: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Start by choosing the simplest model family that matches the decision you need to make on the line. For many mechanical defects, you either need localization (where is the defect?) or area/shape (how big, how long, how close to an edge). Object detection models (Faster R-CNN, YOLO variants) output bounding boxes and class scores; segmentation models (U-Net, DeepLab-style, Mask R-CNN) output pixel masks and are better when defect geometry matters (cracks, scratches, porosity, coating gaps).

A practical baseline recipe is: (1) a YOLO detector for speed and straightforward deployment, plus (2) a U-Net-style segmenter for defects where bounding boxes are too coarse. In industrial settings, a fast detector can act as a “coarse filter” that triggers downstream actions, while segmentation can be reserved for high-value parts or for measuring defect severity. Your first baseline should not be over-optimized; it should be easy to reproduce and debug.

Common baseline mistakes include using a heavyweight model before you’ve verified label quality, mixing multiple defect definitions into one class (“scratch/crack/contamination”), and ignoring the minimum resolvable defect size in pixels. Before training, compute a back-of-the-envelope check: if the smallest unacceptable defect is 0.2 mm and your imaging yields 20 µm/pixel, then the defect spans ~10 pixels. That is barely learnable for many detectors unless you use higher resolution, tiling, or segmentation.

The point of the baseline is to establish a reference system so every later improvement is measurable and attributable.

Industrial model training fails most often due to poor reproducibility, not poor algorithms. If you can’t reproduce yesterday’s “good run,” you can’t trust tomorrow’s deployment. Build a training loop that is deterministic enough to compare experiments: fix random seeds, record dataset versions, and log every parameter that affects preprocessing, augmentation, and label parsing.

At minimum, your training pipeline should save: code commit hash, dataset manifest (file list + label checksums), train/val/test split IDs, model architecture and pretrained weights, image resize rules, augmentation configuration, optimizer settings, and the exact metric calculation method. Use a tool like MLflow, Weights & Biases, or a lightweight internal tracker; the tool matters less than the habit of capturing full context.

Checkpoints are not just for resuming; they are insurance against overfitting and a way to analyze training dynamics. Save the best checkpoint by a production-relevant metric (not just loss), plus periodic snapshots (e.g., every N epochs) so you can see when performance regressed. In manufacturing, the “best” model might be the one with slightly lower mAP but fewer catastrophic false accepts on critical defect types.

Practical outcome: you should be able to rerun an experiment and get the same validation curve shape and similar end metrics, then confidently attribute improvements to a specific change (augmentation, resolution, loss, architecture), not random luck.

Augmentation is how you teach robustness to the variations your hardware and process will inevitably produce. In factories, variation is often structured: small pose changes from fixturing tolerances, glare from new surface finishes, blur from vibration, and noise from shorter exposures. Apply augmentations that mimic the physics of your line—then validate that they don’t create impossible images that confuse learning.

Start with a conservative set: random brightness/contrast, mild Gaussian noise, small rotations, and small translations/crops that reflect real misalignment. Then add targeted augmentations that match known failure modes. If operators report intermittent false rejects when lights age or parts get oily, add glare/specular highlights or localized contrast shifts. If you have motion blur during conveyor movement, simulate directional blur with plausible kernel sizes.

A key judgment is when not to augment. If your camera mount is rigid and parts are keyed, heavy rotations may reduce accuracy. If your lighting is controlled, extreme brightness shifts can teach the model that defects are optional. Another common mistake is augmenting only the image and forgetting the labels: bounding boxes and masks must be transformed identically, or you silently inject label noise.

Practical outcome: after introducing a new augmentation, look at a batch of augmented images with labels overlaid. If you wouldn’t believe the image could occur on your line, remove or constrain that augmentation. Robustness should be earned through realistic variation, not fantasy.

Standard vision metrics like mAP are useful for comparing detectors, but manufacturing decisions are rarely symmetric. A false accept (escape) of a safety-critical crack can be far more costly than a false reject that triggers rework. Your evaluation must reflect that asymmetry and match your line’s risk posture.

Use mAP (and IoU-based metrics) to ensure the model localizes defects reasonably, but add production-aligned metrics that answer: “How often do we ship bad parts?” and “How often do we stop the line unnecessarily?” For detectors, measure per-defect-type recall at a fixed false-positive rate, or compute expected cost with a weighted confusion matrix. For segmenters, evaluate not only IoU/Dice but also derived measurements that matter: maximum crack length, area of coating void, distance to a sealing edge.

Confusion analysis is where you learn. Break errors into categories: missed tiny defects, confusion between visually similar classes (scratch vs machining mark), false positives near edges or holes, and failures under specific illumination. Slice metrics by part number, cavity, shift, camera, and lot. Many “model issues” are actually dataset coverage problems: one cavity produces a unique texture that the model hasn’t seen, or one operator labels borderline defects differently.

Practical outcome: you should be able to state performance as a business-relevant guarantee (e.g., “>99.5% recall on critical cracks above 0.3 mm at <1% false reject rate”), and you should know the top three error modes to target next.

Models output probabilities or confidence scores, but production requires discrete actions: accept, reject, or route to manual review. Thresholding is not a one-time choice; it is a policy that encodes risk. Set thresholds using validation data that reflects production, and tune separately by defect type and severity where needed. A single global threshold often fails because some defects are subtle (need lower thresholds to avoid misses) while others are high-contrast (can use higher thresholds to reduce nuisance rejects).

Calibration matters because raw scores are not always comparable across models, retrains, or domain shifts. Use calibration techniques (temperature scaling for classifiers, reliability diagrams) to ensure that “0.9 confidence” consistently corresponds to ~90% correctness. In industry, calibration supports stable decision-making when you change cameras, update lighting, or retrain monthly.

Plan explicitly for “unknown/uncertain” cases. There will be new defect modes, out-of-focus images, unexpected parts, and borderline callouts. Introduce a third state: hold for review. You can trigger this when: the top score is near the threshold, detections are inconsistent across frames, image quality checks fail, or the model sees an out-of-distribution pattern (e.g., embeddings far from training data).

Practical outcome: you end with a documented reject/accept policy that is testable, auditable, and adjustable without retraining, while still preserving safety margins and minimizing unnecessary scrap.

A model that performs well in a notebook can fail instantly in deployment if preprocessing and coordinate handling differ. Packaging is the discipline of turning training-time assumptions into explicit runtime contracts. Define exactly how images are read (color order, bit depth, gamma), normalized (mean/std, scaling), resized (letterbox vs warp), and cropped (ROI rules). Then ensure postprocessing converts predictions back to the original image coordinates and, ideally, to real-world units.

Resizing is a frequent source of subtle defects. Letterboxing preserves aspect ratio but adds padding; if you forget to remove padding when mapping boxes/masks back, your detections shift. Warping changes aspect ratio and can distort defect geometry—sometimes acceptable for detection, often harmful for segmentation-based measurement. If you use tiling for high-resolution inspection, define overlap strategy and non-maximum suppression rules to merge tile predictions reliably.

Coordinate systems must be unambiguous. Document whether boxes are (x1,y1,x2,y2) or (cx,cy,w,h), whether coordinates are normalized (0–1) or pixel-based, and whether the origin is top-left. For segmentation, specify mask resolution relative to input and how to upsample/downsample. In metrology-adjacent inspection, add a pixel-to-mm conversion and clarify where calibration is applied.

Practical outcome: when the model is deployed to an edge device or server, you can verify that the same input image produces the same detections as in training evaluation, eliminating a major class of “mysterious” production regressions.

1. According to the chapter, why should you train a baseline model first before pursuing improvements?

2. What is the core goal of evaluating with production-aligned metrics rather than only “leaderboard” numbers?

3. The chapter says you must connect mechanical QA concepts with computer vision realities. Which pairing best reflects that connection?

4. What does calibrating thresholds aim to achieve in this chapter’s framework?

5. Why does the chapter stress packaging the model with explicit preprocessing and coordinate-system contracts?

In production inspection, “works on my laptop” is not a milestone. Your system must meet a takt time, survive bad days on the line (dust, vibration, operators, variable parts), and produce decisions that are explainable and recoverable when something goes wrong. This chapter connects model performance to engineering realities: end-to-end latency, compute hardware tradeoffs, runtime optimization, robustness stress tests, decision logic with human review loops, and a validation plan that quality and operations can sign off on.

As a mechanical engineer, you already think in terms of tolerances, failure modes, and acceptance tests. Apply the same mindset here: define budgets (milliseconds, frames, bandwidth), identify bottlenecks (camera exposure, decode, inference, PLC handshake), design for worst-case conditions (not the demo), and create controlled change processes so your system doesn’t drift into chaos after the first update.

By the end of this chapter, you should be able to profile your pipeline, pick edge-ready hardware, accelerate inference safely, stress-test against shift and contamination, design fallback logic and human-in-the-loop queues, and build a production acceptance plan with sampling and change control.

Practice note for Profile inference and remove bottlenecks end-to-end: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Optimize models with quantization/pruning and runtime acceleration: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Stress-test for drift, lighting change, and new defect modes: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Design fallback logic and human-in-the-loop review queues: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Create a validation plan for production acceptance testing: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Profile inference and remove bottlenecks end-to-end: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Optimize models with quantization/pruning and runtime acceleration: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Stress-test for drift, lighting change, and new defect modes: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Design fallback logic and human-in-the-loop review queues: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Practice note for Create a validation plan for production acceptance testing: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.

Speed starts with an explicit latency budget, not with “optimize the model.” Write the pipeline as a timed chain: exposure/capture → transfer → decode → preprocess → inference → postprocess → decision → actuator/PLC. Put a millisecond budget next to each step and verify it with measurement. A common mistake is timing only inference and ignoring camera exposure, image conversion, network transfer, and PLC handshakes, which can dominate the cycle time.

Build an end-to-end profiler early. Add timestamps at each boundary (camera SDK callback, preprocessing start/end, inference start/end, postprocess start/end, decision published/acked). Log these in production-like conditions and plot distributions, not just averages. Your takt time cares about the 95th/99th percentile: an occasional 300 ms spike can blow a buffer and cause line stops.

Remove bottlenecks systematically. If capture is slow, reduce exposure time with better lighting or higher gain (but watch noise). If transfer is slow, switch from compressed streams to raw (or vice versa), change pixel format, or move from USB to GigE/CoaXPress. If preprocessing is slow, fuse operations (resize + normalize) and keep data on the same device (avoid CPU↔GPU copies). If postprocessing is slow, simplify NMS parameters, reduce output candidates, or constrain the region of interest (ROI) to where defects can physically occur.

Practical outcome: you end this section with a spreadsheet (or YAML) defining a latency budget and a dashboard that reports p50/p95/p99 latency for each stage, plus a prioritized list of changes that buy you the most milliseconds per engineering hour.

Hardware selection is a system design choice, not an AI choice. Start from constraints: available power, allowable enclosure temperature, network topology, maintenance model, and the required throughput (parts/minute × cameras × views). Then map those constraints to compute options.

CPU-only can be sufficient for lightweight classification or small detection models, especially if you can constrain ROI and run at modest resolution. Benefits: simpler deployment, fewer driver issues, easier IT approval. Risks: limited headroom, sensitivity to concurrent processes, and weaker scaling when you add cameras.

Discrete GPU in an industrial PC is the workhorse for higher resolutions, multiple cameras, and segmentation. Benefits: high throughput and mature runtimes. Risks: power/thermal demands, GPU availability, and the need for robust dust management. Plan for fans and filters, and monitor temperature and throttling.

NVIDIA Jetson (edge GPU) is attractive when you need compact, low-power inference near the camera. Benefits: integrated acceleration with TensorRT and good performance per watt. Risks: limited memory, careful version matching (JetPack/CUDA/TensorRT), and more tuning effort for peak performance. Jetson is often ideal for “one station, one device” deployments where network latency to a server is unacceptable.

Server-based inference can centralize maintenance and enable shared GPUs, but introduces network dependencies. If you go this route, engineer for network outages: local buffering, retry logic, and a safe-state on the PLC.

Common mistake: buying hardware based on peak FPS benchmarks without considering camera I/O, data copies, and real image sizes. Practical outcome: a hardware decision record (HDR) that ties compute choice to takt time, environment, and future expansion (additional cameras, new defect classes).

Once the pipeline is profiled and hardware is chosen, optimize in a way that preserves quality. Your goal is not “highest FPS” but “meeting latency/throughput with controlled accuracy loss.” Start by standardizing model export to ONNX so you can test multiple runtimes consistently and avoid framework lock-in.

On NVIDIA platforms, TensorRT is typically the largest single inference speedup. Convert your ONNX model to a TensorRT engine, benchmark it on the target device, and lock the engine build to a known driver/runtime version. Use FP16 when supported; it often improves throughput with negligible accuracy loss in vision tasks. INT8 can be faster but requires calibration data and can degrade small-defect sensitivity if poorly calibrated.

Quantization (FP16/INT8) and pruning are tools, not defaults. Quantization works best when you calibrate using images that reflect production distributions: lighting extremes, dirty lenses, borderline defects. A common mistake is calibrating on “clean lab images,” then discovering that production noise pushes activations outside calibrated ranges. If you see a recall drop on small defects, consider keeping certain layers in higher precision, increasing input resolution, or revisiting ROI strategy.

Also optimize around the model: batch size (often 1 for real-time), pinned memory, asynchronous transfers, and avoiding repeated allocations. Pre-allocate buffers and reuse them. Fuse preprocessing into the runtime when possible, or move it to the GPU to avoid copies.

Practical outcome: a reproducible optimization recipe (export → validate numerics → accelerate → re-evaluate KPIs) and a deployment artifact you can rebuild deterministically, including the calibration set and runtime versions.

A model that meets metrics on a test split can still fail on Monday morning. Robustness testing is your equivalent of a mechanical stress test: you deliberately push the system beyond nominal conditions to map failure boundaries. Design tests around realistic shift sources: lighting drift, camera aging, lens contamination, focus changes from vibration, and motion blur from speed variations.

Create a structured robustness suite. Include: (1) lighting sweeps (intensity ±30%, color temperature variation, flicker), (2) contamination cases (smudges, dust specks, coolant mist), (3) focus offsets (slight defocus, tilt), (4) motion blur (increased conveyor speed, strobe timing offsets), and (5) new defect modes (previously unseen scratches or burr shapes). Capture short runs for each condition and label a targeted subset focused on borderline parts.

Measure how KPIs move: precision/recall at fixed thresholds, false reject rate, and miss rate for critical defects. Track not just aggregate metrics but per-defect and per-condition breakdowns. If performance collapses under a specific shift (e.g., blur), don’t jump to “train more.” First consider physical mitigations: strobing, higher shutter speed, better mounting, or a telecentric lens. Robustness often improves more from optics and lighting than from more epochs.

Integrate drift detection in production: monitor input brightness histograms, focus metrics, and embedding distances; trigger alerts or a review queue when distributions shift. Practical outcome: a documented stress-test plan and a set of “known failure modes” with mitigations (physical, software, or operational).

Model output is not a decision. Decision engineering is where you convert probabilities, boxes, or masks into actions that protect customers without stopping the line unnecessarily. Start by defining classes of outcomes: auto-accept, auto-reject, and manual review. Manual review is not failure; it is a control knob to manage uncertainty while collecting data for retraining.

Use thresholds that reflect production risk. For critical defects, bias toward recall: lower thresholds and accept a higher review rate. For cosmetic defects, you may prioritize precision to avoid false rejects. Consider per-defect thresholds and “two-signal” rules (e.g., defect must appear in two consecutive frames or two views) to reduce noise-driven false alarms. Buffering is crucial: if inspection is asynchronous, implement a ring buffer keyed by part ID (encoder/trigger) so decisions align with the correct part and actuator timing.

Design fallback logic. If the camera feed drops, exposure saturates, or the model returns invalid outputs, fail safely: either stop the station, route to manual inspection, or default to reject depending on cost-of-escape. Log every fallback event with enough context to debug: last N frames, device temperatures, and latency spikes.

Confidence UX matters. If operators are involved, show clear, minimal explanations: the view, the highlighted region, the defect type, and the reason for review (“low confidence,” “novel appearance,” “image quality low”). Common mistake: exposing raw probabilities without guidance, which leads to inconsistent human decisions. Practical outcome: a decision policy document and an operator-friendly review queue that doubles as a data collection engine.

Before go-live, you need a production acceptance test that QA, operations, and engineering agree on. Define what “passing” means with measurable criteria: maximum false accept rate for critical defects, maximum false reject rate, minimum uptime, maximum p95 latency, and required traceability (image retention, logs). Then design a sampling plan that gives statistical confidence.

Use risk-based sampling. If escapes are high cost, require larger sample sizes and include deliberate seeded defects (golden parts with known issues) to verify sensitivity. Ensure your validation includes the full range of normal variation: different operators, shifts, part lots, and environmental conditions. Run the test in the actual cell with the real PLC timing, not a bench setup.

Change control keeps the system stable after launch. Treat model updates like process changes: version everything (model, thresholds, preprocessing, camera settings), record training data lineage, and maintain a rollback plan. Establish triggers for retraining and revalidation: drift alerts, rising review rates, new defect types, or hardware changes (new camera, lens replacement). A common mistake is “silent improvements” that accidentally change behavior and break downstream assumptions.

Practical outcome: a signed validation report, a deployment checklist (including backups and rollback), and a lightweight governance process so you can improve the system without losing trust on the factory floor.

1. Why is "works on my laptop" not a meaningful milestone for a production inspection system?

2. Which approach best reflects the chapter’s recommendation for improving system speed?

3. What is the primary purpose of robustness stress-testing in this chapter?

4. What role do fallback logic and human-in-the-loop review queues play in a production defect detection system?

5. What should a production acceptance (validation) plan include according to the chapter?

A defect model that performs well in a notebook is not yet a production inspection system. Production means: deterministic triggers, predictable latency, traceable decisions, and a clear plan for what happens when reality changes (new suppliers, new surface finishes, new lighting drift, new defect modes). In this chapter you will turn your vision model into an inspection “product”: a deployed service integrated with PLC/MES concepts, instrumented with monitoring, and supported by a retraining loop and governance process. This is also the chapter where you convert engineering work into career evidence—portfolio artifacts and interview stories that show trade-offs, not just code.

As a mechanical engineer, you already understand process capability, control plans, and failure modes. Think of deployment and monitoring as the “control plan” for your AI: what gets measured, what thresholds trigger action, and how you keep the system in control without stopping the line unnecessarily.

Practice note for Deploy an inspection service/API and integrate with PLC/MES concepts: document your objective, define a measurable success check, and run a small experiment before scaling. Capture what changed, why it changed, and what you would test next. This discipline improves reliability and makes your learning transferable to future projects.